Can a compact iterative draft-and-revise solver surpass much larger autoregressive language models on ARC-AGI benchmarks? Researchers at Samsung SAIT in Montreal have introduced the Tiny Recursive Model (TRM), a lightweight recursive reasoning system with approximately 7 million parameters and just two layers. TRM reaches test accuracies of roughly 45% on ARC-AGI-1 and 8% on ARC-AGI-2 (44.6% and 7.8% in the detailed results), outperforming far larger models such as DeepSeek-R1, o3-mini-high, and Gemini 2.5 Pro on the same public benchmarks. TRM also sets new records on challenging puzzle datasets, scoring 87.4% on Sudoku-Extreme and 85.3% on Maze-Hard. On both it surpasses the earlier 27-million-parameter Hierarchical Reasoning Model (HRM) while using fewer parameters and a simpler training approach.

Innovations Behind the Tiny Recursive Model

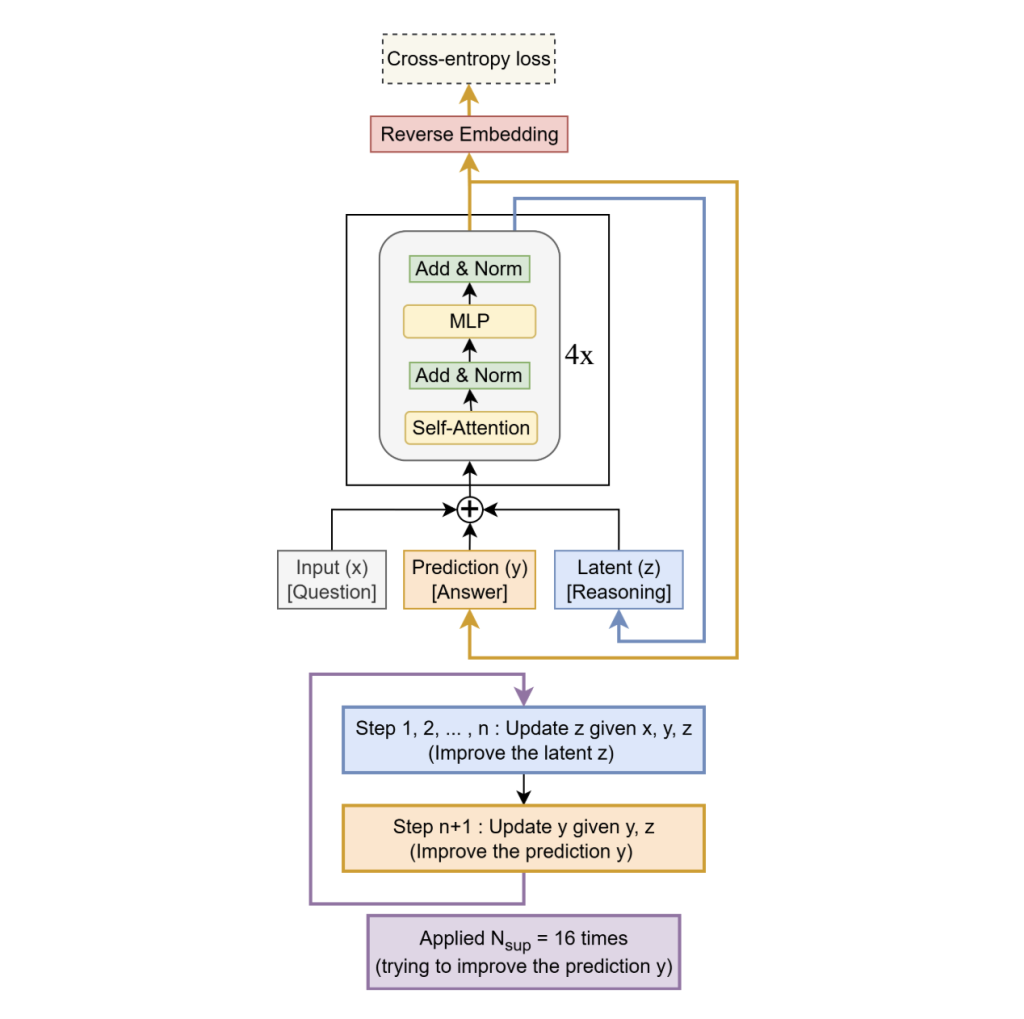

TRM departs from HRM’s dual-module architecture and fixed-point gradient approximations by utilizing a single compact network that iteratively processes a latent “scratchpad” state (denoted as z) alongside a current solution embedding (y):

- Unified recurrent core: Instead of HRM’s two-module hierarchy, TRM employs a single two-layer network that maintains both the latent scratchpad z and the solution embedding y. The model alternates between “thinking” steps, where z is updated from the input x, the current solution y, and the previous scratchpad state, and “acting” steps, where y is refined using the updated scratchpad.

- Deep supervision through recursion: The think-act cycle is unrolled for up to 16 steps during training, with a loss applied at every step (deep supervision). A learned halting mechanism decides when to stop during training, while at inference time the full unroll is executed. The states y and z are carried over from one step to the next.

- Complete backpropagation through recursive steps: Unlike HRM’s approximation using one-step implicit gradients, TRM performs full gradient backpropagation through all recursive iterations, a factor found critical for improved generalization.
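The think-act recursion described in the list above can be sketched in a few lines of plain Python. This is only a toy illustration, not the authors' implementation: `make_net`, `think_act_cycle`, and `supervised_unroll` are hypothetical names, the "network" is a single random linear map with a tanh, inputs are combined by elementwise sum (an assumption), and each supervision step runs just one think-act cycle. No training or halting logic is included.

```python
import math
import random


def make_net(dim, seed=0):
    """Hypothetical stand-in for the shared tiny network: sum inputs, linear map, tanh."""
    rng = random.Random(seed)
    scale = 1.0 / math.sqrt(dim)
    weights = [[rng.gauss(0.0, scale) for _ in range(dim)] for _ in range(dim)]

    def net(*vectors):
        # Combine any number of same-length inputs by elementwise sum (an assumption).
        summed = [sum(values) for values in zip(*vectors)]
        return [math.tanh(sum(w * s for w, s in zip(row, summed))) for row in weights]

    return net


def think_act_cycle(net, x, y, z, n_think=6):
    """One cycle: several 'think' updates of z, then one 'act' update of y."""
    for _ in range(n_think):
        z = net(x, y, z)  # think: refine the scratchpad from input, answer, scratchpad
    y = net(y, z)         # act: refine the answer from the updated scratchpad
    return y, z


def supervised_unroll(net, x, dim, max_steps=16, n_think=6):
    """Yield the answer embedding after every step so a loss could be applied to each."""
    y = [0.0] * dim
    z = [0.0] * dim
    for _ in range(max_steps):
        y, z = think_act_cycle(net, x, y, z, n_think)
        yield y  # y and z carry over to the next step


net = make_net(dim=4)
x = [0.5, -0.25, 0.1, 0.9]
drafts = list(supervised_unroll(net, x, dim=4))
print(len(drafts), drafts[-1])
```

In a real training loop, each yielded draft would be decoded and compared with the target, which is where the per-step supervision signal would come from.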

Architecturally, TRM retains self-attention mechanisms for larger grid problems like Maze-Hard (30×30), while for smaller fixed-size grids such as Sudoku, it replaces attention with an MLP-Mixer-style token mixer to reduce model complexity and overfitting. Training stability is enhanced by applying an exponential moving average (EMA) to model weights. Instead of deep stacking, TRM achieves effective depth through recursion (e.g., 3 outer steps with 6 inner updates each), with experiments showing that a shallow two-layer network generalizes better than deeper alternatives given the same computational budget.
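As a rough illustration of the EMA step mentioned above, the sketch below keeps a slowly moving average of a flat list of weights. The function name `ema_update` and the decay values are assumptions made for this example; the source does not state the decay TRM uses.

```python
def ema_update(ema_weights, weights, decay=0.999):
    """Blend the current weights into the running average; decay is an assumed value."""
    return [decay * e + (1.0 - decay) * w for e, w in zip(ema_weights, weights)]


# Toy usage: the averaged copy moves slowly toward noisy "training" weights.
ema = [0.0, 0.0]
for step_weights in ([1.0, -1.0], [1.2, -0.8], [0.8, -1.2]):
    ema = ema_update(ema, step_weights, decay=0.9)
print(ema)
```

The averaged copy changes less from step to step than the raw weights do, which is the usual motivation for evaluating with EMA weights.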

Performance Highlights and Benchmark Comparisons

- ARC-AGI-1 and ARC-AGI-2: TRM with attention (7M parameters) attains 44.6% accuracy on ARC-AGI-1 and 7.8% on ARC-AGI-2 (two attempts), outperforming HRM’s 40.3% and 5.0% respectively. In contrast, large-scale language models such as DeepSeek-R1 (671B parameters) achieve only 15.8% and 1.3%, o3-mini-high scores 34.5% and 3.0%, and Gemini 2.5 Pro reaches 37.0% and 4.9%. Notably, specialized Grok-4 models with much larger sizes report higher scores (66.7-79.6% on ARC-AGI-1 and 16-29.4% on ARC-AGI-2).

- Sudoku-Extreme (9×9 grids): TRM’s attention-free mixer variant achieves 87.4% accuracy, a substantial improvement over HRM’s 55.0%.

- Maze-Hard (30×30 grids): TRM reaches 85.3%, surpassing HRM’s 74.5%.
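The "(two attempts)" qualifier on the ARC scores can be read as follows: a puzzle counts as solved if either of two submitted grids matches the target exactly. The sketch below shows that reading under simplifying assumptions; `solved_within_attempts` and `accuracy` are hypothetical helpers, and the official ARC Prize scoring may differ in detail.

```python
def solved_within_attempts(attempts, target, max_attempts=2):
    """True if any of the first max_attempts predicted grids equals the target exactly."""
    return any(grid == target for grid in attempts[:max_attempts])


def accuracy(predictions, targets, max_attempts=2):
    """Fraction of puzzles solved; predictions[i] is a ranked list of candidate grids."""
    hits = sum(
        solved_within_attempts(attempts, target, max_attempts)
        for attempts, target in zip(predictions, targets)
    )
    return hits / len(targets)


targets = [[[1, 0], [0, 1]], [[2, 2], [2, 2]]]
predictions = [
    [[[0, 0], [0, 1]], [[1, 0], [0, 1]]],  # second attempt is right
    [[[2, 2], [2, 0]], [[0, 2], [2, 2]]],  # both attempts are wrong
]
print(accuracy(predictions, targets))  # 0.5
```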

These results stem from models trained from scratch on relatively small, heavily augmented datasets, rather than relying on few-shot prompting. ARC remains a benchmark of interest, with the ARC Prize Foundation tracking leaderboard progress and setting ambitious targets such as an 85% accuracy threshold on ARC-AGI-2 for grand prize eligibility.

Why Does a 7M-Parameter Model Outperform Much Larger LLMs?

- Draft-and-revise approach over token-by-token generation: TRM generates a complete candidate solution and iteratively refines it through latent consistency checks against the input, mitigating exposure bias common in autoregressive decoding of structured outputs.

- Test-time computation focused on reasoning depth rather than parameter scale: The model’s effective depth is amplified by recursive unrolling (effective depth ≈ T × (n+1) × number of layers, where T is the number of outer steps and n the number of inner updates per step), which yields better generalization at fixed compute than simply adding network layers.

- Inductive biases tailored to grid-based reasoning: For small, fixed-size grids like Sudoku, replacing self-attention with token mixing reduces model capacity and improves the bias-variance trade-off, while self-attention remains beneficial for larger, more complex grids.
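To make the effective-depth figure concrete, the short calculation below plugs in the example configuration quoted earlier (3 outer steps, 6 inner updates, a two-layer network). It assumes that every think or act pass runs through all layers of the network, so the layer count enters as a multiplier; `effective_depth` is a hypothetical helper name.

```python
def effective_depth(outer_steps, inner_updates, n_layers):
    """Layers traversed per unroll: each outer step runs inner_updates 'think' passes
    plus one 'act' pass, and every pass goes through all n_layers."""
    return outer_steps * (inner_updates + 1) * n_layers


print(effective_depth(outer_steps=3, inner_updates=6, n_layers=2))  # 3 * 7 * 2 = 42
```

Under that reading, a two-layer network behaves like a far deeper stack in terms of sequential computation while keeping the parameter count of two layers.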

Summary of Key Insights

- Model design: A compact, two-layer recursive solver with ~7 million parameters that alternates between latent “think” updates and “act” refinements, unrolled up to 16 steps with deep supervision and full gradient backpropagation through recursion.

- Benchmark achievements: TRM attains approximately 45% accuracy on ARC-AGI-1 and 8% on ARC-AGI-2 (44.6% and 7.8%, two attempts), outperforming several much larger language models under the same evaluation protocols.

- Efficiency and scalability: Demonstrates that allocating computational resources to iterative refinement at test time can outperform parameter scaling on symbolic and geometric reasoning tasks, providing a compact, from-scratch training recipe with publicly available code.

Final Thoughts

This study introduces a novel, efficient recursive reasoning architecture that achieves competitive performance on challenging ARC-AGI benchmarks with a fraction of the parameters used by large language models. While ARC-AGI remains an open challenge with targets far beyond current results, TRM’s approach highlights the potential of architectural efficiency and iterative refinement over brute-force scaling. The research team has made the implementation publicly accessible, encouraging further exploration and development in this promising direction.