Introducing Skala: A Neural Exchange-Correlation Functional for Enhanced Density Functional Theory

Summary: Skala is a deep-learning-based exchange-correlation (XC) functional for Kohn-Sham Density Functional Theory (DFT). It aims to deliver hybrid-functional accuracy at a computational cost comparable to semi-local methods. Skala reports a mean absolute error (MAE) of approximately 1.06 kcal/mol on the W4-17 benchmark (0.85 kcal/mol on its single-reference subset) and a weighted total mean absolute deviation (WTMAD-2) of about 3.89 kcal/mol on the GMTKN55 dataset, with a fixed D3(BJ) dispersion correction applied in these evaluations. The functional currently targets main-group molecular chemistry; extensions to transition metals and periodic systems are planned. The model and associated tools are accessible through Azure AI Foundry Labs and the open-source microsoft/skala repository.

Revolutionizing DFT with Neural Exchange-Correlation Functionals

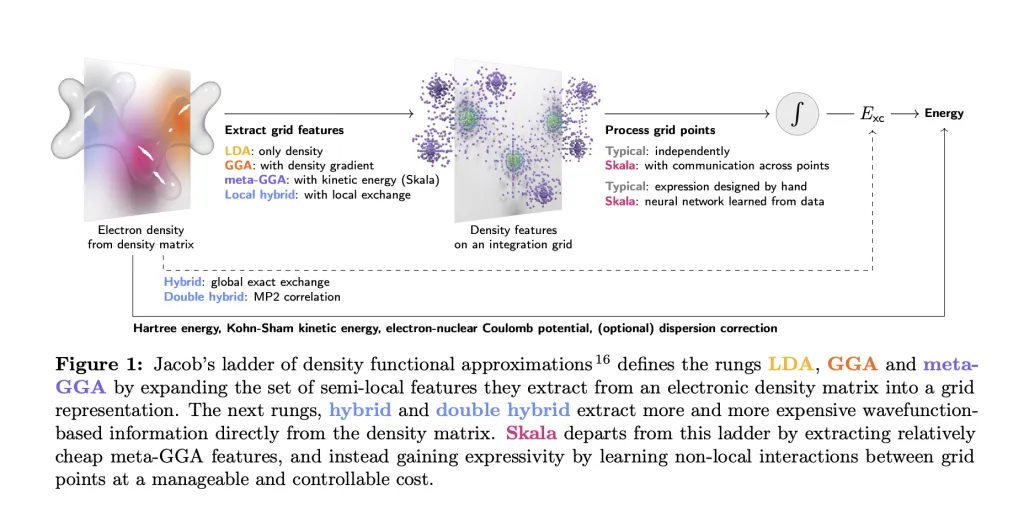

Microsoft Research has unveiled Skala, a neural network-based XC functional for Kohn-Sham DFT calculations. Unlike traditional handcrafted functionals, Skala uses deep learning to capture non-local electronic effects from data while preserving the computational efficiency typical of meta-GGA functionals. The goal is high accuracy in quantum chemical simulations without the computational cost of hybrid functionals.

Illustration of Skala’s architecture and performance metrics.

Understanding Skala’s Scope and Design Philosophy

Skala replaces conventional, manually designed XC functionals with a neural network evaluated on standard meta-GGA grid features. Importantly, this initial version does not attempt to model dispersion interactions directly; instead, it relies on a fixed dispersion correction, specifically the D3(BJ) scheme, for benchmark comparisons. The primary objective is to achieve rigorous thermochemical accuracy for main-group molecules at a computational cost similar to semi-local functionals, rather than providing a universal solution for all chemical systems from the outset.

Meta-GGA grid features serve as the input for Skala’s neural functional evaluation.
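To make "meta-GGA grid features" concrete, the following is a minimal sketch of the three standard ingredients (density, squared density gradient, kinetic energy density) evaluated on a numerical grid for the exact hydrogen 1s orbital. The grid, the function names, and the use of LDA exchange as a stand-in energy expression are all illustrative assumptions; none of this is Skala's actual code or feature pipeline.

```python
import numpy as np

def radial_grid(r_max=30.0, n=20001):
    """Uniform radial grid; weights include the 4*pi*r^2 volume element (trapezoid rule)."""
    r = np.linspace(0.0, r_max, n)
    dr = r[1] - r[0]
    w = np.full(n, dr)
    w[0] = w[-1] = 0.5 * dr
    return r, 4.0 * np.pi * r**2 * w

def hydrogen_features(r):
    """Meta-GGA ingredients for the hydrogen 1s orbital, in atomic units."""
    rho = np.exp(-2.0 * r) / np.pi   # electron density
    grad_sq = (2.0 * rho) ** 2       # |grad rho|^2, because d(rho)/dr = -2 * rho
    tau = 0.5 * rho                  # kinetic energy density, 0.5 * |grad psi|^2
    return rho, grad_sq, tau

def lda_exchange_density(rho):
    """Spin-unpolarized LDA exchange energy per volume (placeholder for a learned functional)."""
    c_x = 0.75 * (3.0 / np.pi) ** (1.0 / 3.0)
    return -c_x * rho ** (4.0 / 3.0)

r, weights = radial_grid()
rho, grad_sq, tau = hydrogen_features(r)

# Any semi-local functional is evaluated the same way: a weighted sum over grid points.
n_electrons = np.sum(weights * rho)
kinetic = np.sum(weights * tau)
e_x = np.sum(weights * lda_exchange_density(rho))
print(f"electrons={n_electrons:.6f}  kinetic={kinetic:.6f} Ha  E_x(LDA)={e_x:.4f} Ha")
```

A neural functional in this setting would replace `lda_exchange_density` with a learned function of the same per-point features; the quadrature over grid weights stays the same.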

Benchmarking Skala: Accuracy Meets Efficiency

Skala’s performance has been rigorously tested on prominent datasets. On the W4-17 atomization energy benchmark, it achieves an MAE of 1.06 kcal/mol across the full dataset and improves to 0.85 kcal/mol on the single-reference subset, indicating strong predictive power for well-behaved molecules. For the comprehensive GMTKN55 benchmark suite, Skala attains a WTMAD-2 score of 3.89 kcal/mol, placing it competitively alongside leading hybrid functionals. All these evaluations consistently apply the D3(BJ) dispersion correction to ensure fair comparisons.

Comparative performance of Skala against established functionals on benchmark datasets.
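For readers unfamiliar with the two metrics, here is a short sketch of how MAE and WTMAD-2 are computed. The WTMAD-2 formula follows the GMTKN55 definition, in which each subset's mean absolute deviation is scaled by 56.84 kcal/mol divided by that subset's mean absolute reference energy and weighted by the subset size. The subset names and numbers below are invented toy data, not Skala results.

```python
import numpy as np

def mean_absolute_error(predicted, reference):
    """Mean absolute error between predicted and reference energies (same units)."""
    predicted = np.asarray(predicted, dtype=float)
    reference = np.asarray(reference, dtype=float)
    return float(np.mean(np.abs(predicted - reference)))

def wtmad2(subsets, scale=56.84):
    """WTMAD-2 over a dict {name: (predicted, reference)} of benchmark subsets.

    Each subset's MAD is weighted by its size and by scale / mean(|reference|),
    so subsets with small reference energies are not drowned out by large ones.
    """
    total_points = sum(len(ref) for _, ref in subsets.values())
    weighted_sum = 0.0
    for predicted, reference in subsets.values():
        reference = np.asarray(reference, dtype=float)
        mad = mean_absolute_error(predicted, reference)
        mean_abs_ref = float(np.mean(np.abs(reference)))
        weighted_sum += len(reference) * (scale / mean_abs_ref) * mad
    return weighted_sum / total_points

# Hypothetical toy data in kcal/mol, for illustration only.
toy_subsets = {
    "large_energies": ([101.0, 199.0], [100.0, 200.0]),
    "small_energies": ([1.1, 1.9, 3.2], [1.0, 2.0, 3.0]),
}
print("WTMAD-2 (toy):", round(wtmad2(toy_subsets), 3))
```

Note how the small-energy subset dominates the toy score even though its absolute errors are much smaller; that is the intended effect of the weighting.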

Innovative Architecture and Training Methodology

Skala operates by first computing meta-GGA features on a standard numerical integration grid. It then processes these features through a finite-range, non-local neural operator that respects essential physical constraints such as the Lieb-Oxford bound, size-consistency, and coordinate scaling. The training pipeline unfolds in two stages:

- Pre-training: Utilizing B3LYP-generated electron densities paired with exchange-correlation labels derived from high-level wavefunction calculations.

- SCF-in-the-loop fine-tuning: Refining the model using Skala’s own self-consistent field (SCF) densities, without backpropagation through the SCF procedure.
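One general reason that skipping backpropagation through the SCF procedure can be reasonable is that, at a converged (stationary) density, the derivative of the energy with respect to the functional parameters equals the partial derivative taken with the density held fixed. The toy model below illustrates this with a single scalar standing in for the density. It is a sketch of the general principle under that assumption, not a description of Skala's training code or loss, and all names are hypothetical.

```python
def energy(theta, x):
    """Toy energy: theta stands in for functional parameters, x for the density."""
    return 0.5 * x**2 + 0.1 * x**4 - theta * x

def scf(theta, x0=0.0, tol=1e-13, max_iter=200):
    """Fixed-point iteration on dE/dx = 0, rewritten as x = theta - 0.4 * x**3."""
    x = x0
    for _ in range(max_iter):
        x_new = theta - 0.4 * x**3
        if abs(x_new - x) < tol:
            return x_new
        x = x_new
    raise RuntimeError("toy SCF did not converge")

def grad_frozen_density(theta):
    """dE/dtheta with x frozen at its converged value (no gradient through scf)."""
    x_star = scf(theta)
    return -x_star  # partial derivative of energy() with respect to theta

def grad_finite_difference(theta, h=1e-5):
    """Total derivative of the converged energy, re-running scf at theta +/- h."""
    e_plus = energy(theta + h, scf(theta + h))
    e_minus = energy(theta - h, scf(theta - h))
    return (e_plus - e_minus) / (2.0 * h)

theta = 0.5
print("frozen-density gradient:", grad_frozen_density(theta))
print("finite-difference check:", grad_finite_difference(theta))
```

The two numbers agree to within finite-difference error, because the contribution from the density's response to `theta` vanishes at the stationary point.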

The training dataset comprises approximately 150,000 high-fidelity labels, including around 80,000 coupled-cluster-quality total atomization energies (MSR-ACC/TAE). To prevent data leakage, benchmark sets such as W4-17 and GMTKN55 were excluded from the training corpus.

Computational Efficiency and Software Integration

Designed to maintain a semi-local computational scaling (approximately O(N³)), Skala is optimized for GPU acceleration through the GauXC library. The open-source repository provides:

- A PyTorch implementation and a microsoft-skala package available on PyPI, featuring integration hooks for PySCF and ASE.

- A GauXC plugin enabling seamless incorporation of Skala into various DFT software frameworks.

The model contains roughly 276,000 trainable parameters, and the documentation includes straightforward examples to facilitate adoption.

Practical Applications in Computational Chemistry

Skala is particularly suited for workflows involving main-group molecular systems where balancing computational cost and accuracy is critical. Typical use cases include:

- High-throughput screening of reaction energetics, including reaction energies and barrier heights.

- Ranking of conformer and radical stabilities.

- Prediction of molecular geometries and dipole moments to support QSAR modeling and lead optimization in drug discovery.

Thanks to its compatibility with PySCF, ASE, and GPU-accelerated GauXC, researchers can efficiently perform batched SCF calculations at near meta-GGA speeds, reserving more expensive hybrid or coupled-cluster methods for final validation. Skala is accessible for experimentation via Azure AI Foundry Labs and as an open-source package on GitHub and PyPI.
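As an illustration of the screen-then-validate workflow described above, the sketch below ranks conformers by relative energy, estimates Boltzmann populations, and selects the low-energy candidates to pass on to a more expensive method. The conformer names, energies, and the 2 kcal/mol window are hypothetical; the total energies would come from whatever SCF driver is in use, which is not shown here.

```python
import math

HARTREE_TO_KCAL = 627.5095   # kcal/mol per Hartree
GAS_CONSTANT = 0.0019872     # kcal/(mol K)

def rank_conformers(energies_hartree, temperature=298.15):
    """Return (name, relative energy in kcal/mol, Boltzmann population), lowest energy first."""
    e_min = min(energies_hartree.values())
    rel = {name: (e - e_min) * HARTREE_TO_KCAL for name, e in energies_hartree.items()}
    factors = {name: math.exp(-de / (GAS_CONSTANT * temperature)) for name, de in rel.items()}
    partition = sum(factors.values())
    ordered = sorted(rel, key=rel.get)
    return [(name, rel[name], factors[name] / partition) for name in ordered]

def select_for_validation(ranked, window_kcal=2.0):
    """Keep conformers within an energy window of the minimum for higher-level validation."""
    return [name for name, de, _ in ranked if de <= window_kcal]

# Hypothetical total energies in Hartree, for illustration only.
screened = {"conf_a": -154.1000, "conf_b": -154.0985, "conf_c": -154.0920}
ranked = rank_conformers(screened)
for name, de, pop in ranked:
    print(f"{name}: {de:5.2f} kcal/mol, population {pop:.3f}")
print("send to hybrid/coupled-cluster validation:", select_for_validation(ranked))
```

The window width is a judgment call: it should be comfortably larger than the expected error of the screening method, so that the true lowest-energy conformer is unlikely to be discarded.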

Summary of Key Features

- Accuracy: Achieves an MAE of 1.06 kcal/mol on W4-17 and a WTMAD-2 of 3.89 kcal/mol on GMTKN55, with dispersion corrections applied via D3(BJ).

- Methodology: Employs a neural XC functional with meta-GGA inputs and learned finite-range non-locality, respecting fundamental exact constraints while maintaining semi-local computational cost.

- Training Data: Trained on a large dataset of ~150,000 high-accuracy labels, including ~80,000 CCSD(T)/CBS-quality atomization energies; incorporates SCF-in-the-loop fine-tuning using Skala’s own densities.

Final Thoughts

Skala represents a significant advancement in the development of neural XC functionals, offering hybrid-level accuracy at a fraction of the computational expense. Its current focus on main-group molecular chemistry makes it a practical tool for many quantum chemistry applications. With open access through Azure AI Foundry Labs and comprehensive software integration, Skala invites the scientific community to explore and benchmark its capabilities against existing meta-GGA and hybrid functionals.