nanochat: A streamlined, lightweight codebase designed for reproducible and customizable large language model (LLM) training on a single multi-GPU system.

The pipeline covers every stage: tokenization, base pretraining, mid-training on conversational, multiple-choice, and tool-use data, Supervised Fine-Tuning (SFT), optional reinforcement learning (RL) on GSM8K, evaluation, and serving through both a command-line and a ChatGPT-style web interface. The reference hardware is an 8× NVIDIA H100 GPU node at roughly $24 per hour, so the full run of about four hours costs close to $100. When the run finishes, a report.md file summarizes the key metrics: CORE, ARC-Easy/Challenge, MMLU, GSM8K, HumanEval, and ChatCORE.

Advanced Tokenization and Dataset Management

- Tokenizer: Uses a custom Rust-based Byte Pair Encoding (BPE) tokenizer, built with Maturin, with a vocabulary of 65,536 tokens. Training data comes from FineWeb-EDU dataset shards, which are repackaged and shuffled for efficient access. The tokenizer compresses text to approximately 4.8 characters per token, a higher compression ratio than the GPT-2 and GPT-4 tokenizers.

- Evaluation Suite: Incorporates a curated evaluation bundle for the CORE benchmark, covering 22 autocompletion datasets such as HellaSwag, ARC, and BoolQ. These datasets are downloaded automatically to ~/.cache/nanochat/eval_bundle.
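To make the "characters per token" figure concrete, here is a minimal sketch of byte-level BPE training and of how a compression ratio can be measured. It is a toy Python illustration, not the project's Rust tokenizer: the function names (`train_bpe`, `apply_merge`, `encode`, `chars_per_token`) are hypothetical, and the regex pre-splitting and special tokens a production tokenizer would use are omitted.

```python
from collections import Counter


def apply_merge(tokens, pair):
    """Replace every adjacent occurrence of `pair` with one merged token."""
    out, i = [], 0
    while i < len(tokens):
        if i + 1 < len(tokens) and (tokens[i], tokens[i + 1]) == pair:
            out.append(tokens[i] + tokens[i + 1])
            i += 2
        else:
            out.append(tokens[i])
            i += 1
    return out


def train_bpe(text, num_merges):
    """Learn up to `num_merges` merges, most frequent adjacent pair first."""
    tokens = [bytes([b]) for b in text.encode("utf-8")]
    merges = []
    for _ in range(num_merges):
        pairs = Counter(zip(tokens, tokens[1:]))
        if not pairs:
            break  # nothing left to merge
        best = max(pairs, key=pairs.get)
        merges.append(best)
        tokens = apply_merge(tokens, best)
    return merges


def encode(text, merges):
    """Tokenize `text` by replaying the learned merges in order."""
    tokens = [bytes([b]) for b in text.encode("utf-8")]
    for pair in merges:
        tokens = apply_merge(tokens, pair)
    return tokens


def chars_per_token(text, merges):
    """Compression ratio: characters of input per emitted token."""
    return len(text) / len(encode(text, merges))


corpus = "the cat and the hat and the bat " * 20
merges = train_bpe(corpus, 50)
ratio = chars_per_token(corpus, merges)
```

With zero merges the ratio on ASCII text is exactly 1.0 (one token per byte); each learned merge can only raise it. A 65,536-token vocabulary corresponds to tens of thousands of such merges learned on real data.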

Model Architecture, Scaling Strategy, and Speedrun Objective

The default “speedrun” configuration trains a 20-layer Transformer with roughly 560 million parameters, a model dimension of 1280, and 10 attention heads of dimension 128 each. It is trained on approximately 11.2 billion tokens, following the Chinchilla-style rule of about 20 tokens per parameter. The training run is estimated to consume around 4×10^19 FLOPs of compute. Optimization uses Muon for the matrix parameters and AdamW for the embedding layers. Loss is reported in bits per byte (bpb) so that it stays comparable across tokenizers.
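The figures in this paragraph can be cross-checked with a few lines of arithmetic. The sketch below assumes the common approximation that training compute is about 6 × parameters × tokens; that rule of thumb is not stated in the text, and the helper `bits_per_byte` is a hypothetical name for the standard nats-to-bits conversion.

```python
import math

# Values taken from the text.
d_model = 1280
n_head = 10
params = 560e6
tokens_per_param = 20  # Chinchilla-style ratio

head_dim = d_model // n_head            # 1280 / 10 = 128
train_tokens = tokens_per_param * params  # about 11.2e9 tokens

# Assumption: training FLOPs ~= 6 * N * D (forward plus backward pass).
train_flops = 6 * params * train_tokens   # about 3.8e19, i.e. roughly 4e19


def bits_per_byte(total_loss_nats, total_bytes):
    """Convert summed cross-entropy (in nats) over a text to bits per byte.

    Dividing by the byte count of the text instead of the token count
    removes the dependence on how the tokenizer split that text.
    """
    return total_loss_nats / (math.log(2) * total_bytes)
```

Because the denominator counts bytes of the underlying text, two models with different vocabularies can be compared on the same bpb scale.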

Intermediate Training, Fine-Tuning, and Integrated Tool Usage

Following base pretraining, the model undergoes mid-training to specialize in conversational tasks using the SmolTalk dataset, alongside explicit training on multiple-choice questions with 100,000 auxiliary MMLU samples. Tool usage capabilities are introduced by embedding <|python_start|>...<|python_end|> code blocks, enabling the model to perform calculator-like operations, seeded with a subset of GSM8K problems. The combined training mixture includes 460K SmolTalk rows, 100K MMLU auxiliary questions, and 8K GSM8K examples, totaling 568K training instances.

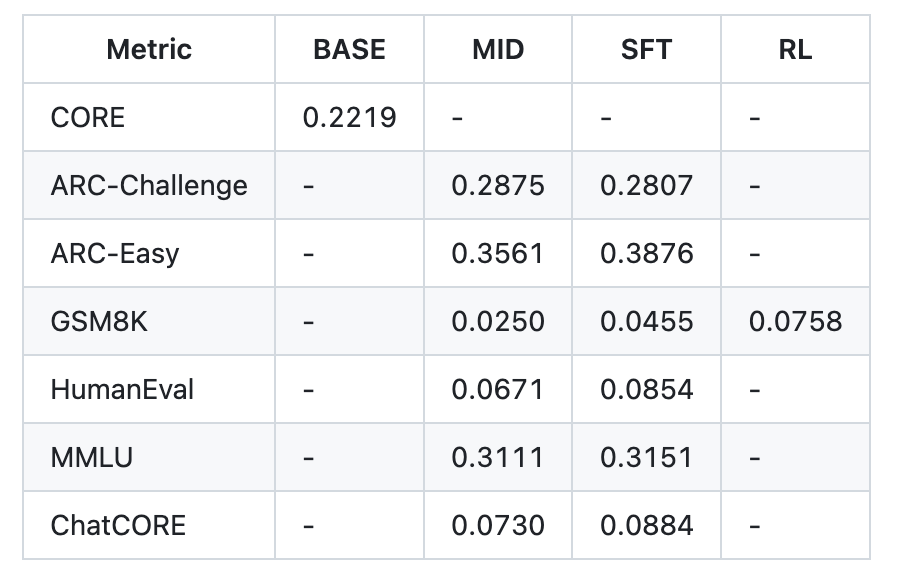

Subsequently, Supervised Fine-Tuning (SFT) refines the model on higher-quality conversational data, aligning training formats with inference-time expectations by using padded, non-concatenated inputs to minimize discrepancies. Post-SFT evaluation on the speedrun model reports scores such as ARC-Easy 38.76%, ARC-Challenge 28.07%, MMLU 31.51%, GSM8K 4.55%, HumanEval 8.54%, and ChatCORE 8.84%.
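The "padded, non-concatenated" input format can be sketched as a small collate function: each conversation occupies its own row, shorter rows are padded, and padding plus non-assistant tokens are excluded from the loss. This is an illustration under assumptions, not the project's code: `PAD_ID`, `IGNORE_INDEX`, and `collate_padded` are hypothetical names, and the mask convention (1 marks tokens to train on) is assumed.

```python
PAD_ID = 0         # assumed padding token id
IGNORE_INDEX = -1  # assumed target value that the loss skips


def collate_padded(conversations):
    """Build next-token inputs and targets, one conversation per row.

    `conversations` is a list of (token_ids, mask) pairs, where mask[i] == 1
    means token i is one the model should be trained to produce.
    """
    max_len = max(len(ids) for ids, _ in conversations) - 1
    inputs, targets = [], []
    for ids, mask in conversations:
        x = ids[:-1]  # model input
        # Target is the next token, kept only where the mask allows training.
        y = [t if m == 1 else IGNORE_INDEX for t, m in zip(ids[1:], mask[1:])]
        pad = max_len - len(x)
        inputs.append(x + [PAD_ID] * pad)
        targets.append(y + [IGNORE_INDEX] * pad)
    return inputs, targets


batch = [
    ([1, 2, 3, 4], [0, 0, 1, 1]),  # two prompt tokens, two assistant tokens
    ([5, 6], [0, 1]),              # one prompt token, one assistant token
]
inputs, targets = collate_padded(batch)
```

Unlike pretraining, where documents are typically concatenated into fixed-length rows, each row here starts at the beginning of a conversation, which is also what the model sees at inference time.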

Tool integration is fully supported through a custom Engine that manages key-value caching, prefill and decode inference steps, and a sandboxed Python interpreter, facilitating tool-augmented training and evaluation workflows.
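The calculator-style tool use can be illustrated with a short sketch that finds <|python_start|>...<|python_end|> spans, evaluates them, and appends the result. Several things here are assumptions: the output delimiters `<|output_start|>` and `<|output_end|>` do not appear in the text above, the function names are hypothetical, and the arithmetic-only evaluator stands in for a real sandboxed interpreter. It also post-processes a finished string, whereas an inference engine would inject the result during decoding.

```python
import ast
import operator

PY_START, PY_END = "<|python_start|>", "<|python_end|>"
OUT_START, OUT_END = "<|output_start|>", "<|output_end|>"  # assumed delimiters

_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def safe_eval(expr):
    """Evaluate +, -, *, / over numeric literals; reject everything else."""
    def walk(node):
        if isinstance(node, ast.Expression):
            return walk(node.body)
        if (isinstance(node, ast.Constant)
                and isinstance(node.value, (int, float))
                and not isinstance(node.value, bool)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -walk(node.operand)
        raise ValueError("unsupported expression")
    return walk(ast.parse(expr, mode="eval"))


def run_tool_calls(text):
    """Append an output span after every complete python span in `text`."""
    out, pos = [], 0
    while True:
        start = text.find(PY_START, pos)
        if start == -1:
            break
        end = text.find(PY_END, start)
        if end == -1:
            break  # unterminated span: leave the rest untouched
        expr = text[start + len(PY_START):end]
        close = end + len(PY_END)
        out.append(text[pos:close])
        try:
            result = str(safe_eval(expr))
        except (ValueError, SyntaxError, ZeroDivisionError):
            result = "error"
        out.append(OUT_START + result + OUT_END)
        pos = close
    out.append(text[pos:])
    return "".join(out)
```

For example, `run_tool_calls("12*4=<|python_start|>12*4<|python_end|>")` appends an output span containing `48`, while a call such as `__import__('os')` is rejected because only numeric literals and the four arithmetic operators are walked.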

Reinforcement Learning Enhancement via Simplified GRPO

An optional final phase applies reinforcement learning on the GSM8K dataset using a simplified Group Relative Policy Optimization (GRPO) algorithm. It strips down PPO-based RLHF by omitting trust region constraints, KL divergence penalties, and PPO ratio clipping. Instead, it performs on-policy updates with token-level normalization in the style of DAPO and uses mean-shifted advantages. In effect, the method is REINFORCE with group-relative advantage estimation. The scripts.chat_rl script runs this training loop, and scripts.chat_eval -i rl -a GSM8K evaluates the resulting model on GSM8K.
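The objective described above can be sketched in plain Python. This is a minimal illustration under assumptions, not the project's training code: the function names are hypothetical, rewards are taken to be one scalar per sampled completion, and the log-probabilities are supplied as plain lists rather than computed by a model.

```python
def mean_shifted_advantages(rewards):
    """Group-relative advantage: each reward minus the group mean."""
    mean = sum(rewards) / len(rewards)
    return [r - mean for r in rewards]


def policy_gradient_loss(token_logprobs, rewards):
    """REINFORCE-style loss over a group of samples for one prompt.

    `token_logprobs[i]` holds the log-probabilities of the tokens sampled in
    completion i. Every token in a completion shares that completion's
    advantage. The sum is divided by the total token count across the group
    (token-level normalization), not averaged per sequence first.
    There is no KL term, no ratio, and no clipping.
    """
    advantages = mean_shifted_advantages(rewards)
    total_tokens = sum(len(lp) for lp in token_logprobs)
    objective = sum(adv * sum(lp) for lp, adv in zip(token_logprobs, advantages))
    return -objective / total_tokens  # minimize the negative objective


# Two sampled answers to one GSM8K-style prompt: the first correct, the second not.
loss = policy_gradient_loss([[-1.0], [-3.0]], [1.0, 0.0])
```

Minimizing this loss raises the log-probability of completions that scored above the group mean and lowers it for those below. If every completion in a group receives the same reward, all advantages are zero and the group contributes no gradient.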

Cost-Performance Scaling and Larger Model Options

Beyond the economical ~$100 speedrun, the project outlines two expanded training tiers:

- Mid-tier (~$300): Increases model depth to 26 layers, requiring roughly 12 hours of training. This configuration slightly outperforms GPT-2 on the CORE benchmark by leveraging additional pretraining shards and larger batch sizes.

- High-tier (~$1,000): Extends training to approximately 41.6 hours, yielding significant improvements in model coherence, reasoning, and coding capabilities.
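The tier prices follow directly from the node's hourly rate. The sketch below uses the roughly $24 per hour figure quoted earlier in the text; the helper names are hypothetical.

```python
HOURLY_RATE_USD = 24.0  # 8×H100 node price quoted in the text


def run_cost(hours):
    """Cost in USD of keeping the node busy for `hours`."""
    return hours * HOURLY_RATE_USD


def hours_for_budget(budget_usd):
    """Wall-clock hours that a given budget buys at the quoted rate."""
    return budget_usd / HOURLY_RATE_USD


speedrun_cost = run_cost(4)            # 96.0, i.e. "about $100"
mid_tier_hours = hours_for_budget(300)    # 12.5, i.e. "roughly 12 hours"
high_tier_hours = hours_for_budget(1000)  # 41.66..., the "41.6 hours" in the text
```

The same arithmetic prices the reported 3 hour 51 minute speedrun at a little over $92.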

Previous experimental runs with a 30-layer model trained for 24 hours achieved notable results, including 40% accuracy on MMLU, 70% on ARC-Easy, and 20% on GSM8K, demonstrating the scalability of the approach.

Performance Summary from the Speedrun Configuration

The report.md generated after the ~4-hour, $100 training cycle highlights the following metrics:

- Base model CORE score after pretraining: 22.19%

- Change from mid-training to SFT: ARC-Easy rose from 35.61% to 38.76%, ARC-Challenge dipped from 28.75% to 28.07%, MMLU rose from 31.11% to 31.51%, GSM8K from 2.50% to 4.55%, HumanEval from 6.71% to 8.54%, and ChatCORE from 7.30% to 8.84%

- Total wall-clock training time: 3 hours and 51 minutes

Summary Insights

- nanochat offers a compact, end-to-end ChatGPT-style training and inference framework (~8,000 lines of code) that runs from a single speedrun.sh script on an 8×H100 GPU node within four hours at an estimated cost of $100.

- The pipeline encompasses a custom Rust BPE tokenizer, base pretraining, mid-training, supervised fine-tuning, optional reinforcement learning on GSM8K, comprehensive evaluation, and deployment through both CLI and web interfaces.

- Speedrun results demonstrate competitive performance on multiple benchmarks, with clear pathways for scaling to more powerful models at higher computational budgets.

- Scaling options provide flexible trade-offs between cost and model quality, enabling users to tailor training duration and resources to their specific needs.

Final Thoughts

nanochat strikes a practical balance by delivering a single, dependency-minimal repository that integrates tokenizer training, pretraining on FineWeb-EDU, mid-training with conversational and multiple-choice datasets enhanced by tool-use tokens, supervised fine-tuning, and an optional simplified reinforcement learning stage. The lightweight Engine supports efficient inference with key-value caching and a sandboxed Python interpreter, culminating in a reproducible, transparent training pipeline complete with detailed performance reporting and a minimalistic web UI.