This guide delves into the sophisticated features of PyTest, a leading Python testing framework renowned for its flexibility and power. We construct a compact project from the ground up, illustrating key concepts such as fixtures, markers, plugins, parameterization, and custom configurations. Our goal is to demonstrate how PyTest transcends being a mere test runner to become a comprehensive, adaptable testing ecosystem suitable for complex, real-world software development. By the end, you’ll gain insight not only into writing effective tests but also into tailoring PyTest’s functionality to meet diverse project requirements.

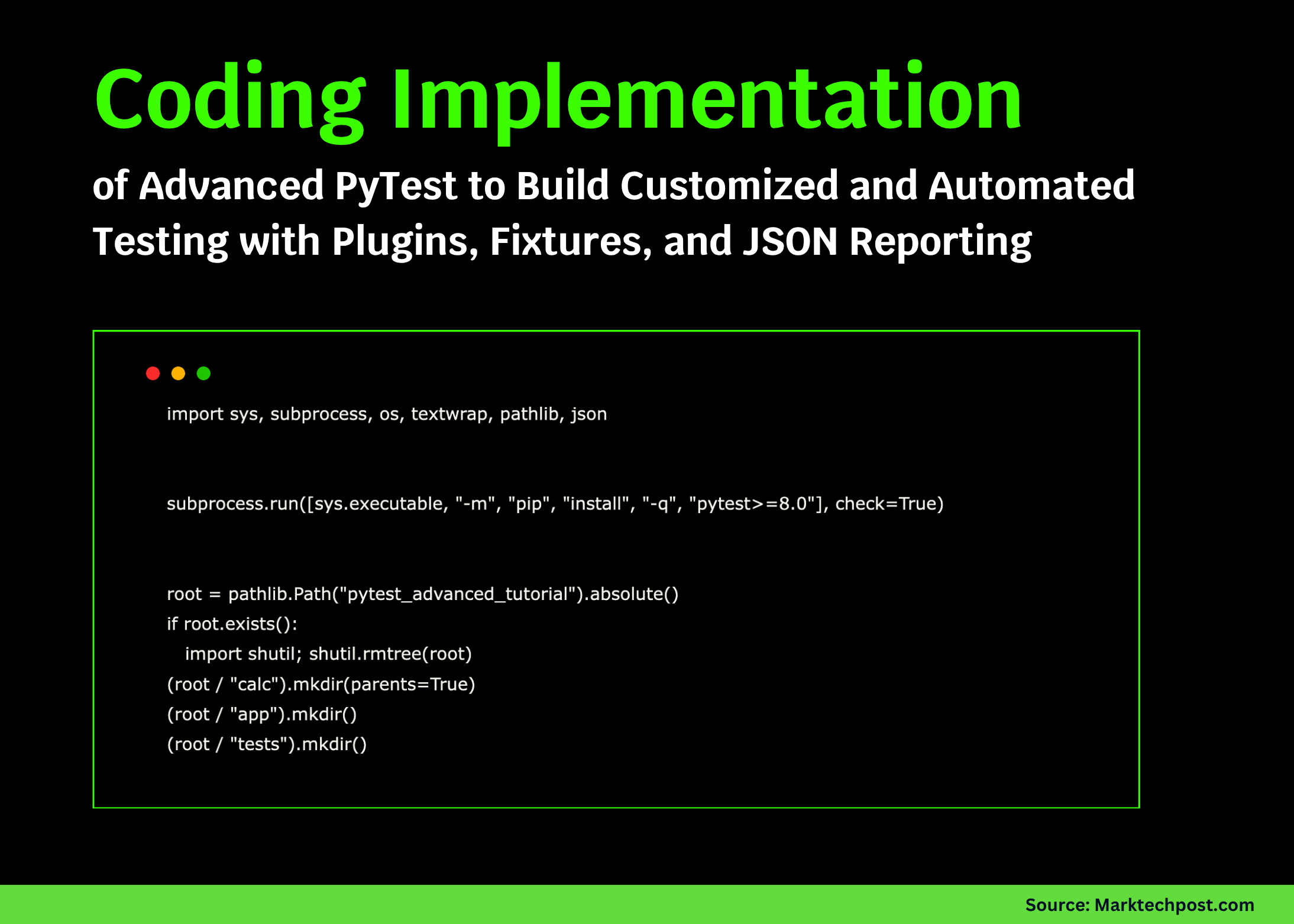

Establishing the Project Environment

We start by preparing our workspace, importing vital Python modules for file manipulation and subprocess management. To guarantee compatibility with the latest features, we install PyTest version 8.0 or higher. Next, we create a clean directory structure comprising folders for core logic, application components, and test cases. This organized layout lays a strong foundation for scalable and maintainable test development.

import sys, subprocess, os, textwrap, pathlib, json

subprocess.run([sys.executable, "-m", "pip", "install", "-q", "pytest>=8.0"], check=True)

root = pathlib.Path("pytestadvancedtutorial").absolute()

# Start from a clean slate so reruns do not pick up stale files.
if root.exists():
    import shutil
    shutil.rmtree(root)

(root / "calc").mkdir(parents=True)
(root / "app").mkdir()
(root / "tests").mkdir()
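
The file-writing pattern used throughout the rest of this guide (dedent a triple-quoted string, then write it to disk) can be wrapped in a small helper. The sketch below is an assumption for illustration only: `write_file` is a hypothetical name that does not appear in the tutorial's script, and it writes into a temporary directory so it can be run on its own.

```python
import pathlib
import tempfile
import textwrap


def write_file(path, content):
    """Dedent `content`, strip surrounding blank lines, and write it with one trailing newline.

    Hypothetical helper; parent directories are created as needed.
    """
    path = pathlib.Path(path)
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(textwrap.dedent(content).strip() + "\n")
    return path


# Usage: build a tiny throwaway layout in a temporary directory.
demo_root = pathlib.Path(tempfile.mkdtemp())
demo_file = write_file(demo_root / "calc" / "__init__.py", """
    def add(a, b):
        return a + b
""")
print(demo_file.read_text())
```

Stripping and re-adding the final newline keeps every generated file ending in exactly one newline, regardless of how the triple-quoted string was laid out.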

Configuring PyTest with Custom Plugins and Markers

To enhance test management, we define a pytest.ini file specifying markers for categorizing tests, such as slow, io, and api. We also set default command-line options to streamline test execution. Complementing this, a conftest.py plugin extends PyTest’s capabilities by introducing a --runslow flag to selectively run slow tests, tracking test outcomes, and providing reusable fixtures. This modular approach empowers us to customize test discovery and reporting effectively.

(root / "pytest.ini").write_text(textwrap.dedent("""
    [pytest]
    # No -m "not slow" filter here: it would deselect slow tests even with --runslow.
    # The conftest.py hook skips them instead unless --runslow is given.
    addopts = -q -ra --maxfail=1
    testpaths = tests
    markers =
        slow: marks tests as slow (use --runslow to execute)
        io: tests involving file system operations
        api: tests mocking external API calls
""").strip() + "\n")

(root / "conftest.py").write_text(textwrap.dedent(r'''
    import os, time, pytest

    # Shared tally. Report objects carry no reference to config, so the report
    # hook updates this module-level dict, which is also exposed as config.summary.
    SUMMARY = {"passed": 0, "failed": 0, "skipped": 0, "slowran": 0}

    def pytest_addoption(parser):
        parser.addoption("--runslow", action="store_true", help="execute slow tests")

    def pytest_configure(config):
        config.addinivalue_line("markers", "slow: marks slow tests")
        config.summary = SUMMARY

    def pytest_collection_modifyitems(config, items):
        if config.getoption("--runslow"):
            return
        skipslow = pytest.mark.skip(reason="need --runslow option to run")
        for item in items:
            if "slow" in item.keywords:
                item.add_marker(skipslow)

    def pytest_runtest_logreport(report):
        summary = SUMMARY
        if report.when == "call":
            if report.passed:
                summary["passed"] += 1
            elif report.failed:
                summary["failed"] += 1
            elif report.skipped:
                summary["skipped"] += 1
            if "slow" in report.keywords and report.passed:
                summary["slowran"] += 1
        elif report.when == "setup" and report.skipped:
            # Marker-based skips are reported during setup, not call.
            summary["skipped"] += 1

    def pytest_terminal_summary(terminalreporter, exitstatus, config):
        s = config.summary
        terminalreporter.write_sep("=", "SESSION SUMMARY (custom plugin)")
        terminalreporter.write_line(f"Passed: {s['passed']} | Failed: {s['failed']} | Skipped: {s['skipped']}")
        terminalreporter.write_line(f"Slow tests executed: {s['slowran']}")
        if s["failed"] == 0:
            terminalreporter.write_line("All tests passed successfully ✅")
        else:
            terminalreporter.write_line("Some tests failed ❌")

    @pytest.fixture(scope="session")
    def settings():
        return {"env": "production", "maxretries": 2}

    @pytest.fixture(scope="function")
    def eventlog():
        logs = []
        yield logs
        # Runs after the test body, as teardown.
        print("\nEVENT LOG:", logs)

    @pytest.fixture
    def tempjsonfile(tmp_path):
        path = tmp_path / "data.json"
        path.write_text('{"message": "hello"}')
        return path

    @pytest.fixture
    def fakeclock(monkeypatch):
        currenttime = {"now": 1000.0}
        monkeypatch.setattr(time, "time", lambda: currenttime["now"])
        return currenttime
'''))
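
One detail matters when counting outcomes in `pytest_runtest_logreport`: pytest emits a separate report for the setup, call, and teardown phase of each test, and a test skipped by a marker is reported as skipped during setup, so it never reaches the call phase. The sketch below replays that counting rule on stand-in objects so it can be run without pytest. `FakeReport` and `tally` are hypothetical names, and the real report object has more attributes than the two modeled here.

```python
from dataclasses import dataclass


@dataclass
class FakeReport:
    """Stand-in for a pytest report: only the fields the tally needs."""
    when: str      # "setup", "call", or "teardown"
    outcome: str   # "passed", "failed", or "skipped"


def tally(reports):
    """Count one outcome per test from a stream of per-phase reports."""
    summary = {"passed": 0, "failed": 0, "skipped": 0}
    for report in reports:
        if report.when == "call":
            # The test body ran: count whatever happened there.
            summary[report.outcome] += 1
        elif report.when == "setup" and report.outcome == "skipped":
            # Marker-based skips never reach the call phase.
            summary["skipped"] += 1
    return summary


reports = [
    # A passing test produces three reports.
    FakeReport("setup", "passed"), FakeReport("call", "passed"), FakeReport("teardown", "passed"),
    # A test skipped by a marker produces setup and teardown reports only.
    FakeReport("setup", "skipped"), FakeReport("teardown", "passed"),
    # A failing test.
    FakeReport("setup", "passed"), FakeReport("call", "failed"), FakeReport("teardown", "passed"),
]
print(tally(reports))  # {'passed': 1, 'failed': 1, 'skipped': 1}
```

A tally that only looks at the call phase would report zero skipped tests for this stream, which is why the setup phase is checked as well.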

Developing Core Calculation Utilities

Within the calc package, we implement fundamental mathematical functions such as addition, division with zero-division safeguards, and a moving average calculator. Additionally, we introduce a Vector class that supports vector arithmetic, equality comparisons with precision tolerance, and magnitude calculation. These components serve as practical examples for testing both simple functions and complex objects.

(root / "calc" / "__init__.py").write_text(textwrap.dedent('''
    from .vector import Vector

    def add(a, b):
        return a + b

    def div(a, b):
        if b == 0:
            raise ZeroDivisionError("division by zero")
        return a / b

    def movingavg(values, windowsize):
        if windowsize <= 0 or windowsize > len(values):
            raise ValueError("invalid window size")
        averages = []
        windowsum = sum(values[:windowsize])
        averages.append(windowsum / windowsize)
        # Slide the window: add the entering value, drop the leaving one.
        for i in range(windowsize, len(values)):
            windowsum += values[i] - values[i - windowsize]
            averages.append(windowsum / windowsize)
        return averages
'''))

(root / "calc" / "vector.py").write_text(textwrap.dedent('''
    class Vector:
        __slots__ = ("x", "y", "z")

        def __init__(self, x=0, y=0, z=0):
            self.x, self.y, self.z = float(x), float(y), float(z)

        def __add__(self, other):
            return Vector(self.x + other.x, self.y + other.y, self.z + other.z)

        def __sub__(self, other):
            return Vector(self.x - other.x, self.y - other.y, self.z - other.z)

        def __mul__(self, scalar):
            return Vector(self.x * scalar, self.y * scalar, self.z * scalar)

        __rmul__ = __mul__

        def norm(self):
            return (self.x**2 + self.y**2 + self.z**2) ** 0.5

        def __eq__(self, other):
            # Compare component-wise within a small absolute tolerance.
            tolerance = 1e-9
            return (abs(self.x - other.x) < tolerance and
                    abs(self.y - other.y) < tolerance and
                    abs(self.z - other.z) < tolerance)

        def __repr__(self):
            return f"Vector({self.x:.2f}, {self.y:.2f}, {self.z:.2f})"
'''))
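
The moving average reuses the previous window's sum: each step adds the value entering the window and subtracts the one leaving it. As a worked check, the sketch below compares that approach with a naive version that re-sums every window. Both function names are hypothetical stand-ins written for this example.

```python
def moving_avg_sliding(values, window):
    """Moving average via a running sum: one add and one subtract per step."""
    if window <= 0 or window > len(values):
        raise ValueError("invalid window size")
    window_sum = sum(values[:window])
    averages = [window_sum / window]
    for i in range(window, len(values)):
        window_sum += values[i] - values[i - window]
        averages.append(window_sum / window)
    return averages


def moving_avg_naive(values, window):
    """Reference version: re-sum each window from scratch."""
    return [sum(values[i:i + window]) / window
            for i in range(len(values) - window + 1)]


data = [1, 2, 3, 4, 5]
# Windows are [1, 2, 3], [2, 3, 4], [3, 4, 5] with sums 6, 9, 12.
print(moving_avg_sliding(data, 3))  # [2.0, 3.0, 4.0]
print(moving_avg_naive(data, 3))    # [2.0, 3.0, 4.0]
```

Comparing an optimized routine against a slow but obviously correct reference is a common way to build test expectations without computing them by hand.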

Implementing Application Utilities and Mock APIs

To simulate real-world scenarios, we add utility functions for JSON file operations and a timing wrapper to measure execution duration. We also create a mock API function that returns user names, with behavior toggled by an environment variable to simulate offline mode. These utilities enable us to test I/O and external dependencies without relying on actual services.

(root / "app" / "ioutils.py").write_text(textwrap.dedent('''
    import json
    import pathlib
    import time

    def savejson(path, obj):
        path = pathlib.Path(path)
        path.write_text(json.dumps(obj))
        return path

    def loadjson(path):
        return json.loads(pathlib.Path(path).read_text())

    def timedoperation(func, *args, **kwargs):
        # Returns the function result together with the elapsed wall-clock time.
        start = time.time()
        result = func(*args, **kwargs)
        end = time.time()
        return result, end - start
'''))

(root / "app" / "api.py").write_text(textwrap.dedent('''
    import os
    import time
    import random

    def fetchusername(userid):
        if os.environ.get("APIMODE") == "offline":
            return f"cached{userid}"
        time.sleep(0.001)
        return f"user{userid}{random.randint(100, 999)}"
'''))
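
The offline switch works because the function reads the environment variable each time it is called, so a test can flip it temporarily. The sketch below shows the same idea with the standard library's `unittest.mock.patch.dict`, which restores the environment when the block exits, much as pytest's `monkeypatch.setenv` undoes its change after the test. `fetch_name` is a simplified, hypothetical stand-in for the mock API.

```python
import os
import random
from unittest import mock


def fetch_name(userid):
    """Simplified stand-in for the mock API: cached value when offline."""
    if os.environ.get("APIMODE") == "offline":
        return f"cached{userid}"
    return f"user{userid}{random.randint(100, 999)}"


before = os.environ.get("APIMODE")

# Inside the block the variable is forced to "offline" ...
with mock.patch.dict(os.environ, {"APIMODE": "offline"}):
    offline_name = fetch_name(9)

# ... and afterwards the environment is back to its previous state.
after = os.environ.get("APIMODE")

print(offline_name)     # cached9
print(before == after)  # True
```

Only the offline branch is asserted on exactly, since the online branch appends a random number and is better checked by prefix or pattern.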

Crafting Comprehensive Test Suites

Our test modules use parameterized tests, expected failures, markers, and fixtures. We validate arithmetic functions, vector operations, JSON I/O, timing utilities, and API behavior. A final slow test combines the function-scoped event-log fixture with the fake clock, showing how fixtures can carry state through a test and simulate the passage of time without sleeping.

(root / "tests" / "test_calc.py").write_text(textwrap.dedent('''
    import pytest
    from calc import add, div, movingavg
    from calc.vector import Vector

    @pytest.mark.parametrize("a, b, expected", [(1, 2, 3), (0, 0, 0), (-1, 1, 0)])
    def testadd(a, b, expected):
        assert add(a, b) == expected

    @pytest.mark.parametrize("a, b, expected", [(6, 3, 2), (8, 2, 4)])
    def testdiv(a, b, expected):
        assert div(a, b) == expected

    # Reported as xfailed: the ZeroDivisionError is the expected outcome.
    @pytest.mark.xfail(raises=ZeroDivisionError)
    def testdivzero():
        div(1, 0)

    def testmovingavg():
        assert movingavg([1, 2, 3, 4, 5], 3) == [2, 3, 4]

    def testvectoroperations():
        v = Vector(1, 2, 3) + Vector(4, 5, 6)
        assert v == Vector(5, 7, 9)
'''))

(root / "tests" / "test_io_api.py").write_text(textwrap.dedent('''
    import pytest
    import os
    from app.ioutils import savejson, loadjson, timedoperation
    from app.api import fetchusername

    @pytest.mark.io
    def testiooperations(tempjsonfile, tmp_path):
        data = {"x": 5}
        path = tmp_path / "a.json"
        savejson(path, data)
        assert loadjson(path) == data
        assert loadjson(tempjsonfile) == {"message": "hello"}

    def testtimedoperation(capsys):
        value, duration = timedoperation(lambda x: x * 3, 7)
        print(f"Duration={duration}")
        captured = capsys.readouterr().out
        assert "Duration=" in captured and value == 21

    @pytest.mark.api
    def testapibehavior(monkeypatch):
        monkeypatch.setenv("APIMODE", "offline")
        assert fetchusername(9) == "cached9"
'''))

(root / "tests" / "test_slow.py").write_text(textwrap.dedent('''
    import pytest

    @pytest.mark.slow
    def testslowprocess(eventlog, fakeclock):
        eventlog.append(f"start@{fakeclock['now']}")
        # Advance the fake clock instead of sleeping.
        fakeclock["now"] += 3.0
        eventlog.append(f"end@{fakeclock['now']}")
        assert len(eventlog) == 2
'''))
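
The fake clock pattern makes time-dependent code deterministic: the test owns a mutable value, and `time.time` is replaced by a function that returns it. A minimal sketch using the standard library's `unittest.mock.patch` follows. `timed` is a hypothetical stand-in for the timing wrapper, and `work` advances the clock by hand to simulate three seconds passing.

```python
import time
from unittest import mock


def timed(func, *args, **kwargs):
    """Return (result, elapsed seconds) using time.time as the clock."""
    start = time.time()
    result = func(*args, **kwargs)
    return result, time.time() - start


clock = {"now": 1000.0}


def work(x):
    # Simulate three seconds passing while the function runs.
    clock["now"] += 3.0
    return x * 3


# Replace time.time only inside the block; the real clock is restored on exit.
with mock.patch("time.time", lambda: clock["now"]):
    value, elapsed = timed(work, 7)

print(value, elapsed)  # 21 3.0
```

Because the elapsed time is computed from values the test controls, the assertion can be exact rather than a loose upper bound.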

Executing Tests and Summarizing Results

We execute the test suite twice: first with the default settings, under which slow tests are skipped, and then with the --runslow option to include them. After both runs, the script writes a JSON summary recording the number of test files, the options used for each run, and whether each run succeeded. Two entries, the slow-test flag and the sample event log, are fixed illustrative values rather than data collected from the runs.

print("📦 Project directory created at:", root)

print("\n▶ RUN #1 (default, slow tests skipped)\n")
result1 = subprocess.run([sys.executable, "-m", "pytest", str(root)], text=True)

print("\n▶ RUN #2 (including slow tests with --runslow)\n")
result2 = subprocess.run([sys.executable, "-m", "pytest", str(root), "--runslow"], text=True)

summaryfile = root / "summary.json"
summary = {
    "totaltestfiles": sum("test" in str(p) for p in root.rglob("test*.py")),
    "testruns": ["default", "--runslow"],
    "results": ["success" if result1.returncode == 0 else "fail",
                "success" if result2.returncode == 0 else "fail"],
    # The next two entries are fixed illustrative values, not collected from the runs.
    "slowtestspresent": True,
    "sampleeventlog": ["start@1000.0", "end@1003.0"]
}
summaryfile.write_text(json.dumps(summary, indent=2))

print("\n📊 FINAL SUMMARY")
print(json.dumps(summary, indent=2))

# Report success only if both pytest runs exited cleanly.
if result1.returncode == 0 and result2.returncode == 0:
    print("\n✅ Tutorial complete - both runs passed and the summary was written.")
else:
    print("\n❌ At least one run failed - see the pytest output above.")
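
The driver treats any non-zero return code as a failure, but pytest distinguishes several cases through its exit code (exposed in pytest as `pytest.ExitCode`). The sketch below mirrors those documented values in a local enum so that it runs without pytest installed. `PytestExit` and `describe` are hypothetical names for this example.

```python
from enum import IntEnum


class PytestExit(IntEnum):
    """Local mirror of pytest's documented exit codes."""
    OK = 0
    TESTS_FAILED = 1
    INTERRUPTED = 2
    INTERNAL_ERROR = 3
    USAGE_ERROR = 4
    NO_TESTS_COLLECTED = 5


def describe(returncode):
    """Map a subprocess return code to a short label for the summary."""
    try:
        return PytestExit(returncode).name.lower()
    except ValueError:
        # Codes outside the documented set are reported as unknown.
        return f"unknown({returncode})"


print(describe(0))   # ok
print(describe(5))   # no_tests_collected
print(describe(42))  # unknown(42)
```

Recording `describe(result1.returncode)` in the summary instead of a bare "fail" would separate genuine test failures from collection or usage problems.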

Final Thoughts: Harnessing PyTest for Scalable Testing

This tutorial shows how PyTest supports maintainable, modular test suites. A custom plugin monitors test outcomes, fixtures provide stateful test data, and a command-line option controls whether slow tests run. The JSON summary written by the driver script is one simple way to pass results to continuous integration systems or analytics tools. From here, you can extend the setup with coverage analysis, performance benchmarking, or parallel execution.