Do you really need a massive vision-language model when Alibaba’s latest Qwen3-VL 4B and 8B dense models run within limited VRAM and support a native 256K-token context window that can be extended to 1 million tokens? The Qwen team at Alibaba has broadened its multimodal AI portfolio with these compact models, each available in two modes, Instruct and Thinking, plus FP8-quantized checkpoints designed for resource-constrained environments. The new releases complement the previously launched 30B and 235B Mixture-of-Experts (MoE) models and keep the same capability set: image and video understanding, optical character recognition (OCR), spatial reasoning, and graphical user interface (GUI)/agent control.

Introducing the New Compact Qwen3-VL Models

Model Variants and Specifications

The latest additions to the Qwen3-VL lineup are four dense models: Qwen3-VL-4B and Qwen3-VL-8B, each offered in Instruct and Thinking configurations. All four also ship as FP8-quantized checkpoints, enabling deployment on devices with limited memory while keeping performance close to the original BF16 weights. Official documentation describes them as “compact, dense” models with reduced VRAM requirements that preserve the full Qwen3-VL feature set.
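To make the variant matrix concrete, here is a small Python sketch that enumerates the checkpoints implied above: two sizes, two modes, each with a BF16 and an FP8 release. The repo naming pattern `Qwen/Qwen3-VL-{size}-{mode}[-FP8]` is an assumption based on the public Hugging Face listings; confirm the exact IDs before downloading.

```python
from itertools import product

# Assumption: Hugging Face repo IDs follow "Qwen/Qwen3-VL-{size}-{mode}",
# with an optional "-FP8" suffix for the quantized checkpoints.
SIZES = ("4B", "8B")
MODES = ("Instruct", "Thinking")


def checkpoint_ids(include_fp8: bool = True) -> list[str]:
    """Return repo IDs for every size/mode pair, optionally including FP8 variants."""
    ids = []
    for size, mode in product(SIZES, MODES):
        base = f"Qwen/Qwen3-VL-{size}-{mode}"
        ids.append(base)
        if include_fp8:
            ids.append(f"{base}-FP8")
    return ids


if __name__ == "__main__":
    for repo_id in checkpoint_ids():
        print(repo_id)
```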

Extended Context and Multimodal Capabilities

Both the 4B and 8B models natively support a 256K-token context window, which can be extended to 1 million tokens. This long context enables understanding of lengthy documents and long videos. The models also support OCR in 32 languages, 2D and 3D spatial grounding, visual programming, and agent-based GUI control on desktop and mobile platforms. In short, the smaller models keep the same capability set as their larger counterparts.
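One reason long contexts are expensive is the key/value (KV) cache, which grows linearly with the number of tokens. The sketch below estimates that cost with the standard formula (2 × layers × KV heads × head dimension × tokens × bytes per element). The decoder shape used here is hypothetical and chosen only for illustration; it is not read from the Qwen3-VL configuration, and the estimate ignores the vision encoder and activations.

```python
def kv_cache_bytes(num_tokens: int, num_layers: int, num_kv_heads: int,
                   head_dim: int, bytes_per_elem: int = 2) -> int:
    """Bytes needed to cache keys and values for num_tokens tokens.

    Each layer stores one key and one value vector (factor 2) per KV head per token.
    """
    return 2 * num_layers * num_kv_heads * head_dim * num_tokens * bytes_per_elem


GIB = 1024 ** 3

# Hypothetical decoder shape for illustration only; read the real values
# from the model's config.json before relying on these numbers.
LAYERS, KV_HEADS, HEAD_DIM = 36, 8, 128

# "256K" is taken as 256 * 1024 tokens and "1M" as 1024 * 1024 tokens.
for label, tokens in (("256K", 256 * 1024), ("1M", 1024 * 1024)):
    size = kv_cache_bytes(tokens, LAYERS, KV_HEADS, HEAD_DIM, bytes_per_elem=2)
    print(f"{label}: {size / GIB:.0f} GiB (16-bit cache)")
```

With these illustrative numbers the script prints 36 GiB for 256K tokens and 144 GiB for 1M tokens, which suggests that using the full extended window takes far more memory than the weights alone.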

Architectural Innovations

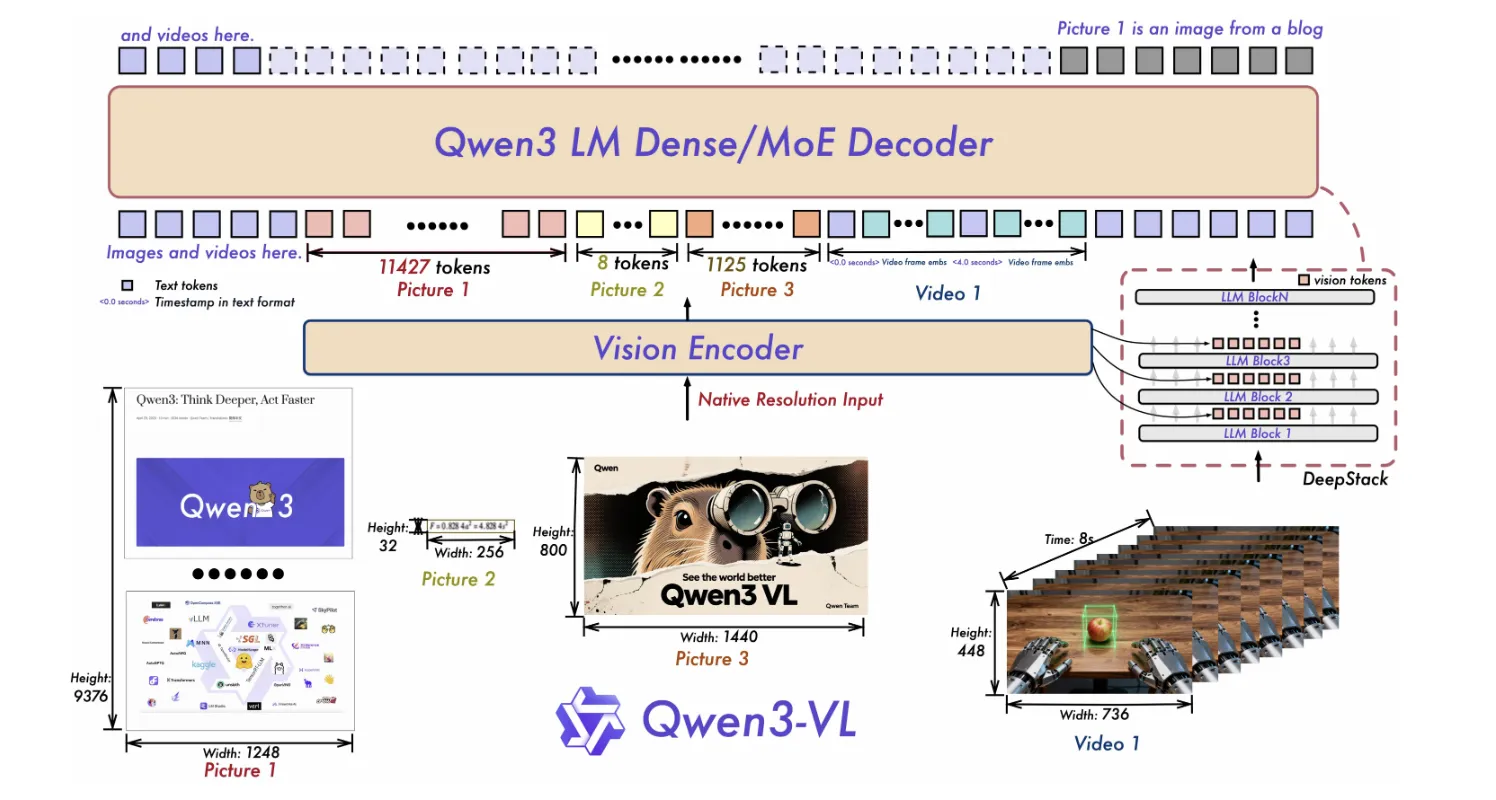

Qwen3-VL incorporates several architectural enhancements. Interleaved-MRoPE allocates rotary positional frequencies across time, height, and width in an interleaved pattern, giving each axis the full frequency range, which is important for reasoning over long videos. The DeepStack module fuses features from multiple Vision Transformer (ViT) levels to capture fine detail and tighten alignment between visual and textual data. Text-Timestamp Alignment moves beyond T-RoPE to ground events in a video at precise timestamps. These innovations are applied consistently across all model sizes.
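The difference between a contiguous and an interleaved frequency layout can be shown with a toy Python sketch. It assumes standard RoPE inverse frequencies and, for comparison, a simplified contiguous split into roughly equal thirds; the real MRoPE section sizes and Qwen3-VL's exact interleaving are not specified here, so treat this as an illustration of the idea rather than Qwen's implementation.

```python
import math

AXES = ("t", "h", "w")  # time, height, width


def inv_freqs(head_dim: int, base: float = 10000.0) -> list[float]:
    """Standard RoPE inverse frequencies, one per pair of channels (highest first)."""
    return [base ** (-2 * i / head_dim) for i in range(head_dim // 2)]


def axis_assignment(num_freqs: int, interleaved: bool) -> list[str]:
    """Map each frequency index to the axis whose coordinate drives it."""
    if interleaved:
        # Cycle t, h, w so every axis gets both high and low frequencies.
        return [AXES[i % 3] for i in range(num_freqs)]
    # Simplified contiguous split: each axis owns one band of the spectrum.
    chunk = math.ceil(num_freqs / 3)
    return [AXES[min(i // chunk, 2)] for i in range(num_freqs)]


def rotary_angles(pos: dict[str, int], head_dim: int, interleaved: bool = True) -> list[float]:
    """Rotation angle for each channel pair given a token's (t, h, w) position."""
    freqs = inv_freqs(head_dim)
    axes = axis_assignment(len(freqs), interleaved)
    return [pos[axis] * f for axis, f in zip(axes, freqs)]


# A video patch at frame 5, row 2, column 3, with a tiny head_dim of 12.
print(axis_assignment(6, interleaved=True))   # ['t', 'h', 'w', 't', 'h', 'w']
print(axis_assignment(6, interleaved=False))  # ['t', 't', 'h', 'h', 'w', 'w']
print(rotary_angles({"t": 5, "h": 2, "w": 3}, head_dim=12))
```

With interleaving, the temporal coordinate drives channel pairs spread across the whole spectrum instead of only the highest-frequency band, which is the intuition behind full-frequency allocation for long videos.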

Release Timeline

The Qwen3-VL 4B and 8B models, in both Instruct and Thinking variants, were officially released on October 15, 2025. The launch follows the earlier release of the 30B MoE tier and the Qwen team's broader rollout of FP8 checkpoints.

FP8 Quantization: Enhancing Efficiency Without Compromise

Precision and Performance

The FP8 checkpoints use fine-grained quantization with a block size of 128, and their reported metrics are nearly identical to those of the original BF16 checkpoints. Because each block gets its own scale factor, an outlier value only reduces precision within its own block rather than across the whole tensor, which matters for accuracy in complex multimodal tasks such as vision encoding, cross-modal fusion, and long-context attention. By publishing ready-made FP8 weights, Alibaba spares users from running their own quantization and validation, streamlining deployment.
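The following pure-Python sketch shows what fine-grained (block-wise) FP8 quantization does: each block of 128 values gets its own scale so that its largest magnitude maps to the E4M3 maximum of 448, and the scaled values are then rounded to the E4M3 grid. For simplicity it uses 1-D blocks of consecutive values and keeps the rounded values as Python floats; the released checkpoints may tile weight matrices differently (for example in 2-D blocks) and store real 8-bit codes, so the layout here is an assumption.

```python
import math

FP8_E4M3_MAX = 448.0  # largest finite magnitude in the FP8 E4M3 format
BLOCK_SIZE = 128      # block size quoted for the Qwen3-VL FP8 checkpoints


def round_to_e4m3(x: float) -> float:
    """Round x to the nearest FP8 E4M3 value, saturating at +/-448."""
    if x == 0.0:
        return 0.0
    sign = -1.0 if x < 0 else 1.0
    a = min(abs(x), FP8_E4M3_MAX)
    _, e = math.frexp(a)      # a = m * 2**e with 0.5 <= m < 1
    exp = max(e - 1, -6)      # below 2**-6 the format is subnormal with a fixed step
    step = 2.0 ** (exp - 3)   # 3 mantissa bits give 8 steps per power of two
    return sign * min(round(a / step) * step, FP8_E4M3_MAX)


def quantize_blockwise(values: list[float], block_size: int = BLOCK_SIZE):
    """Scale each block so its max magnitude maps to 448, then round to E4M3.

    Returns the rounded values (kept as Python floats) and one scale per block.
    """
    codes: list[float] = []
    scales: list[float] = []
    for start in range(0, len(values), block_size):
        block = values[start:start + block_size]
        amax = max(abs(v) for v in block)
        scale = amax / FP8_E4M3_MAX if amax > 0 else 1.0
        scales.append(scale)
        codes.extend(round_to_e4m3(v / scale) for v in block)
    return codes, scales


def dequantize_blockwise(codes: list[float], scales: list[float],
                         block_size: int = BLOCK_SIZE) -> list[float]:
    """Multiply each value by the scale of the block it belongs to."""
    return [c * scales[i // block_size] for i, c in enumerate(codes)]


# Two blocks with very different magnitudes: small values, then large ones.
values = [0.001 * (i + 1) for i in range(128)] + [100.0 * (i + 1) for i in range(128)]
codes, scales = quantize_blockwise(values)
restored = dequantize_blockwise(codes, scales)

# Per-tensor baseline: a single block (one scale) spanning the whole list.
t_codes, t_scales = quantize_blockwise(values, block_size=len(values))
tensor_restored = dequantize_blockwise(t_codes, t_scales, block_size=len(values))
print(restored[0], tensor_restored[0])
```

The example illustrates the design choice: with one scale for the whole tensor, the smallest values fall below the smallest E4M3 subnormal and round to zero, whereas per-block scales keep every value within E4M3's rounding error of about 6%.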

Deployment Tools and Compatibility

At the time of release, the Hugging Face Transformers library cannot load these FP8 weights directly. Instead, deployment is recommended through vLLM or SGLang, both of which offer ready-to-use scripts and recipes for serving these models. vLLM’s guidelines recommend FP8 checkpoints for memory efficiency on NVIDIA H100 GPUs, underscoring the practical benefit of this quantization strategy.
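As a sketch of the client side, the Python snippet below builds an OpenAI-style chat request that mixes an image and a text prompt, the format accepted by vLLM's OpenAI-compatible server. It assumes a server already started with something like `vllm serve Qwen/Qwen3-VL-8B-Instruct-FP8` on `localhost:8000`; the model ID, port, and image URL are placeholders to adapt, and only the standard library is used.

```python
import json
import urllib.request

# Assumptions: a vLLM OpenAI-compatible server was started with something like
#   vllm serve Qwen/Qwen3-VL-8B-Instruct-FP8
# and is listening on localhost:8000.
BASE_URL = "http://localhost:8000/v1"


def build_chat_request(model: str, image_url: str, prompt: str, max_tokens: int = 256) -> dict:
    """Build an OpenAI-style chat request mixing one image and one text prompt."""
    return {
        "model": model,
        "max_tokens": max_tokens,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "image_url", "image_url": {"url": image_url}},
                    {"type": "text", "text": prompt},
                ],
            }
        ],
    }


def post_chat(payload: dict, base_url: str = BASE_URL) -> dict:
    """POST the payload to /chat/completions and return the decoded JSON response."""
    req = urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read().decode("utf-8"))


payload = build_chat_request(
    "Qwen/Qwen3-VL-8B-Instruct-FP8",
    "https://example.com/receipt.png",  # placeholder image URL
    "Read the total amount on this receipt.",
)
print(json.dumps(payload, indent=2))
```

The snippet only builds and prints the request, so it can be inspected offline; once a server is running, `post_chat(payload)["choices"][0]["message"]["content"]` should hold the model's answer.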

Summary of Key Highlights

- Alibaba’s Qwen3-VL introduces dense 4B and 8B models, each available in Instruct and Thinking modes, with FP8 quantized checkpoints for low-memory environments.

- FP8 quantization employs a block size of 128, achieving near-BF16 accuracy; direct support in Transformers is pending, with vLLM and SGLang as current deployment options.

- The models maintain a robust capability set, including a native context window of 256K tokens expandable to 1 million, 32-language OCR, spatial grounding, video reasoning, and GUI/agent control.

- Parameter counts are approximately 4.83 billion for Qwen3-VL-4B and 8.77 billion for the Qwen3-VL-8B Instruct variant.
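The parameter counts above translate directly into weight memory: BF16 stores 2 bytes per parameter and FP8 stores 1. The sketch below does that arithmetic; it deliberately ignores FP8 scale tensors, any layers that may be kept in higher precision, activations, and the KV cache, so real VRAM use will be higher.

```python
# Approximate parameter counts quoted in the summary above.
PARAMS = {"Qwen3-VL-4B": 4.83e9, "Qwen3-VL-8B-Instruct": 8.77e9}
BYTES_PER_PARAM = {"BF16": 2, "FP8": 1}


def weight_gb(num_params: float, dtype: str) -> float:
    """Weight storage in decimal gigabytes (1 GB = 1e9 bytes), ignoring scale tensors."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


for name, n in PARAMS.items():
    print(f"{name}: BF16 ~ {weight_gb(n, 'BF16'):.2f} GB, FP8 ~ {weight_gb(n, 'FP8'):.2f} GB")
```

For the 8B Instruct model this gives about 17.54 GB of weights in BF16 versus about 8.77 GB in FP8, roughly halving the weight footprint.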

Insights and Implications for Deployment

Alibaba’s decision to release dense Qwen3-VL 4B and 8B models with FP8 quantization and dual task profiles reflects a pragmatic approach to AI deployment. With smaller VRAM footprints and explicit serving instructions, these models suit single-GPU setups and edge devices where resources are tight. Because they keep advanced features such as the extended context window, multilingual OCR, and spatial reasoning, users retain the same feature set despite the smaller model size. This balance between efficiency and capability is valuable for developers and organizations that want to integrate multimodal AI into real-world applications without large hardware budgets.