OpenAI has unveiled the openai/circuit-sparsity model on Hugging Face alongside the openai/circuit_sparsity toolkit available on GitHub. This release encompasses the models and circuit frameworks detailed in their latest research, providing open-source tools for exploring weight-sparse transformer architectures.

Understanding Weight-Sparse Transformers

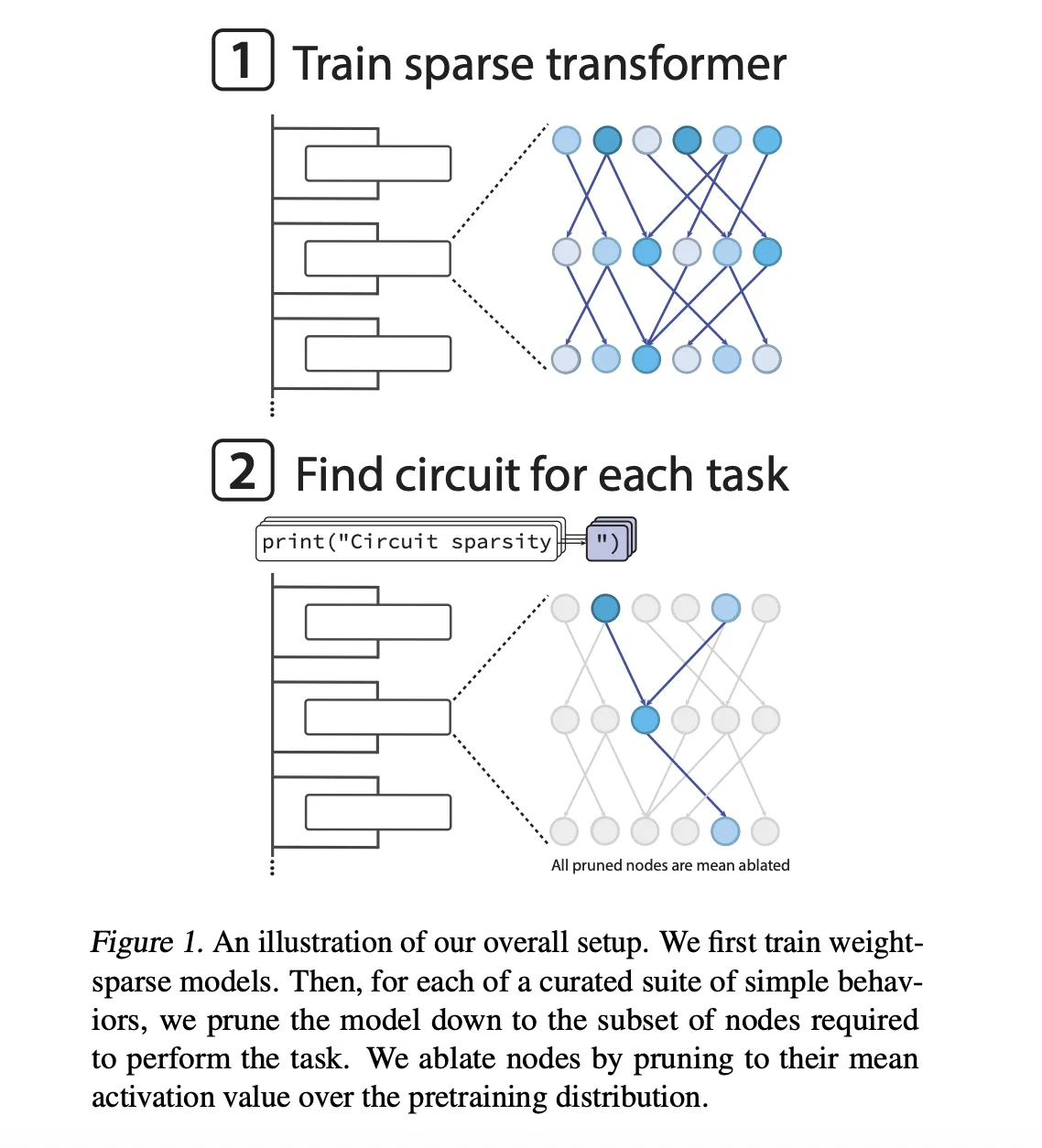

The released models are decoder-only transformers modeled on the GPT-2 architecture and trained on Python code. Sparsity is not applied by pruning after training; it is built into the optimization itself. After each AdamW step, only the largest-magnitude entries of every weight matrix and bias, including the token embeddings, are kept, and all other entries are set to zero. As a result, every matrix has the same fraction of nonzero elements.
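The projection step described above can be sketched in a few lines. This is a minimal NumPy illustration, not the released training code: the function name `keep_top_k_magnitude` and the toy matrix are assumptions made for the example, and the AdamW update itself is omitted.

```python
import numpy as np

def keep_top_k_magnitude(weights: np.ndarray, k: int) -> np.ndarray:
    """Return a copy of `weights` with all but the k largest-magnitude entries zeroed."""
    if k <= 0:
        return np.zeros_like(weights)
    if k >= weights.size:
        return weights.copy()
    flat = np.abs(weights).ravel()
    # After partitioning, the last k positions hold the indices of the k largest magnitudes.
    top_idx = np.argpartition(flat, flat.size - k)[flat.size - k:]
    mask = np.zeros(flat.size, dtype=bool)
    mask[top_idx] = True
    return np.where(mask.reshape(weights.shape), weights, 0.0)

# Toy stand-in for a weight matrix right after an optimizer step.
w = np.array([[0.9, -0.1, 0.05],
              [-1.2, 0.3, 0.02]])
w_sparse = keep_top_k_magnitude(w, k=2)
# Only 0.9 and -1.2 survive; every other entry is zero.
```

Applying the same `k / weights.size` ratio to each matrix is what would give every matrix the same nonzero fraction in this sketch.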

At the extreme end, some models keep only about 0.1% of their weights (roughly 1 in 1,000) nonzero. The team also enforces moderate activation sparsity: roughly 25% of node activations are nonzero, where nodes span residual reads and writes, attention heads, and MLP neurons.

Sparsity is introduced gradually during training: the model starts fully dense, and the budget of nonzero weights is progressively reduced until it reaches the target sparsity. This setup lets the team scale model width while holding the number of nonzero parameters fixed, which makes it possible to study the trade-off between interpretability and performance in detail. Remarkably, circuits extracted from these sparse models are approximately 16 times smaller than those extracted from dense models at the same pretraining loss.
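A schedule of this kind might look like the sketch below. The text only says the budget shrinks from dense to the target, so the linear decay, the function name `nonzero_fraction`, and the step counts are all assumptions chosen for illustration.

```python
def nonzero_fraction(step: int, anneal_steps: int, target_fraction: float) -> float:
    """Fraction of weights allowed to be nonzero at `step` (linear decay, an assumption)."""
    if anneal_steps <= 0 or step >= anneal_steps:
        return target_fraction
    progress = max(step, 0) / anneal_steps
    # Interpolate from fully dense (1.0) down to the target fraction.
    return 1.0 + progress * (target_fraction - 1.0)

# Dense at step 0, halfway between dense and target mid-anneal, then held at the target.
schedule = [nonzero_fraction(s, anneal_steps=1000, target_fraction=0.001)
            for s in (0, 500, 1000, 2000)]
```

The fraction returned at each step would then set how many entries the magnitude-based projection keeps in every matrix.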

Defining Sparse Circuits in Neural Networks

A core concept introduced is the sparse circuit, which represents the model’s internal computation at a fine-grained level. Each node corresponds to an individual neuron, attention channel, or residual channel, while edges represent nonzero connections between these nodes. Circuit size is reported as the geometric mean of the edge count across tasks.
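As a small worked example of that size metric, the sketch below computes a geometric mean over per-task edge counts. The task names and counts are hypothetical placeholders, not figures from the release.

```python
import math

def geometric_mean(values):
    """Geometric mean of positive numbers, computed in log space for stability."""
    if not values:
        raise ValueError("need at least one value")
    return math.exp(sum(math.log(v) for v in values) / len(values))

# Hypothetical per-task edge counts; these are not figures from the release.
edge_counts = {"task_a": 10, "task_b": 40, "task_c": 160}
circuit_size = geometric_mean(list(edge_counts.values()))
```

Compared with an arithmetic mean, the geometric mean keeps a single unusually large circuit from dominating the summary.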

To evaluate these circuits, the researchers designed 20 binary next-token prediction tasks based on Python code. Each task challenges the model to select between two possible token completions differing by a single token. Examples include:

- single_double_quote: Predicting whether a string should close with a single or double quote.

- bracket_counting: Determining whether to output one or two closing brackets based on list nesting depth.

- set_or_string: Tracking whether a variable was initialized as a set or a string.
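One way to score a binary task like these is a cross-entropy restricted to the two candidate tokens. The sketch below assumes exactly that; the function name `binary_task_loss` and the restriction to two logits are illustrative assumptions, not a confirmed detail of the release.

```python
import math

def binary_task_loss(logit_correct: float, logit_wrong: float) -> float:
    """Cross-entropy over two candidates: -log softmax(correct)."""
    margin = logit_correct - logit_wrong
    # Numerically stable form of log(1 + exp(-margin)).
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))

# No preference between the two completions gives a loss of log(2).
undecided = binary_task_loss(0.0, 0.0)
# A clear preference for the correct completion gives a loss near zero.
confident = binary_task_loss(5.0, 0.0)
```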

For each task, the model undergoes node-level pruning to isolate the minimal circuit that achieves a target loss of 0.15. Nodes removed during pruning are mean-ablated, meaning their activations are fixed to the average activation observed during pretraining. A binary mask optimized via a straight-through estimator balances task accuracy against circuit compactness.
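Mean ablation under a binary node mask can be written as a single interpolation. The following NumPy sketch uses toy values and a fixed mask; the name `mean_ablate` is an assumption, and learning the mask with a straight-through estimator is not shown.

```python
import numpy as np

def mean_ablate(activations: np.ndarray, keep_mask: np.ndarray,
                mean_activations: np.ndarray) -> np.ndarray:
    """Keep nodes where keep_mask is 1; pin the rest to their mean activation."""
    keep = keep_mask.astype(activations.dtype)
    return keep * activations + (1.0 - keep) * mean_activations

acts = np.array([[0.5, -2.0, 3.0],
                 [1.5,  0.0, 1.0]])      # (tokens, nodes), toy values
node_means = np.array([1.0, -1.0, 2.0])  # stand-in for pretraining averages
mask = np.array([1, 0, 1])               # node 1 is pruned
ablated = mean_ablate(acts, mask, node_means)
# Column 1 becomes -1.0 on every token; columns 0 and 2 are unchanged.
```

Because pruned nodes still emit a constant, downstream nodes see typical input statistics rather than an artificial zero.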

Illustrative Circuits: From Quote Closure to Bracket Counting

The most concise circuit corresponds to the single_double_quote task, where the model must correctly close a string with the appropriate quote type. This circuit comprises just 12 nodes and 9 edges.

The mechanism unfolds in two stages within the network:

- In the first MLP layer (0.mlp), two neurons specialize: one acts as a quote detector responding to both single and double quotes, while the other functions as a quote type classifier, distinguishing between the two.

- Later, an attention head in layer 10.attn uses the quote detector as a key and the quote type classifier as a value. The final token’s constant positive query enables the attention output to replicate the correct quote type, ensuring proper string closure.
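The two stages above can be mimicked with a toy single-head attention readout. This is a hand-built illustration rather than the extracted circuit: the character-level tokens, the +1/-1 encoding of quote type, and the `query_scale` value are all assumptions.

```python
import numpy as np

QUOTE_TYPE = {'"': 1.0, "'": -1.0}  # hypothetical encoding: double = +1, single = -1

def close_quote(tokens, query_scale=5.0):
    """Toy attention head: keys come from a quote detector, values from a quote classifier."""
    detector = np.array([1.0 if t in QUOTE_TYPE else 0.0 for t in tokens])
    quote_type = np.array([QUOTE_TYPE.get(t, 0.0) for t in tokens])
    scores = query_scale * detector          # constant positive query times key
    weights = np.exp(scores - scores.max())
    weights /= weights.sum()                 # softmax over context positions
    readout = float(weights @ quote_type)    # attention output at the final token
    # With no quote in the context the readout is 0 and the toy defaults to a single quote.
    return '"' if readout > 0 else "'"

double_case = close_quote(list('x = "abc'))
single_case = close_quote(list("x = 'abc"))
```

The constant query means the head attends wherever the detector fires, so the sign of the value at that position decides which quote is emitted.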

The bracket_counting task yields a slightly larger but algorithmically clear circuit. The embedding for the opening bracket [ activates several residual channels serving as bracket detectors. A layer 2 attention head averages these activations across the context, effectively computing the nesting depth and storing it in a residual channel. A subsequent attention head applies a threshold to this depth, activating a nested list close channel only when necessary, prompting the model to output the correct number of closing brackets.
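The averaging-then-thresholding idea can be illustrated with a toy function. The token lists, the `threshold` value, and the name `closing_brackets` are hypothetical, and the uniform average below stands in for the attention head.

```python
import numpy as np

def closing_brackets(tokens, threshold=0.25):
    """Toy depth estimate: average a '[' detector over the context, then threshold it."""
    detector = np.array([1.0 if t == "[" else 0.0 for t in tokens])
    depth_signal = detector.mean()   # uniform attention acts as an average over positions
    return "]]" if depth_signal > threshold else "]"

flat_list = closing_brackets(["x", "=", "[", "1", ",", "2"])    # one '[' in six tokens
nested_list = closing_brackets(["x", "=", "[", "[", "1", ","])  # two '[' in six tokens
```

In this toy version the averaged signal shrinks as the context grows, so a fixed threshold is only meaningful for contexts of similar length.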

Another example, set_or_string_fixedvarname, demonstrates how the model tracks the type of a variable named current. One attention head copies the embedding of current into either the set() or "" token embedding. A later head uses this embedding as both query and key to retrieve the relevant information when deciding between .add and += operations.

Bridging Sparse and Dense Models

To connect sparse models with their dense counterparts, the team developed a novel bridge mechanism. Each bridge consists of an encoder-decoder pair that translates dense activations into sparse activations and vice versa at every sublayer. The encoder applies a linear transformation followed by an AbsTopK activation, while the decoder is a linear map.
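A bridge of this shape can be sketched with NumPy as follows. The weights here are random and untrained, biases are omitted, and the dimensions and `k` are hypothetical; only the structure (linear map plus AbsTopK for the encoder, a linear map for the decoder) follows the description above.

```python
import numpy as np

def abs_top_k(x: np.ndarray, k: int) -> np.ndarray:
    """Keep the k largest-magnitude entries of a 1-D vector, zero the rest."""
    out = np.zeros_like(x)
    if k > 0:
        idx = np.argsort(np.abs(x))[-k:]
        out[idx] = x[idx]
    return out

rng = np.random.default_rng(0)
d_dense, d_sparse, k = 8, 32, 8   # hypothetical sizes

W_enc = rng.normal(size=(d_sparse, d_dense))  # dense -> sparse
W_dec = rng.normal(size=(d_dense, d_sparse))  # sparse -> dense

def encode(dense_act: np.ndarray) -> np.ndarray:
    """Linear map followed by AbsTopK."""
    return abs_top_k(W_enc @ dense_act, k)

def decode(sparse_act: np.ndarray) -> np.ndarray:
    """Plain linear map back to the dense space."""
    return W_dec @ sparse_act

dense_act = rng.normal(size=d_dense)
sparse_act = encode(dense_act)
dense_back = decode(sparse_act)
```

With trained weights, editing one entry of `sparse_act` before calling `decode` would be the natural way to push an intervention on a sparse feature back into the dense model.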

Training incorporates additional loss terms to ensure that hybrid sparse-dense forward passes closely replicate the behavior of the original dense model. This framework enables researchers to manipulate interpretable sparse features, such as the quote type classifier channel, and propagate these changes into dense models, facilitating controlled behavioral interventions.

Released Resources and How to Use Them

OpenAI has made available the openai/circuit-sparsity model on Hugging Face, a 0.4 billion parameter transformer tagged with custom_code and corresponding to the csp_yolo2 variant from their research. This model underpins qualitative demonstrations like bracket counting and variable binding. The entire codebase, including model checkpoints, task definitions, and a circuit visualization interface, is accessible on GitHub under the Apache 2.0 license.

    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    # Short Python snippet for the model to continue.
    PROMPT = "def square_sum(xs):\n    return sum(x * x for x in xs)\nsquare_sum([1, 2, 3])\n"

    # The repository is tagged custom_code, so its modeling code is loaded
    # with trust_remote_code=True.
    tokenizer = AutoTokenizer.from_pretrained("openai/circuit-sparsity", trust_remote_code=True)
    model = AutoModelForCausalLM.from_pretrained(
        "openai/circuit-sparsity",
        trust_remote_code=True,
        torch_dtype="auto",
    )
    model.to("cuda" if torch.cuda.is_available() else "cpu")

    # Tokenize the prompt and move the input IDs to the model's device.
    inputs = tokenizer(PROMPT, return_tensors="pt", add_special_tokens=False)["input_ids"].to(model.device)

    # Sample a continuation without tracking gradients.
    with torch.no_grad():
        output = model.generate(
            inputs,
            max_new_tokens=64,
            do_sample=True,
            temperature=0.8,
            top_p=0.95,
            return_dict_in_generate=False,
        )

    print(tokenizer.decode(output[0], skip_special_tokens=True))

Summary of Key Insights

- Integrated Weight Sparsity: Unlike traditional pruning, sparsity is enforced during training, resulting in models where most weights are zero and each neuron connects sparsely.

- Compact, Task-Specific Circuits: Circuits are defined at the granularity of individual neurons and channels, often comprising only tens of nodes and edges for specific binary Python token prediction tasks.

- Explicit Algorithmic Implementations: Tasks such as quote closure, bracket counting, and variable type tracking correspond to fully instantiated circuits that implement clear computational algorithms.

- Open Access to Models and Tools: The 0.4B parameter model and accompanying codebase, including visualization tools, are publicly available under Apache 2.0, fostering further research and experimentation.

- Bridging Sparse and Dense Representations: Encoder-decoder bridges enable mapping between sparse and dense activations, allowing controlled interventions and deeper understanding of model internals.