While Large Language Models (LLMs) dominate most AI discussions, the current artificial intelligence landscape includes a diverse set of specialized model architectures. These architectures are changing how machines perceive their environment, plan, carry out tasks, and run efficiently even on compact devices. Each one addresses a different facet of artificial intelligence, and together they drive the evolution of next-generation AI technologies.

In this overview, we delve into five pivotal AI model categories: Large Language Models (LLMs), Vision-Language Models (VLMs), Mixture of Experts (MoE), Large Action Models (LAMs), and Small Language Models (SLMs).

Understanding Large Language Models (LLMs)

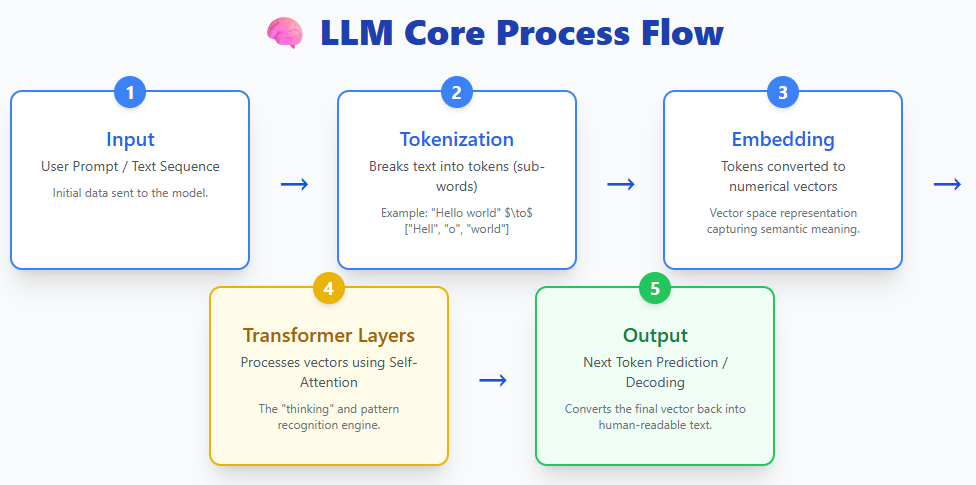

Large Language Models process textual input by segmenting it into tokens, converting these tokens into vector embeddings, and passing them through multiple transformer layers to generate coherent text outputs. Prominent examples include ChatGPT, Claude, Gemini, and LLaMA, all of which utilize this foundational approach.
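
The pipeline described above (tokens, then embeddings, then attention-based layers) can be sketched in a few lines of plain Python. This is a toy illustration under stated assumptions, not how any production model is built: the whitespace tokenizer, the four-word `vocab`, the random embedding `table`, and the single attention head without learned weights are all hypothetical stand-ins. Real LLMs use subword tokenizers, learned projections, and many stacked layers.

```python
import math
import random

def tokenize(text, vocab):
    """Toy whitespace tokenizer: map each word to an integer id (0 = unknown)."""
    return [vocab.get(word, 0) for word in text.lower().split()]

def embed(token_ids, table):
    """Look up one embedding vector per token id."""
    return [table[i] for i in token_ids]

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Single attention head with a causal mask and no learned projections."""
    dim = len(vectors[0])
    out = []
    for i, query in enumerate(vectors):
        visible = vectors[: i + 1]  # position i only sees positions 0..i
        scores = [sum(a * b for a, b in zip(query, key)) / math.sqrt(dim)
                  for key in visible]
        weights = softmax(scores)
        out.append([sum(w * v[d] for w, v in zip(weights, visible))
                    for d in range(dim)])
    return out

# Hypothetical vocabulary and randomly initialised embedding table.
vocab = {"<unk>": 0, "the": 1, "cat": 2, "sat": 3}
rng = random.Random(0)
table = [[rng.uniform(-1, 1) for _ in range(4)] for _ in vocab]

ids = tokenize("The cat sat", vocab)        # one id per word
hidden = self_attention(embed(ids, table))  # one 4-dim vector per token
```

Each output vector mixes information from the current token and the tokens before it, which is the basic mechanism that lets transformer layers capture context.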

Fundamentally, LLMs are deep neural networks trained on vast corpora of text, enabling them to grasp linguistic nuances, produce human-like responses, summarize content, write code, and answer complex queries. Their transformer-based architecture excels at managing lengthy sequences and capturing intricate language patterns.

Currently, LLMs power numerous consumer-facing applications and digital assistants, such as OpenAI’s ChatGPT, Anthropic’s Claude, Microsoft Copilot, and Google’s Gemini. Model families such as Meta’s LLaMA series and Google’s earlier BERT and PaLM models have served as foundations for many other systems. Their adaptability and user-friendly interfaces have cemented their role as the backbone of contemporary AI solutions.

Vision-Language Models (VLMs): Bridging Visual and Textual Intelligence

Vision-Language Models merge two distinct processing streams:

- An image or video encoder that interprets visual data

- A text encoder that comprehends language

These inputs converge within a multimodal processor, culminating in a language model that produces the final output. Notable VLMs include GPT-4V, Gemini Pro Vision, and LLaVA.

Essentially, VLMs extend the capabilities of large language models by incorporating visual understanding. By integrating visual and textual representations, they can analyze images, interpret documents, answer questions about visual content, and describe videos.
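
One common way to combine the two streams is to project image features into the language model's embedding space and place them in the same sequence as the text embeddings. The sketch below assumes that design; the names `project` and `fuse`, the tiny dimensions, and the hand-written `weight` matrix are hypothetical, and specific VLMs may fuse modalities differently (for example with cross-attention).

```python
def project(features, weight):
    """Linear projection: map each feature vector to the text embedding size.

    `weight` has shape (input_dim, output_dim).
    """
    columns = list(zip(*weight))
    return [[sum(f * w for f, w in zip(vec, col)) for col in columns]
            for vec in features]

def fuse(image_features, text_embeddings, weight):
    """Prepend projected visual tokens to the text token embeddings."""
    visual_tokens = project(image_features, weight)
    return visual_tokens + text_embeddings

# Hypothetical data: 2 image patches (3-dim) and 3 text tokens (2-dim).
image_features = [[1.0, 0.0, 2.0], [0.5, 0.5, 0.5]]
weight = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # maps 3 dims to 2 dims
text_embeddings = [[0.1, 0.2], [0.3, 0.4], [0.5, 0.6]]

sequence = fuse(image_features, text_embeddings, weight)
# `sequence` now holds 5 vectors of equal size that a language model
# could process as one input.
```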

Traditional computer vision systems are typically designed for narrow tasks, such as distinguishing between different animal species or extracting text from images, and lack the ability to generalize beyond their training scope. Introducing new categories or tasks often requires retraining from the ground up.

In contrast, VLMs overcome these constraints by training on extensive datasets comprising images, videos, and text. This enables them to perform a wide range of vision-related tasks without additional training, simply by following natural language instructions. Their capabilities span image captioning, optical character recognition (OCR), visual reasoning, and complex document analysis, all achieved zero-shot.

This versatility positions Vision-Language Models as one of the most significant breakthroughs in AI today.

Mixture of Experts (MoE): Enhancing Efficiency with Specialized Subnetworks

Mixture of Experts models build upon the transformer framework by replacing the single feed-forward network in each layer with multiple smaller expert networks. For each token processed, only a select few experts are activated, dramatically improving computational efficiency while maintaining vast model capacity.

In conventional transformers, every token passes through the same feed-forward network, engaging all parameters uniformly. MoE architectures introduce a routing mechanism that selects the top-K experts to handle each token, enabling sparse computation. This means that although MoE models may contain tens of billions of parameters, only a fraction is utilized per token.
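
A minimal sketch of top-K routing, assuming the router has already produced one score per expert for the current token. In a real model those scores come from a learned linear layer and the experts are feed-forward networks; here `router_logits` is passed in by hand and the experts are hypothetical scaling functions.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(router_logits, k):
    """Pick the k highest-scoring experts and renormalise their weights."""
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    weights = softmax([router_logits[i] for i in top])
    return list(zip(top, weights))

def moe_layer(token, router_logits, experts, k=2):
    """Run only the selected experts and sum their weighted outputs."""
    routes = top_k_route(router_logits, k)
    output = [0.0] * len(token)
    for index, weight in routes:
        expert_out = experts[index](token)  # unselected experts never run
        output = [o + weight * e for o, e in zip(output, expert_out)]
    return output, [index for index, _ in routes]

# Four hypothetical experts; each just scales its input by a constant.
experts = [lambda x, s=s: [s * v for v in x] for s in (1.0, 2.0, 3.0, 4.0)]
token = [1.0, -1.0]
router_logits = [0.1, 2.0, -1.0, 2.0]

output, used = moe_layer(token, router_logits, experts, k=2)
```

With these inputs, only experts 1 and 3 are evaluated; experts 0 and 2 contribute no computation for this token, which is the source of the sparsity.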

For instance, the Mixtral 8×7B model boasts over 46 billion parameters, yet each token is processed using approximately 13 billion parameters.

This approach significantly lowers inference costs. Instead of increasing model depth or width, which raises the number of floating-point operations (FLOPs) required per token, MoE models scale by adding more experts, expanding capacity with little increase in per-token computational load. Consequently, MoEs are often described as delivering “larger brains with reduced runtime expenses.”
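
The scaling behaviour can be checked with simple arithmetic. The sketch below uses made-up sizes (in billions of parameters) rather than figures from any real model, and it ignores the router's own small parameter count: `shared` stands for everything outside the experts, such as attention layers and embeddings.

```python
def moe_param_counts(shared, per_expert, num_experts, top_k):
    """Return (total, active-per-token) parameter counts for a toy MoE."""
    total = shared + num_experts * per_expert
    active = shared + top_k * per_expert
    return total, active

# Hypothetical sizes in billions of parameters.
for n in (8, 16, 32):
    total, active = moe_param_counts(shared=2, per_expert=1,
                                     num_experts=n, top_k=2)
    print(f"{n} experts: total={total}B, active per token={active}B")
```

Total capacity grows with the number of experts, while the parameters touched per token depend only on `shared` and `top_k`.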

Large Action Models (LAMs): From Understanding to Autonomous Execution

Large Action Models transcend mere text generation by converting user intent into concrete actions. Rather than simply responding to queries, LAMs interpret user goals, decompose tasks into manageable steps, plan sequences of actions, and autonomously execute them in digital or physical environments.

A typical LAM workflow involves:

- Perception: Interpreting user inputs

- Intent Recognition: Determining the user’s objectives

- Task Decomposition: Segmenting goals into actionable components

- Action Planning and Memory: Sequencing actions based on context and past interactions

- Execution: Performing tasks independently
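
The five stages above can be sketched as a small pipeline. Everything in this example is a hypothetical stand-in: a real LAM would use learned models for intent recognition and planning and would call actual UI or API actions, whereas this `Agent` uses keyword matching, a hard-coded plan table, and a list that merely records what was "executed".

```python
from dataclasses import dataclass, field

@dataclass
class Agent:
    # Memory of actions completed in earlier interactions.
    memory: list = field(default_factory=list)

    def perceive(self, raw_input):
        """Perception: normalise the raw user input."""
        return raw_input.strip().lower()

    def recognize_intent(self, text):
        """Intent recognition: toy keyword match instead of a model."""
        return "book_appointment" if "book" in text else "unknown"

    def decompose(self, intent):
        """Task decomposition: look up the steps for a known intent."""
        plans = {
            "book_appointment": ["open_calendar", "find_free_slot", "create_event"],
        }
        return plans.get(intent, [])

    def plan(self, steps):
        """Action planning and memory: skip steps already completed."""
        return [step for step in steps if step not in self.memory]

    def execute(self, actions):
        """Execution: record each action (stand-in for a real UI or API call)."""
        for action in actions:
            self.memory.append(action)
        return actions

    def run(self, raw_input):
        text = self.perceive(raw_input)
        intent = self.recognize_intent(text)
        steps = self.decompose(intent)
        return self.execute(self.plan(steps))

agent = Agent()
performed = agent.run("  Book a dentist appointment ")
```

Calling `agent.run` a second time with the same request performs no new actions, because the planning step consults memory first.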

Examples of LAM implementations include Rabbit R1, Microsoft’s UFO framework, and Claude Computer Use, which can operate software applications, navigate user interfaces, and complete complex workflows on behalf of users.

Trained on extensive datasets capturing real-world user behaviors, LAMs possess the capability to perform multi-step operations such as booking appointments, filling out forms, organizing digital files, and managing intricate processes. This evolution transforms AI from a passive assistant into an active agent capable of dynamic, real-time decision-making.

Small Language Models (SLMs): Compact Intelligence for Edge Devices

Small Language Models are streamlined versions of language models optimized for deployment on edge devices, smartphones, and other environments with limited computational resources. They employ efficient tokenization methods, refined transformer architectures, and aggressive quantization techniques to enable on-device AI processing. Examples include Phi-3, Gemma, Mistral 7B, and LLaMA 3.2 1B.
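
To illustrate the quantization idea, here is a minimal sketch of symmetric 8-bit quantization over a short list of weights. It is one simple scheme chosen for clarity, not necessarily the one used by any of the models named above; the function names and sample weights are hypothetical.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    scale = max(abs(w) for w in weights) / 127 or 1.0  # avoid a zero scale
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]

weights = [0.5, -1.27, 0.0, 1.0]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

# Each restored weight differs from the original by at most half a step.
max_error = max(abs(w, ) if False else abs(w - r) for w, r in zip(weights, restored))
```

Storing one byte per weight plus a single scale factor, instead of two or four bytes per weight, is what shrinks the model, at the cost of a small rounding error.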

Unlike their larger counterparts, which may contain hundreds of billions of parameters, SLMs typically range from a few million to several billion parameters. Despite their reduced size, they maintain the ability to comprehend and generate natural language, supporting applications such as conversational agents, summarization, translation, and task automation without relying on cloud infrastructure.
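
A rough way to see why parameter count and precision matter on small devices is to estimate the memory needed just to hold the weights. This back-of-the-envelope sketch counts weights only and ignores activations, caches, and runtime overhead; the function name is hypothetical and 1 GB is taken as 10^9 bytes.

```python
def weight_memory_gb(num_params, bits_per_weight):
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9

for bits in (16, 8, 4):
    size = weight_memory_gb(1_000_000_000, bits)
    print(f"1B parameters at {bits}-bit: {size:.1f} GB")
```

By this estimate a 1-billion-parameter model needs about 2 GB at 16-bit precision and about 0.5 GB at 4-bit, which is the difference between straining and comfortably fitting on many phones.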

Due to their minimal memory and computational demands, SLMs are particularly suited for:

- Mobile applications

- Internet of Things (IoT) and edge computing devices

- Privacy-sensitive or offline scenarios

- Low-latency use cases where cloud communication introduces delays

SLMs exemplify the growing trend toward fast, private, and cost-effective AI, bringing sophisticated language understanding directly to personal and embedded devices.