How can artificial intelligence systems be designed to continuously acquire new knowledge without losing previously learned information or requiring complete retraining? Researchers at Google have proposed an innovative machine learning technique called Nested Learning. This approach conceptualizes a model not as a single monolithic network optimized by one overarching process, but rather as a hierarchy of smaller, interlinked optimization tasks. The primary aim is to combat the issue of catastrophic forgetting and advance large-scale models toward continual learning, mirroring the way biological brains retain and adapt memories over time.

Introducing Nested Learning: A Hierarchical Optimization Framework

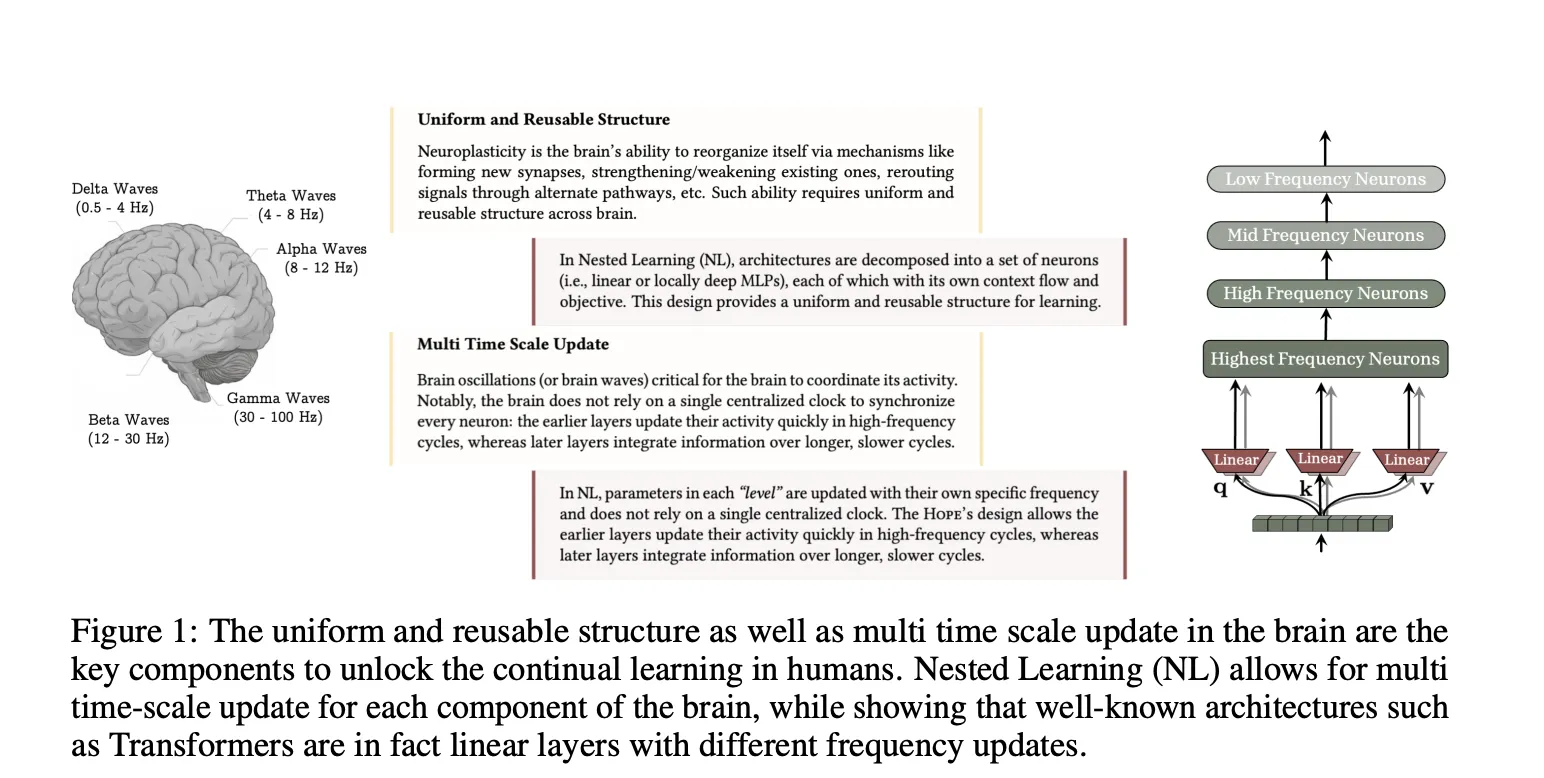

In their study, Google researchers recast complex neural networks as collections of interconnected optimization problems that are either nested within one another or run in parallel. Each sub-problem maintains its own context flow (a sequence of inputs, gradients, or internal states) and updates at its own frequency.

Unlike traditional training paradigms that treat a model as a flat stack of layers optimized by a single algorithm, Nested Learning orders parameters by how frequently they are updated. Parameters that change rapidly occupy the innermost levels, while those updated less often form the outer levels. This layered structure, termed a Neural Learning Module, lets each level encode its own context flow into its parameters. The framework also subsumes familiar techniques such as backpropagation in multilayer perceptrons (MLPs), linear attention, and standard optimizers, interpreting each of them as a form of associative memory.

Within this context, associative memory is defined as any function that maps keys to values and is trained with an internal objective. The researchers demonstrate that backpropagation itself can be reframed as a single-step gradient descent that learns to associate inputs with local surprise signals, that is, the gradient of the loss with respect to the output.
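To make the reframing concrete, here is a minimal sketch for a single linear layer. It assumes a squared-error loss and uses hypothetical helper names (`surprise`, `memory_write`, `numerical_grad`) that do not come from the study. It checks that a gradient step on the inner objective ⟨Wx, δ⟩, where δ is the surprise signal, coincides with an ordinary backpropagation step on the loss.

```python
def matvec(W, x):
    """Apply a linear layer y = W x (lists of floats, no bias)."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def loss(W, x, target):
    """Assumed outer objective: 0.5 * ||W x - target||^2."""
    return 0.5 * sum((yi - ti) ** 2 for yi, ti in zip(matvec(W, x), target))

def surprise(W, x, target):
    """Local surprise signal: gradient of the loss with respect to the output y."""
    return [yi - ti for yi, ti in zip(matvec(W, x), target)]

def memory_write(W, x, delta, lr):
    """One gradient step on the inner objective <W x, delta>.

    The gradient of that objective with respect to W is the outer product
    delta x^T, so the layer stores the pair (key = x, value = delta).
    """
    return [[w - lr * d * xi for w, xi in zip(row, x)] for row, d in zip(W, delta)]

def numerical_grad(W, x, target, eps=1e-6):
    """Central-difference gradient of the loss, used only as a cross-check."""
    grad = []
    for i in range(len(W)):
        row = []
        for j in range(len(W[0])):
            plus = [r[:] for r in W]
            minus = [r[:] for r in W]
            plus[i][j] += eps
            minus[i][j] -= eps
            row.append((loss(plus, x, target) - loss(minus, x, target)) / (2 * eps))
        grad.append(row)
    return grad

W = [[0.5, -0.2], [0.1, 0.3]]
x = [1.0, 2.0]
target = [1.0, 0.0]

delta = surprise(W, x, target)
analytic = [[d * xi for xi in x] for d in delta]   # outer product delta x^T
numeric = numerical_grad(W, x, target)
W_new = memory_write(W, x, delta, lr=0.1)
```

In this toy setting `analytic` and `numeric` agree up to floating-point error, and `loss(W_new, x, target)` is lower than `loss(W, x, target)`, which is what a backpropagation step would produce as well.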

Reimagining Optimizers as Deep Associative Memories

By viewing optimizers as learning modules, Nested Learning opens the door to designing them with more sophisticated internal objectives. For example, conventional momentum optimization can be seen as a linear associative memory that stores past gradients, trained using a dot product similarity measure. This approach, however, does not capture dependencies between sequential data points.
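The momentum reading can be sketched in a few lines. The snippet below assumes the inner objective is the negative dot product −⟨m, g⟩ plus a decay factor `beta`; the function names and the sign convention are illustrative choices, not taken from the study. Because the gradient of that objective does not depend on the current memory `m`, each write ignores what is already stored, which is one way to see why the memory cannot relate one gradient to the next.

```python
def inner_grad(m, g):
    """Gradient with respect to m of the inner objective -<m, g>.

    It equals -g regardless of m: the write never looks at stored content.
    """
    return [-gi for gi in g]

def momentum_write(m, g, beta, eta=1.0):
    """Decay the memory, then take one gradient step on the inner objective."""
    step = inner_grad(m, g)
    return [beta * mi - eta * si for mi, si in zip(m, step)]

grads = [[1.0, 0.0], [0.0, 2.0], [-1.0, 1.0]]   # made-up gradient sequence
beta = 0.9

m = [0.0, 0.0]
for g in grads:
    m = momentum_write(m, g, beta)

# The same state written as an exponentially decayed sum of past gradients.
closed_form = [
    sum(beta ** (len(grads) - 1 - t) * g[i] for t, g in enumerate(grads))
    for i in range(2)
]
```

Here `m` and `closed_form` match, confirming that the memory is a linear, decayed accumulation of the gradients it has seen.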

To address this limitation, the researchers replaced the similarity objective with an L2 regression loss applied to gradient features. This modification results in an update rule that better manages finite memory capacity and more effectively memorizes sequences of gradients. Extending this concept further, they generalized momentum memory from a linear mapping to a multilayer perceptron, creating what they call Deep Momentum Gradient Descent. In this model, the momentum state is generated by a neural memory and can be transformed through nonlinear functions such as the Newton-Schulz iteration. This perspective also encompasses the Muon optimizer as a particular instance.
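The effect of swapping the objective can be illustrated on a generic key-value memory. This is a sketch under stated assumptions rather than the update rule from the study: `hebbian_write` is a gradient step on a dot-product objective, `delta_write` is a gradient step on the L2 regression loss 0.5·‖Mk − v‖², and the key and values are made up. In the optimizer setting, the keys would be gradient features.

```python
def matvec(M, k):
    """Read from the memory: M k."""
    return [sum(m * kj for m, kj in zip(row, k)) for row in M]

def hebbian_write(M, k, v, lr=1.0):
    """Gradient step on -<M k, v>: add the outer product v k^T."""
    return [[m + lr * vi * kj for m, kj in zip(row, k)] for row, vi in zip(M, v)]

def delta_write(M, k, v, lr=1.0):
    """Gradient step on 0.5 * ||M k - v||^2: write only the prediction error."""
    pred = matvec(M, k)
    err = [vi - pi for vi, pi in zip(v, pred)]
    return [[m + lr * ei * kj for m, kj in zip(row, k)] for row, ei in zip(M, err)]

key = [1.0, 0.0]            # unit-norm key, written twice
old_value = [1.0, 1.0]
new_value = [3.0, -1.0]
empty = [[0.0, 0.0], [0.0, 0.0]]

H = hebbian_write(hebbian_write(empty, key, old_value), key, new_value)
D = delta_write(delta_write(empty, key, old_value), key, new_value)

hebbian_read = matvec(H, key)   # [4.0, 0.0]: old and new values are summed
delta_read = matvec(D, key)     # [3.0, -1.0]: the new value replaces the old one
```

With the regression loss, the write depends on what the memory already predicts for the key, so a stale entry is overwritten instead of piling up. That is the sense in which such a rule makes better use of a fixed-size memory.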

Continuum Memory System: A Spectrum of Temporal Memory Scales

Traditional sequence models typically separate memory into two categories: attention mechanisms serve as working memory for the current context window, while feedforward layers act as long-term memory, rarely updated after initial training. The Nested Learning team proposes a more nuanced model called the Continuum Memory System (CMS).

CMS consists of a chain of MLP blocks, each with its own update frequency and chunk size. When processing an input sequence, the blocks are applied one after another, and the parameters of block k are updated only once every C^(k) steps. Each block therefore compresses information over a different timescale, creating a continuum of memory ranging from short-term to long-term. A standard Transformer with a single feedforward block is the special case of CMS with only one such block (k = 1).
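The multi-rate schedule can be sketched with a toy stand-in. Everything below is an assumption made for illustration: each block is reduced to a single scalar parameter, every block sees the same input stream instead of the previous block's output, and the update rule (overwrite the parameter with the mean of the current chunk) is invented. Only the idea that block k updates once every C^(k) steps comes from the description above.

```python
class MemoryBlock:
    """Toy stand-in for one CMS block with a single scalar parameter."""

    def __init__(self, period):
        self.period = period     # C^(k): steps between parameter updates
        self.chunk = []          # inputs seen since the last update
        self.weight = 0.0        # hypothetical scalar parameter
        self.num_updates = 0

    def step(self, t, x):
        """Buffer input x at 1-indexed step t; update only at chunk boundaries."""
        self.chunk.append(x)
        if t % self.period == 0:
            # Assumed rule: compress the chunk into the parameter as its mean.
            self.weight = sum(self.chunk) / len(self.chunk)
            self.chunk = []
            self.num_updates += 1

periods = [1, 4, 16]                          # fast, medium, slow levels
blocks = [MemoryBlock(c) for c in periods]
stream = [float(t) for t in range(1, 17)]     # made-up inputs 1.0 .. 16.0

for t, x in enumerate(stream, start=1):
    for block in blocks:
        block.step(t, x)

update_counts = [b.num_updates for b in blocks]   # [16, 4, 1]
weights = [b.weight for b in blocks]              # [16.0, 14.5, 8.5]
```

After 16 steps the fast block reflects only the latest input, the medium block summarizes the last four inputs, and the slow block summarizes the whole window, which is the short-to-long-term spread the CMS design aims for.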

This approach transforms the concept of long-term memory from a static layer into a dynamic spectrum of memory levels, drawing parallels to multi-timescale synaptic consolidation processes observed in the brain, where different neural circuits learn and adapt at varying rates within a shared architecture.

HOPE: A Self-Optimizing Architecture Inspired by Titans

To demonstrate the practical viability of Nested Learning, the researchers developed HOPE, a self-referential sequence model that applies these principles to a recurrent architecture. HOPE builds upon Titans, a long-term memory framework where a neural memory module learns to store surprising events during inference, enhancing attention over distant past tokens.

While Titans operates with only two levels of parameter updates, which yields first-order in-context learning, HOPE introduces two key advancements. First, it is self-modifying: it can optimize its own memory through a recursive process, which in theory supports unlimited levels of in-context learning. Second, it incorporates Continuum Memory System blocks, enabling memory updates at multiple frequencies and scaling effectively to longer context windows.

Evaluating Performance: HOPE Versus Contemporary Models

The team assessed HOPE alongside several baseline models on language modeling and commonsense reasoning tasks at three parameter scales: 340 million, 760 million, and 1.3 billion. Evaluation metrics included perplexity on the WikiText (Wiki) and LAMBADA (LMB) datasets for language modeling, and accuracy on PIQA, HellaSwag, WinoGrande, ARC Easy, ARC Challenge, Social IQa, and BoolQ for reasoning.
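For readers unfamiliar with the language modeling metric, perplexity is the exponential of the average negative log-likelihood a model assigns to the correct tokens, so lower is better. The sketch below uses the standard definition with made-up probabilities; it is not the evaluation code used in the study.

```python
import math

def perplexity(token_probs):
    """exp(mean negative log-likelihood) over the probabilities of the true tokens."""
    nll = [-math.log(p) for p in token_probs]
    return math.exp(sum(nll) / len(nll))

# A model that always spreads probability evenly over 4 choices.
uniform = perplexity([0.25] * 8)

# A model that is usually confident in the correct token (made-up values).
confident = perplexity([0.9, 0.8, 0.95, 0.85])
```

The uniform model scores a perplexity of about 4, matching the number of equally likely choices, while the confident model scores closer to 1.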

Results indicate that HOPE consistently outperforms strong Transformer-based and recurrent architectures, demonstrating superior capabilities in language understanding, long-range reasoning, and continual learning. Comparative analyses included models like Transformer++, RetNet, Gated DeltaNet, TTT, Samba, and Titans, highlighting HOPE’s advancements in handling extended context and memory retention.

Summary of Core Insights

- Nested Learning conceptualizes models as hierarchies of nested optimization problems with varying update frequencies, directly addressing catastrophic forgetting in continual learning scenarios.

- This framework unifies architectural components and optimization processes by interpreting backpropagation, attention, and optimizers as associative memory modules that encode their own context flows.

- Deep optimizers within Nested Learning replace simplistic similarity objectives with richer losses like L2 regression and employ neural memories, resulting in more expressive and context-sensitive update mechanisms.

- The Continuum Memory System models memory as a layered spectrum of MLP blocks updating at different rates, enabling the formation of short-, medium-, and long-term memories rather than relying on a single static feedforward layer.

- The HOPE architecture, a self-modifying extension of Titans based on Nested Learning principles, achieves enhanced performance in language modeling, extended context reasoning, and continual learning compared to leading Transformer and recurrent models.

Final Thoughts

Nested Learning offers a compelling new perspective by integrating model architecture and optimization into a cohesive system of Neural Learning Modules. Innovations such as Deep Momentum Gradient Descent, the Continuum Memory System, and the HOPE architecture provide tangible pathways toward richer associative memories and more robust continual learning capabilities. This paradigm shift elevates continual learning from a peripheral concern to a central design principle in the development of advanced AI systems.