Meta FAIR has introduced the Code World Model (CWM), a 32-billion-parameter dense decoder-only large language model that brings world modeling to code generation. Unlike traditional models trained solely on static source code, CWM also learns from dynamic execution traces and extended agent-environment interactions, so it can model what code does when it runs, not only what it looks like.

Revolutionizing Code Learning Through Execution Prediction

CWM’s training process incorporates two extensive categories of observation-action sequences: (1) Python interpreter execution traces that capture the state of local variables after each line runs, and (2) agent-driven interactions within Dockerized code repositories, which include code edits, shell commands, and test results. This approach grounds the model in the semantics of code (how program state changes over time) rather than just its syntax.
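To make the first data category concrete, here is a minimal sketch of how line-by-line local-variable snapshots can be collected with Python's standard `sys.settrace` hook. The helper `trace_locals`, the example function `running_sum`, and the `(line offset, locals)` record layout are all hypothetical illustrations; the article does not describe CWM's actual trace format or collection tooling.

```python
import sys


def trace_locals(func, *args):
    """Run func(*args) and record (line_offset, locals) for each executed line."""
    steps = []
    code = func.__code__

    def tracer(frame, event, arg):
        if frame.f_code is not code:
            return None  # ignore frames other than the traced function
        if event == "line":
            # A "line" event fires just before the line runs, so each snapshot
            # shows the state left behind by the previously executed line.
            offset = frame.f_lineno - code.co_firstlineno
            steps.append((offset, dict(frame.f_locals)))
        elif event == "return":
            steps.append(("return", dict(frame.f_locals)))
        return tracer

    sys.settrace(tracer)
    try:
        result = func(*args)
    finally:
        sys.settrace(None)
    return result, steps


def running_sum(n):
    total = 0
    for i in range(n):
        total += i
    return total


result, steps = trace_locals(running_sum, 3)
for line, local_vars in steps:
    print(line, local_vars)
```

Each printed record pairs a line offset inside the function with the local variables visible at that point, which is the kind of state sequence a model could be trained to predict.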

To amass this rich dataset, the team developed executable repository images from thousands of GitHub projects. They then employed a specialized software-engineering agent, dubbed “ForagerAgent,” to explore multi-step trajectories within these environments. The dataset comprises approximately 3 million trajectories spanning around 10,000 images and 3,150 repositories, featuring variations such as mutate-fix and issue-fix scenarios.

Architecture and Extended Context Capabilities

CWM is a dense, decoder-only Transformer with no mixture-of-experts (MoE) components. It has 64 layers and uses grouped-query attention (GQA; 48 query heads, 8 key-value heads), SwiGLU activations, RMSNorm, and scaled rotary positional embeddings (RoPE). Its attention layers alternate between local sliding windows of 8k tokens and global windows of 131k tokens, giving the model a context length of up to 131k tokens. Training uses document-causal masking, so that tokens in a packed sequence attend only to earlier tokens from the same document.
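The masking rules described above can be illustrated with a toy example. The sketch below builds a boolean attention mask that combines causality, a per-document restriction, and a sliding window, and also shows the GQA head grouping implied by 48 query heads and 8 key-value heads. The function name `attention_mask`, the tiny sequence, and the window size of 2 are assumptions chosen for readability, not details of CWM's implementation.

```python
def attention_mask(doc_ids, window):
    """Return mask[q][k], True when query position q may attend to key position k.

    doc_ids: document index of each token in a packed training sequence.
    window:  sliding-window size in tokens (the query token itself counts).
    """
    n = len(doc_ids)
    return [
        [
            k <= q                        # causal: never attend to the future
            and doc_ids[k] == doc_ids[q]  # document-causal: stay inside one document
            and q - k < window            # sliding window: only the most recent tokens
            for k in range(n)
        ]
        for q in range(n)
    ]


# Two packed documents: tokens 0-3 belong to document 0, tokens 4-5 to document 1.
doc_ids = [0, 0, 0, 0, 1, 1]
local_mask = attention_mask(doc_ids, window=2)              # toy "local" layer
global_mask = attention_mask(doc_ids, window=len(doc_ids))  # toy "global" layer

# Grouped-query attention: 48 query heads share 8 key-value heads.
N_QUERY_HEADS, N_KV_HEADS = 48, 8
queries_per_kv_head = N_QUERY_HEADS // N_KV_HEADS

print(local_mask[3])
print(global_mask[3])
print(queries_per_kv_head)
```

In the toy local layer, token 3 sees only tokens 2 and 3; in the toy global layer it sees all of document 0 but nothing from document 1.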

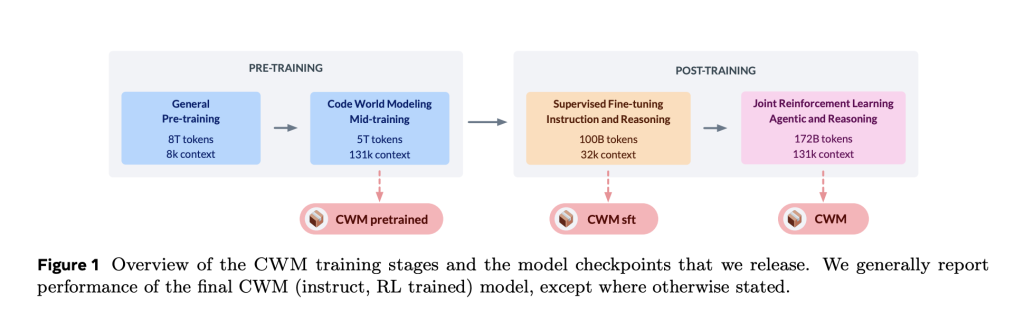

Comprehensive Training Pipeline: From Pretraining to Reinforcement Learning

- Initial pretraining involved processing 8 trillion tokens, focusing heavily on code, with an 8k token context window.

- Mid-training added 5 trillion tokens using the extended 131k token context, incorporating Python execution traces, ForagerAgent-generated data, pull request diffs, intermediate representations, compiler outputs, Triton kernels, and Lean theorem-proving code.

- Post-training included a 100 billion-token supervised fine-tuning (SFT) phase to enhance instruction-following and reasoning abilities, followed by approximately 172 billion tokens of multi-task reinforcement learning (RL). This RL phase targeted verifiable coding tasks, mathematical problem solving, and multi-turn software engineering environments, leveraging a GRPO-style algorithm and a minimal toolset (bash, edit, create, submit commands).

- Optimized quantized inference allows the model to run efficiently on a single 80 GB NVIDIA H100 GPU.
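The RL stage above is described only as "GRPO-style." As a rough illustration of the core idea behind GRPO, the sketch below computes group-relative advantages: several solutions are sampled for the same task, and each reward is normalized against the group's mean and standard deviation instead of a learned value function. The reward values, the `eps` constant, and the use of the population standard deviation are assumptions; CWM's exact variant may differ.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-6):
    """Normalize each reward against the other samples drawn for the same prompt."""
    baseline = mean(rewards)
    spread = pstdev(rewards)
    # eps avoids division by zero when every sample receives the same reward.
    return [(r - baseline) / (spread + eps) for r in rewards]


# Hypothetical rewards for four sampled solutions to one task (1.0 = tests pass).
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
print(advantages)
```

Samples that beat the group average receive a positive advantage and are reinforced; samples below it are pushed down, which suits verifiable tasks where the reward is a pass or fail signal.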

Performance Benchmarks and Evaluation

CWM reports the following pass@1 scores across several benchmarks:

- SWE-bench Verified: 65.8% (with test-time scaling)

- LiveCodeBench-v5: 68.6%; LiveCodeBench-v6: 63.5%

- Math-500: 96.6%; AIME-24: 76.0%; AIME-25: 68.2%

- CruxEval-Output: 94.3%

These results position CWM competitively against other open-weight models of similar size and even some larger or proprietary models, particularly on the SWE-bench Verified tasks.
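For readers unfamiliar with the metric, pass@1 is the probability that a single sampled solution is correct. A common way to estimate pass@k from n samples per problem, c of which are correct, is the unbiased estimator popularized alongside the HumanEval benchmark. The sketch below implements it; the sample counts are made up, and the article does not say how many samples per problem were drawn for the scores above.

```python
from math import comb


def pass_at_k(n, c, k):
    """Unbiased pass@k estimate from n samples of which c are correct."""
    if n - c < k:
        return 1.0  # every size-k subset must contain a correct sample
    return 1.0 - comb(n - c, k) / comb(n, k)


# Hypothetical problem: 10 samples drawn, 3 of them pass the tests.
print(pass_at_k(10, 3, 1))
print(pass_at_k(10, 3, 5))
```

For k = 1 the estimator reduces to c / n, the fraction of correct samples; a benchmark score is the average of this value over all problems.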

The Importance of World Modeling in Code Generation

CWM’s design highlights two key functional capabilities:

- Execution-trace prediction: Given a function and an initial execution state, CWM can forecast stack frames (local variables) and the next executed line in a structured format. This feature acts as a “neural debugger,” enabling reasoning about code behavior without running it live.

- Agentic coding: The model engages in multi-turn reasoning with tool usage within real repositories, validated by hidden tests and patch similarity rewards. This trains CWM to identify bugs and produce complete end-to-end patches (git diffs) rather than isolated code snippets.
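As a rough illustration of how hidden tests and patch similarity might be turned into a reward signal, the sketch below gives full credit when the hidden tests pass and otherwise a partial score based on textual similarity to a reference patch. The functions `patch_similarity` and `patch_reward`, the `similarity_weight` parameter, and the way the two signals are combined are entirely hypothetical; the article does not specify how CWM computes or mixes these rewards.

```python
from difflib import SequenceMatcher


def patch_similarity(candidate_patch, reference_patch):
    """Textual similarity between two diffs, from 0.0 (unrelated) to 1.0 (identical)."""
    return SequenceMatcher(None, candidate_patch, reference_patch).ratio()


def patch_reward(tests_passed, candidate_patch, reference_patch, similarity_weight=0.5):
    """Hypothetical reward: full credit for passing tests, else partial credit by similarity."""
    if tests_passed:
        return 1.0
    return similarity_weight * patch_similarity(candidate_patch, reference_patch)


reference = "-    return a - b\n+    return a + b\n"
candidate = "-    return a - b\n+    return b + a\n"

print(patch_reward(True, candidate, reference))
print(patch_reward(False, candidate, reference))
```

A similarity term of this kind gives the policy a denser learning signal than a pass or fail outcome alone, at the cost of rewarding patches that merely look like the reference.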

Technical Highlights and Innovations

- Tokenizer: Based on the Llama-3 family, with reserved control tokens to mark trace and reasoning segments during supervised fine-tuning.

- Attention pattern: Local and global attention layers are interleaved in a 3:1 ratio throughout the model’s depth. Long-context training uses large token batch sizes to stabilize gradient updates.

- Compute scaling: Learning rate and batch size schedules are informed by internal scaling law experiments, optimized for the overheads of long-context training.
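The 3:1 attention pattern from the list above can be laid out over the model's 64 layers in a few lines. The sketch assumes that each group of four layers consists of three local layers followed by one global layer; the article gives the ratio but not the ordering within a group, so `PATTERN` is an assumption.

```python
N_LAYERS = 64
# Assumed ordering inside each repeating group of four layers.
PATTERN = ("local", "local", "local", "global")

layer_types = [PATTERN[i % len(PATTERN)] for i in range(N_LAYERS)]
n_local = layer_types.count("local")
n_global = layer_types.count("global")

print(layer_types[:8])
print(n_local, n_global)
```

Under this layout, 48 of the 64 layers use the short local window and 16 use the long global window, so only a quarter of the layers pay the cost of attending over the full context.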

Conclusion: Advancing Grounded Code Generation

CWM advances grounded code generation by combining a large dense Transformer with execution-trace learning and agentic, test-verified patch generation. Meta FAIR has released intermediate and post-trained checkpoints under a Non-Commercial Research License, giving researchers a basis for reproducible work on long-context, execution-aware coding models. The release is intended for research use, not production deployment.