OpenAI has introduced GDPval, an evaluation framework that measures AI systems on practical, economically valuable tasks drawn from 44 occupations across the nine sectors that contribute most to U.S. GDP. Instead of academic-style benchmark questions, GDPval grades authentic work products (presentations, spreadsheets, briefs, CAD designs, and multimedia files), which industry experts judge in blind pairwise comparisons. OpenAI also released a curated “gold” subset of 220 tasks and an experimental automated grader, available at evals.openai.com.

Constructing Real-World Tasks: The GDPval Approach

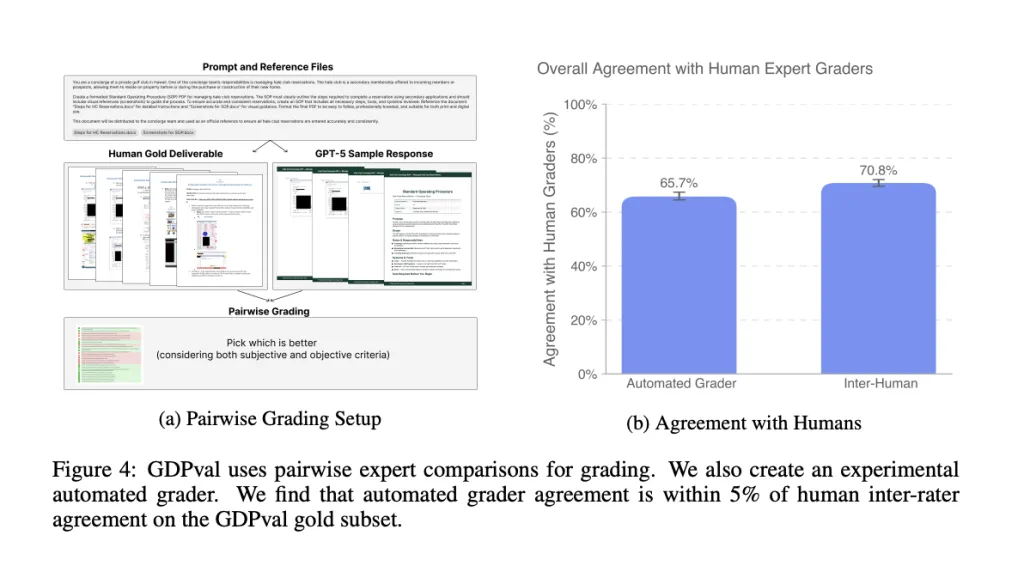

GDPval comprises 1,320 tasks written by professionals averaging over 14 years of experience in their fields. The tasks are mapped to O*NET’s taxonomy of work activities and use a wide range of file types (documents, slides, images, audio, video, spreadsheets, and CAD files), and some require working from several reference files. The gold subset’s prompts and reference files are public, but scoring still relies mainly on experts’ pairwise judgments, because the deliverables are nuanced and partly subjective to grade.
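To make the blind pairwise setup concrete, here is a minimal Python sketch of how a task and a blinded comparison might be represented. The `Task` fields, `make_blinded_pair`, and `decode_verdict` are hypothetical names for illustration, not GDPval’s published schema or tooling; the only idea carried over from the text is that a grader sees two deliverables without knowing which one came from the model.

```python
import random
from dataclasses import dataclass, field


@dataclass
class Task:
    """Hypothetical GDPval-style task record; field names are illustrative."""
    task_id: str
    sector: str
    occupation: str
    prompt: str
    reference_files: list[str] = field(default_factory=list)


@dataclass
class BlindedPair:
    """Two deliverables shown to a grader only as anonymous 'A' and 'B'."""
    task_id: str
    a: str
    b: str
    # Hidden from the grader; used only to decode the verdict afterwards.
    key: dict[str, str]


def make_blinded_pair(task: Task, model_file: str, expert_file: str,
                      rng: random.Random) -> BlindedPair:
    # Shuffle so the position (A or B) does not reveal which side is the model.
    sources = [("model", model_file), ("expert", expert_file)]
    rng.shuffle(sources)
    (src_a, file_a), (src_b, file_b) = sources
    return BlindedPair(task.task_id, file_a, file_b, {"A": src_a, "B": src_b})


def decode_verdict(pair: BlindedPair, verdict: str) -> str:
    """Map a grader's 'A', 'B', or 'tie' back to 'model', 'expert', or 'tie'."""
    if verdict == "tie":
        return "tie"
    return pair.key[verdict]
```

Keeping the source labels in a separate `key` means the grader-facing view (`a`, `b`) never carries them, and verdicts are only translated back to model/expert after grading.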

Performance Insights: AI Models Versus Human Experts

On the gold subset, blind grading by professionals shows frontier models approaching expert-level quality on a substantial share of tasks, and performance has improved roughly linearly across successive model releases. The best models come close to parity with human experts in win/tie rate. Their most common failures are not following instructions, formatting errors, misusing the provided data, and hallucination. Both higher reasoning effort and stronger scaffolding, such as validating output formats and having the model review its own artifacts, consistently improve results.
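The scaffolding idea of checking an artifact’s format before submitting it can be sketched as a retry loop. This is a minimal illustration under assumed conventions, not OpenAI’s scaffolding: deliverables are a hypothetical mapping of filenames to bytes, the checks cover only required file extensions and empty files, and `generate` is a stand-in for whatever model call produces the files.

```python
import os
from typing import Callable

# Hypothetical representation of a deliverable set: filename -> file contents.
Artifacts = dict[str, bytes]


def format_problems(artifacts: Artifacts, required_exts: set[str]) -> list[str]:
    """Return readable problems; an empty list means all checks passed."""
    problems = []
    present = {os.path.splitext(name)[1].lower() for name in artifacts}
    # Every required file type (e.g. '.xlsx') must appear at least once.
    for ext in sorted(required_exts - present):
        problems.append(f"missing a {ext} deliverable")
    # Zero-byte files are treated as failed outputs.
    for name, data in sorted(artifacts.items()):
        if not data:
            problems.append(f"{name} is empty")
    return problems


def generate_with_checks(generate: Callable[[list[str]], Artifacts],
                         required_exts: set[str],
                         max_attempts: int = 3) -> tuple[Artifacts, list[str]]:
    """Regenerate, feeding failed checks back in, until checks pass or
    attempts run out. Returns the last artifacts and any remaining problems."""
    feedback: list[str] = []
    artifacts: Artifacts = {}
    for _ in range(max_attempts):
        artifacts = generate(feedback)
        feedback = format_problems(artifacts, required_exts)
        if not feedback:
            break
    return artifacts, feedback
```

Passing the failed checks back into `generate` is what turns a plain validator into a self-review step; richer checks (parsing the file, rendering slides to images) would slot into `format_problems`.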

Evaluating Efficiency: Time and Cost Benefits of AI Integration

GDPval also includes scenario analyses that compare a fully human workflow with an AI-assisted one in which an expert reviews the model’s output. The analyses account for (i) the expert’s completion time and wage cost, (ii) the reviewer’s time and cost, (iii) model latency and API fees, and (iv) empirically measured model success rates. The results suggest that, even after review overhead is counted, AI assistance can substantially cut both time and cost for many task categories.
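The trade-off can be written as an expected-cost calculation. The sketch below assumes one simple scenario: the expert reviews up to `max_tries` model attempts, each accepted independently with probability `p_success`, and does the task unaided if every attempt is rejected. The `Scenario` fields and the independence assumption are illustrative; GDPval’s own analysis may define its scenarios and inputs differently.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    human_hours: float   # expert time to complete the task unaided
    hourly_wage: float   # expert wage, $/hour
    review_hours: float  # expert time to review one model output
    model_hours: float   # model latency per attempt, in hours
    model_cost: float    # API cost per attempt, $
    p_success: float     # probability one model attempt is accepted


def human_only(s: Scenario) -> tuple[float, float]:
    """(hours, dollars) for the fully human workflow."""
    return s.human_hours, s.human_hours * s.hourly_wage


def try_then_fix(s: Scenario, max_tries: int) -> tuple[float, float]:
    """Expected (hours, dollars) when an expert reviews up to max_tries
    model attempts and falls back to doing the task if all are rejected.
    Assumes attempts succeed independently with probability p_success."""
    hours = dollars = 0.0
    p_reach = 1.0  # probability that this attempt happens at all
    for _ in range(max_tries):
        hours += p_reach * (s.model_hours + s.review_hours)
        dollars += p_reach * (s.model_cost + s.review_hours * s.hourly_wage)
        p_reach *= 1.0 - s.p_success
    # p_reach is now the probability that every attempt was rejected.
    hours += p_reach * s.human_hours
    dollars += p_reach * s.human_hours * s.hourly_wage
    return hours, dollars
```

Under this model, assistance pays off when review and API costs are small relative to the expert’s unaided time and `p_success` is high enough that the fallback rarely triggers.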

Automated Evaluation: A Practical but Imperfect Proxy

On the gold subset, an automated pairwise grader agrees with human expert judgments about 66% of the time, close to the roughly 71% agreement observed between different human experts. The grader is useful for fast iteration and wider access, but it is not meant to replace expert evaluation.
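Agreement here can be read as the share of comparisons on which two graders reach the same verdict. The helper below computes that, assuming each verdict is `'A'`, `'B'`, or `'tie'` on the same, identically ordered pairs; GDPval’s exact agreement protocol (for example, how multiple human ratings per task are aggregated) may differ.

```python
from collections.abc import Sequence


def agreement_rate(a: Sequence[str], b: Sequence[str]) -> float:
    """Fraction of comparisons where two graders gave the same verdict.
    Both sequences must cover the same pairs in the same order."""
    if len(a) != len(b) or not a:
        raise ValueError("need two equal-length, non-empty verdict lists")
    return sum(x == y for x, y in zip(a, b)) / len(a)
```

Applied to automated-vs-human and human-vs-human verdicts, it yields two numbers on the same scale, which is the kind of comparison the 66% and 71% figures make.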

Distinctive Features: Why GDPval Stands Apart

- Comprehensive occupational coverage: Encompasses leading GDP sectors and a broad spectrum of O*NET work activities, rather than focusing on narrow specialties.

- Authentic deliverables: Tasks involve complex, multi-file, and multi-modal inputs and outputs, emphasizing structural integrity, formatting precision, and data handling capabilities.

- Dynamic benchmarking: Scores are human preference win rates against expert-produced deliverables, allowing continuous recalibration as AI models advance.
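Win and tie rates against expert deliverables come down to counting decoded outcomes. A minimal sketch, assuming each blinded comparison has already been mapped to `'model'`, `'expert'`, or `'tie'`; the function name and output keys are hypothetical.

```python
from collections import Counter
from collections.abc import Iterable


def preference_rates(outcomes: Iterable[str]) -> dict[str, float]:
    """Summarize decoded pairwise outcomes from the model's point of view."""
    counts = Counter(outcomes)
    unknown = set(counts) - {"model", "expert", "tie"}
    if unknown:
        raise ValueError(f"unexpected outcomes: {sorted(unknown)}")
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no outcomes to summarize")
    win = counts["model"] / total  # model deliverable preferred
    tie = counts["tie"] / total    # grader judged them equal
    return {"win": win, "tie": tie, "win_or_tie": win + tie}
```

Because the reference is an expert’s deliverable rather than a fixed answer key, the same computation can be rerun whenever a new model is graded against the same expert outputs.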

Limitations and Scope: Defining GDPval’s Boundaries

GDPval-v0 currently covers only computer-based knowledge work. It excludes physical labor, long interactive workflows, and organization-specific software tools. Tasks are one-shot and precisely specified, and model performance drops noticeably when tasks are given with less context. Because building and grading tasks is resource-intensive, OpenAI provides the automated grader (with its limitations documented) and plans to expand the benchmark in future versions.

Integration with Existing Evaluation Ecosystems

GDPval complements OpenAI’s existing evaluations by adding occupationally diverse, multi-modal, file-centric tasks; reporting human preference results; analyzing time and cost; and studying how reasoning effort and agent scaffolding affect performance. This first release is versioned as v0 and is intended to grow in coverage and realism over time.

Conclusion: Establishing a New Standard for AI Assessment in Economic Work

GDPval formalizes the measurement of AI capabilities in economically significant knowledge work by combining expert-crafted tasks with blinded human preference assessments and an accessible automated grader. This framework not only quantifies model quality and practical efficiency trade-offs but also highlights failure modes and the benefits of enhanced reasoning and scaffolding. While currently focused on computer-mediated, one-shot tasks with expert review, GDPval sets a reproducible benchmark for tracking AI progress across diverse occupations.