Evaluating Large Language Model Outputs Using the Arena-as-a-Judge Framework

This guide demonstrates how to apply the Arena-as-a-Judge methodology for assessing responses generated by large language models (LLMs). Unlike traditional evaluation methods that assign isolated numerical scores, this approach conducts direct pairwise comparisons between model outputs. The evaluation is based on user-defined criteria such as clarity, tone, and helpfulness, enabling a more nuanced judgment of quality.
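To make the contrast with isolated scoring concrete, here is a minimal sketch of a pairwise comparison: two candidate replies go in, one winner's name comes out. The `toy_judge` function is a hypothetical stand-in for an LLM judge (it prefers replies that apologize, then shorter ones) and is not part of DeepEval; `pick_winner` is likewise an illustrative name.

```python
from typing import Callable, Dict, Tuple

# Hypothetical judge signature: takes two candidate replies, returns the preferred one.
Judge = Callable[[str, str], str]

def toy_judge(reply_a: str, reply_b: str) -> str:
    """Stand-in for an LLM judge: prefer replies that apologize, then shorter ones."""
    def rank(reply: str) -> Tuple[bool, int]:
        text = reply.lower()
        apologizes = "sorry" in text or "apolog" in text
        # False sorts before True, so apologizing replies rank first.
        return (not apologizes, len(reply))
    return min((reply_a, reply_b), key=rank)

def pick_winner(contestants: Dict[str, str], judge: Judge) -> str:
    """Return the name of the contestant whose reply the judge prefers."""
    (name_a, reply_a), (name_b, reply_b) = contestants.items()
    return name_a if judge(reply_a, reply_b) == reply_a else name_b
```

A real judge would replace `toy_judge` with a model call guided by the written criteria; the point of the sketch is only that the output is a relative preference rather than an absolute score.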

Models and Scenario Setup

For this example, we will generate replies using OpenAI’s GPT-4.1 and Google’s Gemini 2.5 Pro, then employ GPT-5 as the adjudicator to determine which response better meets the evaluation standards. The test case revolves around a customer support email where a user reports receiving the wrong product:

Dear Support,

I ordered a wireless mouse last week, but I received a keyboard instead.

Could you please assist in resolving this issue promptly?

Thank you,

John

Installing Required Libraries and API Access

To follow along, install the necessary Python packages:

pip install deepeval google-genai openai

Additionally, you will need API keys for both OpenAI and Google services:

- Google API Key: Generate your key through the Google Cloud Console.

- OpenAI API Key: Obtain your key from the OpenAI platform. New users may need to provide billing details and make a minimum deposit to activate API access.

DeepEval calls the judge model (GPT-5 in this guide) through OpenAI’s API, so make sure your OpenAI key is set correctly.

import os

from getpass import getpass

os.environ["OPENAI_API_KEY"] = getpass("Enter your OpenAI API Key: ")

os.environ["GOOGLE_API_KEY"] = getpass("Enter your Google API Key: ")
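Before making any API calls, it can help to confirm that both variables are actually populated. The following is a small sketch under that assumption; `REQUIRED_KEYS` and `missing_keys` are illustrative names, not part of any of the libraries used here.

```python
import os
from typing import List, Mapping

# The two environment variables this guide sets above.
REQUIRED_KEYS = ("OPENAI_API_KEY", "GOOGLE_API_KEY")

def missing_keys(env: Mapping[str, str] = os.environ) -> List[str]:
    """Return the required variable names that are unset or blank."""
    return [key for key in REQUIRED_KEYS if not env.get(key, "").strip()]
```

Calling `missing_keys()` with no arguments inspects the current process environment; an empty list means both keys are present.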

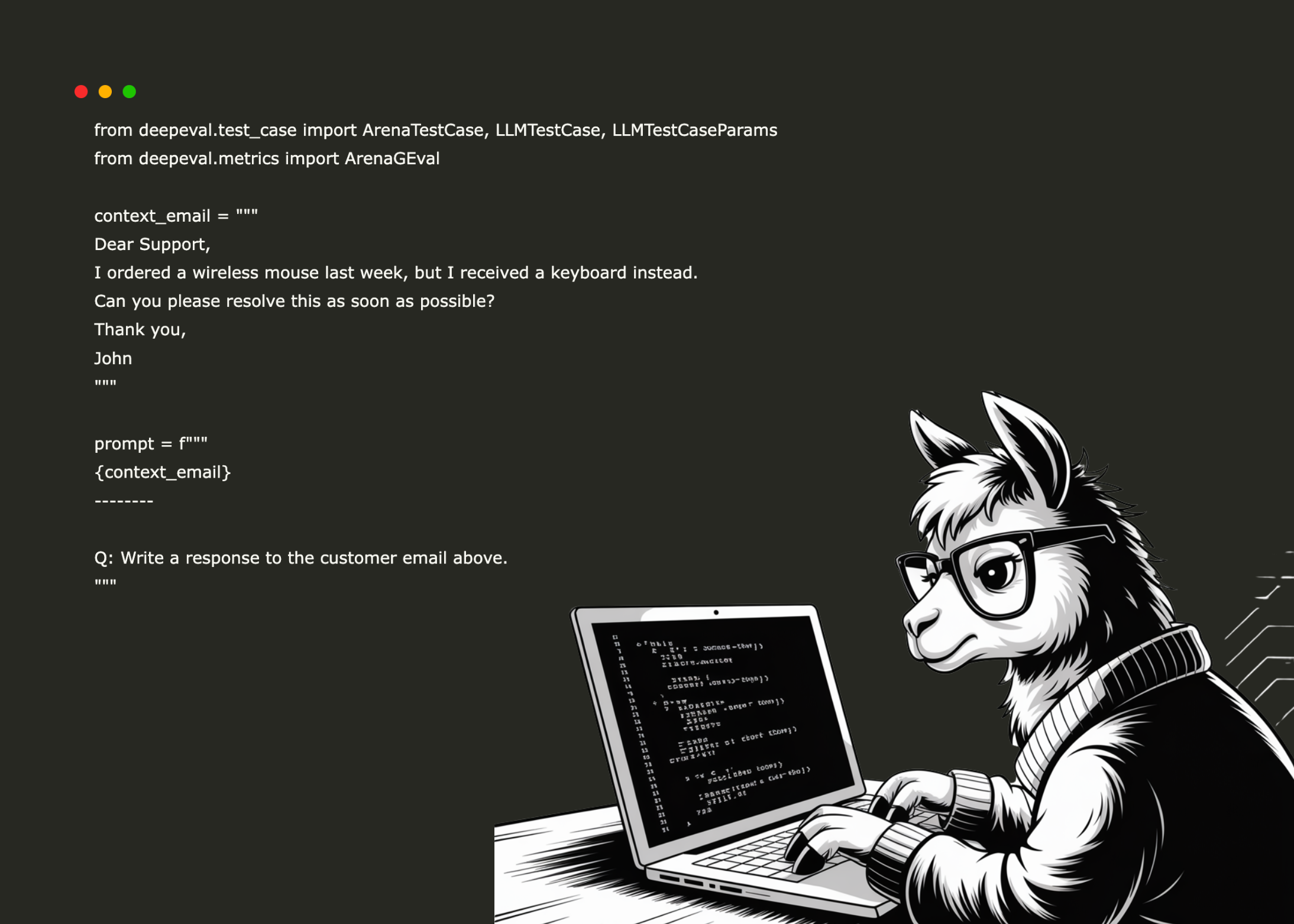

Establishing the Evaluation Context

We define the context by encapsulating the customer’s email and framing a prompt that instructs the models to generate an appropriate response:

from deepeval.test_case import ArenaTestCase, LLMTestCase, LLMTestCaseParams

customer_email = """

Dear Support,

I ordered a wireless mouse last week, but I received a keyboard instead.

Could you please assist in resolving this issue promptly?

Thank you,

John

"""

prompt = f"""

{customer_email}

--------

Q: Please compose a response to the customer's email above.

"""

Generating Responses from GPT-4.1

Using OpenAI’s Python client, we request a reply from GPT-4.1 based on the prompt:

from openai import OpenAI

# The client reads OPENAI_API_KEY from the environment.
client = OpenAI()

def fetch_gpt4_response(prompt: str, model: str = "gpt-4.1") -> str:
    # Send the prompt as a single user message and return the reply text.
    completion = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": prompt}],
    )
    return completion.choices[0].message.content

gpt4_reply = fetch_gpt4_response(prompt)
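The helper above depends on the response shape `completion.choices[0].message.content`. One way to exercise that path without calling the API is to pass the client in as a parameter and substitute a stub. The sketch below assumes a variant of the function, `fetch_reply`, and a hypothetical `make_fake_client` helper built from `SimpleNamespace`; neither exists in the OpenAI SDK.

```python
from types import SimpleNamespace
from typing import Optional

def fetch_reply(client, prompt: str, model: str = "gpt-4.1") -> str:
    """Same request as the helper above, but the client is injected so it can be stubbed."""
    completion = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": prompt}],
    )
    # message.content is optional in the SDK's types, so fall back to an
    # empty string to keep the return type a plain str.
    return completion.choices[0].message.content or ""

def make_fake_client(canned_reply: Optional[str]):
    """Hypothetical stub that mimics only the attributes fetch_reply touches."""
    def create(model, messages):
        message = SimpleNamespace(content=canned_reply)
        return SimpleNamespace(choices=[SimpleNamespace(message=message)])
    completions = SimpleNamespace(create=create)
    return SimpleNamespace(chat=SimpleNamespace(completions=completions))
```

With a real `OpenAI()` instance passed as `client`, `fetch_reply` sends the same request as the original helper; with the stub, it returns the canned text and never touches the network.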

Obtaining Gemini 2.5 Pro’s Reply

Similarly, we generate a response from Google’s Gemini 2.5 Pro model:

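from google import genai

# Use a distinct name so the OpenAI client defined earlier is not overwritten.
# genai.Client() reads the API key from the environment.
gemini_client = genai.Client()

def fetch_gemini_response(prompt: str, model: str = "gemini-2.5-pro") -> str:
    # Send the prompt and return the generated text.
    response = gemini_client.models.generate_content(
        model=model,
        contents=prompt,
    )
    return response.text

gemini_reply = fetch_gemini_response(prompt)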

Configuring the Arena Test Case for Comparison

We prepare an ArenaTestCase instance to pit the two model outputs against each other. Both models receive the same input and context, and their responses are stored for evaluation:

test_case = ArenaTestCase(
    contestants={
        "GPT-4": LLMTestCase(
            input="Please compose a response to the customer's email above.",
            context=[customer_email],
            actual_output=gpt4_reply,
        ),
        "Gemini": LLMTestCase(
            input="Please compose a response to the customer's email above.",
            context=[customer_email],
            actual_output=gemini_reply,
        ),
    },
)
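A pairwise comparison is only fair if every contestant answered the same question. The sketch below shows one way to check that before running the judge. `assert_same_prompt` is a hypothetical helper, not a DeepEval function; it relies only on the `input` and `context` attributes, and the demo uses `SimpleNamespace` stand-ins rather than real `LLMTestCase` objects so that it runs without any API access.

```python
from types import SimpleNamespace
from typing import Any, Mapping

def assert_same_prompt(contestants: Mapping[str, Any]) -> None:
    """Raise ValueError if contestants differ in `input` or `context`."""
    seen = {(c.input, tuple(c.context or [])) for c in contestants.values()}
    if len(seen) > 1:
        raise ValueError("Contestants must share the same input and context")

# Stand-ins with the same attribute names as the test cases above, for illustration only.
demo = {
    "A": SimpleNamespace(input="Reply to the email.", context=["email text"]),
    "B": SimpleNamespace(input="Reply to the email.", context=["email text"]),
}
assert_same_prompt(demo)  # passes silently because both entries match
```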

Defining Evaluation Criteria with ArenaGEval

We establish an evaluation metric named Support Email Quality that prioritizes empathy, professionalism, and clarity. The goal is to select the response that best demonstrates understanding, politeness, and succinctness. GPT-5 is designated as the evaluator, with verbose logging enabled to provide detailed feedback:

from deepeval.metrics import ArenaGEval

evaluation_metric = ArenaGEval(
    name="Support Email Quality",
    criteria=(
        "Choose the response that best balances empathy, professionalism, and clarity. "
        "The reply should be polite, understanding, and concise."
    ),
    # Fields of each test case that the judge is allowed to see.
    evaluation_params=[
        LLMTestCaseParams.CONTEXT,
        LLMTestCaseParams.INPUT,
        LLMTestCaseParams.ACTUAL_OUTPUT,
    ],
    model="gpt-5",      # judge model
    verbose_mode=True,  # print the judge's detailed feedback
)

Executing the Evaluation Process

Finally, we run the evaluation to determine which model’s response is superior according to the defined criteria:

evaluation_metric.measure(test_case)
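Because an LLM judge may not return the same verdict on every run, one option is to repeat the comparison and tally the winners. The sketch below assumes a hypothetical zero-argument callable, `run_once`, that performs one evaluation and returns the winning contestant's name; how that name is read from the metric depends on your DeepEval version, so that part is deliberately left out.

```python
from collections import Counter
from typing import Callable

def tally_winners(run_once: Callable[[], str], rounds: int = 5) -> Counter:
    """Call run_once `rounds` times and count how often each name wins."""
    return Counter(run_once() for _ in range(rounds))
```

The resulting `Counter` supports `most_common(1)` to read off the majority winner. Keep in mind that each round is a separate paid API call to the judge model.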

Analysis of Results

The evaluation favors the "GPT-4" contestant (the GPT-4.1 reply), which delivers a concise, courteous, and actionable customer support email. It acknowledges the mistake, apologizes, and clearly outlines the next steps, such as dispatching the correct product and providing return instructions. The tone is respectful and empathetic, aligning well with customer service best practices.

In contrast, Gemini’s reply, while empathetic and thorough, presents multiple response options accompanied by meta-commentary. This approach dilutes focus and reduces clarity and professionalism. Additionally, Gemini’s assertive claim of having located the order without clear evidence may undermine trust.

In this test case, the comparison highlights GPT-4.1’s strength in producing focused, customer-oriented communication that balances empathy with clarity and professionalism.