Recent breakthroughs in large language model (LLM)-driven diagnostic AI systems have produced tools capable of engaging in sophisticated clinical conversations, generating differential diagnoses, and formulating management plans within simulated environments. However, the provision of definitive diagnoses and treatment advice remains tightly controlled by regulatory frameworks, which reserve such responsibilities for licensed healthcare professionals. In conventional medical practice, a hierarchical oversight model is common: experienced physicians supervise and validate diagnostic and treatment proposals made by advanced practice providers (APPs), including nurse practitioners (NPs) and physician assistants (PAs). Consequently, integrating AI into healthcare requires oversight mechanisms that replicate these established safety standards.

Innovative Framework: Guardrailed Diagnostic AI with Delayed Physician Review

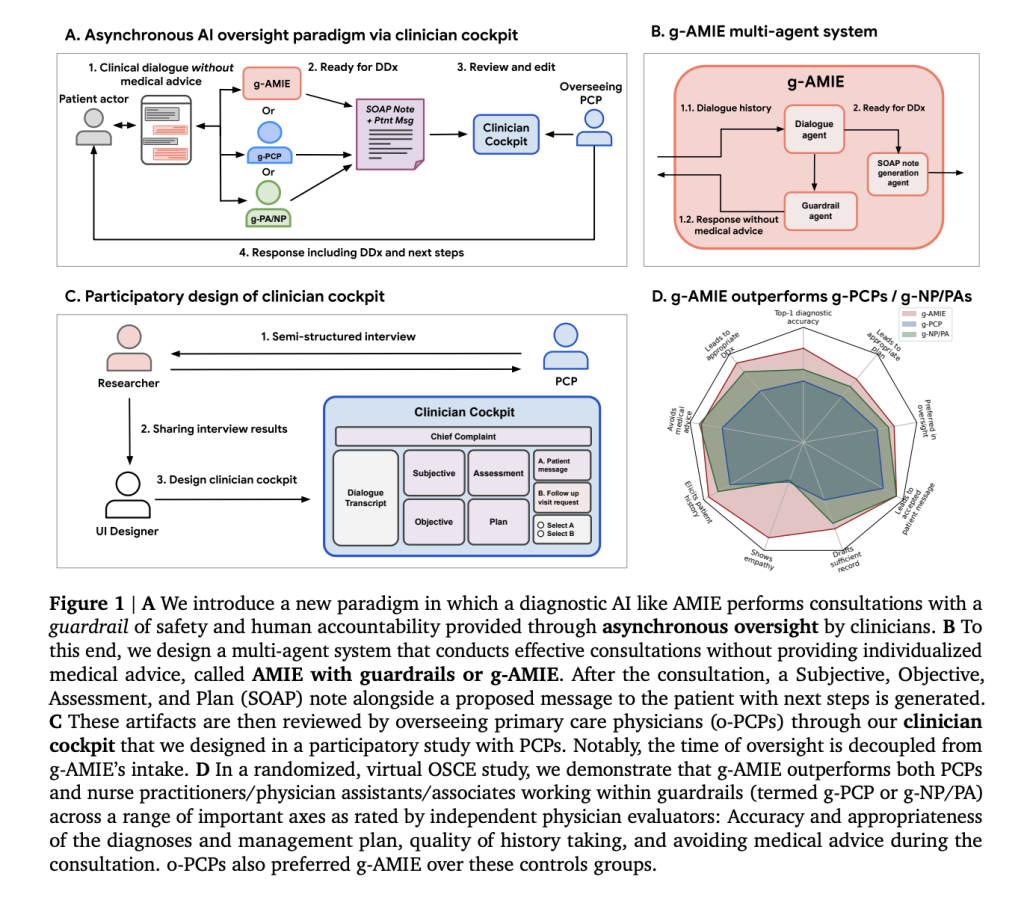

A team from Google DeepMind, Google Research, and Harvard Medical School introduced a multi-agent system named guardrailed-AMIE (g-AMIE), which is built on Gemini 2.0 Flash and derived from the Articulate Medical Intelligence Explorer (AMIE). The architecture separates the collection of patient history from the provision of personalized medical recommendations:

- Controlled History Collection: The AI engages patients in detailed history-taking conversations, records symptoms, and compiles clinical context without directly offering any diagnostic or treatment advice. A specialized “guardrail agent” supervises each interaction to ensure compliance, filtering out any inadvertent medical guidance before it reaches the patient.

- Structured Clinical Documentation: After completing the intake, a dedicated agent generates a comprehensive clinical summary formatted as a SOAP note (Subjective, Objective, Assessment, Plan), utilizing stepwise reasoning and constrained decoding techniques to maintain precision and coherence.

- Physician Review Interface: Licensed physicians, typically primary care providers (PCPs), access an interactive “clinician cockpit” to review, modify, and approve the AI-generated SOAP notes and patient communications. This interface, developed through extensive clinician feedback, allows detailed edits, commentary, and decisions on whether to accept the AI’s recommendations or request further evaluation.

This approach decouples patient intake from physician oversight, so clinicians can review cases asynchronously instead of supervising each conversation as it happens. As a result, the model scales better than the “real-time” supervision used in some telemedicine platforms.
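The separation between guarded intake, structured documentation, and delayed review can be illustrated with a minimal Python sketch. It is not the authors' implementation: the keyword-based `guarded_reply` filter is a hypothetical stand-in for the LLM-based guardrail agent, and `SoapNote`, `ReviewQueue`, and `example_reviewer` are illustrative names that do not come from the paper.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable, Deque, List, Optional

# Hypothetical phrases standing in for the LLM-based guardrail agent's judgment.
ADVICE_MARKERS = ("i recommend", "you should take", "your diagnosis is")
SAFE_FALLBACK = "Thank you. A physician will review your case and follow up."


def guarded_reply(draft: str) -> str:
    """Pass the draft through unless it appears to contain medical advice."""
    lowered = draft.lower()
    if any(marker in lowered for marker in ADVICE_MARKERS):
        return SAFE_FALLBACK
    return draft


@dataclass
class SoapNote:
    """Clinical summary with the four SOAP sections plus review state."""
    subjective: str
    objective: str
    assessment: str
    plan: str
    approved: bool = False
    reviewer_comments: List[str] = field(default_factory=list)


class ReviewQueue:
    """Holds finished intakes until a physician is available to review them."""

    def __init__(self) -> None:
        self._pending: Deque[SoapNote] = deque()

    def submit(self, note: SoapNote) -> None:
        self._pending.append(note)

    def review_next(
        self, reviewer: Callable[[SoapNote], SoapNote]
    ) -> Optional[SoapNote]:
        """Apply the reviewer to the oldest pending note, or return None."""
        if not self._pending:
            return None
        return reviewer(self._pending.popleft())


def example_reviewer(note: SoapNote) -> SoapNote:
    """Illustrative physician edit: add an escalation, then approve."""
    note.plan += " Escalate if symptoms worsen."
    note.reviewer_comments.append("Added escalation guidance.")
    note.approved = True
    return note
```

Because `submit` and `review_next` are separate calls, intake can finish long before a physician opens the note, which is the property that makes the review asynchronous. A production guardrail would need far more than substring matching; the filter here only marks where that check sits in the flow.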

Comprehensive Validation: Virtual OSCE and Automated Assessment

To rigorously assess this framework, the researchers conducted a randomized, blinded virtual Objective Structured Clinical Examination (OSCE) involving:

- Participant Groups: The g-AMIE system was benchmarked against early-career PCPs (g-PCPs) and a combined group of NPs and PAs (g-NP/PA), all operating under the same guardrail constraints and overseen by senior PCPs (o-PCPs).

- Clinical Scenarios: Sixty meticulously designed cases, calibrated for complexity based on UK standards for physician assistants, were enacted by trained patient actors interacting with either AI or human clinicians.

- Evaluation Metrics: Independent physicians, patient actors, and LLM-based automated “auto-raters” assessed dialogue quality, SOAP note completeness and accuracy (using a modified QNote rubric), diagnostic and management correctness, and the oversight experience.

Significant Outcomes and Insights

- Adherence to Guardrails and Intake Thoroughness: Both g-AMIE and the NP/PA group refrained from delivering medical advice in over 90% of cases, compared with 71.7% of scenarios for the PCP group. Notably, g-AMIE identified a higher proportion of critical “red flag” symptoms (64.2%) than its human counterparts.

- Superior SOAP Note Quality: The initial SOAP notes generated by g-AMIE consistently surpassed those from human clinicians in terms of clarity, completeness, and accuracy, as confirmed by both expert reviewers and automated scoring systems.

- Enhanced Efficiency: Physician oversight time for g-AMIE cases was approximately 40% shorter than a traditional PCP consultation, suggesting a substantial gain in workflow efficiency.

- Diagnostic and Treatment Accuracy: Evaluations revealed that g-AMIE achieved a top-1 diagnostic accuracy of 81.7%, significantly higher than the 53.3% and 63.3% recorded for PCPs and NP/PAs respectively, alongside more comprehensive management plans.

- Positive Oversight Experience: Senior PCPs reported a more favorable experience supervising g-AMIE cases, often making edits to improve brevity, correct inaccuracies, or add necessary escalations. While edits enhanced diagnostic quality for human clinicians, g-AMIE’s outputs required fewer modifications.

- Patient Actor Feedback: Simulated patients consistently rated interactions with g-AMIE higher across empathy, communication, and trust dimensions, based on PACES and GMC evaluation rubrics.

- Advanced Practice Providers’ Strengths: The NP/PA group demonstrated better compliance with guardrails and elicited more comprehensive histories and differential diagnoses than early-career PCPs, likely reflecting their greater experience with structured intake protocols.
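
As a sanity check on the percentages above, the short sketch below converts each one into an implied number of cases. It assumes that every group completed all 60 scenarios, so that each per-case percentage has a denominator of 60. The summary does not state this explicitly, and the case counts are inferred rather than reported.

```python
CASES = 60  # assumption: each group was scored on all 60 scenarios

# Percentages as quoted in the findings above.
reported = {
    "g-AMIE top-1 accuracy": 81.7,
    "g-PCP top-1 accuracy": 53.3,
    "g-NP/PA top-1 accuracy": 63.3,
    "g-PCP guardrail adherence": 71.7,
    "g-AMIE red flags identified": 64.2,
}


def implied_count(percent: float, n: int = CASES) -> int:
    """Nearest whole number of cases that the percentage corresponds to."""
    return round(percent / 100 * n)


def consistent_with(percent: float, n: int = CASES) -> bool:
    """True if some whole count out of n rounds to the reported percentage."""
    k = implied_count(percent, n)
    return round(100 * k / n, 1) == percent


for label, pct in reported.items():
    status = "fits" if consistent_with(pct) else "does not fit"
    print(f"{label}: {pct}% ~ {implied_count(pct)}/{CASES} ({status} n={CASES})")
```

Under this assumption the accuracy and adherence figures each correspond to a whole number of cases out of 60, while the 64.2% red-flag figure does not. That suggests it was computed over a different denominator, for example individual red-flag symptoms rather than cases.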

Implications: Advancing Safe and Scalable AI in Clinical Diagnostics

This study highlights that asynchronous physician oversight, facilitated by a structured multi-agent AI system and an intuitive review interface, can improve both the safety and efficiency of text-based diagnostic consultations. Systems like g-AMIE not only outperform less experienced clinicians and APPs in guarded history-taking, documentation quality, and decision-making under expert supervision but also offer a scalable model for integrating AI into healthcare workflows. Although further clinical trials and comprehensive training are essential before widespread adoption, this paradigm marks a pivotal advancement in responsible human-AI collaboration in medicine, maintaining accountability while delivering significant efficiency benefits.