Creating a Smart Agent with Lasting Memory and Customization

This guide walks you through developing an intelligent agent capable of remembering, learning, and evolving alongside its user. We implement a Persistent Memory and Personalization framework using straightforward, rule-based logic to mimic how advanced Agentic AI systems retain and retrieve contextual data. Throughout this process, you’ll observe how the agent’s replies improve with accumulated experience, how memory fading prevents information overload, and how personalization enhances interaction quality. Our goal is to illustrate how persistence transforms a simple chatbot into a dynamic, context-sensitive digital assistant.

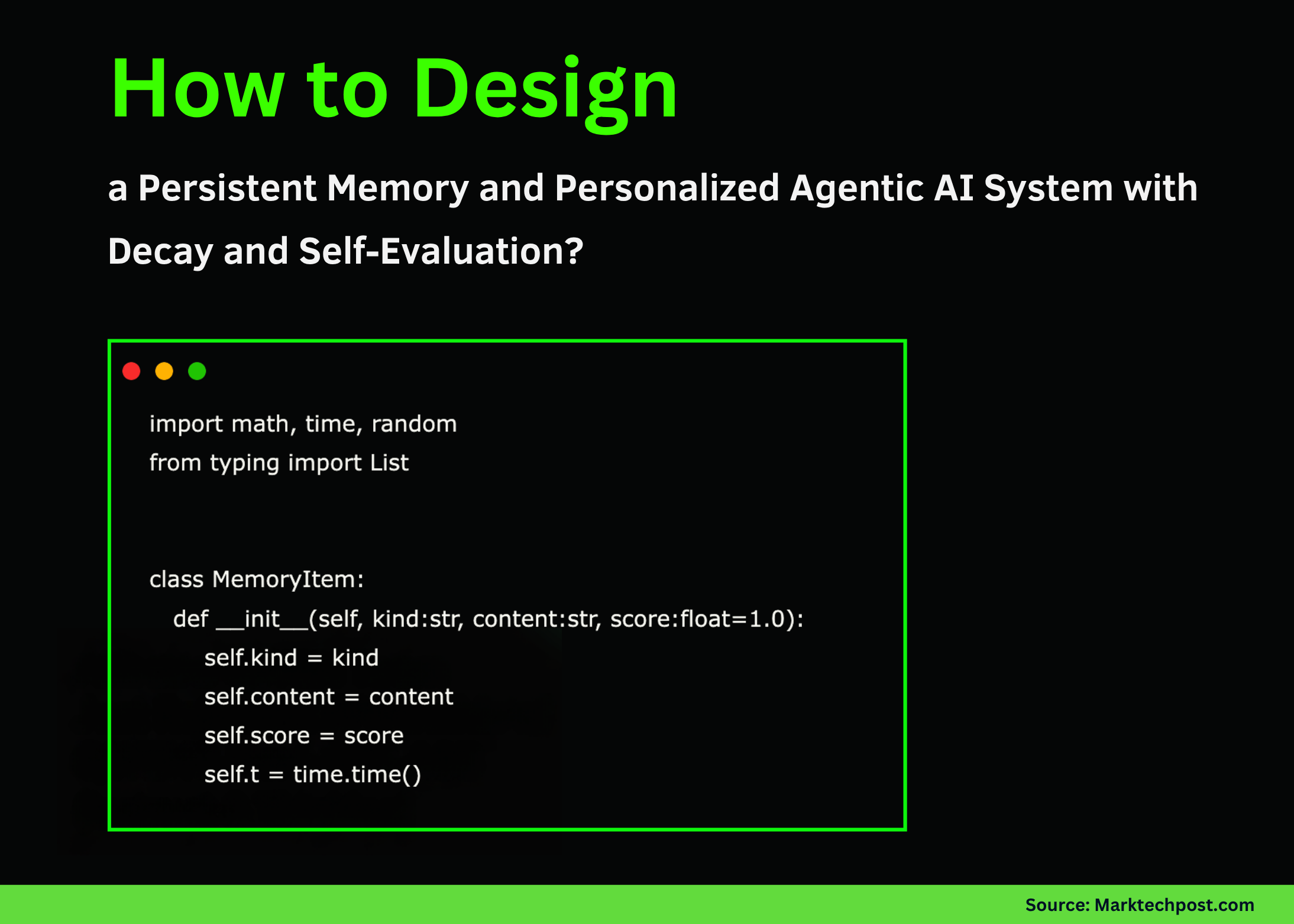

Establishing a Memory Foundation with Decay

We start by defining a MemoryItem class to encapsulate individual pieces of information, each tagged with a category, content, a base score, and a creation timestamp. These items are stored in a MemoryStore whose exponential decay function halves a memory's weight once per half-life (1800 seconds by default), simulating how human memory fades over time. This decay mechanism ensures that older memories gradually lose influence, preventing the agent from becoming overwhelmed by outdated data.

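import time
from typing import List


class MemoryItem:
    """One remembered fact with a category, a base score, and a creation time."""

    def __init__(self, category: str, content: str, score: float = 1.0):
        self.category = category
        self.content = content
        self.score = score
        self.timestamp = time.time()  # creation time, used to compute decay


class MemoryStore:
    def __init__(self, halflifeseconds=1800):
        self.items: List[MemoryItem] = []
        self.halflife = halflifeseconds  # seconds for a memory's weight to halve

    def calculatedecay(self, item: MemoryItem) -> float:
        # Exponential decay: 1.0 when new, 0.5 after one half-life, 0.25 after two.
        elapsed = time.time() - item.timestamp
        return 0.5 ** (elapsed / self.halflife)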
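To make the half-life concrete, the sketch below evaluates the same formula, 0.5 raised to the ratio of elapsed time to half-life, at a few fixed ages. The helper name `decay_factor` and the sample ages are illustrative assumptions and are not part of the classes above. Elapsed time is passed in explicitly so the numbers do not depend on the clock.

```python
def decay_factor(elapsed_seconds: float, half_life_seconds: float = 1800.0) -> float:
    """Hypothetical standalone helper: fraction of the original weight that remains."""
    return 0.5 ** (elapsed_seconds / half_life_seconds)


# Sample ages (assumed for illustration): brand new, one half-life, two half-lives.
for age in (0, 1800, 3600):
    print(f"age={age:>4}s  factor={decay_factor(age):.3f}")
```

With an 1800-second half-life, a memory keeps its full weight at creation, half after 30 minutes, and a quarter after an hour.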

Enhancing Memory: Adding, Searching, and Pruning

Next, we expand the memory system with methods to add new memories, search for relevant ones based on keyword overlap, and remove those that have decayed below a significance threshold. This approach allows the agent to prioritize recent and pertinent information, much like how humans tend to recall more relevant experiences.

    # These methods continue the MemoryStore class defined above.

    def addmemory(self, category: str, content: str, score: float = 1.0):
        self.items.append(MemoryItem(category, content, score))

    def searchmemory(self, query: str, topresults: int = 3) -> List[MemoryItem]:
        scoreditems = []
        querywords = set(query.lower().split())
        for item in self.items:
            decay = self.calculatedecay(item)
            # Count the words shared between the query and the stored content.
            overlap = len(querywords.intersection(set(item.content.lower().split())))
            # Relevance is the decayed base score plus the keyword overlap.
            relevance = (item.score * decay) + overlap
            scoreditems.append((relevance, item))
        scoreditems.sort(key=lambda x: x[0], reverse=True)
        return [item for score, item in scoreditems[:topresults] if score > 0]

    def prunememory(self, threshold: float = 0.1):
        # Keep only items whose decayed score is still above the threshold.
        self.items = [item for item in self.items if item.score * self.calculatedecay(item) > threshold]
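The ranking rule described above, decayed score plus keyword overlap, can be tried in isolation. The following is a minimal sketch under stated assumptions: `rank_notes` is a hypothetical helper, and the notes and their already-decayed scores are invented sample data, so no clock is involved.

```python
from typing import List, Tuple


def rank_notes(query: str, notes: List[Tuple[str, float]], top: int = 2) -> List[str]:
    """Hypothetical helper: rank (content, decayed_score) pairs for a query."""
    query_words = set(query.lower().split())
    scored = []
    for content, decayed_score in notes:
        # Overlap counts whole whitespace-separated words shared with the query.
        overlap = len(query_words & set(content.lower().split()))
        scored.append((decayed_score + overlap, content))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [content for _, content in scored[:top]]


# Invented sample data: (content, score after decay).
notes = [
    ("User likes short answers", 1.2),          # higher score, no shared words
    ("Project uses a blockchain ledger", 0.4),  # lower score, one shared word
    ("Old chat on the weather", 0.05),          # nearly forgotten
]
print(rank_notes("tell me about blockchain", notes))
```

One shared word adds a full point, which is enough here to lift the blockchain note above a note with three times its score. Because words are split on whitespace only, a query word with attached punctuation such as "blockchain?" would not match "blockchain"; stripping punctuation before splitting is one possible refinement.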

Building the Agent: Memory-Driven Interaction

We then create an Agent class that leverages the memory store to tailor its responses. A simulated language model function generates replies influenced by stored user preferences and topics. The agent’s perception method captures new user inputs, categorizing them to enrich its memory dynamically. This design enables the agent to adapt its behavior based on accumulated knowledge.

class Agent:
    def __init__(self, memorystore: MemoryStore, agentname: str = "SmartAgent"):
        self.memory = memorystore
        self.name = agentname

    def simulateresponse(self, prompt: str, context: List[str]) -> str:
        # Stand-in for a language model: rule-based replies shaped by retrieved context.
        baseresponse = "Understood. "
        if any("brief" in c for c in context):
            baseresponse = ""  # honor a stored preference for brevity
        response = baseresponse + f"Analyzed {len(context)} relevant notes. "
        promptlower = prompt.lower()
        if "summarize" in promptlower:
            return response + "Summary: " + " | ".join(context[:2])
        if "suggest" in promptlower or "recommend" in promptlower:
            if any("machine learning" in c for c in context):
                return response + "Suggestion: focus on machine learning advancements."
            if any("blockchain" in c for c in context):
                return response + "Suggestion: explore blockchain applications next."
            return response + "Suggestion: continue with your current theme."
        return response + "Response to your query: " + prompt

    def perceiveinput(self, usertext: str):
        # Route the input into memory categories by simple phrase matching.
        textlower = usertext.lower()
        # "i prefer" is matched too, so inputs such as
        # "I prefer brief and concise answers." are stored as preferences.
        if "i prefer" in textlower or "i enjoy" in textlower or "my preference" in textlower:
            self.memory.addmemory("preference", usertext, 1.5)
        if "subject:" in textlower:
            self.memory.addmemory("topic", usertext, 1.2)
        if "assignment" in textlower or "project" in textlower:
            self.memory.addmemory("project", usertext, 1.0)

    def respond(self, usertext: str):
        relevantmemories = self.memory.searchmemory(usertext, topresults=4)
        contextcontents = [mem.content for mem in relevantmemories]
        reply = self.simulateresponse(usertext, contextcontents)
        # Log the exchange at a low score, then drop memories that have decayed away.
        self.memory.addmemory("dialogue", f"user said: {usertext}", 0.6)
        self.memory.prunememory()
        return reply, contextcontents
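The categorization step can also be expressed as a small rule table, which makes the triggers and scores easier to audit and extend. This is a sketch under stated assumptions: `RULES` and `categorize` are hypothetical names, and the "i prefer" trigger is an assumed addition so that statements beginning "I prefer" count as preferences.

```python
from typing import List, Tuple

# Hypothetical rule table: (category, trigger phrases, base score).
RULES: List[Tuple[str, Tuple[str, ...], float]] = [
    ("preference", ("i prefer", "i enjoy", "my preference"), 1.5),
    ("topic", ("subject:",), 1.2),
    ("project", ("assignment", "project"), 1.0),
]


def categorize(text: str) -> List[Tuple[str, float]]:
    """Return every (category, score) whose trigger phrase appears in the text."""
    lowered = text.lower()
    return [
        (category, score)
        for category, triggers, score in RULES
        if any(trigger in lowered for trigger in triggers)
    ]


print(categorize("I enjoy writing about blockchain and decentralized systems."))
print(categorize("My current project involves developing a blockchain voting system."))
print(categorize("What is the weather today?"))
```

A single input can match several rules, and an input that matches none returns an empty list and leaves nothing in memory, which mirrors the independent `if` checks in the perception method.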

Measuring the Impact of Personalization

To gauge the effect of memory-based personalization, we introduce an evaluation function. It compares the agent’s reply when equipped with user memories against the reply of a baseline agent with an empty memory store, and reports the difference in reply length in characters. Length is only a crude proxy: it shows that stored context changed the output, not that the reply is more relevant or more satisfying to the user.

def assesspersonalization(agent: Agent):
    # Seed the agent with a preference, then ask the same question of a fresh agent.
    agent.memory.addmemory("preference", "User enjoys articles on artificial intelligence", 1.6)
    query = "What should I write about next?"
    personalizedresponse, _ = agent.respond(query)  # respond() returns (reply, context)
    emptymemory = MemoryStore()
    freshagent = Agent(emptymemory)
    baselineresponse, _ = freshagent.respond(query)
    # Character-length difference between the two replies.
    improvement = len(personalizedresponse) - len(baselineresponse)
    return personalizedresponse, baselineresponse, improvement
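A length difference says little about what actually changed between the two replies. As a hedged illustration, the sketch below pairs the character gain with the words that appear only in the personalized reply. The helper `compare_responses` and both sample strings are assumptions made for this example, not output captured from the agent.

```python
def compare_responses(personalized: str, baseline: str) -> dict:
    """Hypothetical helper: length gain plus words only the personalized reply uses."""
    base_words = set(baseline.lower().split())
    new_words = [w for w in personalized.lower().split() if w not in base_words]
    return {
        "char_gain": len(personalized) - len(baseline),
        "new_words": new_words,
    }


# Invented sample replies in the style of the rule-based responder.
result = compare_responses(
    "Analyzed 2 relevant notes. Suggestion: explore blockchain applications next.",
    "Understood. Analyzed 0 relevant notes. Suggestion: continue with your current theme.",
)
print(result)
```

In this sample the personalized reply is the shorter of the two, so the character gain is negative even though it is the only reply that mentions the user's topic. That is why a second signal is worth reporting alongside length.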

Demonstration: Teaching and Interacting with the Agent

Finally, we showcase the agent in action. We feed it several user statements to build its memory, then pose a question to observe how it leverages stored knowledge to provide a personalized recommendation. We also evaluate the personalization advantage and display the current memory contents, illustrating the agent’s evolving understanding.

if __name__ == "__main__":
    memory = MemoryStore(halflifeseconds=60)
    agent = Agent(memory)

    print("=== Training the agent with user preferences ===")
    userinputs = [
        "I prefer brief and concise answers.",
        "I enjoy writing about blockchain and decentralized systems.",
        "Subject: artificial intelligence, neural networks, deep learning.",
        "My current project involves developing a blockchain voting system."
    ]
    for inputtext in userinputs:
        agent.perceiveinput(inputtext)

    print("\n=== Querying the agent ===")
    question = "Can you suggest what to write about next?"
    response, usedcontext = agent.respond(question)
    print("User:", question)
    print("Agent:", response)
    print("Context used:", usedcontext)

    print("\n=== Evaluating personalization effect ===")
    personalized, baseline, gain = assesspersonalization(agent)
    print("With memory:", personalized)
    print("Without memory:", baseline)
    print("Personalization gain (characters):", gain)

    print("\n=== Current memory snapshot ===")
    for item in agent.memory.items:
        print(f"- {item.category} | {item.content[:60]}... | score approx. {round(item.score, 2)}")
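The snapshot prints each item's base score, which never changes. To see the fading itself, it can help to show the decayed weight next to it. The sketch below assumes a hypothetical `Note` dataclass with explicit timestamps and a `snapshot` helper that takes the current time as an argument, so the result is reproducible; the 60-second half-life matches the demo setting.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Note:
    """Hypothetical stand-in for a stored memory with an explicit timestamp."""
    category: str
    content: str
    score: float
    timestamp: float


def snapshot(notes: List[Note], now: float, half_life: float = 60.0) -> List[Tuple[str, float, float]]:
    """Return (category, base score, decayed score) rows, strongest first."""
    rows = []
    for note in notes:
        decayed = note.score * 0.5 ** ((now - note.timestamp) / half_life)
        rows.append((note.category, note.score, round(decayed, 3)))
    return sorted(rows, key=lambda row: row[2], reverse=True)


# Invented sample data: a preference stored at t=0 and a dialogue line at t=120.
notes = [
    Note("preference", "I enjoy writing about blockchain.", 1.5, timestamp=0.0),
    Note("dialogue", "user said: hello", 0.6, timestamp=120.0),
]
for row in snapshot(notes, now=120.0):
    print(row)
```

After two half-lives the preference has dropped from 1.5 to 0.375, below the fresh dialogue line at 0.6. A short half-life therefore lets recent chatter outrank older, more important memories, which is worth keeping in mind when choosing the setting.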

Summary: The Power of Persistent Memory in Agentic AI

Through this tutorial, we demonstrated how integrating persistent memory and personalization transforms a static chatbot into a responsive, context-aware assistant. By mimicking human-like memory decay and retrieval, the agent can remember user preferences, adapt its suggestions, and discard memories once they have decayed below the pruning threshold. Even with simple rule-based logic, these mechanisms visibly change the agent’s replies: stored preferences shorten its answers and steer its suggestions toward the user’s topics. Persistent memory is a cornerstone for next-generation Agentic AI systems that continuously learn, customize experiences intelligently, and maintain dynamic context, all achievable in a fully offline, local environment.