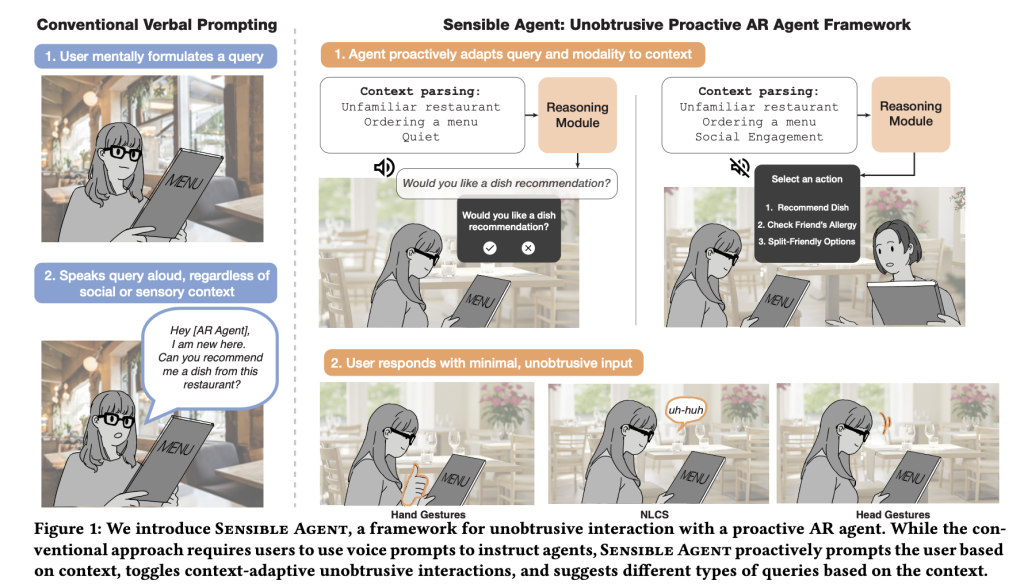

Google’s Sensible Agent is a research framework for augmented reality (AR) assistants that decides two things together: what action the assistant should take and how to communicate it. Both decisions are informed by real-time multimodal context, such as whether the user’s hands are occupied, how loud the surroundings are, and the social environment, so that interactions are timely and socially appropriate. Unlike traditional systems that treat the choice of suggestion and its delivery method as separate problems, Sensible Agent makes these decisions jointly to reduce user friction and social discomfort in everyday scenarios.

Addressing Common Pitfalls in AR Interaction

Conventional voice-first AR prompts often falter under practical constraints: they are slow when users are rushed, impractical when hands or eyes are busy, and socially awkward in public settings. Sensible Agent’s foundational insight is that even the most relevant suggestion becomes ineffective if it is delivered through an unsuitable channel. To address this, the system jointly optimizes what the agent proposes (a recommendation, a reminder, or an automation) and how that proposal is presented and confirmed. Presentation can be visual, audio, or a combination of the two; confirmation can use head gestures, gaze fixation, finger poses, brief speech commands, or non-verbal conversational sounds. By matching content to interaction modes that are both feasible and socially acceptable, the framework aims to minimize user effort while maximizing usefulness.

Runtime Architecture: A Three-Stage Pipeline

The Sensible Agent prototype, running on an Android-class extended reality (XR) headset, operates as a three-stage pipeline. First, context parsing fuses egocentric visual data, interpreted by vision-language models to infer the scene, the user’s activity, and how familiar the setting is, with ambient audio classification (using YAMNet) that detects conditions such as background noise or ongoing conversation. Next, a proactive query generator prompts a large multimodal model with few-shot examples to decide the action, the query format (binary choice, multiple options, or icon-based cues), and the delivery modality. Finally, the interaction layer activates only the input channels that suit the current context: for instance, head nods for affirmative responses when speaking aloud is inappropriate, or gaze-based selection when the hands are occupied.
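The three stages can be pictured as three small functions chained together. The following is a minimal Python sketch under stated assumptions, not the prototype's code: the `Context` and `Proposal` types, the tag names, and the hand-written rules standing in for the vision-language and multimodal models are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Context:
    """Parsed state from stage 1 (field names are illustrative)."""
    hands_busy: bool
    speech_nearby: bool
    noisy: bool
    familiar_place: bool


@dataclass(frozen=True)
class Proposal:
    """Output of stage 2: what to suggest and how to present it."""
    action: str
    query_type: str  # "binary", "multi_choice", or "icon"
    modality: str    # "audio", "visual", or "audio+visual"


def parse_context(scene_tags: set[str], audio_tags: set[str]) -> Context:
    # Stage 1: fuse vision-derived tags with audio-derived tags.
    return Context(
        hands_busy="hands_occupied" in scene_tags,
        speech_nearby="speech" in audio_tags,
        noisy="loud" in audio_tags,
        familiar_place="familiar" in scene_tags,
    )


def generate_query(ctx: Context, action: str) -> Proposal:
    # Stage 2: simple rules stand in for the large multimodal model.
    query_type = "binary" if ctx.familiar_place else "multi_choice"
    modality = "visual" if (ctx.speech_nearby or ctx.noisy) else "audio+visual"
    return Proposal(action, query_type, modality)


def enabled_inputs(ctx: Context) -> list[str]:
    # Stage 3: enable only input channels that fit the current context.
    inputs = ["head_gesture", "gaze_dwell"]
    if not ctx.hands_busy:
        inputs.append("finger_pose")
    if not (ctx.speech_nearby or ctx.noisy):
        inputs.append("short_speech")
    return inputs


ctx = parse_context({"hands_occupied", "kitchen", "familiar"}, {"speech"})
proposal = generate_query(ctx, "show next recipe step")
print(proposal)
print(enabled_inputs(ctx))
```

In this example the user is cooking in a familiar kitchen while someone is talking nearby, so the sketch picks a visual binary prompt and leaves only head gestures and gaze dwell enabled.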

Data-Driven Policy Formation: Beyond Intuition

The system’s decision policies are grounded in empirical research rather than designer intuition alone. Two studies supplied the few-shot exemplars. An expert workshop with 12 specialists identified scenarios where proactive assistance is beneficial and cataloged socially acceptable micro-interactions. A context mapping study with 40 participants produced 960 data points across everyday environments such as gyms, grocery stores, museums, commutes, and kitchens, with participants indicating the agent actions and interaction modalities they preferred in each context. These data let the system select contextually appropriate “what and how” combinations, for example multi-choice queries in unfamiliar settings, binary prompts under time pressure, and visual icons in socially sensitive situations.
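One plausible way to put such exemplars to work is to serialize them into a few-shot prompt for the multimodal model. The sketch below assumes a simple text format. The `Exemplar` type, the prompt wording, and the three example rows are invented for illustration and are not taken from the study data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Exemplar:
    context: str
    action: str
    query_type: str
    modality: str


# Hypothetical rows, written for this sketch only (not study data).
EXEMPLARS = [
    Exemplar("unfamiliar museum, quiet", "recommend exhibit", "multi_choice", "visual"),
    Exemplar("rushing to train, hands full", "remind of platform", "binary", "audio"),
    Exemplar("in a meeting, people talking", "offer to take notes", "icon", "visual"),
]


def build_prompt(exemplars, new_context):
    """Format exemplars as context/answer pairs, ending with the open query."""
    lines = ["Choose an action, query type, and modality for the situation."]
    for ex in exemplars:
        lines.append(f"Context: {ex.context}")
        lines.append(f"Answer: {ex.action} | {ex.query_type} | {ex.modality}")
    lines.append(f"Context: {new_context}")
    lines.append("Answer:")
    return "\n".join(lines)


prompt = build_prompt(EXEMPLARS, "grocery store, hands on cart")
print(prompt)
```

The model's completion after the final `Answer:` line would then be parsed back into the same three fields.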

Supported Interaction Techniques in the Prototype

The prototype supports several low-effort input methods, matched to the interaction type. For binary confirmations, it recognizes head nods and shakes; for multi-choice selections, head tilts to the left, to the right, and backward map to options one, two, and three respectively. Finger poses capture numeric choices and approval or disapproval, while gaze dwell activates visual buttons as a less cumbersome alternative to precise pointing. Minimal speech commands such as “yes,” “no,” or a number provide a short verbal input path. Non-lexical conversational sounds like “mm-hm” serve as subtle confirmations in noisy or whisper-only environments. The system also filters the available modalities based on the current context, for example by suppressing audio prompts in quiet spaces or disabling gaze-based input when the user is not looking at the heads-up display.
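The gesture mappings and the context filter described above can be written as a lookup table plus a small filter function. This is an illustrative sketch only: the gesture labels, channel names, and function names are assumptions, and recognizing the gestures themselves is out of scope here.

```python
# Gesture-to-response mapping as described in the text; labels are hypothetical.
GESTURE_MAP = {
    "binary": {"nod": "yes", "shake": "no"},
    "multi_choice": {"tilt_left": 1, "tilt_right": 2, "tilt_back": 3},
}


def interpret(query_type, gesture):
    """Return the response a gesture encodes, or None if it does not apply."""
    return GESTURE_MAP.get(query_type, {}).get(gesture)


def filter_channels(channels, quiet_space, looking_at_display):
    """Drop channels that the current context rules out."""
    blocked = set()
    if quiet_space:
        blocked.add("audio_prompt")  # avoid speaking aloud in quiet spaces
    if not looking_at_display:
        blocked.add("gaze_dwell")    # gaze input needs attention on the display
    return [c for c in channels if c not in blocked]


ALL_CHANNELS = ["audio_prompt", "visual_prompt", "head_gesture", "gaze_dwell"]
print(interpret("multi_choice", "tilt_back"))
print(filter_channels(ALL_CHANNELS, quiet_space=True, looking_at_display=False))
```

Returning `None` for a gesture that does not match the active query type keeps stray movements, such as a head tilt during a yes/no prompt, from being read as answers.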

Evaluating Interaction Efficiency: Early User Feedback

Initial user testing with 10 participants compared Sensible Agent’s joint decision framework against a traditional voice-prompt baseline in both AR and 360° VR environments. Participants reported lower perceived interaction effort and intrusiveness with Sensible Agent, while usability and user preference were maintained. The sample is small, as is typical of early-stage human-computer interaction research, but the findings support the hypothesis that deciding intent and modality together can streamline user engagement and reduce cognitive load.

Audio Processing with YAMNet: Why It Matters

YAMNet is a lightweight audio event classifier built on the MobileNet-v1 architecture and trained on Google’s AudioSet dataset; it predicts scores for 521 audio event classes. Within Sensible Agent, it serves as a fast ambient audio analyzer, detecting speech, music, crowd noise, and other environmental sounds. This lets the system gate audio prompts, or favor visual and gesture-based interaction, when vocal communication would be disruptive or ineffective. YAMNet is available through TensorFlow Hub and runs on edge devices, which makes on-device deployment practical.
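The gating step can be sketched independently of the model itself. YAMNet produces one score per class for each short audio frame; the hypothetical helper below averages those per-frame scores and blocks audio prompts when any chosen class exceeds a threshold. Loading and running the model is omitted, and the blocking classes, the 0.5 threshold, and the three-class toy input are assumptions made for illustration.

```python
def mean_scores(frame_scores):
    """Average per-frame class scores into one score per class."""
    n = len(frame_scores)
    return [sum(column) / n for column in zip(*frame_scores)]


def allow_audio_prompt(frame_scores, class_names,
                       blocking=("Speech", "Music"), threshold=0.5):
    """Return False if any blocking class is, on average, above threshold."""
    averages = dict(zip(class_names, mean_scores(frame_scores)))
    return all(averages.get(name, 0.0) < threshold for name in blocking)


# Toy input with 3 classes instead of the full class list, for readability.
names = ["Speech", "Music", "Silence"]
conversation = [[0.9, 0.1, 0.0], [0.7, 0.2, 0.1]]
quiet_room = [[0.1, 0.0, 0.9], [0.2, 0.1, 0.8]]

print(allow_audio_prompt(conversation, names))
print(allow_audio_prompt(quiet_room, names))
```

A real deployment would likely also treat very quiet rooms as a reason to avoid audio, since the article notes that audio prompts are suppressed in quiet spaces; that rule is left out here to keep the sketch short.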

Integrating Sensible Agent into Existing AR Ecosystems

Adopting Sensible Agent within current AR or mobile assistant platforms involves five steps: (1) implement a lightweight context parser that combines vision-language models on egocentric video frames with ambient audio tagging to produce a concise environmental state; (2) build a few-shot policy table that maps contexts to actions, query types, and modalities, informed by internal testing or user research; (3) use a large multimodal model to generate the content and the delivery method together; (4) restrict input options to those feasible in the current context, defaulting to binary confirmations for simplicity; and (5) collect interaction data to refine the policies through offline learning. The prototype’s demonstrated feasibility on WebXR and Chrome, running on Android-class hardware, suggests that porting to native head-mounted displays or smartphone-based HUDs is primarily an engineering task.
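Steps (2), (4), and (5) lend themselves to a compact illustration. The sketch below assumes a hand-written policy table keyed by familiarity and time pressure, a fallback to binary confirmation when the preferred query type has no feasible input, and a JSON log record for later offline analysis. All keys, names, and table entries are hypothetical.

```python
import json

# Step 2: hypothetical policy table mapping a context key to a decision.
POLICY = {
    ("unfamiliar", "unhurried"): {"query_type": "multi_choice", "modality": "visual"},
    ("familiar", "rushed"): {"query_type": "binary", "modality": "audio"},
}
FALLBACK = {"query_type": "binary", "modality": "visual"}

# Inputs that can answer each query type (illustrative).
INPUTS_FOR_QUERY = {
    "binary": {"head_nod", "short_speech", "finger_pose"},
    "multi_choice": {"head_tilt", "short_speech", "finger_pose", "gaze_dwell"},
}


def decide(context_key, feasible_inputs):
    """Look up the policy, then keep only inputs feasible right now (step 4)."""
    choice = dict(POLICY.get(context_key, FALLBACK))
    usable = INPUTS_FOR_QUERY[choice["query_type"]] & set(feasible_inputs)
    if not usable:
        # Default to a binary confirmation when nothing else is answerable.
        choice["query_type"] = "binary"
        usable = INPUTS_FOR_QUERY["binary"] & set(feasible_inputs)
    choice["inputs"] = sorted(usable)
    return choice


def log_record(context_key, choice, accepted):
    """Step 5: serialize one interaction for offline policy refinement."""
    return json.dumps(
        {"context": list(context_key), "choice": choice, "accepted": accepted},
        sort_keys=True,
    )


decision = decide(("unfamiliar", "unhurried"), {"head_nod"})
print(decision)
print(log_record(("unfamiliar", "unhurried"), decision, accepted=True))
```

Here the table prefers a multi-choice query, but only head nods are feasible, so the sketch falls back to a binary prompt answered by nodding.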

Conclusion: A New Paradigm for Proactive AR Interaction

Sensible Agent reframes proactive assistance in augmented reality as a single decision about what action to take and how to communicate it, conditioned on the user’s real-time context. In a functional WebXR prototype and a preliminary user study, this approach reduced perceived interaction effort compared to a voice-prompt baseline. Rather than delivering a finished product, the framework offers a replicable methodology: a dataset linking contexts to interaction strategies, few-shot prompting techniques to operationalize these mappings, and a suite of low-effort input methods that respect social norms and sensory constraints.