Retrieval-Augmented Generation (RAG) frameworks predominantly utilize dense embedding models that transform queries and documents into fixed-length vector representations. Although this technique has become standard in many AI-driven retrieval systems, recent findings from a Google DeepMind research team reveal a fundamental architectural constraint that cannot be overcome merely by scaling model size or enhancing training methods.

Understanding the Theoretical Boundaries of Embedding Dimensions

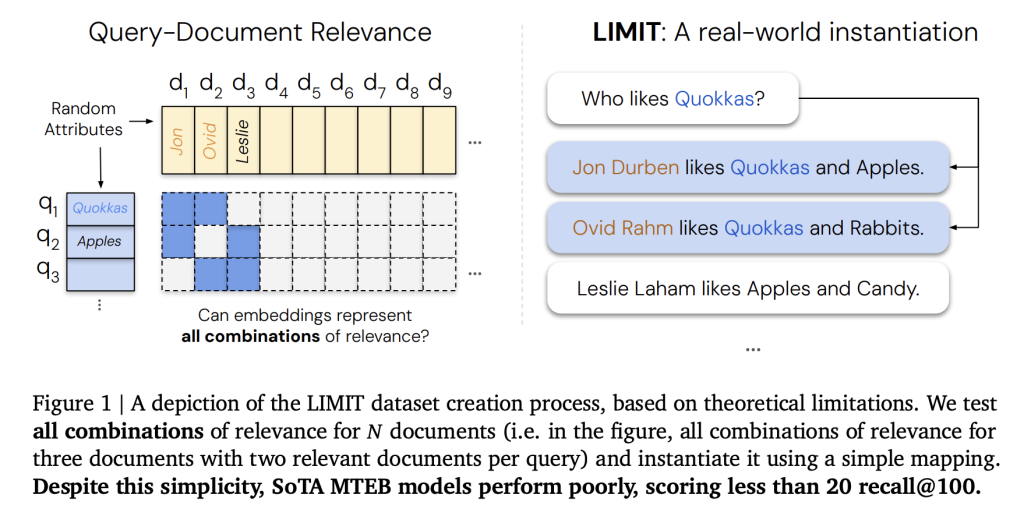

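The crux of the problem is the limited representational capacity of fixed-dimensional embeddings. When every query and document is a single d-dimensional vector and relevance is scored by vector similarity, the number of distinct top-k document sets that queries can retrieve is limited. Once the corpus grows past a threshold that depends on d, some combinations of relevant documents can no longer be retrieved together by any query vector. This limitation is grounded in communication complexity and sign-rank theory: the smallest dimension that can reproduce a given query-document relevance matrix is bounded below by (roughly) the sign-rank of that matrix.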
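To make "representing a relevance pattern" concrete, here is a minimal sketch that checks whether a candidate set of query and document vectors realizes a given pattern, meaning every query scores all of its relevant documents strictly above all other documents. The function names are hypothetical and dot-product scoring is assumed. The one-dimensional case illustrates a pattern that no vectors can realize: with scalar embeddings, a document lying between two others never scores strictly highest, whatever the query.

```python
from typing import Sequence

Vector = Sequence[float]


def dot(u: Vector, v: Vector) -> float:
    """Inner-product similarity, the scoring rule assumed for single-vector retrieval."""
    return sum(a * b for a, b in zip(u, v))


def realizes_top_k(queries: Sequence[Vector],
                   docs: Sequence[Vector],
                   relevant: Sequence[set[int]]) -> bool:
    """Return True if each query scores every one of its relevant documents
    strictly above every irrelevant document (hypothetical helper)."""
    for q, rel in zip(queries, relevant):
        scores = [dot(q, d) for d in docs]
        worst_relevant = min(scores[i] for i in rel)
        irrelevant = [s for j, s in enumerate(scores) if j not in rel]
        best_irrelevant = max(irrelevant) if irrelevant else float("-inf")
        if worst_relevant <= best_irrelevant:
            return False
    return True


# 2-D: each document can be made the unique top-1 result of some query.
docs_2d = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]
print(realizes_top_k(docs_2d, docs_2d, [{0}, {1}, {2}]))  # True

# 1-D: the middle document (2.0) is never strictly on top for any scalar query.
docs_1d = [[1.0], [2.0], [3.0]]
print(realizes_top_k([[1.0], [-1.0], [0.5]], docs_1d, [{2}, {0}, {1}]))  # False
```

The check only tests one candidate configuration; showing that no configuration exists in a given dimension is what the sign-rank argument is for.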

- 512 dimensions: the critical corpus size is roughly 500,000 documents.

- 1024 dimensions: the critical corpus size rises to roughly 4 million documents.

- 4096 dimensions: the critical corpus size reaches approximately 250 million documents.
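The three critical corpus sizes quoted above also say how fast capacity grows with dimension. A short calculation on those figures (the helper name is illustrative) gives the exponent p in n ≈ d^p between each pair of points on a log-log scale:

```python
import math

# Critical corpus sizes quoted above (embedding dimension -> documents).
CRITICAL_N = {512: 500_000, 1024: 4_000_000, 4096: 250_000_000}


def growth_exponent(d1: int, n1: float, d2: int, n2: float) -> float:
    """Slope on a log-log plot: the p in n ~ d**p implied by two (d, n) points."""
    return math.log(n2 / n1) / math.log(d2 / d1)


dims = sorted(CRITICAL_N)
for a, b in zip(dims, dims[1:]):
    p = growth_exponent(a, CRITICAL_N[a], b, CRITICAL_N[b])
    print(f"{a} -> {b}: n grows roughly like d^{p:.2f}")
# 512 -> 1024: n grows roughly like d^3.00
# 1024 -> 4096: n grows roughly like d^2.98
```

Both intervals give an exponent close to 3, so on these figures doubling the dimension buys roughly an eightfold larger corpus. This is an interpolation of the quoted numbers, not an independent bound.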

These figures are best-case estimates obtained with free embedding optimization, in which query and document vectors are optimized directly against the test labels. In real systems, embeddings must be produced by a language model from natural-language text, so the breakdown occurs even sooner.
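A toy version of free embedding optimization can be written in pure Python. In this sketch every pair of documents is the target top-2 set of one query, and the vectors themselves are the only parameters. The training recipe (plain SGD on a pairwise hinge loss, unit-normalizing vectors after each epoch) and the helper names are assumptions for illustration, not the study's exact setup.

```python
import math
import random
from itertools import combinations


def _dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def _normalize(v):
    """Rescale a vector to unit length in place (cosine-style scoring)."""
    norm = math.sqrt(sum(x * x for x in v)) or 1.0
    for k in range(len(v)):
        v[k] /= norm


def free_embedding_fit(n_docs, dim, epochs=100, lr=0.05, margin=0.1, seed=0):
    """Toy free-embedding experiment (hypothetical helper, not the study's code).

    One query per pair of documents; query and document vectors are optimized
    directly against those labels. Returns the fraction of queries whose top-2
    documents are exactly their target pair."""
    rng = random.Random(seed)
    pairs = list(combinations(range(n_docs), 2))  # every top-2 set is a target
    docs = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(n_docs)]
    queries = [[rng.gauss(0, 1) for _ in range(dim)] for _ in pairs]
    for v in docs + queries:
        _normalize(v)

    for _ in range(epochs):
        for q, rel in zip(queries, pairs):
            for r in rel:
                for i in range(n_docs):
                    if i in rel:
                        continue
                    # Hinge loss max(0, margin - (q.d_r - q.d_i)): update only if violated.
                    if _dot(q, docs[r]) - _dot(q, docs[i]) < margin:
                        q_old = q[:]
                        for k in range(dim):
                            q[k] += lr * (docs[r][k] - docs[i][k])
                            docs[r][k] += lr * q_old[k]
                            docs[i][k] -= lr * q_old[k]
        for v in docs + queries:
            _normalize(v)

    solved = 0
    for q, rel in zip(queries, pairs):
        ranked = sorted(range(n_docs), key=lambda j: _dot(q, docs[j]), reverse=True)
        solved += set(ranked[:2]) == set(rel)
    return solved / len(pairs)


for dim in (2, 4, 8):
    print(dim, free_embedding_fit(n_docs=6, dim=dim))
```

Increasing n_docs at a fixed dim should eventually push the returned fraction below 1.0. Where that happens depends on the optimizer, margin, and epoch count, so the toy shows the trend rather than reproducing the figures above.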

How the LIMIT Benchmark Highlights Embedding Constraints

To empirically validate these theoretical limits, the Google DeepMind team developed the LIMIT (Limitations of Embeddings in Information Retrieval) benchmark, designed to rigorously challenge embedding models. LIMIT is available in two configurations:

- LIMIT full (50,000 documents): At this scale, even state-of-the-art embedders struggle, with recall@100 frequently dropping below 20%.

- LIMIT small (46 documents): Despite its tiny size, this setup still exposes significant weaknesses. Results vary across models, but all remain far from perfect:

  - Promptriever Llama3 8B: 54.3% recall@2 (4096 dimensions)

  - GritLM 7B: 38.4% recall@2 (4096 dimensions)

  - E5-Mistral 7B: 29.5% recall@2 (4096 dimensions)

  - Gemini Embed: 33.7% recall@2 (3072 dimensions)
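For reference, recall@k is the fraction of a query's relevant documents that appear among its top k results, averaged over queries. A minimal sketch (function names are illustrative):

```python
from typing import Sequence


def recall_at_k(ranked_ids: Sequence[int], relevant_ids: set[int], k: int) -> float:
    """Fraction of a query's relevant documents that appear in its top-k results."""
    if not relevant_ids:
        raise ValueError("recall is undefined for a query with no relevant documents")
    hits = len(set(ranked_ids[:k]) & relevant_ids)
    return hits / len(relevant_ids)


def mean_recall_at_k(runs: Sequence[Sequence[int]],
                     qrels: Sequence[set[int]], k: int) -> float:
    """Average recall@k over queries, the form in which benchmark scores are reported."""
    return sum(recall_at_k(r, rel, k) for r, rel in zip(runs, qrels)) / len(qrels)


# One of two relevant documents retrieved in the top 2 gives 0.5 for that query.
print(recall_at_k([3, 1, 7], {1, 4}, k=2))  # 0.5
```

When a query has two relevant documents, a recall@2 around 54% means that, on average, only about one of the two lands in the top two results.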

Notably, no embedding model achieves perfect recall even with just 46 documents, underscoring that the limitation stems from the single-vector embedding architecture rather than dataset size alone.
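A small corpus can still demand many distinct relevant sets. Assuming, purely for illustration, that every pair of the 46 documents is the relevant set of some query, the count of patterns a single query vector must be able to isolate is already in the thousands:

```python
from itertools import combinations
from math import comb

N_DOCS = 46  # size of LIMIT small

# Hypothetical qrels: each query's relevant set is one distinct pair of documents.
qrels = [set(pair) for pair in combinations(range(N_DOCS), 2)]

print(len(qrels))          # 1035 distinct top-2 sets
print(comb(N_DOCS, 2))     # 1035, the same count in closed form
print(comb(N_DOCS, 3))     # 15180 distinct top-3 sets
```

The number of combinations grows combinatorially with k and with the corpus size, which is why difficulty depends on the relevance structure and not just on how many documents there are.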

In contrast, BM25, a traditional sparse lexical retrieval method, is far less constrained by this ceiling. Sparse models operate in very high-dimensional spaces, with one dimension per vocabulary term, which lets them represent query-document relevance patterns that low-dimensional dense embeddings cannot capture.
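A minimal Okapi BM25 scorer illustrates where that extra capacity comes from: each document is effectively a sparse vector with one coordinate per term in the vocabulary. This sketch assumes whitespace tokenization, the non-negative Lucene-style idf, and commonly used values k1 = 1.2 and b = 0.75; real systems add stemming, stop-word handling, and an inverted index.

```python
import math
from collections import Counter


def bm25_scores(query: str, docs: list[str], k1: float = 1.2, b: float = 0.75) -> list[float]:
    """Score every document against the query with Okapi BM25 (illustrative sketch)."""
    tokenized = [d.lower().split() for d in docs]
    n = len(tokenized)
    avgdl = sum(len(t) for t in tokenized) / n
    # Document frequency: number of documents containing each term.
    df = Counter(term for t in tokenized for term in set(t))
    scores = []
    for tokens in tokenized:
        tf = Counter(tokens)
        score = 0.0
        for term in query.lower().split():
            if term not in tf:
                continue  # absent terms contribute nothing (sparse coordinate is zero)
            idf = math.log(1 + (n - df[term] + 0.5) / (df[term] + 0.5))
            # Saturating term frequency, normalized by document length.
            denom = tf[term] + k1 * (1 - b + b * len(tokens) / avgdl)
            score += idf * tf[term] * (k1 + 1) / denom
        scores.append(score)
    return scores


docs = ["Jon likes quokkas", "Ana likes apples", "quokkas and apples"]
print(bm25_scores("who likes quokkas", docs))  # first document scores highest
```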

Implications for Retrieval-Augmented Generation Systems

Current RAG implementations often assume that embedding vectors can scale seamlessly with increasing data volumes. However, the Google DeepMind study clarifies that embedding dimensionality inherently restricts retrieval capacity. This limitation impacts several critical applications:

- Enterprise search platforms managing millions of documents.

- Agentic AI systems that perform intricate logical reasoning over large corpora.

- Instruction-driven retrieval tasks, where query relevance is dynamically defined.

Moreover, popular benchmarks such as MTEB do not reveal these constraints, because their queries exercise only a small fraction of the possible combinations of relevant documents.

Exploring Alternatives Beyond Single-Vector Embeddings

To overcome these fundamental limits, the research suggests moving away from single-vector embeddings toward more expressive architectures:

- Cross-Encoders: These models score each query-document pair jointly, achieving near-perfect recall on the LIMIT benchmark, but at a much higher inference cost, since every candidate document needs its own forward pass for each query.

- Multi-Vector Models (e.g., ColBERT): By representing each document with multiple vectors, these models enhance retrieval expressiveness and improve performance on challenging tasks like LIMIT.

- Sparse Retrieval Methods (BM25, TF-IDF, neural sparse retrievers): These scale to very high-dimensional spaces, but purely lexical methods such as BM25 and TF-IDF lack the semantic understanding offered by dense embeddings.
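The multi-vector idea can be sketched with ColBERT-style late interaction (MaxSim): each query token vector is matched to its most similar document token vector, and the maxima are summed. The toy two-dimensional token vectors and the mean-pooled single-vector baseline below are hypothetical; real ColBERT uses normalized token embeddings from a trained encoder.

```python
from typing import Sequence

Vector = Sequence[float]


def dot(u: Vector, v: Vector) -> float:
    return sum(a * b for a, b in zip(u, v))


def maxsim(query_vecs: Sequence[Vector], doc_vecs: Sequence[Vector]) -> float:
    """Late-interaction score: best-matching document vector per query vector, summed."""
    return sum(max(dot(q, d) for d in doc_vecs) for q in query_vecs)


def single_vector(vecs: Sequence[Vector]) -> list[float]:
    """Mean-pool token vectors into one vector, as a single-vector baseline might."""
    return [sum(col) / len(vecs) for col in zip(*vecs)]


query = [[1.0, 0.0], [0.0, 1.0]]   # two query "tokens" pointing in different directions
doc_a = [[1.0, 0.0], [0.0, 1.0]]   # matches each query token exactly
doc_b = [[0.7, 0.7], [0.7, 0.7]]   # a blurred match to both

print(maxsim(query, doc_a), maxsim(query, doc_b))           # MaxSim prefers doc_a
print(dot(single_vector(query), single_vector(doc_a)),
      dot(single_vector(query), single_vector(doc_b)))      # pooled vectors prefer doc_b
```

Pooling collapses the two query directions into one, so the single-vector score ranks the blurred document higher, while MaxSim keeps each token's match separate.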

The overarching conclusion is that innovative architectural designs are essential to advance retrieval capabilities, rather than simply increasing embedding size.

Key Insights and Takeaways

The analysis from the Google DeepMind team reveals a mathematical ceiling for dense embeddings: they cannot encode all relevant document combinations once the corpus size exceeds limits tied to embedding dimensionality. The LIMIT benchmark concretely demonstrates this failure:

- On LIMIT full (50,000 documents), recall@100 for state-of-the-art embedders falls below 20%.

- On LIMIT small (46 documents), even the best models plateau at around 54% recall@2.

Consequently, classical sparse retrieval techniques like BM25, alongside emerging multi-vector and cross-encoder architectures, remain indispensable for building robust, scalable retrieval systems.