EmbeddingGemma is Google’s text embedding model built for on-device AI. It pairs low computational cost with strong retrieval quality, which makes it a good fit for mobile and offline applications.

How Does EmbeddingGemma Compare in Size and Speed?

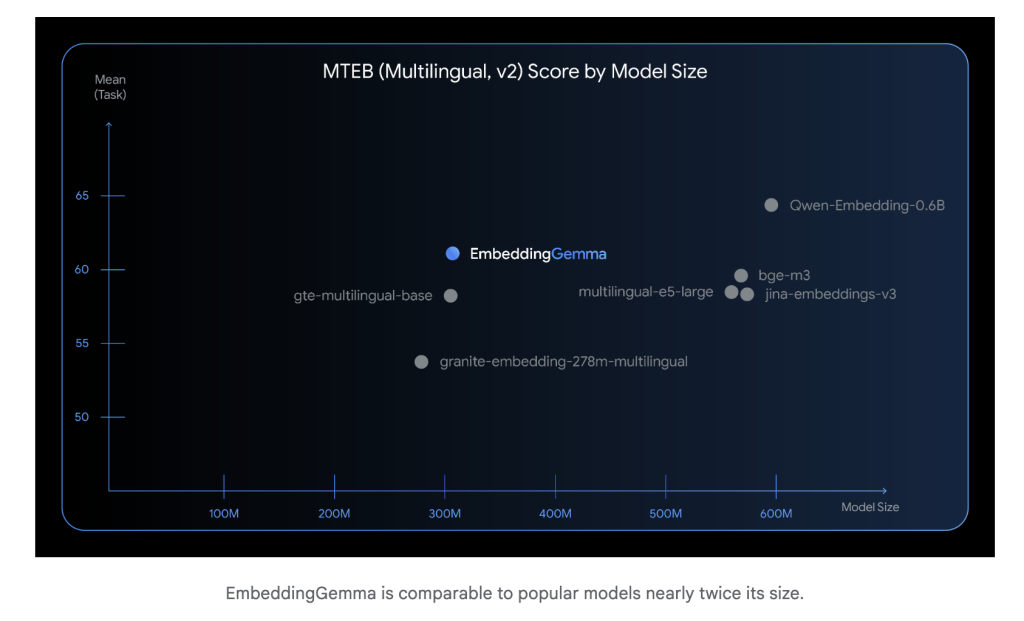

With only 308 million parameters, EmbeddingGemma is significantly smaller than many contemporary embedding models, so it can run on smartphones and edge devices without depending on the cloud. Despite its size, its retrieval quality is competitive with much larger models. Inference takes under 15 milliseconds for a 256-token input on EdgeTPU hardware, which is fast enough for real-time use cases such as instant search and interactive AI assistants.

Multilingual Capabilities and Benchmark Excellence

Trained on a corpus spanning over 100 languages, EmbeddingGemma performs well on multilingual tasks. It took the top position on the Massive Text Embedding Benchmark (MTEB) among models with fewer than 500 million parameters. Its results are strongest in cross-lingual retrieval and semantic search, where it outperforms embedding models nearly twice its size. This makes it a practical choice for applications that serve users in many languages.

Architectural Foundations of EmbeddingGemma

EmbeddingGemma uses a Gemma 3-based encoder backbone with mean pooling to produce fixed-length embeddings. It is a text-only model: whereas the multimodal Gemma 3 variant applies bidirectional attention for image inputs, EmbeddingGemma uses a standard transformer encoder stack with full-sequence self-attention over text. The model outputs 768-dimensional embeddings and accepts inputs of up to 2,048 tokens, which suits applications such as retrieval-augmented generation (RAG) and long-document semantic search.
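To make the pooling step concrete, here is a toy sketch of mean pooling in plain Python. It is not the model's actual code: the function name `mean_pool`, the 4-dimensional vectors, and the mask values are all made up for illustration, and a real pipeline would do this inside the embedding library on 768-dimensional tensors.

```python
from typing import List


def mean_pool(token_vectors: List[List[float]], attention_mask: List[int]) -> List[float]:
    """Average the token vectors whose mask entry is 1, ignoring padding."""
    if len(token_vectors) != len(attention_mask):
        raise ValueError("token_vectors and attention_mask must have equal length")
    kept = [vec for vec, keep in zip(token_vectors, attention_mask) if keep]
    if not kept:
        raise ValueError("attention_mask selects no tokens")
    dim = len(kept[0])
    # One output value per dimension: the mean over all unmasked tokens.
    return [sum(vec[i] for vec in kept) / len(kept) for i in range(dim)]


# Hypothetical input: three 4-dimensional token vectors, the last one is padding.
tokens = [[1.0, 2.0, 3.0, 4.0], [3.0, 4.0, 5.0, 6.0], [9.0, 9.0, 9.0, 9.0]]
mask = [1, 1, 0]

pooled = mean_pool(tokens, mask)
print(pooled)  # one fixed-length vector, regardless of how many tokens went in
```

The point of the mask is that padded positions must not dilute the average; whatever the input length, the result has the same number of dimensions.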

Adaptive Embedding Dimensions via Matryoshka Representation Learning

EmbeddingGemma is trained with Matryoshka Representation Learning (MRL), a technique that lets embeddings be truncated from 768 dimensions down to 512, 256, or even 128 dimensions. Developers can therefore trade storage against retrieval accuracy without retraining the model, which simplifies deployment across devices with different resource constraints.
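In practice, truncating an MRL embedding means keeping its leading dimensions. A minimal sketch follows, assuming the embedding is available as a plain list of floats; the helper name `truncate_and_normalize` and the 4-dimensional stand-in vector are hypothetical. The sketch re-normalizes after truncation because a prefix of a unit-length vector is generally no longer unit length, which matters if similarity is computed with a plain dot product.

```python
import math
from typing import List


def truncate_and_normalize(embedding: List[float], dim: int) -> List[float]:
    """Keep the first `dim` values and rescale the result to unit length."""
    if not 0 < dim <= len(embedding):
        raise ValueError("dim must be between 1 and len(embedding)")
    head = embedding[:dim]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        raise ValueError("cannot normalize an all-zero vector")
    return [x / norm for x in head]


# Hypothetical 4-dimensional stand-in for a 768-dimensional embedding.
full = [3.0, 4.0, 12.0, 84.0]

small = truncate_and_normalize(full, 2)
print(small)
```

With real embeddings the same call would use `dim=512`, `256`, or `128`.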

Designed for Fully Offline Operation

One of EmbeddingGemma’s standout features is its capability to function entirely offline. Sharing a tokenizer with Gemma 3n, it supports compact, local retrieval pipelines that enhance user privacy by eliminating reliance on cloud-based inference. This offline-first design is particularly valuable for sensitive environments and applications where data security is paramount.

Integration with Popular AI Frameworks and Tools

EmbeddingGemma fits into the modern AI development ecosystem and is compatible with:

- Hugging Face libraries, including Transformers, Sentence Transformers, and Transformers.js

- LangChain and LlamaIndex for building sophisticated RAG workflows

- Weaviate and other vector databases for efficient similarity search

- ONNX Runtime to enable optimized, cross-platform deployment

This broad support lets developers add EmbeddingGemma to existing pipelines and applications with little extra work.

Practical Steps to Implement EmbeddingGemma

Step 1: Load and Generate Embeddings

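```python
from sentence_transformers import SentenceTransformer

# Downloads the weights on first use, then loads them from the local cache
model = SentenceTransformer("google/embeddinggemma-300m")

# encode() takes a list of strings and returns one vector per input
embeddings = model.encode(["sample text for embedding"])
```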

Step 2: Customize Embedding Size

Choose the full 768-dimensional vectors for highest precision or reduce to 512, 256, or 128 dimensions to save memory and speed up retrieval.
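A rough way to judge the memory side of this trade-off is to compute the raw size of the stored vectors. The sketch below assumes 4-byte float32 values and counts only the vectors themselves, not any index overhead a vector database adds; the 100,000-document corpus and the function name `index_size_mib` are hypothetical.

```python
BYTES_PER_FLOAT32 = 4  # assumption: vectors are stored as float32


def index_size_mib(num_vectors: int, dim: int, bytes_per_value: int = BYTES_PER_FLOAT32) -> float:
    """Raw storage for `num_vectors` embeddings of width `dim`, in MiB."""
    return num_vectors * dim * bytes_per_value / (1024 ** 2)


# Hypothetical corpus of 100,000 documents at each supported width.
for dim in (768, 512, 256, 128):
    print(f"{dim:>3} dims: {index_size_mib(100_000, dim):.1f} MiB")
```

Storage scales linearly with the dimension, so 128-dimensional vectors need one sixth of the space of the full 768-dimensional ones.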

Step 3: Build Offline RAG Pipelines

Run similarity search locally using cosine similarity, then pass the top results to Gemma 3n as context for generation. The result is a retrieval-augmented generation system that works fully offline.
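The retrieval half of such a pipeline can be sketched in a few lines. This is an illustration under stated assumptions, not a reference implementation: the 3-dimensional vectors are made up and stand in for real EmbeddingGemma outputs, the helper names `cosine_similarity` and `top_k` are hypothetical, and the generation step with Gemma 3n is left out.

```python
import math
from typing import List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: Sequence[float], doc_vectors: List[Sequence[float]], k: int = 2) -> List[Tuple[int, float]]:
    """Return (document index, score) pairs for the k most similar documents."""
    scored = [(i, cosine_similarity(query, vec)) for i, vec in enumerate(doc_vectors)]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Made-up documents and vectors; real ones would come from model.encode().
docs = ["battery tips", "pasta recipe", "charging guide"]
doc_vectors = [[0.9, 0.1, 0.0], [0.0, 0.2, 0.9], [0.8, 0.3, 0.1]]
query_vector = [1.0, 0.0, 0.0]

hits = top_k(query_vector, doc_vectors, k=2)
# The retrieved texts become the context handed to the local generator.
context = "\n".join(docs[i] for i, _ in hits)
print(context)
```

A brute-force scan like this is fine for small local collections; larger ones would typically use a vector database such as those listed earlier.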

Why Choose EmbeddingGemma?

- Scalable Efficiency: Delivers top-tier multilingual retrieval accuracy within a compact model size.

- Versatile Embeddings: Adjustable dimensionality through MRL to fit diverse deployment needs.

- Privacy-Centric: Supports end-to-end offline workflows, safeguarding user data.

- Developer-Friendly: Open weights released under Gemma's license terms, plus extensive ecosystem integration.

EmbeddingGemma exemplifies how smaller, resource-efficient embedding models can rival larger counterparts in retrieval performance, paving the way for scalable, privacy-aware AI solutions on edge devices.