Code-centric large language models (LLMs) have moved well beyond autocomplete and now act as full software engineering assistants. In 2025, top-tier models are expected to resolve real GitHub issues, refactor large multi-repository backends, generate robust tests, and run as autonomous agents over very long context windows. For development teams, the question is no longer whether these models can write code, but which model best fits a project’s specific constraints and requirements.

Top Seven Coding Models Powering Modern Software Development

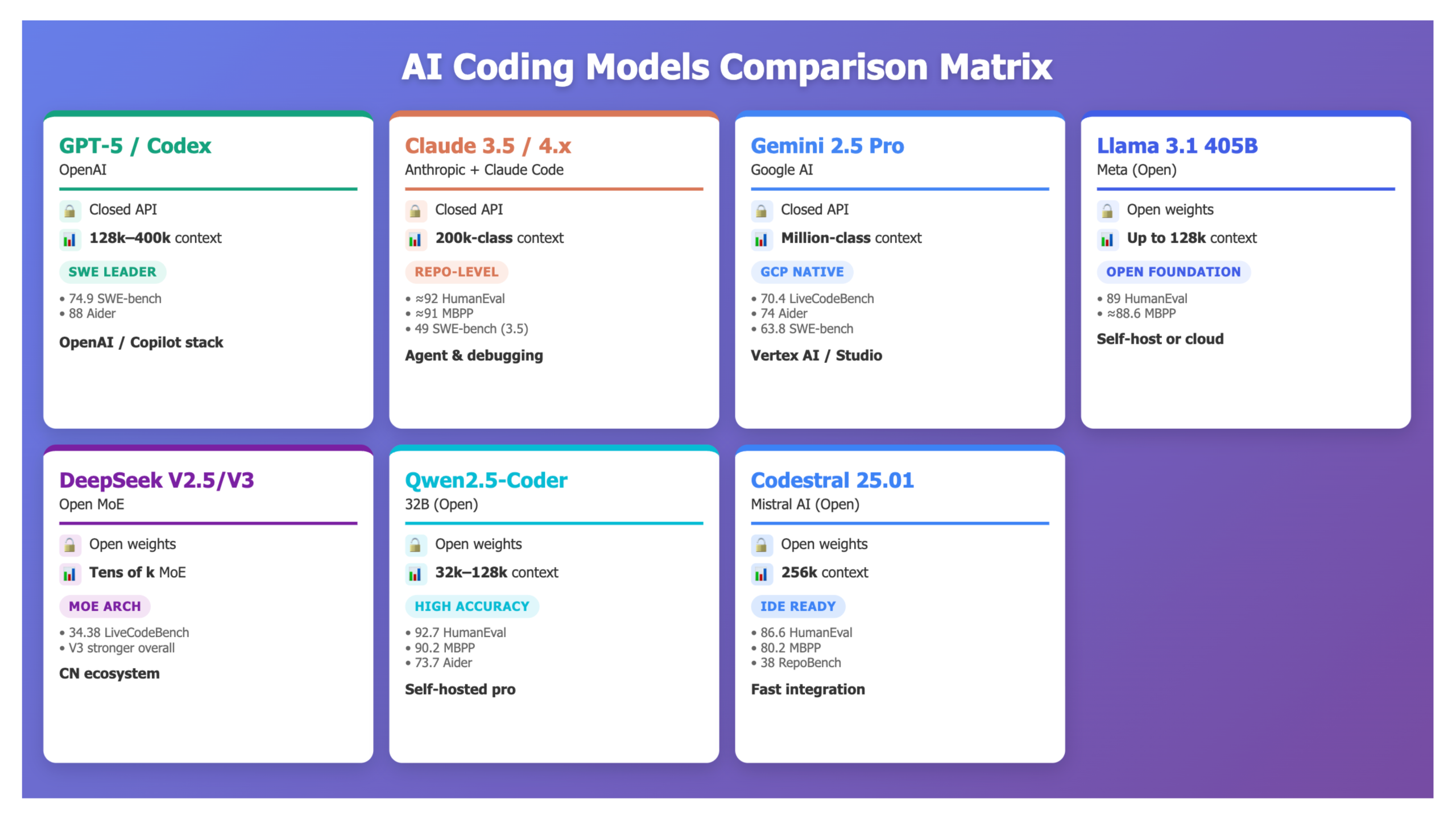

Below is an overview of seven prominent LLMs and their associated ecosystems, which together cover most real-world coding workloads:

- OpenAI’s GPT-5 / GPT-5-Codex

- Anthropic’s Claude 3.5 Sonnet / Claude 4.x Sonnet with the Claude Code platform

- Google DeepMind’s Gemini 2.5 Pro

- Meta’s Llama 3.1 405B Instruct

- DeepSeek-V2.5-1210 and its successor DeepSeek-V3

- Alibaba’s Qwen2.5-Coder-32B-Instruct

- Mistral’s Codestral 25.01

Key Criteria for Model Assessment

To provide a comprehensive comparison, these models are evaluated across six critical dimensions:

- Fundamental Coding Proficiency: Performance on benchmarks like HumanEval, MBPP, and code repair tasks in Python.

- Repository and Bug Resolution Efficiency: Effectiveness on real-world engineering benchmarks such as SWE-bench Verified, Aider Polyglot, RepoBench, and LiveCodeBench.

- Handling of Extended Contexts: Documented maximum context lengths and practical performance during prolonged coding sessions.

- Deployment Flexibility: Availability as closed APIs, cloud services, containerized solutions, on-premises installations, or fully open-weight self-hosted models.

- Tooling and Ecosystem Integration: Support for native agents, IDE plugins, cloud platform compatibility, GitHub workflows, and CI/CD pipelines.

- Cost Efficiency and Scalability: Pricing models for token usage in closed systems and hardware requirements for open-source deployments.

1. OpenAI GPT-5 / GPT-5-Codex: The Premier Hosted Coding Powerhouse

OpenAI’s GPT-5 is the company’s flagship model for reasoning and code generation and powers ChatGPT’s most advanced tiers. Its reported scores on real-world coding benchmarks are:

- SWE-bench Verified: 74.9%

- Aider Polyglot: 88%

Both benchmarks approximate real software engineering work: SWE-bench Verified is a human-validated set of issues drawn from real open-source repositories, and Aider Polyglot evaluates code edits across multiple programming languages.

Context Capabilities:

- GPT-5 (chat): Supports up to 128,000 tokens in context.

- GPT-5-Pro / GPT-5-Codex: Model cards indicate up to 400,000 tokens of combined context, which in practice splits into roughly 272,000 input tokens plus 128,000 output tokens.
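The input/output split quoted above can be turned into a simple pre-flight check. The following is a minimal sketch, not an OpenAI API: the constant names, `fits_budget`, and `rough_token_estimate` are hypothetical, and the four-characters-per-token heuristic is only an assumption for quick estimates.

```python
# Limits quoted in the text above; treat them as documented figures,
# not as constants exposed by any SDK.
MAX_INPUT_TOKENS = 272_000
MAX_OUTPUT_TOKENS = 128_000
COMBINED_CONTEXT = MAX_INPUT_TOKENS + MAX_OUTPUT_TOKENS  # 400,000


def fits_budget(prompt_tokens: int, reserved_output_tokens: int) -> bool:
    """Return True if a request stays within both the input and output limits."""
    if prompt_tokens < 0 or reserved_output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    return (
        prompt_tokens <= MAX_INPUT_TOKENS
        and reserved_output_tokens <= MAX_OUTPUT_TOKENS
    )


def rough_token_estimate(text: str, chars_per_token: float = 4.0) -> int:
    """Crude estimate (assumes ~4 characters per token); use a real tokenizer in practice."""
    return int(len(text) / chars_per_token) + 1
```

A check like this is most useful before packing repository files into a prompt, so that oversized requests are trimmed locally instead of being rejected by the API.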

Deployment: Available exclusively via OpenAI’s cloud API and integrated into platforms like ChatGPT (Plus, Pro, Team, Enterprise) and GitHub Copilot.

Advantages:

- Industry-leading scores on SWE-bench Verified and Aider Polyglot benchmarks.

- Exceptional at multi-step debugging and complex bug fixes using chain-of-thought reasoning.

- Robust ecosystem with extensive third-party IDE and agent integrations.

Considerations:

- Closed-source with no option for self-hosting; all data must pass through OpenAI’s infrastructure.

- High costs associated with long-context processing, necessitating efficient retrieval and diff-based strategies for large monorepos.
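One way to apply the diff-based strategy mentioned above is to send a unified diff of a change instead of the whole file. This is a minimal sketch using Python’s standard `difflib`; the helper name `unified_diff_for_prompt` and the example path are hypothetical, and how the diff is embedded in a prompt is left to the caller.

```python
import difflib


def unified_diff_for_prompt(
    original: str, modified: str, path: str, context_lines: int = 3
) -> str:
    """Build a unified diff between two versions of a file for use in a prompt."""
    diff = difflib.unified_diff(
        original.splitlines(keepends=True),
        modified.splitlines(keepends=True),
        fromfile=f"a/{path}",
        tofile=f"b/{path}",
        n=context_lines,  # unchanged lines kept around each change
    )
    return "".join(diff)


if __name__ == "__main__":
    # A 200-line file with a single changed line.
    before = "".join(f"line {i}\n" for i in range(1, 201))
    after = before.replace("line 100\n", "line 100 (patched)\n")
    patch = unified_diff_for_prompt(before, after, "src/example.py")
    print(patch)
    print(f"full file: {len(after)} chars, diff: {len(patch)} chars")
```

For a small change in a large file, the diff is a fraction of the file’s size, which is where the token savings come from.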

Ideal for teams seeking top-tier hosted performance on large-scale repository tasks and comfortable with cloud-based governance.

2. Anthropic Claude Sonnet Series: Advanced Repo-Aware Coding Agents

Anthropic’s Claude 3.5 Sonnet served as a leading coding model before the introduction of the Claude 4 series. It demonstrates strong performance on coding benchmarks:

- HumanEval: Approximately 92%

- MBPP EvalPlus: Approximately 91%

In 2025, Anthropic expanded its lineup with Claude Opus 4, Claude Sonnet 4, and Claude Sonnet 4.5, positioning Sonnet 4.5 as its most capable coding and agent model to date.

Claude Code Platform:

- Provides a managed virtual machine tightly integrated with GitHub repositories.

- Enables file browsing, editing, automated testing, and pull request generation.

- Includes an SDK for building custom coding agents leveraging Claude as the backend.

Strengths:

- High accuracy on HumanEval and MBPP benchmarks, with strong empirical results in debugging and code review.

- Production-ready coding agent environment supporting persistent VMs and GitHub workflows.

Limitations:

- Closed, cloud-hosted deployment similar to OpenAI’s governance model.

- Published SWE-bench Verified scores for Claude 3.5 Sonnet (about 49%) lag behind GPT-5 (74.9%), though Claude 4.x is expected to close the gap.

Best suited for teams requiring explainable debugging, comprehensive code review, and managed repo-level coding agents within a closed cloud environment.

3. Google Gemini 2.5 Pro: Integrated Coding and Reasoning on GCP

Google DeepMind’s Gemini 2.5 Pro is a versatile model designed for coding and reasoning tasks, integrated deeply with Google Cloud Platform (GCP). Its benchmark results include:

- LiveCodeBench v5: 70.4%

- Aider Polyglot: 74.0%

- SWE-bench Verified: 63.8%

These figures place Gemini 2.5 Pro ahead of many earlier models, though it trails Claude 3.7 and GPT-5 on SWE-bench Verified.

Context and Platform Integration:

- Supports a context window of up to 1 million tokens across the Gemini family, with 2.5 Pro as the stable tier powering Gemini Apps, Google AI Studio, and Vertex AI.

- Seamlessly integrates with GCP services such as BigQuery, Cloud Run, and Google Workspace.

Advantages:

- Balanced performance across multiple benchmarks combined with first-class GCP ecosystem support.

- Ideal for projects combining data analytics and backend application code within Google Cloud.

Drawbacks:

- Closed-source and available only through Google-hosted services.

- For pure software engineering benchmarks, GPT-5 and Claude 4.x currently outperform Gemini 2.5 Pro.

Recommended for organizations standardized on GCP seeking a long-context coding model integrated into their cloud infrastructure.

4. Meta Llama 3.1 405B Instruct: Open-Weight Generalist with Strong Coding Skills

Meta’s Llama 3.1 series, particularly the 405B Instruct variant, offers an open-weight foundation model excelling in both coding and general reasoning. Benchmark highlights include:

- HumanEval (Python): 89.0%

- MBPP (EvalPlus): Approximately 88.6%

According to the model card, the model is optimized for multilingual dialogue and complex reasoning and outperforms many open and closed chat models.

Strengths:

- High benchmark scores with open weights, released under Meta’s Llama 3.1 Community License.

- Strong generalist capabilities across tasks like MMLU, enabling a unified model for product features and coding agents.

Limitations:

- Large parameter count (405B) results in significant serving costs and latency unless deployed on substantial GPU clusters.

- More specialized models such as Qwen2.5-Coder-32B and Codestral 25.01 offer better cost-efficiency for fixed compute budgets focused solely on coding.
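The serving cost of a 405B-parameter model can be made concrete with back-of-the-envelope arithmetic on weight memory alone. This is a rough sketch under stated assumptions: the 80 GB accelerator size is a hypothetical example, the function names are illustrative, and the estimate ignores KV cache, activations, and runtime overhead, so real deployments need more headroom.

```python
import math

PARAMS = 405e9  # parameter count implied by the model name

# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params: float, precision: str) -> float:
    """Memory for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return params * BYTES_PER_PARAM[precision] / 1e9


def min_gpus_for_weights(
    params: float, precision: str, gpu_memory_gb: float = 80.0
) -> int:
    """Lower bound on GPU count; assumes a hypothetical 80 GB accelerator by default."""
    return math.ceil(weight_memory_gb(params, precision) / gpu_memory_gb)


if __name__ == "__main__":
    for precision in BYTES_PER_PARAM:
        gb = weight_memory_gb(PARAMS, precision)
        gpus = min_gpus_for_weights(PARAMS, precision)
        print(f"{precision}: {gb:.1f} GB of weights, at least {gpus} GPUs")
```

At bf16 the weights alone come to about 810 GB, which is why a 32B code specialist can be the more economical choice when the workload is coding only.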

Ideal for teams seeking a versatile open foundation model that handles both coding and general reasoning, with control over GPU infrastructure.

5. DeepSeek-V2.5-1210 and DeepSeek-V3: Open Mixture-of-Experts Models for Coding and Reasoning

DeepSeek’s V2.5-1210 is a Mixture-of-Experts (MoE) model that combines chat and coding capabilities. Its reported gains over the earlier V2.5 release are:

- LiveCodeBench (Aug-Dec 2024): Improvement from 29.2% to 34.38%

- MATH-500: Increase from 74.8% to 82.8%

The successor, DeepSeek-V3, has 671B total parameters, of which 37B are active per token, and was trained on 14.8 trillion tokens. It rivals leading closed models on many reasoning and coding tasks, and public leaderboards show it outperforming V2.5.
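The practical meaning of "37B active out of 671B" can be sketched with simple arithmetic. The snippet below assumes the common rule of thumb of roughly 2 FLOPs per active parameter per token for a forward pass, which ignores attention cost and memory bandwidth; the function names are illustrative and not part of any DeepSeek tooling.

```python
TOTAL_PARAMS = 671e9   # total parameters, as stated above
ACTIVE_PARAMS = 37e9   # parameters activated per token, as stated above


def active_fraction(active: float, total: float) -> float:
    """Share of the model's parameters used for any single token."""
    return active / total


def approx_forward_flops_per_token(active_params: float) -> float:
    """Rule-of-thumb estimate: ~2 FLOPs per active parameter per token."""
    return 2.0 * active_params


if __name__ == "__main__":
    frac = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
    print(f"active fraction: {frac:.1%}")
    print(f"approx. FLOPs per token: {approx_forward_flops_per_token(ACTIVE_PARAMS):.2e}")
```

Under these assumptions only about 5.5% of the parameters do work for a given token, although all 671B still have to be held in memory, so the saving is in compute rather than in hardware footprint.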

Advantages:

- Open MoE architecture delivering strong coding and math performance relative to model size.

- Only 37B of V3’s 671B parameters are active per token, which reduces inference compute.

Challenges:

- V2.5 is superseded by V3, which is still maturing.

- Smaller ecosystem compared to OpenAI, Google, and Anthropic; users must build their own IDE and agent integrations.

Recommended for organizations that want open MoE-based coding models with self-hosting capabilities and are willing to adopt the latest DeepSeek releases.

6. Alibaba Qwen2.5-Coder-32B-Instruct: High-Accuracy Open Code Specialist

Alibaba’s Qwen2.5-Coder family spans six model sizes (0.5B to 32B) and is pretrained on over 5.5 trillion tokens of code-intensive data. The 32B Instruct variant benchmarks include:

- HumanEval: 92.7%

- MBPP: 90.2%

- LiveCodeBench: 31.4%

- Aider Polyglot: 73.7%

- Spider: 85.1%

- CodeArena: 68.9%

Strengths:

- Competitive with closed models on pure coding benchmarks.

- Multiple parameter sizes allow adaptation to diverse hardware environments.

Limitations:

- Less effective for broad general reasoning compared to generalist models like Llama 3.1 or DeepSeek-V3.

- English-language tooling and documentation are still evolving.

Best for teams needing a self-hosted, high-precision coding assistant that can be complemented by a general LLM for non-coding tasks.

7. Mistral Codestral 25.01: Fast, Mid-Size Open Code Model for Interactive Use

Mistral’s Codestral 25.01 introduces architectural and tokenizer improvements, delivering code generation approximately twice as fast as its predecessor. Benchmark results include:

- HumanEval: 86.6%

- MBPP: 80.2%

- Spider: 66.5%

- RepoBench: 38.0%

- LiveCodeBench: 37.9%

With support for over 80 programming languages and a 256,000-token context window, Codestral 25.01 is optimized for low-latency, high-frequency tasks such as code completion and fill-in-the-middle (FIM) operations.
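Fill-in-the-middle means the model receives the text before and after the cursor and generates only the missing span. The sketch below shows that idea in a provider-neutral way; `FimRequest`, `split_at_cursor`, and `apply_completion` are hypothetical names, and the actual request format depends on the API or inference server being used.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FimRequest:
    """Hypothetical request shape: the text before and after the cursor."""
    prefix: str
    suffix: str


def split_at_cursor(source: str, cursor: int) -> FimRequest:
    """Split an editor buffer at the cursor offset into prefix and suffix."""
    if not 0 <= cursor <= len(source):
        raise ValueError("cursor out of range")
    return FimRequest(prefix=source[:cursor], suffix=source[cursor:])


def apply_completion(request: FimRequest, completion: str) -> str:
    """Insert the model's completion between the prefix and the suffix."""
    return request.prefix + completion + request.suffix


if __name__ == "__main__":
    buffer = "def add(a, b):\n    return \n"
    cursor = buffer.index("return ") + len("return ")
    request = split_at_cursor(buffer, cursor)
    # "a + b" stands in for whatever the model would return.
    print(apply_completion(request, "a + b"))
```

Because only the gap is generated, FIM requests tend to be short, which suits the low-latency completion workloads described above.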

Advantages:

- Strong RepoBench and LiveCodeBench performance for a mid-sized open model.

- Designed for rapid interactive workflows in IDEs and SaaS platforms, with open weights and extensive context support.

Limitations:

- Lower absolute HumanEval and MBPP scores compared to larger models like Qwen2.5-Coder-32B, consistent with its parameter scale.

Ideal for developers and product teams requiring a compact, fast open-source code model for scalable completions and interactive coding tasks.

Comparative Overview of Leading Coding Models

| Feature | GPT-5 / GPT-5-Codex | Claude 3.5 / 4.x + Claude Code | Gemini 2.5 Pro | Llama 3.1 405B Instruct | DeepSeek-V2.5-1210 / V3 | Qwen2.5-Coder-32B | Codestral 25.01 |
|---|---|---|---|---|---|---|---|
| Primary Use Case | Hosted generalist with strong coding and agent capabilities | Hosted models plus managed repo-level coding VM | Hosted coding and reasoning within GCP ecosystem | Open-weight generalist with strong coding skills | Open MoE coder and chat model | Open code-specialized model | Open mid-size code model optimized for speed |
| Context Window | 128k tokens (chat), up to 400k tokens (Pro / Codex) | Approximately 200k tokens (varies by tier) | Up to 1 million tokens across Gemini family | Up to 128k tokens in many deployments | Up to 128k tokens (per published model cards) | 32k-128k tokens depending on deployment | 256k tokens |
| Benchmark Highlights | 74.9% SWE-bench, 88% Aider Polyglot | ~92% HumanEval, ~91% MBPP, 49% SWE-bench (3.5); 4.x expected higher | 70.4% LiveCodeBench, 74% Aider, 63.8% SWE-bench | 89% HumanEval, ~88.6% MBPP | 34.38% LiveCodeBench; V3 surpasses V2.5 | 92.7% HumanEval, 90.2% MBPP, 31.4% LiveCodeBench, 73.7% Aider | 86.6% HumanEval, 80.2% MBPP, 38% RepoBench, 37.9% LiveCodeBench |
| Deployment Model | Closed API via OpenAI / Copilot | Closed API via Anthropic console and Claude Code | Closed API via Google AI Studio / Vertex AI | Open weights, self-hosted or cloud | Open weights, self-hosted; V3 available via providers | Open weights, self-hosted or commercial APIs | Open weights, multi-cloud availability |
| Integration Ecosystem | ChatGPT, OpenAI API, GitHub Copilot | Claude app, Claude Code, SDKs | Gemini Apps, Vertex AI, GCP services | Hugging Face, vLLM, cloud marketplaces | Hugging Face, vLLM, custom stacks | Hugging Face, commercial APIs, local runners | Azure, GCP, custom inference, IDE plugins |
| Optimal Use Case | Maximum SWE-bench and Aider performance in hosted environments | Repo-level agents and advanced debugging workflows | GCP-centric engineering combining data and code | Unified open foundation model for coding and reasoning | Open MoE experimentation and Chinese ecosystem focus | Self-hosted, high-accuracy code assistance | Fast, open model for IDE and SaaS product integration |

Choosing the Right Model for Your Needs

- For the most powerful hosted repo-level coding: Opt for GPT-5 / GPT-5-Codex. Claude Sonnet 4.x is a close contender, but GPT-5 leads in published benchmarks.

- For comprehensive coding agents with VM and GitHub integration: Choose Claude Sonnet + Claude Code for persistent, repo-aware workflows.

- If your infrastructure is Google Cloud-based: Gemini 2.5 Pro is the natural choice within Vertex AI and AI Studio.

- When seeking a single open generalist model: Llama 3.1 405B Instruct offers strong coding and reasoning capabilities.

- For the best open-source code specialist: Qwen2.5-Coder-32B-Instruct delivers high accuracy and flexibility.

- If you prefer open MoE models: Start with DeepSeek-V2.5-1210 and plan to transition to DeepSeek-V3 as it matures.

- For fast, mid-size open models suited to IDEs and SaaS: Codestral 25.01 provides low-latency completions and extensive context support.

Final Thoughts

Currently, GPT-5, Claude Sonnet 4.x, and Gemini 2.5 Pro set the benchmark for hosted coding model performance, particularly on SWE-bench Verified and Aider Polyglot. Meanwhile, open models like Llama 3.1 405B, Qwen2.5-Coder-32B, DeepSeek-V2.5/V3, and Codestral 25.01 demonstrate that high-quality coding assistants can be effectively self-hosted, granting teams full control over model weights and data privacy.

For most software engineering organizations, a hybrid approach is optimal: leveraging one or two hosted frontier models for complex, multi-service refactoring tasks, complemented by one or two open-source models for internal tooling, compliance-sensitive codebases, and latency-critical IDE integrations.
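The hybrid approach described above amounts to a routing policy. The following is a minimal sketch of such a policy, assuming three boolean task attributes; `Backend`, `Task`, and `choose_backend` are hypothetical names, and the order in which the rules are applied is an assumption rather than a recommendation from any vendor.

```python
from dataclasses import dataclass
from enum import Enum


class Backend(Enum):
    HOSTED_FRONTIER = "hosted-frontier"
    SELF_HOSTED_OPEN = "self-hosted-open"


@dataclass(frozen=True)
class Task:
    description: str
    compliance_sensitive: bool = False
    latency_critical: bool = False
    internal_tooling: bool = False


def choose_backend(task: Task) -> Backend:
    """Pick a backend class for a task, following the hybrid split in the text."""
    # Assumption: data-governance constraints take priority over everything else.
    if task.compliance_sensitive:
        return Backend.SELF_HOSTED_OPEN
    # Latency-critical IDE features and internal tooling stay on open models.
    if task.latency_critical or task.internal_tooling:
        return Backend.SELF_HOSTED_OPEN
    # Everything else, such as complex multi-service refactoring, goes to a hosted model.
    return Backend.HOSTED_FRONTIER


if __name__ == "__main__":
    tasks = [
        Task("refactor billing and auth services"),
        Task("inline completion in the IDE", latency_critical=True),
        Task("review code in a regulated repository", compliance_sensitive=True),
    ]
    for task in tasks:
        print(f"{task.description} -> {choose_backend(task).value}")
```

In practice the attributes would come from repository metadata or request context, and the policy would likely name specific models rather than two broad classes.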