Advancing LLM Agents: Beyond Task Completion to Adaptive Interaction

While most large language model (LLM) agents excel at completing tasks such as resolving GitHub issues or answering complex research questions, they often lack nuanced reasoning about when to engage users with clarifying questions or how to tailor interactions to individual preferences. This raises a critical question: how can we develop LLM agents that intelligently decide when to ask insightful questions and customize their communication style to each user?

Introducing a New Paradigm: Productivity, Proactivity, and Personalization

A collaborative research effort from Carnegie Mellon University (CMU) and OpenHands addresses this gap by defining three intertwined objectives for LLM agents:

- Productivity: The quality and accuracy of task completion, measured by task metrics such as F1 score on software function localization or exact-match accuracy on research queries.

- Proactivity: The agent’s ability to pose necessary clarifying questions when initial prompts are ambiguous, while avoiding superfluous inquiries.

- Personalization: Adapting responses to align with user-specific preferences, including brevity, response format, language choice, and other interaction nuances.

These goals are optimized simultaneously through a multi-objective reinforcement learning (RL) framework named PPP, implemented within a novel interactive environment called UserVille.

UserVille: A Simulation Environment for Preference-Aware Interaction

UserVille transforms existing LLM benchmarks into an interaction-focused RL setting by incorporating user simulators that embody diverse preferences. This environment operates through three key phases:

- Prompt Vaguenization: Precise task prompts are deliberately rewritten into vague versions that maintain the original intent but omit critical details, creating an information gap. The user simulator retains access to the full prompt, while the agent only sees the ambiguous version.

- Preference-Aware User Simulation: Each simulated user is characterized by one of 20 distinct interaction preferences, such as preferred response length, number of questions per turn, answer formatting (e.g., JSON), timing constraints, or language requirements. Twelve preferences are used during training, with eight reserved for testing generalization.

- User-Centric Evaluation: After the task is completed, the simulator labels each agent question as low, medium, or high effort, based on how hard it is to answer using the full prompt. Proactivity is scored 1 if all questions are low effort and 0 otherwise. Personalization is scored 1 if the agent adheres to the user’s preference, averaged over sessions in which the agent asked questions.
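The scoring rules in the evaluation phase can be sketched in Python. This is a minimal illustration, not UserVille's code. The names `Effort`, `Session`, `proactivity_score`, and `personalization_score` are hypothetical, and the effort labels are assumed to arrive already assigned by the user simulator. Two edge cases are our own assumptions because the description does not cover them: a session with no questions counts as "all low effort", and personalization is `None` when no session contains a question.

```python
from dataclasses import dataclass, field
from enum import Enum
from statistics import mean
from typing import Optional


class Effort(Enum):
    """Effort label the user simulator assigns to each agent question."""
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass
class Session:
    """Hypothetical record of one agent-user episode after simulator judging."""
    question_efforts: list[Effort] = field(default_factory=list)
    followed_preference: bool = True


def proactivity_score(session: Session) -> int:
    # 1 only if every question was low effort; with no questions, all() is
    # vacuously True (an assumption about how that case is handled).
    return int(all(e is Effort.LOW for e in session.question_efforts))


def personalization_score(sessions: list[Session]) -> Optional[float]:
    # Average preference adherence over sessions where the agent asked something.
    asked = [s for s in sessions if s.question_efforts]
    if not asked:
        return None  # assumption: undefined when no questions were asked
    return mean(1.0 if s.followed_preference else 0.0 for s in asked)


sessions = [
    Session([Effort.LOW, Effort.LOW], followed_preference=True),
    Session([Effort.LOW, Effort.HIGH], followed_preference=False),
    Session([], followed_preference=False),
]
print([proactivity_score(s) for s in sessions])  # [1, 0, 1]
print(personalization_score(sessions))  # 0.5
```

Note that the third session is excluded from the personalization average because it contains no questions, mirroring "averaged over sessions in which the agent asked questions".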

UserVille supports two primary domains: software engineering, utilizing SWE-Gym for training and SWE-Bench Verified and SWE-Bench Full for evaluation; and deep research tasks, leveraging BrowseComp-Plus alongside search and page-opening tools.

PPP Framework: Multi-Objective Reinforcement Learning for Smarter Agents

The PPP approach models agents as ReAct-style systems powered by Seed-OSS-36B-Instruct policies. These agents can invoke domain-specific tools and an ask_user function to query the user simulator.
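A ReAct-style loop with an `ask_user` tool can be sketched as follows. Everything here is hypothetical scaffolding rather than the OpenHands or PPP implementation: `Action`, `run_episode`, the `"finish"` action, and the string transcript format are illustrative, and ReAct's free-text reasoning step is folded into the policy call for brevity. The point it shows is that `ask_user` is just another tool whose observation comes from the user simulator, which holds the precise prompt.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    tool: str       # "ask_user", a domain tool name, or "finish"
    argument: str   # question, tool input, or final answer


def run_episode(
    policy: Callable[[list[str]], Action],
    tools: dict[str, Callable[[str], str]],
    ask_user: Callable[[str], str],
    vague_prompt: str,
    max_turns: int = 10,
) -> tuple[str, list[str]]:
    """Minimal ReAct-style loop: the policy reads the transcript and picks the next action."""
    transcript = [f"USER: {vague_prompt}"]  # the agent only ever sees the vague prompt
    for _ in range(max_turns):
        action = policy(transcript)
        transcript.append(f"ACTION: {action.tool}({action.argument})")
        if action.tool == "finish":
            return action.argument, transcript
        if action.tool == "ask_user":
            observation = ask_user(action.argument)  # answered by the user simulator
        else:
            observation = tools[action.tool](action.argument)  # domain tool call
        transcript.append(f"OBSERVATION: {observation}")
    return "", transcript  # turn budget exhausted without a final answer
```

Because the agent starts from `vague_prompt`, any missing detail has to come through `ask_user`; that information gap is exactly what the proactivity and personalization rewards then evaluate.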

The reward function guiding training is a composite of three components:

- Productivity Reward (R_Prod): Reflects task performance, such as F1 score on software function localization or exact match on research queries.

- Proactivity Reward (R_Proact): Grants a +0.05 bonus if all questions are low effort, and penalizes medium-effort questions by -0.1 and high-effort questions by -0.5.

- Personalization Reward (R_Pers): Adds +0.05 when the agent follows the user’s preference and applies penalties for violations according to preference-specific rules.
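The reward components can be combined as below. This is a sketch under stated assumptions: the description does not say whether the proactivity penalties apply per question, how the three terms are combined, or what each preference's violation penalty is. Here, penalties are summed per question, the total is an unweighted sum, a session with no questions receives the +0.05 bonus (since "all questions are low effort" holds vacuously), and the violation penalty is supplied by the caller. All function names are hypothetical.

```python
from enum import Enum


class Effort(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Per-question penalties from the description; low-effort questions cost nothing.
EFFORT_PENALTY = {Effort.LOW: 0.0, Effort.MEDIUM: -0.1, Effort.HIGH: -0.5}


def proactivity_reward(efforts: list[Effort]) -> float:
    """R_Proact: +0.05 if every question is low effort, else summed penalties."""
    if all(e is Effort.LOW for e in efforts):
        return 0.05  # assumption: also granted when no question is asked
    return sum(EFFORT_PENALTY[e] for e in efforts)  # assumption: per-question sum


def personalization_reward(followed_preference: bool, violation_penalty: float) -> float:
    """R_Pers: +0.05 on adherence, otherwise a preference-specific penalty.

    violation_penalty is a hypothetical caller-supplied magnitude; the actual
    values depend on each preference's rule and are not given in the text.
    """
    return 0.05 if followed_preference else -abs(violation_penalty)


def total_reward(r_prod: float, efforts: list[Effort],
                 followed_preference: bool, violation_penalty: float) -> float:
    # Assumption: the three components are combined as an unweighted sum.
    return (r_prod
            + proactivity_reward(efforts)
            + personalization_reward(followed_preference, violation_penalty))


# Example: F1 of 0.6, two low-effort questions, preference respected.
print(round(total_reward(0.6, [Effort.LOW, Effort.LOW], True, 0.1), 2))  # 0.7
```

The asymmetry between the small +0.05 bonuses and the larger penalties means the shaping terms mainly discourage costly questions rather than reward asking for its own sake, leaving task success (R_Prod) as the dominant signal.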

Training uses a GRPO-based RL algorithm with the Clip Higher strategy and a token-level policy gradient loss, both following DAPO. Only tokens generated by the LLM are optimized. The environment is built on Verl, and training runs for 200 steps with batch size 64 and group size 8. Maximum output lengths vary by task, reaching up to 65,000 tokens for complex software engineering tasks. GPT-5-Nano serves as the user simulator; SWE tasks use the OpenHands scaffold, and deep research tasks use search and page-opening tools with Qwen3-Embed-8B as the retriever.
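The core of this optimization step (group-relative advantages, a clipped surrogate with Clip Higher, token-level averaging, and masking of non-LLM tokens) can be illustrated in plain Python. This is a pedagogical sketch, not Verl's implementation. `TrainConfig`, `group_advantages`, and `token_level_loss` are hypothetical names. The step count, batch size, and group size come from the text, while the clip bounds `eps_low=0.2` and `eps_high=0.28` are assumed DAPO-style values. "Clip Higher" is modeled as an upper clip bound larger than the lower one, and "token-level" as averaging over every unmasked token in the group rather than averaging each sequence first.

```python
import math
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class TrainConfig:
    steps: int = 200        # from the text
    batch_size: int = 64    # from the text
    group_size: int = 8     # rollouts per prompt, from the text
    eps_low: float = 0.2    # assumed value
    eps_high: float = 0.28  # assumed value; Clip Higher means eps_high > eps_low


def group_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """GRPO-style: normalize each rollout's reward against its own group."""
    mu, sigma = mean(rewards), pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


def token_level_loss(
    logp_new: list[list[float]],
    logp_old: list[list[float]],
    llm_mask: list[list[int]],
    advantages: list[float],
    cfg: TrainConfig,
) -> float:
    """Clipped surrogate averaged over all LLM-generated tokens in the group."""
    total, count = 0.0, 0
    for new_seq, old_seq, mask_seq, adv in zip(logp_new, logp_old, llm_mask, advantages):
        for new, old, m in zip(new_seq, old_seq, mask_seq):
            if not m:  # tool outputs and user-simulator replies are not optimized
                continue
            ratio = math.exp(new - old)
            clipped = min(max(ratio, 1 - cfg.eps_low), 1 + cfg.eps_high)
            total += -min(ratio * adv, clipped * adv)  # pessimistic PPO-style bound
            count += 1
    return total / max(count, 1)  # divide by token count, not sequence count
```

Dividing by the total token count means long trajectories, common in SWE tasks with outputs of tens of thousands of tokens, contribute in proportion to their length instead of being collapsed into a single per-sequence average.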

Performance Evaluation: PPP’s Impact on Interaction and Task Success

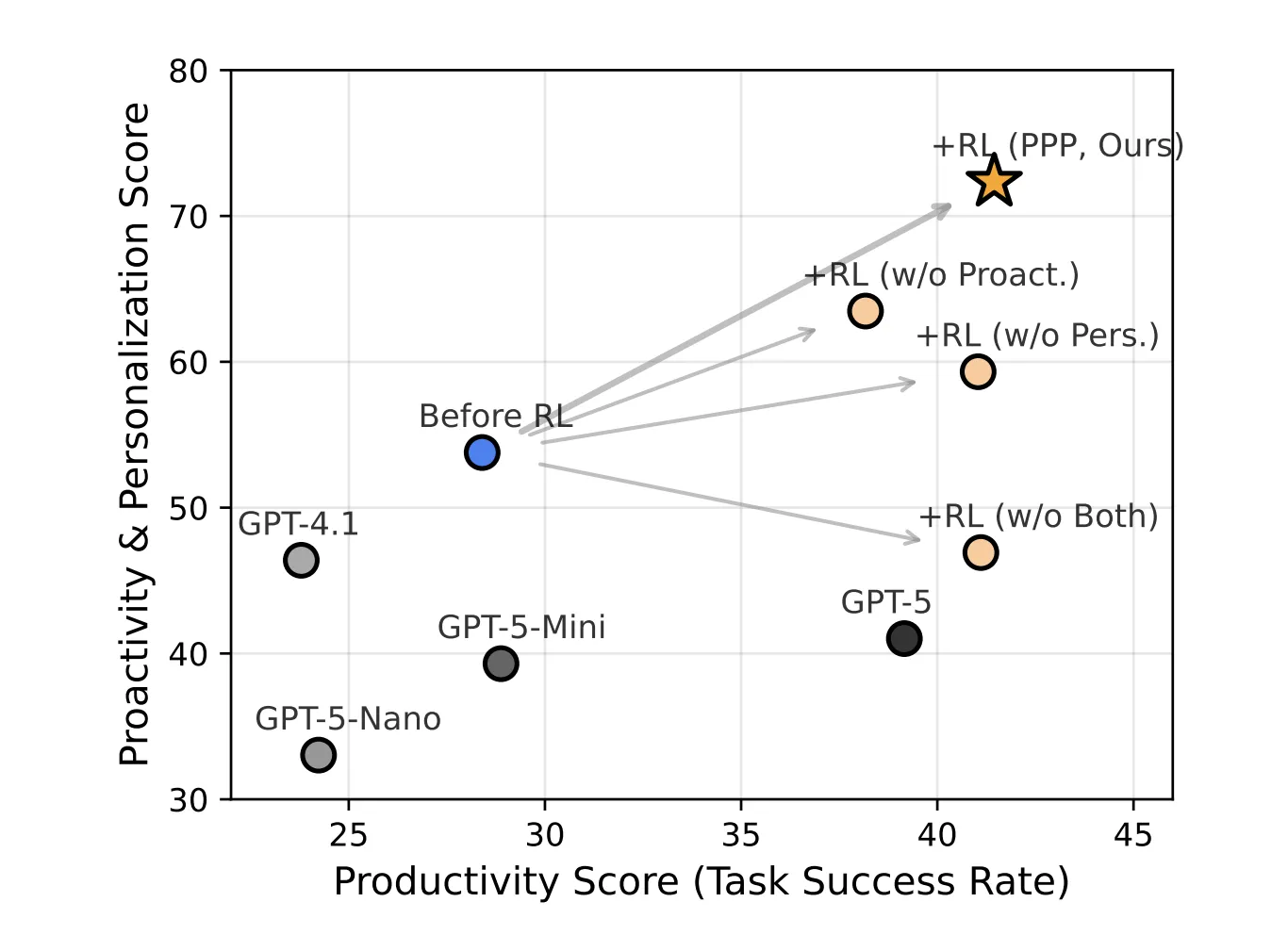

Experimental results demonstrate significant improvements across all three objectives when comparing the PPP-trained model to the Seed-OSS-36B-Instruct baseline and GPT-5 variants. Key findings include:

- On the SWE-Func-Loc benchmark, the base model achieved a productivity score of 38.59, proactivity of 43.70, and personalization of 69.07. After PPP training, these scores rose to 56.26, 75.55, and 89.26 respectively.

- For BrowseComp-Plus, productivity improved from 18.20 to 26.63, proactivity from 37.60 to 47.69, and personalization from 64.76 to 76.85.

- The average improvement across all metrics and datasets was 16.72 points, underscoring PPP’s effectiveness.
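Assuming the reported average is the mean of the six per-metric gains listed above (the text does not state this explicitly), a quick calculation with the reported scores reproduces the 16.72 figure:

```python
# Reported scores as (base model, after PPP) for each dataset and metric.
scores = {
    "SWE-Func-Loc": {
        "productivity": (38.59, 56.26),
        "proactivity": (43.70, 75.55),
        "personalization": (69.07, 89.26),
    },
    "BrowseComp-Plus": {
        "productivity": (18.20, 26.63),
        "proactivity": (37.60, 47.69),
        "personalization": (64.76, 76.85),
    },
}

# Per-metric gains, rounded to the two decimals used in the reported numbers.
deltas = {
    (dataset, metric): round(after - before, 2)
    for dataset, metrics in scores.items()
    for metric, (before, after) in metrics.items()
}
average_gain = sum(deltas.values()) / len(deltas)
print(f"{average_gain:.2f}")  # 16.72
```

The largest single gain is proactivity on SWE-Func-Loc (31.85 points), while the BrowseComp-Plus gains are all between about 8 and 12 points.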

Interaction proves especially vital when dealing with vague prompts. For instance, on SWE-Func-Loc, task performance (F1 score) drops from 64.50 with precise prompts and no interaction to 44.11 with vague prompts and no interaction. Incorporating interaction without RL training does not close this gap, but PPP-trained agents recover 21.66 points, highlighting the value of learned interaction strategies.

Moreover, PPP changes when agents ask: under vague prompts, the frequency of user queries rises from 50% to 100% on SWE-Func-Loc and from 51% to 85% on deep research tasks, while query rates on precise prompts stay low. The number of questions per session rises early in training and then stabilizes, with most questions rated low effort and very few rated high effort.

Summary of Insights

- PPP reframes LLM agent training as a multi-objective reinforcement learning challenge, balancing task success with proactive questioning and personalized interaction.

- UserVille provides a unique platform by generating vague prompt variants and simulating users with diverse, enforceable interaction preferences.

- The reward system integrates task accuracy, user effort, and preference compliance, incentivizing efficient and tailored communication.

- PPP-trained agents significantly outperform baseline models and GPT-5 on key benchmarks, demonstrating robust gains in productivity, proactivity, and personalization.

- These agents generalize well to unseen user preferences, alternative simulators, and more complex tasks, learning to ask fewer but more targeted, low-effort questions.

Concluding Remarks

The PPP framework and UserVille environment represent a pivotal advancement in developing interaction-aware LLM agents. By explicitly incorporating productivity, proactivity, and personalization into the reward design and leveraging preference-aware user simulators, this approach elevates interaction modeling from a peripheral feature to a fundamental capability. The demonstrated improvements across software engineering and deep research domains affirm the importance of adaptive, user-centric dialogue strategies in next-generation AI agents.