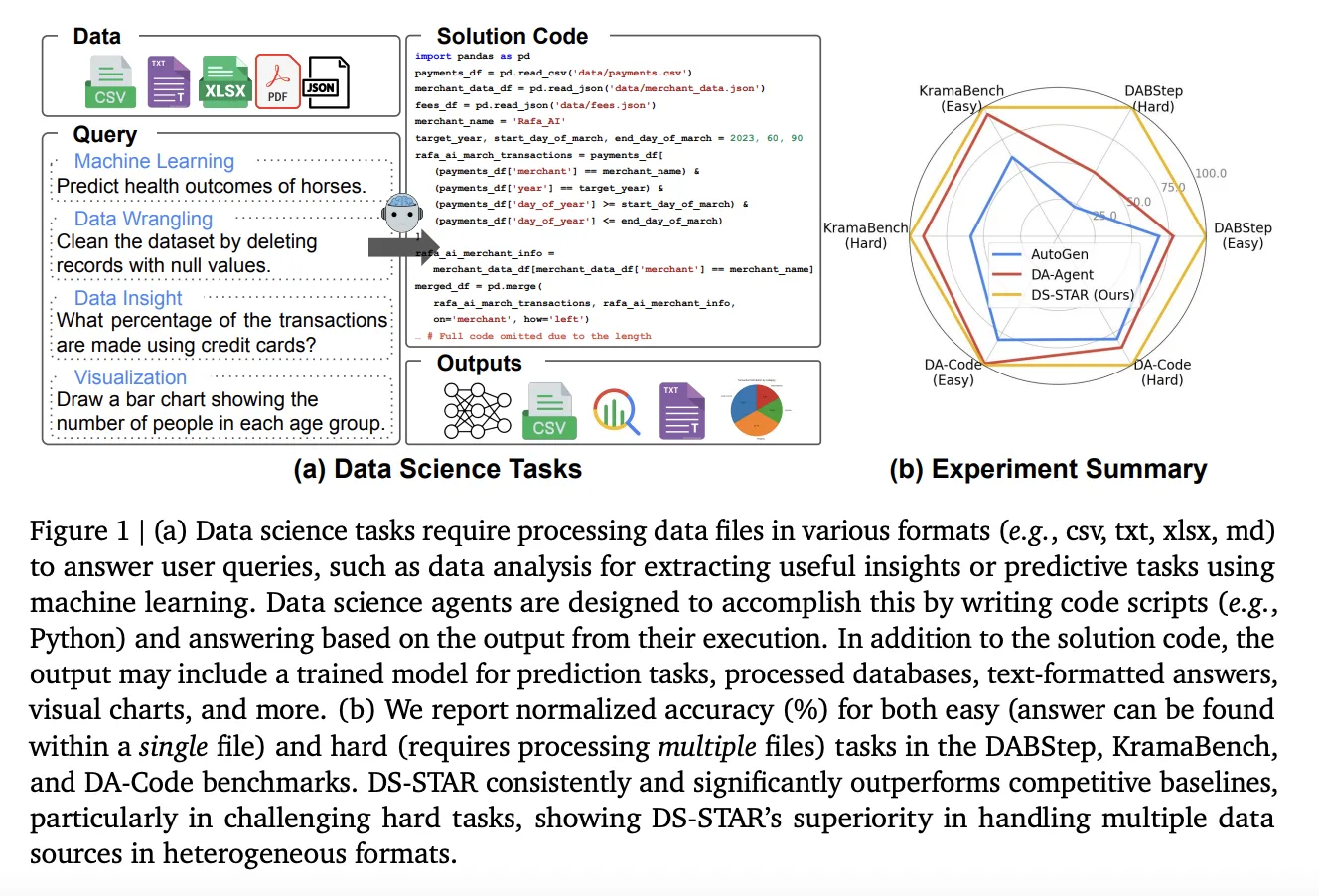

How can ambiguous business questions over a disorganized collection of CSV, JSON, and text files be turned into dependable Python code without human intervention? Google researchers introduce DS STAR (Data Science Agent via Iterative Planning and Verification), a multi-agent system that converts open-ended data science questions into executable Python scripts over heterogeneous files. Rather than assuming a clean SQL database and a single query, DS STAR follows a Text to Python paradigm and works directly on mixed sources such as CSV, JSON, Markdown, and unstructured text.

Revolutionizing Data Science: From Text Queries to Python Scripts Across Mixed Data

Most existing data science agents focus on translating natural language queries into SQL commands targeting structured relational databases. This approach, however, falls short in real-world enterprise settings where data is scattered across various formats including documents, spreadsheets, and log files.

DS STAR reframes the problem: it generates Python code that loads, combines, and analyzes whatever files the task provides. It first summarizes every file to capture its structure and contents, then uses those summaries to build a multi-step plan for answering the query. This design lets DS STAR handle benchmarks such as DABStep, KramaBench, and DA Code, all of which require multi-stage analysis over mixed file types and answers in a strict output format.

Phase One: Comprehensive Data File Profiling with Aanalyzer

The first phase involves constructing a detailed map of the data repository. For every file (denoted as Di), the Aanalyzer agent crafts a Python script (si_desc) that parses the file to extract vital information such as column headers, data types, metadata, and textual summaries. Executing this script yields a concise description (di) that captures the essence of the file’s structure and content.

This method works for both structured and unstructured data: for CSV files the description contains column-level statistics and sample rows, while for JSON and text files it contains a structural outline and key excerpts. The collection of these descriptions {di} forms a shared knowledge base that guides the subsequent agents in the pipeline.
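The profiling step can be sketched with the standard library alone. In DS STAR the description script is written by the Aanalyzer agent for each file; the hand-written `describe_file` and `describe_all` helpers below are hypothetical stand-ins that only illustrate what such a description might contain.

```python
import csv
import json
from pathlib import Path


def describe_file(path, sample_rows=3, excerpt_chars=200):
    """Return a short plain-text description of one data file (illustrative only)."""
    path = Path(path)
    suffix = path.suffix.lower()
    lines = [f"file: {path.name}"]
    if suffix == ".csv":
        # Structured data: report the header, the row count, and a few sample rows.
        with path.open(newline="", encoding="utf-8") as handle:
            rows = list(csv.reader(handle))
        header, body = (rows[0], rows[1:]) if rows else ([], [])
        lines.append(f"columns: {', '.join(header)}")
        lines.append(f"row_count: {len(body)}")
        for row in body[:sample_rows]:
            lines.append(f"sample: {row}")
    elif suffix == ".json":
        # Semi-structured data: report a structural outline of the top level.
        data = json.loads(path.read_text(encoding="utf-8"))
        if isinstance(data, dict):
            lines.append(f"top_level_keys: {sorted(data)}")
        elif isinstance(data, list):
            lines.append(f"list_length: {len(data)}")
        else:
            lines.append(f"scalar_type: {type(data).__name__}")
    else:
        # Unstructured text (Markdown, logs, notes): report size and an excerpt.
        text = path.read_text(encoding="utf-8", errors="replace")
        lines.append(f"characters: {len(text)}")
        lines.append(f"excerpt: {text[:excerpt_chars]!r}")
    return "\n".join(lines)


def describe_all(paths):
    """Build the shared map of file name to description."""
    return {Path(p).name: describe_file(p) for p in paths}
```

Calling `describe_all` on every file in a directory yields a dictionary that later steps could consult, for example to check which file actually contains a given column.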

Phase Two: Iterative Strategy Development, Coding, and Validation

Following the initial data profiling, DS STAR embarks on an iterative cycle that mimics the workflow of a human data scientist working in a notebook environment:

- Aplanner devises an initial plan (p0) from the user query and the file summaries, starting with a simple executable step such as loading the relevant datasets.

- Acoder translates the current plan (p) into Python code (s), which DS STAR then runs to obtain results (r).

- Averifier, an LLM-powered evaluator, assesses the combined plan, query, code, and execution output, issuing a binary verdict: sufficient or insufficient.

- If the plan is deemed insufficient, Arouter determines the next move: either appending a new action, signaled by the token Add Step, or identifying a flawed step to remove and regenerate.

Aplanner conditions each new step on the most recent execution result (r_{t-1}), so every iteration responds to what the previous one actually produced. This loop of routing, planning, coding, executing, and verifying continues until the solution passes verification or reaches the maximum of 20 refinement rounds.
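The control flow of this loop can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the `Agents` container and its callables are hypothetical placeholders for the LLM-backed agents, and the choice to discard every step after a flawed one is an assumption about how removal works.

```python
from dataclasses import dataclass
from typing import Callable, List, Union

MAX_ROUNDS = 20  # refinement cap stated in the text


@dataclass
class Agents:
    """Hypothetical callables standing in for the LLM-backed agents."""
    plan_step: Callable[[str, List[str], str], str]      # (query, plan, last_result) -> next step
    write_code: Callable[[List[str]], str]               # plan -> Python script
    execute: Callable[[str], str]                        # script -> execution result
    verify: Callable[[str, List[str], str, str], bool]   # True means "sufficient"
    route: Callable[[str, List[str], str], Union[str, int]]  # "Add Step" or index of flawed step


def solve(query, agents, max_rounds=MAX_ROUNDS):
    """Plan, code, execute, verify, and refine until sufficient or out of rounds."""
    plan = [agents.plan_step(query, [], "")]
    code = agents.write_code(plan)
    result = agents.execute(code)
    for _ in range(max_rounds):
        if agents.verify(query, plan, code, result):
            return plan, code, result, True
        decision = agents.route(query, plan, result)
        if isinstance(decision, int):
            # Assumption: drop the flawed step and everything built on top of it.
            plan = plan[:decision]
        # In both cases the planner proposes the next step from the latest result.
        plan.append(agents.plan_step(query, plan, result))
        code = agents.write_code(plan)
        result = agents.execute(code)
    # Out of rounds: return the last attempt together with its verdict.
    return plan, code, result, agents.verify(query, plan, code, result)
```

Keeping the agents behind plain callables makes the loop testable with stubs before any model is attached.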

To meet stringent benchmark output requirements, a dedicated Afinalyzer agent finalizes the solution code, enforcing formatting rules such as rounding precision and CSV output compliance.
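The kind of rule the finalization step enforces can be illustrated with a small formatter. In DS STAR this is done by an LLM agent that rewrites the solution code; the `format_number` and `to_csv_text` helpers and the two-decimal default below are assumptions chosen only for illustration.

```python
import csv
import io


def format_number(value, decimals=2):
    """Render a number with a fixed count of decimal places."""
    return f"{value:.{decimals}f}"


def to_csv_text(header, rows, decimals=2):
    """Serialize rows as CSV text, applying fixed precision to float cells only."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(header)
    for row in rows:
        writer.writerow(
            [format_number(cell, decimals) if isinstance(cell, float) else cell
             for cell in row]
        )
    return buffer.getvalue()
```

For example, `to_csv_text(["region", "share"], [["EU", 0.5]])` produces a header line followed by `EU,0.50`, which is the sort of exact formatting an automatic grader may require.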

Enhancing Reliability: Debugging and Intelligent File Retrieval

In practice, data pipelines often fail because of schema changes or missing columns. DS STAR incorporates Adebugger, a module that repairs failing scripts using three inputs: the faulty code, the error traceback, and the detailed file descriptions {di}. The file descriptions matter because many data bugs can only be fixed with schema knowledge, such as the actual column names or sheet structure, which the error message alone does not reveal.
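A minimal sketch of how those three inputs might be gathered before a repair request is shown below. The `run_script` and `build_repair_prompt` helpers and the prompt wording are hypothetical; the source does not specify the actual prompt or execution mechanism.

```python
import traceback


def run_script(source):
    """Execute a script string and return (succeeded, traceback_text)."""
    try:
        exec(compile(source, "<generated>", "exec"), {"__name__": "__generated__"})
        return True, ""
    except Exception:
        return False, traceback.format_exc()


def build_repair_prompt(source, error_text, descriptions):
    """Combine the faulty code, the traceback, and the file descriptions."""
    described = "\n\n".join(
        f"[{name}]\n{text}" for name, text in sorted(descriptions.items())
    )
    return (
        "The script below failed. Fix it using the file descriptions.\n\n"
        f"### Script\n{source}\n\n"
        f"### Traceback\n{error_text}\n\n"
        f"### File descriptions\n{described}\n"
    )
```

With the descriptions included, a `KeyError` on a misspelled column name can be matched against the real header instead of being guessed from the error message.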

Large domain-specific data lakes, such as those in KramaBench, can contain thousands of files, far more than fit in the agent's context. For these cases DS STAR adds a Retriever component that uses a pretrained embedding model to encode both the user query and the file descriptions, then keeps the top 100 most similar files. The research team used Gemini Embedding 001 for this similarity search.
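The retrieval step amounts to ranking file descriptions by similarity to the query and keeping the first k. The sketch below uses a toy bag-of-words vector in place of a real embedding model, so `toy_embed` is an explicit stand-in and not the Gemini embedding API; only the default `k=100` comes from the text.

```python
import math
from collections import Counter


def toy_embed(text):
    """Stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def top_k_files(query, descriptions, k=100, embed=toy_embed):
    """Return up to k file names, most similar description first."""
    query_vec = embed(query)
    scored = [(cosine(query_vec, embed(text)), name)
              for name, text in descriptions.items()]
    # Sort by descending similarity, breaking ties by file name for determinism.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored[:k]]
```

Swapping `toy_embed` for a function that calls a real embedding model would leave the ranking logic unchanged, provided `cosine` is adapted to dense vectors.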

Performance Highlights on Leading Benchmarks

All primary evaluations of DS STAR utilized Gemini 2.5 Pro as the foundational large language model, allowing up to 20 refinement iterations per task.

On the DABStep benchmark, Gemini 2.5 Pro alone achieved 12.70% accuracy on hard tasks. DS STAR raised this to 45.24% on hard tasks and reached 87.50% on easy ones, an improvement of more than 32 percentage points on the hard subset. This performance surpasses other prominent agents such as ReAct, AutoGen, Data Interpreter, DA Agent, and various commercial solutions listed on public leaderboards.

Compared with the best alternative system on each dataset, DS STAR raises accuracy from 41.0% to 45.2% on DABStep, from 39.8% to 44.7% on KramaBench, and from 37.0% to 38.5% on DA Code.

Specifically, for KramaBench, which demands effective retrieval from extensive data lakes, DS STAR combined with Gemini 2.5 Pro achieved a normalized score of 44.69, surpassing the leading baseline DA Agent’s 39.79.

On DA Code, DS STAR again outperformed DA Agent, reaching 37.1% accuracy on difficult tasks compared to DA Agent’s 32.0%, both using Gemini 2.5 Pro.
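The reported figures can be converted into percentage-point and relative gains with a few lines of arithmetic. The numbers below are copied from the text; the dictionary labels and the `gain` helper are illustrative names.

```python
# (baseline, DS STAR) pairs as reported above.
reported = {
    "DABStep hard, vs. Gemini 2.5 Pro alone": (12.70, 45.24),
    "KramaBench, vs. DA Agent": (39.79, 44.69),
    "DA Code hard, vs. DA Agent": (32.0, 37.1),
}


def gain(baseline, system):
    """Return (absolute gain in points, relative gain in percent)."""
    points = round(system - baseline, 2)
    relative = round((system - baseline) / baseline * 100, 1)
    return points, relative


for label, (baseline, system) in reported.items():
    points, relative = gain(baseline, system)
    print(f"{label}: +{points} points ({relative}% relative)")
```

The absolute gains are 32.54, 4.9, and 5.1 points respectively, which shows that the largest jump is the comparison against the bare model rather than against another agent.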

Essential Insights and Implications

- DS STAR reimagines data science automation by shifting from Text to SQL on structured tables to a versatile Text to Python approach that handles diverse file types including CSV, JSON, Markdown, and unstructured text.

- The architecture employs a collaborative multi-agent loop (Aanalyzer, Aplanner, Acoder, Averifier, Arouter, and Afinalyzer) that iteratively plans, executes, and validates Python code until the solution meets quality standards.

- Robustness is enhanced through Adebugger, which repairs faulty scripts using comprehensive schema context, and Retriever, which efficiently narrows down relevant files from large datasets.

- Leveraging Gemini 2.5 Pro and up to 20 refinement cycles, DS STAR achieves substantial accuracy improvements across major benchmarks, notably increasing DABStep’s hard task accuracy from 12.70% to 45.24%.

- Experimental ablations confirm the critical role of detailed file analysis and dynamic routing, while tests with GPT-5 demonstrate the system’s model-agnostic design and the necessity of iterative refinement for tackling complex, multi-step analytical challenges.

Concluding Thoughts

DS STAR shows that effective data science automation requires structured orchestration around large language models rather than prompt engineering alone. By combining agents like Aanalyzer, Averifier, Arouter, and Adebugger, DS STAR turns analysis over unstructured data lakes into a controlled, iterative Text to Python workflow. The gains are measurable on DABStep, KramaBench, and DA Code, and the design carries over to different LLMs, including Gemini 2.5 Pro and GPT-5. This is a significant step toward practical, end-to-end automated analytics that goes beyond simple table-based demonstrations.