Building an Advanced Speech Enhancement and Recognition Pipeline with SpeechBrain

This guide demonstrates a sophisticated yet accessible speech-processing workflow built with SpeechBrain. We synthesize clean speech samples with gTTS, add noise to mimic real-world acoustic conditions, and then apply SpeechBrain’s pretrained MetricGAN+ model to enhance the audio. Next, we transcribe the audio with a CRDNN speech recognizer that decodes with an RNN language model, and we compare word error rates (WER) before and after denoising. Step by step, the tutorial shows how SpeechBrain’s pretrained models let us assemble a complete speech enhancement and recognition pipeline with relatively little code.

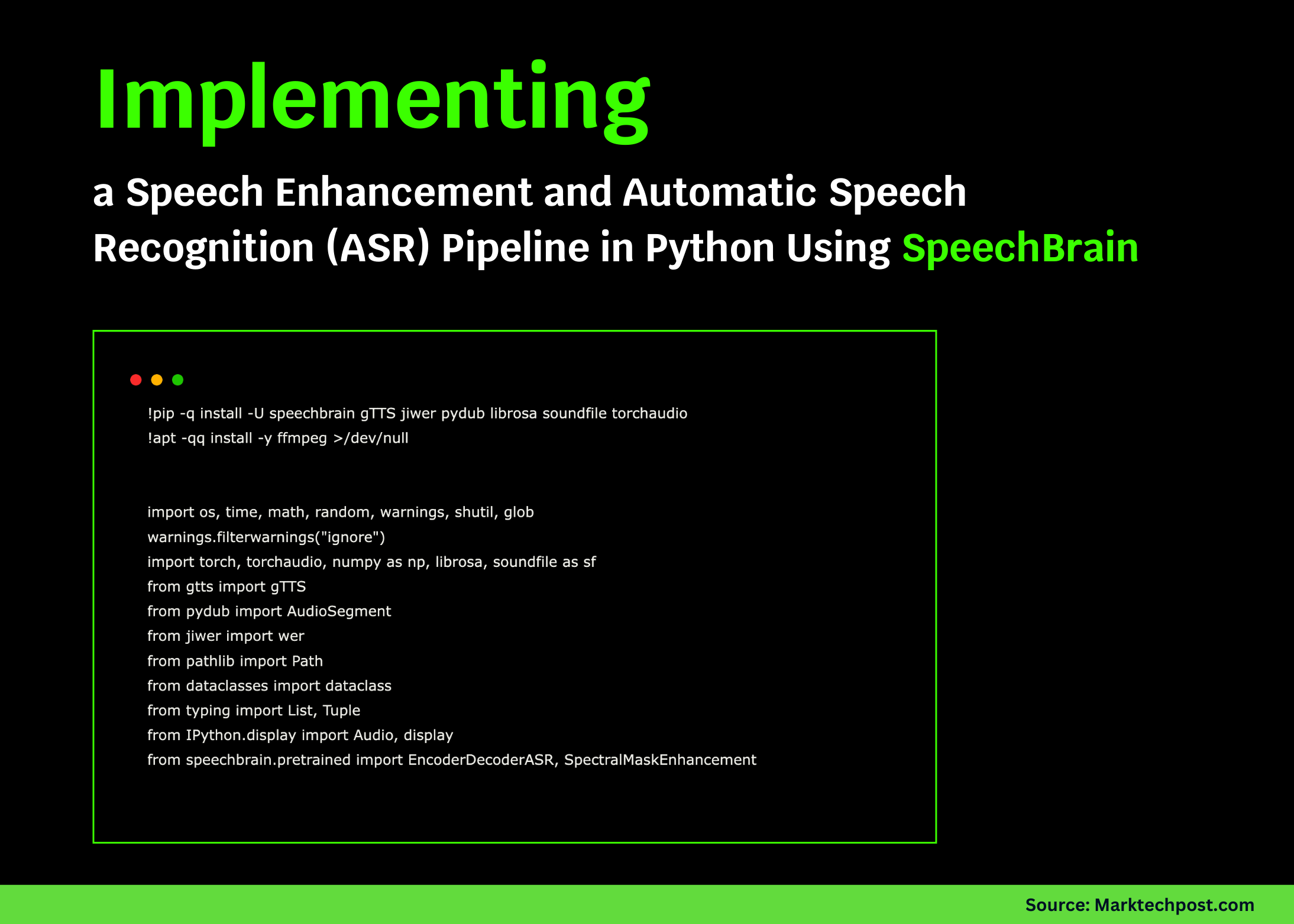

Setting Up the Environment and Dependencies

First, we prepare the environment by installing SpeechBrain, gTTS, jiwer, and the audio tools the pipeline needs (pydub, librosa, soundfile, torchaudio, and ffmpeg for MP3 decoding). We then create a working directory, fix the sample rate at 16 kHz, and select the GPU if one is available, falling back to the CPU otherwise.

```python
!pip install -q -U speechbrain gTTS jiwer pydub librosa soundfile torchaudio
!apt-get -qq install -y ffmpeg >/dev/null

import os
import time
import warnings
from dataclasses import dataclass
from pathlib import Path
from typing import List, Tuple

import librosa
import numpy as np
import soundfile as sf
import torch
import torchaudio
from gtts import gTTS
from IPython.display import Audio, display
from jiwer import wer
from pydub import AudioSegment
from speechbrain.pretrained import EncoderDecoderASR, SpectralMaskEnhancement

warnings.filterwarnings("ignore")

# Working directory for generated audio and downloaded models
root = Path("speechbraindemo")
root.mkdir(exist_ok=True)

samplerate = 16000  # both pretrained models expect 16 kHz audio
device = "cuda" if torch.cuda.is_available() else "cpu"
```

Utility Functions for Speech Synthesis, Noise Injection, and Playback

We define a few helper functions to streamline the workflow. The ttstowav function synthesizes speech with gTTS, which produces an MP3 file, and converts it to a mono 16 kHz WAV file. The addnoise function adds white Gaussian noise scaled to a target signal-to-noise ratio (SNR), given in decibels, to simulate challenging listening conditions. The playaudio function plays a file inline in the notebook, and normalizetext lowercases text and replaces punctuation with spaces so that WER is computed on words alone. Finally, a Sample dataclass stores each utterance’s reference text together with its clean, noisy, and enhanced file paths.

```python
def ttstowav(text: str, outputwav: str, lang="en"):
    # gTTS writes MP3; convert it to a mono WAV at the pipeline's sample rate
    tempmp3 = outputwav.replace(".wav", ".mp3")
    gTTS(text=text, lang=lang).save(tempmp3)
    audio = AudioSegment.from_file(tempmp3, format="mp3").set_channels(1).set_frame_rate(samplerate)
    audio.export(outputwav, format="wav")
    os.remove(tempmp3)


def addnoise(inputwav: str, snrdb: float, outputwav: str):
    # Add white Gaussian noise so that rms(signal) / rms(noise) == 10 ** (snrdb / 20)
    y, _ = librosa.load(inputwav, sr=samplerate, mono=True)
    rmssignal = np.sqrt(np.mean(y ** 2) + 1e-12)
    noise = np.random.normal(0, 1, len(y))
    noise = noise / np.sqrt(np.mean(noise ** 2) + 1e-12)  # normalize to unit RMS
    targetnoiserms = rmssignal / (10 ** (snrdb / 20))
    noisysignal = np.clip(y + noise * targetnoiserms, -1.0, 1.0)  # keep within [-1, 1]
    sf.write(outputwav, noisysignal, samplerate)


def playaudio(title: str, filepath: str):
    print(f"▶️ {title}: {filepath}")
    display(Audio(filepath, rate=samplerate))


def normalizetext(text: str) -> str:
    # Lowercase, turn punctuation into spaces, and collapse repeated whitespace
    return " ".join("".join(ch.lower() if ch.isalnum() or ch.isspace() else " " for ch in text).split())


@dataclass
class Sample:
    text: str
    cleanwav: str
    noisywav: str
    enhancedwav: str
```
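To check the SNR arithmetic in addnoise without loading any audio, here is a minimal pure-Python sketch of the same scaling. It assumes a synthetic sine tone as a hypothetical stand-in for speech and skips the np.clip step, so it reports the SNR before any clipping; the helper names (rms, add_noise_at_snr, measured_snr_db) are illustrative and not part of the tutorial.

```python
import math
import random


def rms(x):
    # Root-mean-square amplitude of a sequence of samples
    return math.sqrt(sum(v * v for v in x) / len(x))


def add_noise_at_snr(signal, snr_db, seed=0):
    # Same scaling idea as addnoise: draw Gaussian noise, normalize it to unit RMS,
    # then rescale it to rms(signal) / 10 ** (snr_db / 20). Clipping is omitted here.
    rng = random.Random(seed)
    noise = [rng.gauss(0.0, 1.0) for _ in signal]
    unit = rms(noise)
    target_noise_rms = rms(signal) / (10 ** (snr_db / 20))
    scaled = [n / unit * target_noise_rms for n in noise]
    noisy = [s + n for s, n in zip(signal, scaled)]
    return noisy, scaled


def measured_snr_db(signal, noise):
    # SNR in dB from RMS amplitudes: 20 * log10(rms_signal / rms_noise)
    return 20 * math.log10(rms(signal) / rms(noise))


# Hypothetical stand-in for speech: one second of a 440 Hz tone at 16 kHz
sr = 16000
tone = [0.3 * math.sin(2 * math.pi * 440 * t / sr) for t in range(sr)]

for snr in (5.0, 0.0):
    noisy, noise = add_noise_at_snr(tone, snr)
    print(f"target {snr:.1f} dB: measured {measured_snr_db(tone, noise):.2f} dB, "
          f"noise RMS / signal RMS = {rms(noise) / rms(tone):.3f}")
```

At 5 dB the noise RMS is about 0.56 times the signal RMS (10^(-5/20)); at 0 dB the two are equal. In the real addnoise, clipping to [-1, 1] can shift the effective SNR slightly whenever the noisy waveform exceeds full scale.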

Generating Speech Samples and Introducing Noise

We synthesize clean speech for three example sentences and create a noisy copy of each: odd-numbered samples are mixed at 5 dB SNR and even-numbered samples at a harsher 0 dB SNR, where the noise is as loud as the speech. Each utterance’s text and file paths are stored in a Sample object for use throughout the pipeline.

```python
sentences = [
    "Machine learning is revolutionizing technology.",
    "Open-source frameworks accelerate innovation worldwide.",
    "SpeechBrain simplifies building speech applications in Python.",
]

samples: List[Sample] = []
print("🗣️ Generating speech samples with gTTS...")
for idx, sentence in enumerate(sentences, 1):
    cleanpath = str(root / f"clean{idx}.wav")
    noisypath = str(root / f"noisy{idx}.wav")
    enhancedpath = str(root / f"enhanced{idx}.wav")
    ttstowav(sentence, cleanpath)
    # Every sample gets noise: 5 dB SNR for odd-numbered samples, 0 dB (noisier) for even-numbered ones
    noiselevel = 5.0 if idx % 2 == 1 else 0.0
    addnoise(cleanpath, snrdb=noiselevel, outputwav=noisypath)
    samples.append(Sample(text=sentence, cleanwav=cleanpath, noisywav=noisypath, enhancedwav=enhancedpath))

playaudio("Clean Sample #1", samples[0].cleanwav)
playaudio("Noisy Sample #1", samples[0].noisywav)
```

Loading Pretrained Models for Enhancement and Recognition

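We load two pretrained SpeechBrain models from the Hugging Face Hub: MetricGAN+ trained on VoiceBank (speechbrain/metricgan-plus-voicebank) for speech enhancement, and a CRDNN ASR model trained on LibriSpeech whose decoding is combined with an RNN language model (speechbrain/asr-crdnn-rnnlm-librispeech). The first model denoises the audio and the second transcribes it; both are cached under the demo directory after the first download.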

```python
print("⬇️ Downloading pretrained models...")

# CRDNN encoder-decoder ASR trained on LibriSpeech, decoded with an RNN language model
asrmodel = EncoderDecoderASR.from_hparams(
    source="speechbrain/asr-crdnn-rnnlm-librispeech",
    run_opts={"device": device},
    savedir=str(root / "pretrainedasr"),
)

# MetricGAN+ spectral-mask enhancement model trained on VoiceBank
enhancementmodel = SpectralMaskEnhancement.from_hparams(
    source="speechbrain/metricgan-plus-voicebank",
    run_opts={"device": device},
    savedir=str(root / "pretrainedenhancement"),
)
```

Enhancing Audio and Evaluating Recognition Performance

We define functions that enhance a noisy file, transcribe a WAV file, and compute WER by comparing the normalized transcription with the normalized reference text. For each sample we enhance the noisy audio, transcribe both the noisy and the enhanced versions, and measure the total time taken by the whole loop.

```python
def enhanceaudio(inputwav: str, outputwav: str):
    # enhance_file returns the enhanced waveform; torchaudio.save expects [channels, time]
    enhancedsignal = enhancementmodel.enhance_file(inputwav)
    if enhancedsignal.dim() == 1:
        enhancedsignal = enhancedsignal.unsqueeze(0)
    torchaudio.save(outputwav, enhancedsignal.cpu(), samplerate)


def transcribeaudio(wavpath: str) -> str:
    transcription = asrmodel.transcribe_file(wavpath)
    return normalizetext(transcription)


def evaluatetranscription(reference: str, wavpath: str) -> Tuple[str, float]:
    # Return the normalized hypothesis and its WER against the normalized reference
    hypothesis = transcribeaudio(wavpath)
    errorrate = wer(normalizetext(reference), hypothesis)
    return hypothesis, errorrate


print("🔬 Comparing ASR results on noisy vs enhanced audio...")
results = []
starttime = time.time()
for sample in samples:
    enhanceaudio(sample.noisywav, sample.enhancedwav)
    hypnoisy, wernoisy = evaluatetranscription(sample.text, sample.noisywav)
    hypenhanced, werenhanced = evaluatetranscription(sample.text, sample.enhancedwav)
    results.append((sample.text, hypnoisy, wernoisy, hypenhanced, werenhanced))
endtime = time.time()
```
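jiwer’s `wer` counts the word substitutions (S), deletions (D), and insertions (I) needed to turn the reference into the hypothesis and divides by the number of reference words N, so WER = (S + D + I) / N. The following pure-Python sketch reproduces that definition with a word-level edit distance so the pipeline’s numbers can be sanity-checked by hand; it is an illustration rather than jiwer’s implementation, and the hypothesis strings are made up. It also contrasts a mean of per-utterance WERs, as in the summary at the end of this tutorial, with a pooled corpus WER that weights long utterances more heavily.

```python
def normalize(text: str) -> str:
    # Same rule as normalizetext: lowercase, punctuation -> spaces, collapse whitespace
    return " ".join("".join(ch.lower() if ch.isalnum() or ch.isspace() else " " for ch in text).split())


def word_edit_distance(ref_words, hyp_words) -> int:
    # Minimum substitutions + deletions + insertions turning ref_words into hyp_words
    prev = list(range(len(hyp_words) + 1))
    for i, r in enumerate(ref_words, 1):
        curr = [i] + [0] * len(hyp_words)
        for j, h in enumerate(hyp_words, 1):
            curr[j] = min(
                prev[j] + 1,             # deletion: reference word missing from hyp
                curr[j - 1] + 1,         # insertion: extra word in hyp
                prev[j - 1] + (r != h),  # substitution (or free match)
            )
        prev = curr
    return prev[-1]


def utterance_wer(reference: str, hypothesis: str) -> float:
    ref, hyp = normalize(reference).split(), normalize(hypothesis).split()
    return word_edit_distance(ref, hyp) / len(ref)


def corpus_wer(pairs) -> float:
    # Pool errors and reference words over all utterances before dividing
    errors = sum(word_edit_distance(normalize(r).split(), normalize(h).split()) for r, h in pairs)
    words = sum(len(normalize(r).split()) for r, _ in pairs)
    return errors / words


ref = "Open-source frameworks accelerate innovation worldwide."
hyp = "OPEN SOURCE FRAMEWORK ACCELERATE INNOVATION WORLD WIDE"  # hypothetical ASR output
print(normalize(ref))           # open source frameworks accelerate innovation worldwide
print(utterance_wer(ref, hyp))  # 0.5 (2 substitutions + 1 insertion over 6 words)

# Per-utterance mean vs. pooled WER on two made-up utterances of different lengths
pairs = [
    ("hello world", "yellow world"),
    ("the quick brown fox jumps over the lazy dog", "the quick brown fox jumps over the lazy dog"),
]
mean_wer = sum(utterance_wer(r, h) for r, h in pairs) / len(pairs)
print(f"mean of per-utterance WER: {mean_wer:.3f}")     # 0.250
print(f"pooled (corpus) WER: {corpus_wer(pairs):.3f}")  # 0.091
```

Because insertions are counted, WER can exceed 1.0 when a hypothesis contains many extra words.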

Presenting Results and Insights

We print each utterance’s reference, transcriptions, and WER before and after enhancement, along with the total processing time. We then transcribe every clean and noisy file in a single loop, which gives a clean-audio baseline and a timing figure; note that this loop decodes one file at a time rather than as a true batch. Finally, we play the first enhanced sample to judge the enhancement by ear and report the average WER for each condition.

```python
def formatfloat(value):
    return f"{value:.3f}" if isinstance(value, float) else value


print(f"⏱️ Total inference time: {endtime - starttime:.2f} seconds on {device.upper()}")
print("\n# --- Recognition Results (Noisy → Enhanced) ---")
for idx, (ref, noisyhyp, noisywer, enhhyp, enhwer) in enumerate(results, 1):
    print(f"\nUtterance {idx}")
    print("Reference:     ", ref)
    print("Noisy ASR:     ", noisyhyp)
    print("WER (Noisy):   ", formatfloat(noisywer))
    print("Enhanced ASR:  ", enhhyp)
    print("WER (Enhanced):", formatfloat(enhwer))

# Decode clean and noisy files one after another (one file per call, not a batched forward pass)
print("\n🔄 Decoding clean and noisy samples:")
batchfiles = [s.cleanwav for s in samples] + [s.noisywav for s in samples]
batchstart = time.time()
batchtranscriptions = [transcribeaudio(f) for f in batchfiles]
batchend = time.time()
for filepath, transcription in zip(batchfiles, batchtranscriptions):
    print(f"{os.path.basename(filepath)} → {transcription[:80]}{'...' if len(transcription) > 80 else ''}")
print(f"⏳ Decoding time: {batchend - batchstart:.2f} seconds")

playaudio("Enhanced Sample #1 (MetricGAN+)", samples[0].enhancedwav)

# Mean of per-utterance WERs (each utterance weighted equally)
avgwernoisy = sum(r[2] for r in results) / len(results)
avgwerenhanced = sum(r[4] for r in results) / len(results)
print("\n📊 Summary:")
print(f"Average WER (Noisy): {avgwernoisy:.3f}")
print(f"Average WER (Enhanced): {avgwerenhanced:.3f}")
print("Tip: Experiment with different noise levels, longer sentences, or switch to GPU for faster processing.")
```
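The decoding loop above calls transcribeaudio once per file, so it does not exercise true batched inference. SpeechBrain’s EncoderDecoderASR also provides transcribe_batch(wavs, wav_lens), which takes a zero-padded tensor of shape [batch, time] together with each waveform’s length relative to the longest one. The sketch below shows only that padding convention in plain Python; pad_batch is a hypothetical helper, and the toy lists stand in for real audio.

```python
from typing import List, Sequence, Tuple


def pad_batch(waveforms: Sequence[Sequence[float]]) -> Tuple[List[List[float]], List[float]]:
    # Right-pad each waveform with zeros to the longest length and return
    # lengths relative to that maximum (the longest waveform gets 1.0)
    max_len = max(len(w) for w in waveforms)
    padded = [list(w) + [0.0] * (max_len - len(w)) for w in waveforms]
    rel_lens = [len(w) / max_len for w in waveforms]
    return padded, rel_lens


# Toy waveforms of different lengths standing in for loaded audio
batch, lens = pad_batch([[0.1, 0.2, 0.3, 0.4], [0.5, 0.6], [0.7, 0.8, 0.9]])
print(lens)      # [1.0, 0.5, 0.75]
print(batch[1])  # [0.5, 0.6, 0.0, 0.0]
```

To apply this to the tutorial’s files, one could load each file with asrmodel.load_audio(path), pad the resulting tensors (for example with torch.nn.utils.rnn.pad_sequence(..., batch_first=True)), build the relative lengths with torch.tensor, and call asrmodel.transcribe_batch(wavs, wav_lens), which returns the predicted words and tokens. Whether this beats the per-file loop depends on the hardware; the gain is typically largest on a GPU.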

Conclusion: Harnessing SpeechBrain for Robust Speech Processing

This tutorial shows how speech enhancement and recognition can be combined in a single SpeechBrain pipeline. By synthesizing audio, simulating noisy environments, denoising with MetricGAN+, and transcribing with a pretrained CRDNN ASR model, we can measure directly how enhancement affects transcription accuracy under adverse conditions. This open-source framework offers a flexible foundation for scaling up to larger datasets, experimenting with alternative enhancement techniques, or customizing ASR models for specific applications.