The MiMo team at Xiaomi has released MiMo-Audio, a 7-billion-parameter audio-language model. It applies a single next-token prediction objective to interleaved text and discretized speech, which allows pretraining on more than 100 million hours of audio.

Innovations in Audio-Language Modeling

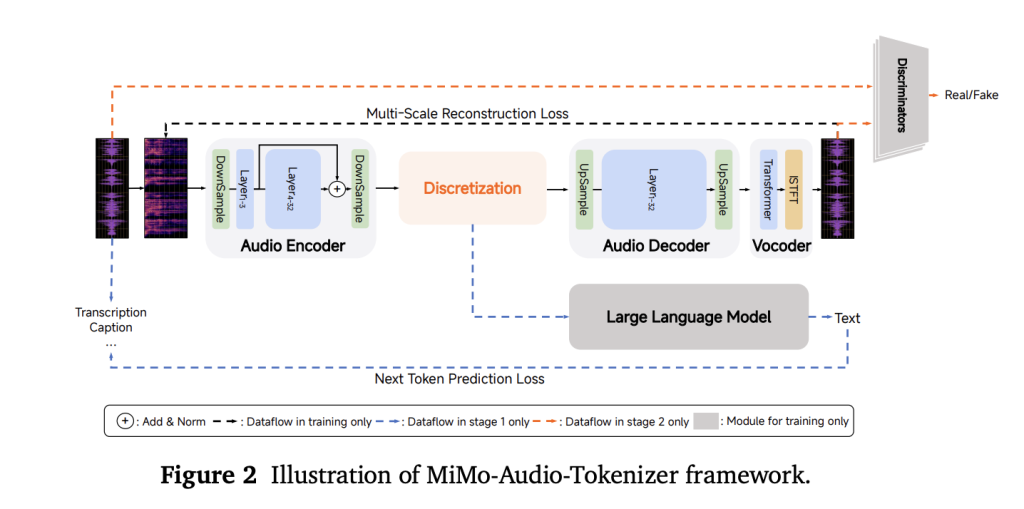

Departing from conventional methods that depend on task-specific output layers or lossy acoustic token representations, MiMo-Audio introduces a custom Residual Vector Quantization (RVQ) tokenizer. The tokenizer is designed to maintain semantic accuracy while still supporting high-fidelity audio reconstruction. Operating at 25 Hz with eight RVQ layers, it produces approximately 200 tokens per second (25 frames × 8 layers), giving the language model near-“lossless” speech features. These features can be modeled autoregressively alongside text, preserving nuances such as prosody and speaker characteristics.
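The token-rate figure follows directly from the two numbers quoted above. As a minimal sketch of that arithmetic (the function names are illustrative and not part of any released MiMo-Audio code):

```python
# Token-rate arithmetic using the figures quoted in the article.
FRAME_RATE_HZ = 25   # tokenizer frames per second
NUM_RVQ_LAYERS = 8   # RVQ codebooks (layers) per frame


def rvq_tokens_per_second(frame_rate_hz: int, num_layers: int) -> int:
    """Each frame emits one token per RVQ layer."""
    return frame_rate_hz * num_layers


def rvq_tokens_for_clip(seconds: float,
                        frame_rate_hz: int = FRAME_RATE_HZ,
                        num_layers: int = NUM_RVQ_LAYERS) -> int:
    """Total discrete tokens needed to represent a clip of the given length."""
    frames = int(seconds * frame_rate_hz)
    return frames * num_layers


print(rvq_tokens_per_second(FRAME_RATE_HZ, NUM_RVQ_LAYERS))  # 200
print(rvq_tokens_for_clip(30))                               # 6000
```

A 30-second clip therefore expands to 6,000 discrete tokens, which illustrates why feeding raw RVQ streams to a language model quickly produces very long sequences.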

Model Structure: From Patch Encoding to Decoding

To reconcile the differing temporal resolutions of audio and text, MiMo-Audio compresses four audio timesteps into a single patch, downsampling the sequence from 25 Hz to 6.25 Hz for efficient processing by the language model. A causal patch decoder then reconstructs the full-rate RVQ streams. A delayed multi-layer RVQ generation strategy staggers predictions across codebooks, which improves synthesis stability and respects the dependencies between layers. The entire pipeline (the patch encoder, the 7-billion-parameter MiMo backbone, and the patch decoder) is trained end to end under a unified next-token prediction objective.
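The two sequence-shaping ideas in this section can be sketched in a few lines of plain Python. This is an illustration only: the real patch encoder is a learned network, and the one-step-per-layer delay used here is an assumption borrowed from the delay patterns common in other RVQ generators, not a confirmed detail of MiMo-Audio. The names `patchify` and `apply_delay` are hypothetical.

```python
from typing import List, Optional

PATCH_SIZE = 4  # audio timesteps per patch (from the article)

Frame = List[int]  # one token id per RVQ layer


def patchify(frames: List[Frame], patch_size: int = PATCH_SIZE) -> List[List[Frame]]:
    """Group consecutive frames into patches (25 Hz -> 6.25 Hz when patch_size is 4)."""
    if len(frames) % patch_size != 0:
        raise ValueError("pad the frame sequence to a multiple of patch_size first")
    return [frames[i:i + patch_size] for i in range(0, len(frames), patch_size)]


def apply_delay(frames: List[Frame], pad: Optional[int] = None) -> List[List[Optional[int]]]:
    """Shift layer k right by k steps, so layer k of frame t is emitted at step t + k.

    Assumption: a uniform delay of one step per layer.
    """
    if not frames:
        return []
    num_layers = len(frames[0])
    steps = len(frames) + num_layers - 1
    out: List[List[Optional[int]]] = [[pad] * num_layers for _ in range(steps)]
    for t, frame in enumerate(frames):
        for k, token in enumerate(frame):
            out[t + k][k] = token
    return out


# Toy input: 8 frames with 3 layers each; token id encodes (time, layer).
frames = [[t * 10 + k for k in range(3)] for t in range(8)]
print(len(patchify(frames)))            # 2
print(apply_delay(frames[:2], pad=-1))
# [[0, -1, -1], [10, 1, -1], [-1, 11, 2], [-1, -1, 12]]
```

With the delay applied, the first layer of a frame is always emitted before the deeper layers of the same frame, so each deeper codebook can condition on the coarser ones already generated.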

Scaling Up: The Key to Emergent Capabilities

Training unfolds in two major phases. The first is a comprehension phase that optimizes text-token loss over combined speech-text datasets. The second is a joint phase that adds audio-specific losses to enable speech continuation, speech-to-text (S2T), text-to-speech (T2S), and instruction-driven tasks. This approach reveals a critical threshold in compute and data volume, at around 100 million hours of audio, beyond which few-shot learning abilities emerge, mirroring patterns observed in large-scale text-only language models.
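One way to picture the difference between the two phases is as a change in which token positions contribute to the loss. The sketch below is a simplified illustration under stated assumptions: the per-token losses are given as plain numbers, audio and text positions are weighted equally in the second phase, and the helper names are hypothetical. The actual loss weighting used for MiMo-Audio is not described in this article.

```python
from typing import List

TEXT, AUDIO = "text", "audio"


def loss_mask(modalities: List[str], stage: int) -> List[float]:
    """Stage 1 scores text positions only; stage 2 scores text and audio positions."""
    if stage == 1:
        return [1.0 if m == TEXT else 0.0 for m in modalities]
    if stage == 2:
        return [1.0 for _ in modalities]
    raise ValueError("stage must be 1 or 2")


def masked_mean_loss(per_token_loss: List[float], mask: List[float]) -> float:
    """Average the per-token losses over the positions selected by the mask."""
    total = sum(loss * weight for loss, weight in zip(per_token_loss, mask))
    count = sum(mask)
    return total / count if count else 0.0


# Toy interleaved sequence: text, audio, audio, text.
modalities = [TEXT, AUDIO, AUDIO, TEXT]
losses = [2.0, 4.0, 6.0, 1.0]

print(masked_mean_loss(losses, loss_mask(modalities, stage=1)))  # 1.5
print(masked_mean_loss(losses, loss_mask(modalities, stage=2)))  # 3.25
```

In the first phase the audio positions still serve as context for the model but generate no gradient signal of their own; in the second phase they are scored as well.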

Performance Across Speech and Audio Benchmarks

MiMo-Audio has been rigorously tested on diverse benchmarks, including speech reasoning challenges like SpeechMMLU and comprehensive audio understanding tests such as MMAU. The model achieves leading results across speech, sound, and music domains, significantly narrowing the performance gap between text-only and speech-in/speech-out modalities. To foster community engagement, Xiaomi has released MiMo-Audio-Eval, an open-source evaluation toolkit, alongside interactive demos showcasing capabilities like speech continuation, voice and emotion conversion, noise reduction, and speech translation.

Significance of MiMo-Audio’s Approach

MiMo-Audio’s design philosophy emphasizes simplicity and generality, avoiding complex multi-head architectures and specialized ASR/TTS objectives during pretraining. Instead, it relies solely on GPT-style next-token prediction over high-fidelity audio tokens and text. The core engineering contributions are: (1) a tokenizer that retains critical speech attributes such as prosody and speaker identity with minimal information loss; (2) patchification to keep sequence lengths manageable; and (3) delayed RVQ decoding to maintain audio quality during generation. Together, these let developers perform few-shot speech-to-speech editing and robust speech continuation with minimal fine-tuning.

Six Key Technical Insights

- Precision Tokenization: The custom RVQ tokenizer operates at 25 Hz with eight codebooks, preserving essential speech qualities like timbre and prosody while remaining compatible with language modeling.

- Efficient Sequence Handling: By grouping four audio timesteps into one patch, the model reduces the sequence rate from 25 Hz to 6.25 Hz, enabling the 7B language model to process extended speech sequences without sacrificing detail.

- Unified Training Objective: MiMo-Audio employs a single next-token prediction loss across interleaved audio and text, eliminating the need for separate heads for ASR, TTS, or dialogue tasks and simplifying the architecture.

- Emergent Few-Shot Learning: Once trained on massive datasets (~100 million hours), the model exhibits few-shot capabilities such as speech continuation, voice transformation, emotional expression transfer, and speech translation.

- Benchmark Excellence: The model achieves state-of-the-art results on SpeechMMLU (Speech-to-Speech 69.1, Text-to-Speech 71.5) and MMAU (overall 66.0), reducing the modality gap between text and speech to 3.4 points.

- Open-Source Ecosystem: Xiaomi has made available the tokenizer, 7B model checkpoints (both base and instruction-tuned), the MiMo-Audio-Eval toolkit, and public demos, encouraging researchers and developers to explore and expand speech-to-speech intelligence.

Conclusion: Advancing Speech Intelligence Through Scalable Modeling

MiMo-Audio exemplifies how combining high-fidelity, RVQ-based “lossless” tokenization with patchified next-token pretraining at scale can unlock sophisticated few-shot speech understanding and generation without relying on specialized task heads. The integrated pipeline (tokenizer, patch encoder, large language model, and patch decoder) bridges the temporal resolution gap between audio and text while preserving speaker identity and prosodic features through delayed multi-layer RVQ decoding. Empirical results show that the model narrows the performance gap between text and speech modalities, generalizes across diverse audio benchmarks, and supports in-context speech-to-speech editing and continuation tasks.