What does it take to build a single open-source model that can consistently interpret text, images, audio, and video while staying efficient? Researchers at Harbin Institute of Technology, Shenzhen, have released Uni-MoE-2.0-Omni, an open omnimodal large model that moves the Lychee Uni-MoE series toward language-centered multimodal reasoning. Built from scratch on a Qwen2.5-7B dense transformer backbone, the model adds a Mixture of Experts (MoE) framework with dynamic capacity routing and a staged training regimen that combines supervised and reinforcement learning, and it is trained on approximately 75 billion tokens of curated multimodal data. Uni-MoE-2.0-Omni understands text, images, audio, and video, and it generates text, images, and speech.

Unified Architecture: Language at the Core of Multimodal Integration

At the heart of Uni-MoE-2.0-Omni lies a Qwen2.5-7B-style transformer that functions as a language-centric nucleus. Surrounding this core, the system integrates a unified speech encoder that transforms diverse audio inputs (environmental sounds, speech, and music) into a shared representational space. On the visual front, pre-trained encoders process images and video frames, converting them into token sequences fed into the same transformer. For content generation, the model employs a context-aware MoE-based text-to-speech (TTS) module alongside a task-aware diffusion transformer responsible for image synthesis.

All input modalities are tokenized into a common format, enabling the transformer’s self-attention layers to simultaneously process text, visual, and audio tokens. This unified token interface simplifies cross-modal fusion and positions the language model as the central orchestrator for both comprehension and generation tasks. The architecture supports up to 10 different cross-modal input combinations, such as image-text, video-speech, and even tri-modal inputs, facilitating versatile multimodal interactions.

Advanced Fusion Techniques: Omni Modality 3D RoPE and Expert Routing

To achieve precise cross-modal alignment, Uni-MoE-2.0-Omni introduces an Omni Modality 3D Rotary Positional Embedding (RoPE) mechanism. Unlike the one-dimensional positional encodings traditionally used for text, this approach assigns three-dimensional coordinates (time, height, and width) to tokens from visual and audio streams, while speech tokens receive temporal positioning. This spatial-temporal encoding gives the transformer explicit awareness of when and where each token occurs, which is critical for video comprehension and audio-visual reasoning.
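The coordinate assignment can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: it assumes a layout similar to other multimodal RoPE schemes, in which text tokens advance all three axes together, image patches share one time index while height and width follow the patch grid, and audio or speech frames advance in time only. All function names here are hypothetical.

```python
from typing import List, Tuple

Pos = Tuple[int, int, int]  # (time, height, width)

def text_positions(n_tokens: int, start: int) -> List[Pos]:
    """Text: all three axes advance together, so 3D RoPE behaves like 1D RoPE."""
    return [(start + i, start + i, start + i) for i in range(n_tokens)]

def image_positions(rows: int, cols: int, start: int) -> List[Pos]:
    """Image patches: one shared time index; height/width follow the patch grid."""
    return [(start, start + r, start + c) for r in range(rows) for c in range(cols)]

def audio_positions(n_frames: int, start: int) -> List[Pos]:
    """Audio/speech frames: only the time axis advances (assumed layout)."""
    return [(start + i, start, start) for i in range(n_frames)]

def build_position_ids(segments) -> List[Pos]:
    """segments: ("text", n), ("image", rows, cols), or ("audio", n_frames)."""
    out: List[Pos] = []
    start = 0
    for seg in segments:
        kind = seg[0]
        if kind == "text":
            pos = text_positions(seg[1], start)
        elif kind == "image":
            pos = image_positions(seg[1], seg[2], start)
        elif kind == "audio":
            pos = audio_positions(seg[1], start)
        else:
            raise ValueError(f"unknown segment kind: {kind}")
        out.extend(pos)
        # The next segment starts after the largest index used on any axis.
        start = max(max(p) for p in pos) + 1
    return out

# Two text tokens, a 2x2 image, then three audio frames.
ids = build_position_ids([("text", 2), ("image", 2, 2), ("audio", 3)])
for p in ids:
    print(p)
```

Each axis of the resulting triple would drive its own slice of the rotary embedding, so two patches in the same frame differ only in their height and width rotations.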

The Mixture of Experts layers replace conventional multilayer perceptrons with a specialized MoE stack comprising three expert categories: empty (null) experts that let a token skip computation during inference, routed experts dedicated to modality-specific knowledge (audio, vision, or text), and shared experts that stay active for all inputs and so carry cross-modal communication. A routing network dynamically selects which experts to engage for each input token, saving computation without sacrificing specialized processing.
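The interaction of the three expert types can be illustrated with a toy layer. This is a sketch under stated assumptions: it uses fixed top-k selection rather than the model's dynamic capacity routing, takes router scores as an input instead of computing them, and represents experts as plain Python functions. The names `moe_layer`, `n_empty`, and `top_k` are hypothetical.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Expert = Callable[[Vector], Vector]

def softmax(scores: Sequence[float]) -> List[float]:
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def scale(v: Vector, factor: float) -> Vector:
    return [factor * x for x in v]

def add(a: Vector, b: Vector) -> Vector:
    return [x + y for x, y in zip(a, b)]

def moe_layer(token: Vector,
              router_scores: Sequence[float],
              routed_experts: Sequence[Expert],
              shared_expert: Expert,
              n_empty: int,
              top_k: int = 2) -> Tuple[Vector, int]:
    """Return the layer output and the number of routed experts actually run.

    router_scores holds one score per routed expert, followed by one score
    per empty expert.
    """
    if len(router_scores) != len(routed_experts) + n_empty:
        raise ValueError("need one router score per routed and empty expert")
    probs = softmax(router_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]

    out = shared_expert(token)  # the shared expert is active for every token
    executed = 0
    for i in chosen:
        if i >= len(routed_experts):
            continue  # empty expert: no computation and no contribution
        out = add(out, scale(routed_experts[i](token), probs[i]))
        executed += 1
    return out, executed

token = [1.0, 2.0]
routed = [lambda v: scale(v, 2.0), lambda v: scale(v, -1.0)]
shared = lambda v: list(v)

# Router strongly prefers the empty expert: only the shared expert runs.
skipped_out, skipped_n = moe_layer(token, [0.0, 0.0, 5.0], routed, shared, n_empty=1, top_k=1)
# Router strongly prefers routed expert 0: one routed expert runs.
routed_out, routed_n = moe_layer(token, [5.0, 0.0, 0.0], routed, shared, n_empty=1, top_k=1)
print(skipped_out, skipped_n)
print(routed_out, routed_n)
```

When the router picks an empty expert, the token still passes through the shared expert but pays for no routed computation, which is where the inference savings come from.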

Comprehensive Training Strategy: From Cross-Modal Pretraining to Reinforcement Learning

The training process follows a meticulously crafted, multi-stage pipeline. Initially, the model undergoes language-centric cross-modal pretraining using paired datasets of image-text, audio-text, and video-text. This phase aligns all modalities into a unified semantic space anchored by language. The base model is trained on roughly 75 billion open-source multimodal tokens and is equipped with specialized tokens for speech and image generation, enabling it to learn generative capabilities conditioned on linguistic prompts.

Subsequently, a progressive supervised fine-tuning phase activates modality-specific experts grouped by audio, vision, and text. During this stage, control tokens are introduced to enable tasks such as text-conditioned speech synthesis and image generation within the unified language interface. Following large-scale supervised fine-tuning, a data-balanced annealing step adjusts dataset weights across modalities and tasks, employing a reduced learning rate to prevent overfitting and enhance the model’s stability in handling diverse inputs.

To enhance long-form reasoning abilities, Uni-MoE-2.0-Omni incorporates iterative policy optimization using Group Sequence Policy Optimization (GSPO) and Direct Preference Optimization (DPO). GSPO uses the model itself or an external large language model as a judge to generate preference signals, while DPO turns these preferences into direct policy updates, an approach intended to be more stable than standard reinforcement learning from human feedback. Multiple rounds of this GSPO-DPO loop yield the Uni-MoE-2.0-Thinking variant, which builds upon the omnimodal base with improved stepwise reasoning capabilities.
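For reference, the DPO step can be illustrated with the standard DPO objective for a single preference pair. This is a generic sketch of that loss, not the authors' training code: the log-probabilities are passed in as plain floats, and `beta=0.1` is an assumed value. How Uni-MoE-2.0 constructs its preference pairs is not shown here.

```python
import math

def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def dpo_loss(policy_chosen_logp: float,
             policy_rejected_logp: float,
             ref_chosen_logp: float,
             ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """Standard DPO loss for one (chosen, rejected) response pair.

    Each argument is the summed log-probability of a full response under
    either the trainable policy or the frozen reference model.
    """
    chosen_margin = policy_chosen_logp - ref_chosen_logp
    rejected_margin = policy_rejected_logp - ref_rejected_logp
    logits = beta * (chosen_margin - rejected_margin)
    # -log(sigmoid(logits)) == softplus(-logits)
    return softplus(-logits)

# Policy identical to the reference: the loss equals log(2).
neutral = dpo_loss(-10.0, -12.0, -10.0, -12.0)
# Policy has shifted probability toward the chosen response: the loss drops.
improved = dpo_loss(-8.0, -14.0, -10.0, -12.0)
print(neutral, improved)
```

The loss falls only when the policy raises the chosen response relative to the rejected one by more than the reference model does, which keeps updates anchored to the reference.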

Multimodal Generation: Controlled Speech and Image Synthesis

For speech synthesis, Uni-MoE-2.0-Omni employs a context-aware MoE TTS module layered atop the language model. The transformer outputs control tokens specifying attributes such as timbre, style, and language alongside textual content. The MoE TTS module then converts this sequence into discrete audio tokens, which an external codec decodes into waveforms, maintaining alignment with the unified speech encoder used during input processing. This integration elevates speech generation to a fully controlled task within the unified model framework.

On the visual generation side, a task-aware diffusion transformer is conditioned on both task-specific tokens and image tokens derived from the omnimodal backbone. Task tokens indicate whether the model should perform text-to-image generation, image editing, or enhancement. Lightweight projection layers map these tokens into the diffusion transformer’s conditioning space, enabling instruction-guided image manipulation while keeping the main omnimodal model frozen during fine-tuning. This design ensures efficient and flexible visual content creation.

Performance Highlights and Accessibility

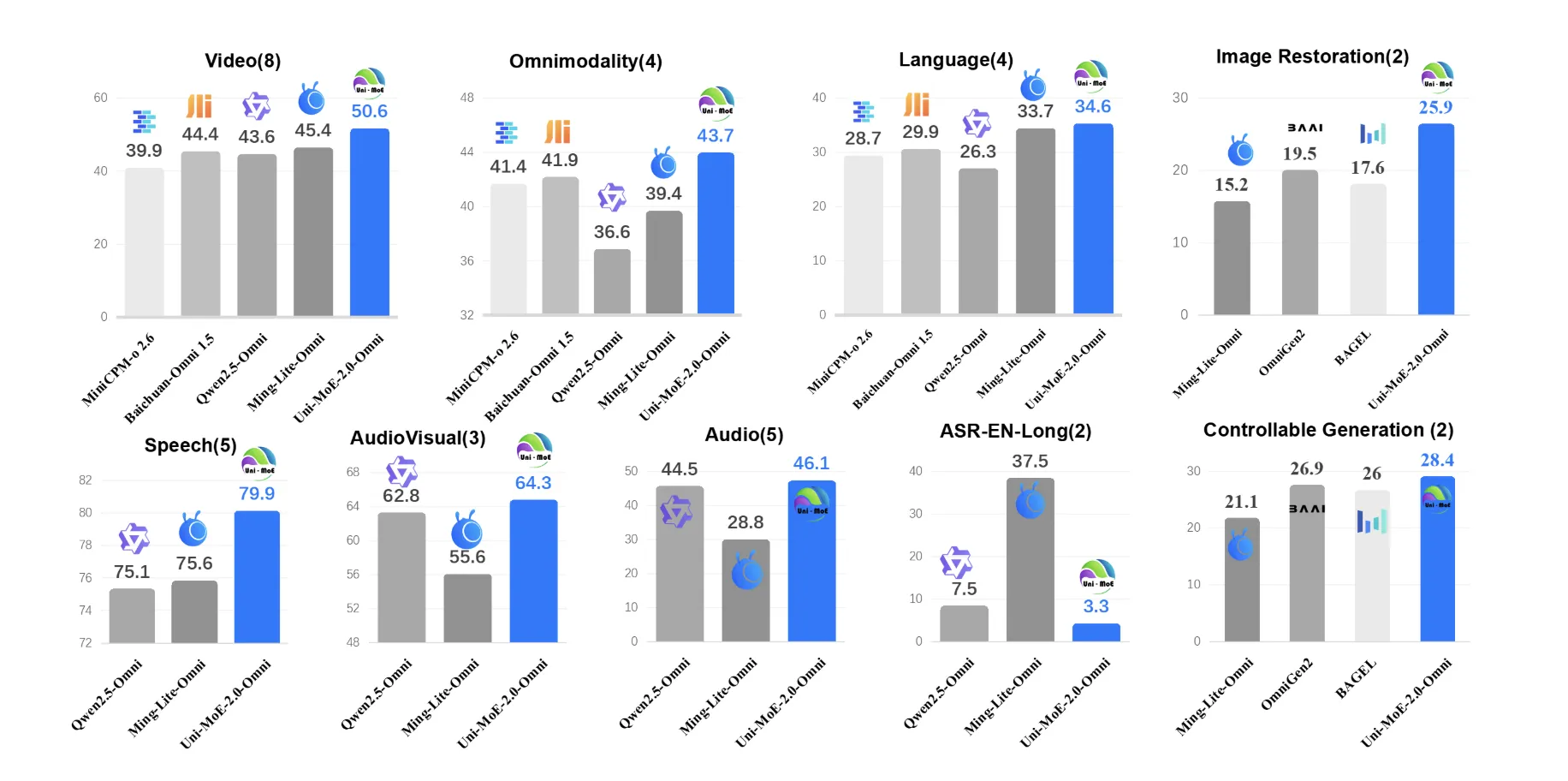

Uni-MoE-2.0-Omni has been evaluated on 85 multimodal benchmarks covering image, text, video, audio, and complex cross- and tri-modal reasoning tasks. It outperforms Qwen2.5-Omni, a comparable omnimodal model trained on approximately 1.2 trillion tokens, on over 50 of 76 shared benchmarks. Notable improvements include an average 7% boost in video understanding across eight tasks, a 7% gain in omnimodal comprehension on benchmarks like OmniVideoBench and WorldSense, and a 4% increase in audio-visual reasoning accuracy.

In long-form speech processing, the model achieves up to a 4.2% relative reduction in word error rate (WER) on extended LibriSpeech datasets and approximately a 1% WER improvement on TinyStories-en text-to-speech tasks. Its image generation and editing capabilities rival specialized visual models, with consistent gains of around 0.5% on the GEdit benchmark compared to Ming Lite Omni, and superior performance over Qwen Image and PixWizard on several low-level image processing metrics.
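Because the speech figure is a relative reduction, it is worth separating it from an absolute change in WER. The short calculation below uses hypothetical WER values chosen only to illustrate the arithmetic; they are not results reported for the model.

```python
def relative_reduction(baseline: float, new: float) -> float:
    """Fractional reduction of `new` relative to `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - new) / baseline

# Hypothetical WER values in percent, used only to show the arithmetic.
baseline_wer = 5.00
new_wer = 4.79

absolute_drop = baseline_wer - new_wer                      # percentage points
relative_drop = relative_reduction(baseline_wer, new_wer)   # fraction of baseline

print(f"absolute: {absolute_drop:.2f} points, relative: {relative_drop:.1%}")
```

With these illustrative numbers, a 4.2% relative reduction corresponds to only 0.21 percentage points of absolute WER, so the two kinds of figures should not be compared directly.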

Summary of Innovations and Impact

- Uni-MoE-2.0-Omni is an open-source omnimodal large model built from scratch on a Qwen2.5-7B dense transformer backbone, enhanced with a Mixture of Experts architecture supporting 10 cross-modal input configurations and joint understanding across text, images, audio, and video.

- The model introduces a Dynamic Capacity MoE system featuring shared, routed, and null experts, combined with Omni Modality 3D RoPE positional embeddings, balancing computational efficiency and multimodal capability by routing experts per token while preserving spatiotemporal alignment within self-attention layers.

- A staged training approach, including cross-modal pretraining, progressive supervised fine-tuning with modality-specific experts, data-balanced annealing, and GSPO-DPO reinforcement learning, enables the Uni-MoE-2.0-Thinking variant to excel in long-form, stepwise reasoning.

- Uni-MoE-2.0-Omni supports comprehensive omnimodal understanding and generation of images, text, and speech through a unified language-centric interface, featuring dedicated MoE-based TTS and diffusion modules for controllable speech and image synthesis.

- Across 85 benchmarks, the model surpasses Qwen2.5-Omni on more than 50 of 76 shared benchmarks, delivering approximately 7% improvements in video and omnimodal understanding, 4% in audio-visual reasoning, and up to 4.2% relative WER reduction in long-form speech recognition.