Revolutionizing Multilingual Translation: Tencent’s Latest Innovations

Tencent’s Hunyuan research team has released two machine translation models: Hunyuan-MT-7B, a dedicated multilingual translation model, and Hunyuan-MT-Chimera-7B, an ensemble model that fuses multiple candidate translations. Both were introduced as part of Tencent’s entry in the WMT2025 General Machine Translation shared task, where Hunyuan-MT-7B ranked first in 30 of the 31 language pairs.

Deep Dive into the Models

Hunyuan-MT-7B: A Compact Powerhouse

- A 7-billion-parameter model built specifically for multilingual translation.

- Supports bidirectional translation across 33 languages, including underrepresented minority languages spoken in China such as Tibetan, Mongolian, Uyghur, and Kazakh.

- Designed to perform robustly on both high-resource and low-resource language pairs, with results comparable to those of much larger models.

Hunyuan-MT-Chimera-7B: Fusion for Enhanced Precision

- Employs a novel weak-to-strong fusion strategy that integrates multiple translation hypotheses during inference.

- Utilizes reinforcement learning and sophisticated aggregation methods to refine outputs, surpassing the quality of individual translation systems.

- Presented by Tencent as the first open-source translation model built around this kind of ensemble fusion.
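The fusion step described above can be pictured as a two-stage inference call: collect several candidate translations, then ask a fusion model to write one refined output. The sketch below is only an illustration of that idea. The prompt wording, the function names `build_fusion_prompt` and `fuse`, and the `generate` callable are all hypothetical, since the article does not give Chimera's actual prompt template or API.

```python
from typing import Callable, List


def build_fusion_prompt(source: str, candidates: List[str], target_lang: str) -> str:
    """Assemble one prompt holding the source text and every candidate.

    The template below is an assumption made for illustration only.
    """
    numbered = "\n".join(f"{i}. {c}" for i, c in enumerate(candidates, start=1))
    return (
        f"Source text:\n{source}\n\n"
        f"Candidate translations into {target_lang}:\n{numbered}\n\n"
        f"Write a single improved {target_lang} translation."
    )


def fuse(
    source: str,
    candidates: List[str],
    target_lang: str,
    generate: Callable[[str], str],
) -> str:
    """Deduplicate the candidates, then let a fusion model refine them.

    `generate` stands in for whatever function calls the fusion model;
    it takes a prompt string and returns the model's text output.
    """
    # Drop empty strings and exact duplicates while keeping the original order.
    unique = list(dict.fromkeys(c.strip() for c in candidates if c.strip()))
    if not unique:
        raise ValueError("at least one non-empty candidate is required")
    return generate(build_fusion_prompt(source, unique, target_lang)).strip()
```

In practice `generate` would wrap a call to the fusion model, and the candidates would come from sampling the base translation model several times or from several different systems.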

Innovative Training Pipeline

The development of these models followed a meticulous five-phase training regimen tailored for multilingual translation excellence:

- Comprehensive Pre-training

  - Leveraged an enormous dataset of 1.3 trillion tokens spanning 112 languages and dialects.

  - Data quality was ensured through rigorous evaluation of knowledge relevance, authenticity, and writing style.

  - Maintained linguistic and thematic diversity via detailed tagging across disciplines and industries.

- Translation-Specific Pre-training

  - Incorporated monolingual corpora from mC4 and OSCAR, filtered using advanced tools like fastText for language identification, minLSH for deduplication, and KenLM for perplexity-based quality control.

  - Parallel datasets from OPUS and ParaCrawl were refined with CometKiwi scoring to ensure alignment quality.

  - Included 20% replay of general pre-training data to prevent forgetting previously learned knowledge.

- Supervised Fine-Tuning (SFT)

  - Stage I involved approximately 3 million parallel sentence pairs, including Flores-200, WMT test sets, curated Mandarin-minority language data, synthetic pairs, and instruction-tuning datasets.

  - Stage II focused on a high-quality subset of around 268,000 pairs, selected through automated metrics (CometKiwi, GEMBA) and manual validation.

- Reinforcement Learning (RL)

  - Applied the GRPO algorithm to optimize translation quality.

  - Reward functions incorporated XCOMET-XXL and DeepSeek-V3-0324 for quality assessment, terminology-aware rewards (TAT-R1), and penalties to discourage repetitive or degenerate outputs.

- Weak-to-Strong Reinforcement Learning

  - Generated multiple candidate translations which were aggregated based on reward scores.

  - This approach, implemented in Hunyuan-MT-Chimera-7B, significantly enhances translation robustness and reduces errors caused by repetition.
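To make the reinforcement learning phase more concrete, here is a minimal sketch of the group-relative scoring at the heart of GRPO: several translations are sampled for the same source, each gets a scalar reward, and each reward is normalized against the mean and standard deviation of its own group. The reward mix is an assumption for illustration. The weights, the n-gram repetition measure, and the function names are hypothetical, and the quality and terminology scores are taken as given numbers rather than computed with XCOMET-XXL or any other real scorer.

```python
from statistics import mean, pstdev
from typing import List


def repetition_penalty(text: str, n: int = 3) -> float:
    """Fraction of word n-grams that are repeats; 0.0 means no repetition."""
    words = text.split()
    ngrams = [tuple(words[i:i + n]) for i in range(len(words) - n + 1)]
    if not ngrams:
        return 0.0
    return 1.0 - len(set(ngrams)) / len(ngrams)


def combined_reward(
    quality: float,
    terminology: float,
    text: str,
    w_quality: float = 0.7,  # hypothetical weight, not from the article
    w_term: float = 0.3,     # hypothetical weight, not from the article
    w_rep: float = 1.0,      # hypothetical weight, not from the article
) -> float:
    """Weighted quality and terminology scores minus a repetition penalty."""
    return w_quality * quality + w_term * terminology - w_rep * repetition_penalty(text)


def group_relative_advantages(rewards: List[float], eps: float = 1e-8) -> List[float]:
    """Normalize each reward against its sampling group, as in GRPO.

    Uses the population standard deviation; implementations differ on
    this detail, so treat it as an assumption.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]
```

Candidates that score above their group's average receive a positive advantage and are reinforced, while those below average are discouraged, which is why GRPO does not need a separate learned value model.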

Performance Benchmarks and Comparative Analysis

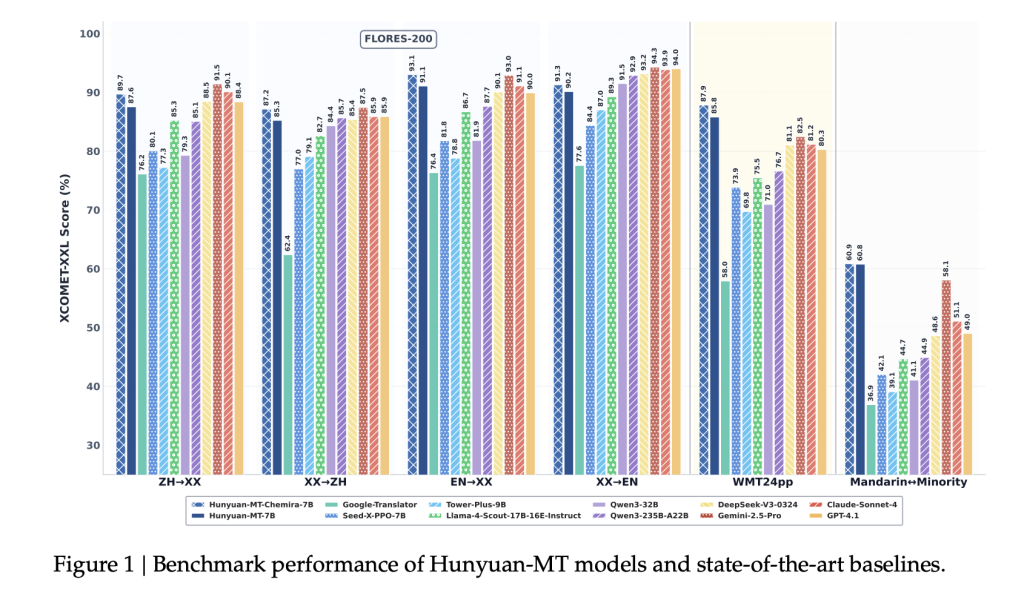

Automated Metrics Showcase Superior Accuracy

- On the WMT24pp benchmark (English↔multiple languages), Hunyuan-MT-7B achieved an XCOMET-XXL score of 0.8585, outperforming larger competitors such as Gemini-2.5-Pro (0.8250) and Claude-Sonnet-4 (0.8120).

- On the FLORES-200 subset covering its 33 supported languages (1056 translation directions), it scored 0.8758 on XCOMET-XXL, surpassing much larger open-source models such as Qwen3-32B (0.7933).

- In challenging Mandarin↔minority language translations, it achieved a notable 0.6082, significantly ahead of Gemini-2.5-Pro’s 0.5811, highlighting its strength in low-resource scenarios.
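For readers who want to compare these scores on a common footing, the short sketch below turns the quoted numbers into relative gains and checks the direction count. The percentages are plain arithmetic on the scores listed above, not figures taken from Tencent's report, and the helper names are made up for this example.

```python
def relative_gain(score: float, baseline: float) -> float:
    """Percentage improvement of `score` over `baseline`."""
    return (score - baseline) / baseline * 100.0


def directed_pairs(num_languages: int) -> int:
    """Number of ordered (source, target) pairs among the given languages."""
    return num_languages * (num_languages - 1)


# Scores quoted in the list above: (Hunyuan-MT-7B, comparison system).
comparisons = {
    "WMT24pp vs Gemini-2.5-Pro": (0.8585, 0.8250),
    "WMT24pp vs Claude-Sonnet-4": (0.8585, 0.8120),
    "FLORES-200 vs Qwen3-32B": (0.8758, 0.7933),
    "Mandarin-minority vs Gemini-2.5-Pro": (0.6082, 0.5811),
}

for name, (score, baseline) in comparisons.items():
    print(f"{name}: +{relative_gain(score, baseline):.1f}%")

print(directed_pairs(33))  # 33 * 32 = 1056 translation directions
```

On these numbers the relative gains come out to roughly 4.1%, 5.7%, 10.4%, and 4.7%, so the widest margin is over Qwen3-32B on FLORES-200.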

Outperforming Industry Leaders

- Demonstrates a 15-65% improvement over Google Translate across various evaluation metrics.

- Surpasses specialized translation models like Tower-Plus-9B and Seed-X-PPO-7B despite having fewer parameters.

- The Chimera-7B ensemble model further boosts performance by approximately 2.3% on FLORES-200, especially excelling in Chinese↔other and non-English↔non-Chinese language pairs.

Human-Centric Evaluation Highlights

Using a bespoke evaluation dataset spanning social, medical, legal, and internet domains, Hunyuan-MT-7B was benchmarked against leading models:

- Hunyuan-MT-7B: Average score of 3.189

- Gemini-2.5-Pro: Average score of 3.223

- DeepSeek-V3: Average score of 3.219

- Google Translate: Average score of 2.344

These results show that, despite its relatively compact size, Hunyuan-MT-7B scores within 0.04 points of Gemini-2.5-Pro and DeepSeek-V3, both much larger systems, and well ahead of Google Translate.

Illustrative Use Cases Demonstrating Model Strengths

- Handling Cultural Nuances: Accurately translates the Chinese slang term “小红薯” (literally “little sweet potato,” a nickname associated with Xiaohongshu) as the social media platform “REDnote,” avoiding Google Translate’s literal “sweet potatoes.”

- Idiomatic Expressions: Correctly interprets “You are killing me” as a humorous expression rather than a literal threat, preserving intended meaning.

- Medical Terminology: Precisely renders “uric acid kidney stones,” whereas other models produce inaccurate or malformed translations.

- Minority Language Fluency: Generates coherent translations for Kazakh and Tibetan, where many baselines fail or produce nonsensical output.

- Chimera Model Enhancements: Improves translation of specialized jargon in gaming, intensifiers, and sports terminology, showcasing its ensemble strength.

Final Thoughts: Setting a New Benchmark in Open-Source Translation

Tencent’s introduction of Hunyuan-MT-7B and Hunyuan-MT-Chimera-7B marks a significant step forward in open-source multilingual machine translation. By combining a multi-phase training pipeline with a focus on both high-resource and underrepresented languages, these models deliver translation quality that rivals larger closed-source alternatives and, on the automatic metrics reported above, surpasses several of them. Their availability gives the AI research community accessible tools for advancing multilingual communication and translation technology.