Revolutionizing LLM Inference with Stream-Oriented Dataflow Compilation

Traditional approaches to large language model (LLM) inference often rely on batched kernels that shuttle data back and forth between compute units and off-chip DRAM. A new compiler framework, StreamTensor, challenges this paradigm by keeping intermediate data on chip and streaming it between kernels in a dataflow architecture. It translates PyTorch LLM models, including GPT-2, Llama, Qwen, and Gemma, into stream-scheduled dataflow accelerators deployed on AMD’s Alveo U55C FPGA, with the aim of reducing latency and improving energy efficiency relative to GPU baselines.

Introducing Iterative Tensors for Streamlined Dataflow

At the heart of this compiler lies the concept of iterative tensors (itensors), a specialized tensor type that encodes the tiling, iteration order, and layout of data streams. This abstraction enables precise coordination between kernels, ensuring that intermediate data tiles flow seamlessly through on-chip FIFOs without unnecessary off-chip memory accesses. By automating the insertion and sizing of DMA engines, FIFOs, and layout converters, the compiler guarantees correct and efficient inter-kernel streaming, minimizing costly DRAM round-trips.
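
To make the idea concrete, here is a minimal Python sketch of what an itensor-like type might record. It is an illustration only, not StreamTensor's actual type definition: the class name `ITensor`, its fields, and the `tile_stream` method are all hypothetical, and the sketch assumes tile sizes divide the tensor shape evenly.

```python
from dataclasses import dataclass
from itertools import product
from typing import Iterator, Tuple


@dataclass(frozen=True)
class ITensor:
    """Hypothetical stand-in for an iterative tensor type (not the real API)."""
    shape: Tuple[int, ...]       # full tensor shape
    tile: Tuple[int, ...]        # shape of each tile sent over the stream
    loop_order: Tuple[int, ...]  # tensor dims, outermost loop first

    def tile_stream(self) -> Iterator[Tuple[int, ...]]:
        """Yield tile origins in the order they would appear on the stream."""
        # Assumes every tile size divides the matching dimension evenly.
        trips = [self.shape[d] // self.tile[d] for d in self.loop_order]
        for idx in product(*(range(t) for t in trips)):
            origin = [0] * len(self.shape)
            for dim, i in zip(self.loop_order, idx):
                origin[dim] = i * self.tile[dim]
            yield tuple(origin)


# Same tensor and tile shape, but two different iteration orders.
row_major = ITensor(shape=(4, 4), tile=(2, 2), loop_order=(0, 1))
col_major = ITensor(shape=(4, 4), tile=(2, 2), loop_order=(1, 0))

print(list(row_major.tile_stream()))
print(list(col_major.tile_stream()))
```

The two values describe the same data but different streams: tiles arrive in a different order, which is exactly the information a plain tensor type does not carry.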

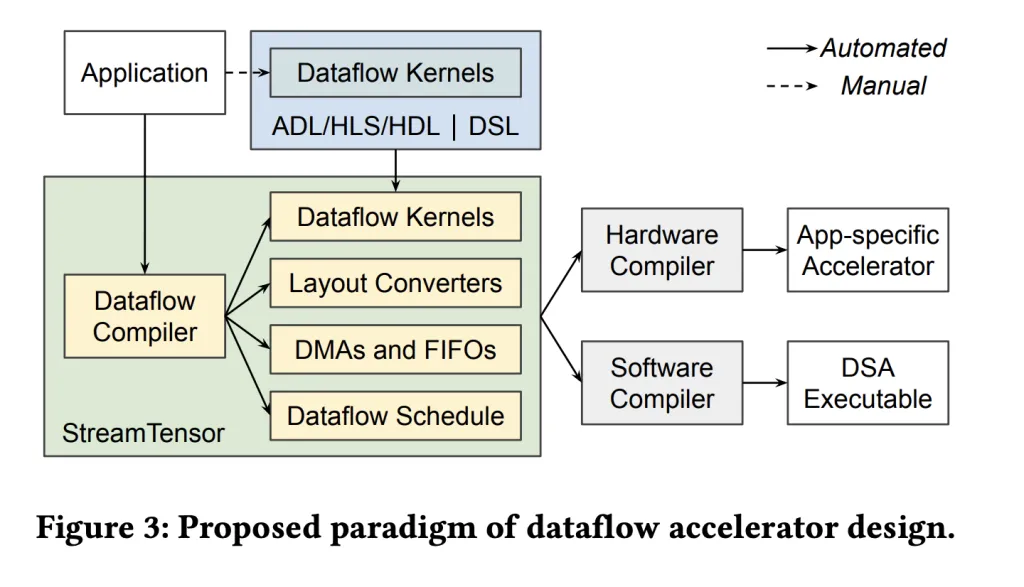

StreamTensor: From PyTorch Graphs to Optimized FPGA Dataflows

StreamTensor transforms PyTorch computational graphs into a stream-oriented dataflow design, where intermediate results are predominantly passed through on-chip buffers rather than external memory. This approach not only reduces latency but also enhances energy efficiency by limiting data movement. The compiler’s iterative tensor system explicitly captures stream compatibility between kernels, allowing for safe fusion and targeted converter generation only when producer and consumer data layouts differ.
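
The converter decision described above can be sketched in a few lines of Python. This is an assumption-laden toy, not the compiler's algorithm: the function names `reorder_buffer_tiles` and `plan_edge` are hypothetical, streams are modeled simply as lists of tile origins, and the converter is assumed to release a tile as soon as the consumer's next expected tile is available.

```python
def reorder_buffer_tiles(producer_order, consumer_order):
    """Peak number of tiles a converter holds while reordering the stream."""
    if sorted(producer_order) != sorted(consumer_order):
        raise ValueError("streams do not carry the same set of tiles")
    held = set()
    peak = 0
    next_needed = 0  # index of the next tile the consumer expects
    for tile in producer_order:
        held.add(tile)
        peak = max(peak, len(held))
        # Drain every tile the consumer can now accept, in its own order.
        while (next_needed < len(consumer_order)
               and consumer_order[next_needed] in held):
            held.remove(consumer_order[next_needed])
            next_needed += 1
    return peak


def plan_edge(producer_order, consumer_order):
    """Choose a plain FIFO when orders match, otherwise a sized converter."""
    if list(producer_order) == list(consumer_order):
        return ("fifo", 0)
    return ("converter", reorder_buffer_tiles(producer_order, consumer_order))


row = [(0, 0), (0, 2), (2, 0), (2, 2)]  # producer emits row by row
col = [(0, 0), (2, 0), (0, 2), (2, 2)]  # consumer reads column by column

print(plan_edge(row, row))
print(plan_edge(row, col))
```

When the orders match, the edge needs nothing beyond a FIFO; when they differ, the mismatch itself tells the planner how much reorder storage the converter needs.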

Moreover, StreamTensor employs a hierarchical search strategy to optimize tiling, fusion, and resource allocation. A linear programming model is used to determine the optimal FIFO sizes, preventing pipeline stalls or deadlocks while conserving precious on-chip memory resources such as BRAM and URAM.
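
The intuition behind FIFO sizing can be shown with a simplified model. The sketch below is not the linear program itself: it substitutes an as-soon-as-possible (longest-path) schedule, which gives a feasible point of such a program and is not guaranteed to be optimal in general. The function name `fifo_depths`, the edge tuple layout, and the delay and rate numbers are all assumptions made for illustration.

```python
import math


def fifo_depths(edges):
    """edges: list of (producer, consumer, delay_cycles, tokens_per_cycle).

    Returns a FIFO depth per edge, assuming an acyclic kernel graph.
    """
    nodes = {n for u, v, _, _ in edges for n in (u, v)}
    start = {n: 0 for n in nodes}
    # Longest-path relaxation: each kernel starts once all inputs can arrive.
    # len(nodes) passes are enough for an acyclic graph.
    for _ in range(len(nodes)):
        for u, v, delay, _ in edges:
            start[v] = max(start[v], start[u] + delay)
    depths = {}
    for u, v, delay, rate in edges:
        # Cycles during which tokens pile up before the consumer starts.
        slack = start[v] - start[u] - delay
        depths[(u, v)] = max(1, math.ceil(slack * rate))
    return depths


# Diamond graph: A feeds D directly and also through a slow kernel B.
edges = [
    ("A", "B", 2, 1),
    ("B", "D", 10, 1),
    ("A", "D", 1, 1),  # short bypass edge
]
print(fifo_depths(edges))
```

In this toy graph the bypass edge from A to D needs the deepest FIFO, because D cannot start consuming until the slow path through B delivers its first token. An undersized FIFO on that edge would stall A, which in turn starves B: the kind of deadlock that formal sizing is meant to rule out.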

Key Innovations Driving Performance Gains

- Multi-level Design Space Exploration: The compiler systematically explores optimizations at several levels: loop tiling, unrolling, vectorization, and permutation within the Linalg IR; fusion strategies constrained by memory and hardware resources; and stream width and resource allocation, chosen to maximize throughput under bandwidth limitations.

- Seamless End-to-End Compilation: Models are ingested via Torch-MLIR, converted into the MLIR Linalg dialect, and finally lowered into a dataflow intermediate representation. This IR maps directly to hardware kernels with explicit streaming interfaces and runtime support, eliminating the need for manual RTL coding.

- Iterative Tensor Typing System: By making iteration order and data layout first-class citizens, the itensor type enables the compiler to enforce stream order correctness, facilitate kernel fusion, and synthesize minimal buffer and format converters when necessary.

- Formalized FIFO Buffer Sizing: A linear programming approach ensures that inter-kernel FIFOs are sized optimally to avoid deadlocks and stalls, while minimizing on-chip memory usage, a critical factor for FPGA implementations.
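
As a rough picture of the tiling level of the design space exploration in the first bullet, the following Python sketch enumerates tile shapes for a 2-D tensor and keeps the one that fits an on-chip buffer budget with the fewest tiles. The cost model, the tie-break, and the names `divisors` and `best_tile` are hypothetical; the real search also weighs unrolling, vectorization, fusion, and stream widths.

```python
from itertools import product


def divisors(n):
    """All positive divisors of n, so tiles cover the tensor exactly."""
    return [d for d in range(1, n + 1) if n % d == 0]


def best_tile(m, n, bytes_per_elem, buffer_budget_bytes):
    """Pick the (tile_m, tile_n) that fits the budget with the fewest tiles."""
    best = None
    for tm, tn in product(divisors(m), divisors(n)):
        if tm * tn * bytes_per_elem > buffer_budget_bytes:
            continue  # tile would not fit in the on-chip buffer
        num_tiles = (m // tm) * (n // tn)
        key = (num_tiles, -tn)  # toy tie-break: prefer wider tiles
        if best is None or key < best[0]:
            best = (key, (tm, tn))
    return None if best is None else best[1]


# 64x64 tensor of 2-byte elements with a 1 KiB tile buffer (made-up numbers).
print(best_tile(64, 64, 2, 1024))
```

Even this toy version shows why the search is hierarchical: the tile shape chosen here determines the stream order and buffer sizes that later stages have to work with.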

Performance Highlights on AMD Alveo U55C FPGA

Evaluations on LLM decoding tasks show that this streaming dataflow compiler achieves up to a 36% reduction in latency compared to GPU baselines (0.64× latency) and up to 1.99× the energy efficiency of NVIDIA A100 GPUs, depending on the model. The target platform, the Alveo U55C, is equipped with 16 GB of HBM2 memory delivering 460 GB/s of bandwidth, dual QSFP28 network interfaces, and PCIe Gen3×16 or dual Gen4×8 connectivity.
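
The reported ratios are easier to compare once converted into the same terms. The short Python sketch below does that arithmetic for the best-case figures; it assumes energy efficiency means work per unit of energy (for example, tokens per joule), and the function name `summarize` is made up for this example.

```python
def summarize(latency_ratio, efficiency_ratio):
    """Convert reported best-case ratios into reductions and speedups."""
    return {
        # 0.64x latency means 36% less time per decoding step.
        "latency_reduction_pct": (1.0 - latency_ratio) * 100.0,
        # Speedup is the inverse of the latency ratio.
        "speedup": 1.0 / latency_ratio,
        # Assumption: efficiency = work per joule, so energy per unit of
        # work is the inverse of the efficiency ratio.
        "energy_per_token_ratio": 1.0 / efficiency_ratio,
    }


best_case = summarize(latency_ratio=0.64, efficiency_ratio=1.99)
for name, value in best_case.items():
    print(f"{name}: {value:.2f}")
```

In other words, the best case corresponds to roughly a 1.56× speedup and about half the energy per unit of work, under the stated assumption about how efficiency is measured.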

Implications and Future Directions

This work shows how compiler-driven streaming dataflows can unlock significant efficiency gains for LLM inference on FPGA platforms. By tightly integrating model compilation with hardware-aware optimizations such as iterative tensor typing and formal FIFO sizing, the approach reduces off-chip memory bottlenecks and improves throughput.

While current results focus on decoding workloads across popular LLMs, extending this methodology to training or other model architectures could further enhance the applicability of streaming dataflow accelerators. Additionally, as FPGA technology evolves with higher bandwidth memory and more sophisticated interconnects, such compiler frameworks will be pivotal in harnessing their full potential.
