Why do many current audio AI models get worse, not better, when they reason at length before answering? The StepFun research team introduces Step-Audio-R1, an audio large language model (LLM) designed to benefit from additional compute at inference time. The team argues that the accuracy drop seen with chain-of-thought reasoning is not an inherent limitation of audio as a modality. Instead, it stems from training methods that leave the model’s reasoning insufficiently grounded in the audio itself.

Understanding the Challenge: Reliance on Textual Proxies in Audio Reasoning

Current audio AI systems predominantly inherit their reasoning strategies from text-based training paradigms. Instead of interpreting sounds directly, these models simulate understanding by internally generating textual representations or imagined transcripts. This phenomenon, termed Textual Surrogate Reasoning by the StepFun team, causes models to prioritize verbal descriptions over genuine acoustic features such as pitch variations, rhythmic patterns, timbre, or ambient noise characteristics.

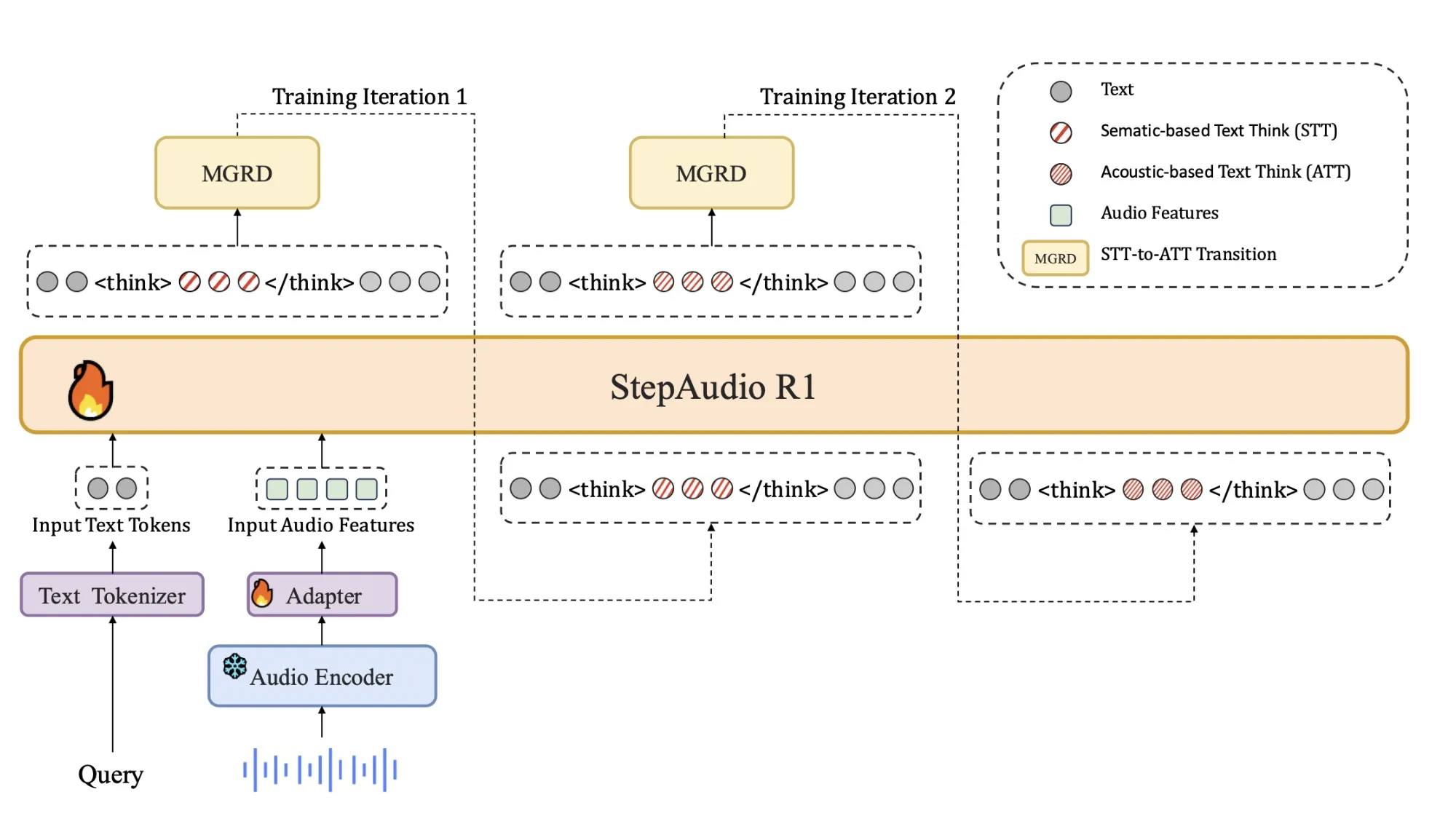

This disconnect explains why longer chains of thought often degrade performance: the model expends computational effort elaborating on inaccurate or irrelevant textual assumptions rather than grounding its reasoning in the actual audio signal. Step-Audio-R1 confronts this by compelling the model to substantiate its answers with explicit acoustic evidence. The training framework, called Modality Grounded Reasoning Distillation (MGRD), selectively distills reasoning paths that explicitly reference audio attributes, thereby aligning the model’s thought process with the sound data.

Innovative Model Architecture

Step-Audio-R1 builds upon the architecture of previous Step Audio systems with key enhancements:

- A Qwen2-based audio encoder converts raw waveform inputs into feature frames at a rate of 25 Hz.

- An audio adaptor reduces the encoder’s output rate by half to 12.5 Hz, synchronizing audio frames with the language token stream.

- A 32-billion-parameter Qwen2.5 decoder consumes these audio features and generates textual output.
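To make the frame rates above concrete, here is a minimal sketch of the arithmetic. The function name and constants are illustrative, and the assumption that each adaptor output frame occupies one decoder position is mine, not something the article states.

```python
# Rates taken from the article: 25 Hz encoder output, halved by the adaptor.
ENCODER_FRAME_RATE_HZ = 25.0
ADAPTOR_DOWNSAMPLE = 2


def audio_positions(duration_s: float) -> int:
    """Approximate number of audio feature frames handed to the decoder.

    Assumes one adaptor output frame per decoder position (an assumption).
    """
    decoder_rate_hz = ENCODER_FRAME_RATE_HZ / ADAPTOR_DOWNSAMPLE  # 12.5 Hz
    return int(duration_s * decoder_rate_hz)


print(audio_positions(30.0))  # 375
```

Under these assumptions, a 30-second clip costs a few hundred positions, which leaves most of the context for reasoning text.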

Crucially, the decoder always produces a dedicated reasoning segment enclosed within <think> and </think> tags before delivering the final answer. This explicit separation lets the training objectives shape the content and structure of the reasoning without compromising task accuracy. The model is publicly available as a 33-billion-parameter audio-to-text LLM under the Apache 2.0 license.
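Because the reasoning segment is delimited by tags, downstream code can separate it from the final answer. The helper below is a hypothetical sketch, not part of any released Step-Audio-R1 tooling, and it assumes a single reasoning segment followed by the answer.

```python
import re

# Non-greedy match so only the first <think>...</think> pair is captured.
THINK_RE = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_response(text: str) -> tuple[str, str]:
    """Return (reasoning, answer) from a tagged model response.

    If no reasoning segment is present, the reasoning is an empty string
    and the whole text is treated as the answer.
    """
    match = THINK_RE.search(text)
    if match is None:
        return "", text.strip()
    reasoning = match.group(1).strip()
    answer = text[match.end():].strip()
    return reasoning, answer


reasoning, answer = split_response(
    "<think>The pitch rises at the end of the utterance.</think>It is a question."
)
print(reasoning)  # The pitch rises at the end of the utterance.
print(answer)     # It is a question.
```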

Comprehensive Training Strategy: From Initialization to Audio-Grounded Reinforcement Learning

The training pipeline consists of two main phases: a supervised cold start and a reinforcement learning stage, both integrating text and audio tasks.

The cold start phase uses approximately 5 million examples, comprising about 1 billion tokens of text-only data and 4 billion tokens of paired audio-text data. Audio tasks include automatic speech recognition, paralinguistic analysis, and audio question-answering dialogues. A subset of the audio data contains chain-of-thought reasoning traces generated by earlier models. Text data spans multi-turn conversations, knowledge-based question answering, mathematical problem solving, and code reasoning. Every training sample follows the same format, with reasoning enclosed in <think> tags even when the reasoning segment is left empty.

This supervised learning phase teaches Step-Audio-R1 to generate coherent reasoning for both audio and text inputs, establishing a baseline chain-of-thought capability that still leans heavily on text-based reasoning.

Modality Grounded Reasoning Distillation (MGRD): Enhancing Audio-Centric Thought

MGRD is applied iteratively to refine the model’s audio reasoning. In each iteration, the team selects audio questions whose answers depend on authentic acoustic properties, such as speaker emotions, environmental sounds, or musical structures. The model generates multiple reasoning and answer candidates per query. A filtering mechanism retains only those reasoning chains that satisfy three criteria:

- Explicit references to acoustic features rather than to textual descriptions or imagined transcripts.

- Logical coherence, presented as concise, stepwise explanations.

- Correct final answers verified against ground truth labels or programmatic checks.
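The three criteria above can be pictured as a simple filter over sampled candidates. The sketch below is only illustrative: the keyword lists, the step-count heuristic, and all names are hypothetical stand-ins, and the article does not say how the StepFun team implements these checks.

```python
from dataclasses import dataclass

# Hypothetical keyword lists; a real filter would likely use a stronger judge.
ACOUSTIC_TERMS = ("pitch", "timbre", "rhythm", "tempo", "loudness", "background noise")
SURROGATE_PHRASES = ("the transcript says", "based on the text", "according to the caption")


@dataclass
class Candidate:
    reasoning: str
    answer: str


def is_audio_grounded(reasoning: str) -> bool:
    """Criterion 1: mentions acoustic cues and does not lean on a transcript."""
    text = reasoning.lower()
    mentions_acoustics = any(term in text for term in ACOUSTIC_TERMS)
    leans_on_text = any(phrase in text for phrase in SURROGATE_PHRASES)
    return mentions_acoustics and not leans_on_text


def is_coherent(reasoning: str, max_steps: int = 12) -> bool:
    """Criterion 2 (crude proxy): non-empty and reasonably concise."""
    steps = [s for s in reasoning.split(".") if s.strip()]
    return 0 < len(steps) <= max_steps


def filter_candidates(candidates: list[Candidate], ground_truth: str) -> list[Candidate]:
    """Keep candidates that pass all three criteria, including a correct answer."""
    target = ground_truth.strip().lower()
    return [
        c for c in candidates
        if is_audio_grounded(c.reasoning)
        and is_coherent(c.reasoning)
        and c.answer.strip().lower() == target  # Criterion 3: matches the label
    ]
```

A candidate whose reasoning cites a rising pitch and ends with the correct label would be kept, while one that starts from "the transcript says" would be dropped even if its answer is right.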

The filtered reasoning traces form a distilled dataset that emphasizes audio-grounded chain-of-thought. The model is fine-tuned on this dataset alongside the original text reasoning data. Reinforcement Learning with Verified Rewards (RLVR) follows: rewards for text questions depend solely on answer accuracy, while audio questions receive a composite reward that weights correctness at 80% and reasoning quality at 20%. Training uses Proximal Policy Optimization (PPO) with around 16 sampled responses per prompt and supports sequences of up to 10,240 tokens, leaving room for extended deliberation.
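The reward split described above can be written down in a few lines. This is a sketch under assumptions: the 0.8 and 0.2 weights come from the article, but the function names are hypothetical, and the choice to score reasoning quality on a 0 to 1 scale is mine.

```python
def text_reward(correct: bool) -> float:
    """Text questions: reward depends only on answer accuracy."""
    return 1.0 if correct else 0.0


def audio_reward(
    correct: bool,
    reasoning_score: float,
    w_correct: float = 0.8,
    w_reasoning: float = 0.2,
) -> float:
    """Audio questions: weighted mix of correctness and reasoning quality.

    reasoning_score is assumed to lie in [0, 1]; how it is computed is
    not specified in the article.
    """
    if not 0.0 <= reasoning_score <= 1.0:
        raise ValueError("reasoning_score must be in [0, 1]")
    return w_correct * (1.0 if correct else 0.0) + w_reasoning * reasoning_score
```

With these weights, a correct answer with no usable reasoning scores 0.8, so the reasoning term can separate otherwise equal responses without outweighing correctness.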

Benchmark Performance: Narrowing the Gap with Leading Models

Step-Audio-R1 demonstrates impressive results on a comprehensive speech-to-text benchmark suite, including Big Bench Audio, Spoken MQA, MMSU, MMAU, and Wild Speech datasets. It achieves an average accuracy of approximately 83.6%, outperforming Gemini 2.5 Pro’s 81.5% and approaching Gemini 3 Pro’s 85.1%. Notably, on Big Bench Audio alone, Step-Audio-R1 attains 98.7%, surpassing both Gemini models.

For speech-to-speech reasoning, the Step-Audio-R1 Realtime variant employs a streaming approach that simultaneously listens and thinks while speaking. On Big Bench Audio speech-to-speech tasks, it reaches 96.1% reasoning accuracy with a first-packet latency of about 0.92 seconds. This performance exceeds GPT-based real-time baselines and Gemini 2.5 Flash’s native audio dialog capabilities, all while maintaining sub-second interaction speeds.

Insights from Ablation Studies: Critical Factors for Effective Audio Reasoning

The ablation experiments offer valuable guidance for audio AI developers:

- Incorporating a reasoning format reward is essential; without it, reinforcement learning tends to truncate or eliminate chain-of-thought reasoning, reducing audio benchmark performance.

- Reinforcement learning data should focus on moderately challenging problems. Selecting questions with intermediate difficulty ensures stable reward signals and preserves extended reasoning.

- Simply increasing the volume of RL audio data without careful selection does not improve outcomes. The quality of prompts and labels is more impactful than sheer dataset size.
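One common way to pick moderately difficult prompts is to sample several responses per prompt and keep those with an intermediate pass rate. The sketch below illustrates that idea. The 0.2 and 0.8 thresholds and all names are hypothetical, and the article does not state the selection rule the team used.

```python
def pass_rate(sampled_correct: list[bool]) -> float:
    """Fraction of sampled responses that were correct."""
    return sum(sampled_correct) / len(sampled_correct)


def select_moderate(
    results: dict[str, list[bool]],
    low: float = 0.2,
    high: float = 0.8,
) -> list[str]:
    """Keep prompts that are neither always solved nor never solved.

    Prompts at either extreme give rewards that barely vary across
    samples, which leaves little signal to learn from.
    """
    return [
        prompt for prompt, outcomes in results.items()
        if outcomes and low <= pass_rate(outcomes) <= high
    ]


results = {
    "easy": [True] * 8,
    "hard": [False] * 8,
    "moderate": [True, False] * 4,
}
print(select_moderate(results))  # ['moderate']
```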

Additionally, the team developed a self-cognition correction mechanism to reduce instances where the model claims it can only process text and not audio, despite being trained on sound. This approach uses Direct Preference Optimization on curated preference pairs, encouraging the model to acknowledge and utilize audio inputs correctly.
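For readers unfamiliar with Direct Preference Optimization, the following sketch shows the standard per-pair DPO loss applied to a self-cognition example. The example pair, the log-probability values, and beta = 0.1 are made up for illustration and are not the team's data or hyperparameters.

```python
import math

# Hypothetical preference pair for self-cognition correction.
pair = {
    "prompt": "[audio clip] What emotion does the speaker convey?",
    "chosen": "The speaker sounds anxious: the pitch is raised and the pace is fast.",
    "rejected": "I am a text-based model and cannot process audio.",
}


def dpo_loss(
    logp_chosen: float,
    logp_rejected: float,
    ref_logp_chosen: float,
    ref_logp_rejected: float,
    beta: float = 0.1,
) -> float:
    """Standard DPO loss for one pair: -log(sigmoid(margin)).

    Inputs are summed log-probabilities of each response under the policy
    and under a frozen reference model.
    """
    margin = beta * (
        (logp_chosen - ref_logp_chosen) - (logp_rejected - ref_logp_rejected)
    )
    # Numerically stable form of log(1 + exp(-margin)).
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))
```

The loss falls as the policy raises the probability of the audio-aware response relative to the reference model and lowers that of the text-only refusal.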

Summary of Key Contributions

- Step-Audio-R1 is among the first audio LLMs to convert longer chain-of-thought reasoning into consistent accuracy improvements for audio tasks, overcoming the inverse scaling issues seen in prior models.

- The model directly addresses Textual Surrogate Reasoning by applying Modality Grounded Reasoning Distillation, filtering for reasoning that depends on genuine acoustic cues like pitch, timbre, and rhythm rather than imagined transcripts.

- Its architecture integrates a Qwen2-based audio encoder, an adaptor, and a Qwen2.5 32-billion parameter decoder that always outputs explicit reasoning segments before answers, released as a 33-billion parameter audio-to-text model under Apache 2.0.

- Across diverse audio understanding and reasoning benchmarks (covering speech, environmental sounds, and music), Step-Audio-R1 outperforms Gemini 2.5 Pro and rivals Gemini 3 Pro, with a real-time variant enabling low-latency speech-to-speech interaction.

- The training methodology combines large-scale supervised chain-of-thought learning, modality-grounded distillation, and Reinforcement Learning with Verified Rewards, offering a replicable framework for future audio reasoning models that benefit from increased inference compute.

Final Thoughts

Step-Audio-R1 turns chain-of-thought reasoning from a performance bottleneck into an asset for audio AI. By addressing Textual Surrogate Reasoning through Modality Grounded Reasoning Distillation and Reinforcement Learning with Verified Rewards, it shows that scaling test-time compute can improve audio model accuracy when reasoning is anchored in acoustic features. The model’s benchmark results approach those of top-tier systems such as Gemini 3 Pro, and its open release keeps it accessible to developers. The work offers a reproducible design pattern for extended deliberation in audio LLMs, turning a persistent failure mode into a controllable strength.