How can synthetic data stay fresh and diverse for frontier AI models without a single orchestration pipeline becoming the bottleneck? Researchers at Meta AI have introduced Matrix, a decentralized framework that serializes both control flow and data flow into message objects that travel through distributed queues. Training large language models (LLMs) increasingly depends on synthetic dialogues, tool-use traces, and reasoning chains, yet many current systems still rely on a central controller or domain-specific setups. The result is often underused GPUs, extra coordination overhead, and limited data diversity. Matrix avoids these problems with peer-to-peer agent scheduling on a Ray cluster and reports 2 to 15 times higher token throughput on real workloads while maintaining comparable output quality.

Decentralizing Control: From Central Orchestrators to Peer-to-Peer Agents

Conventional agent frameworks typically keep workflow state and control logic inside a single orchestrator. Every interaction (agent calls, tool invocations, retries) passes through this central hub. The design is easy to reason about, but it does not scale well when it has to manage tens of thousands of concurrent synthetic conversations or tool-use trajectories.

Matrix takes a different approach: it serializes both control flow and data flow into a single message object, which it also calls an orchestrator. Despite the name, this orchestrator is a message rather than a central service. It holds the task’s state, including dialogue history, intermediate outputs, and routing decisions. Stateless agents, implemented as Ray actors, pull orchestrators from distributed queues, run their own logic, update the state, and send the orchestrator directly to the next agent named in the message. No central scheduler sits in the inner loop. Each task advances independently at the level of individual messages, avoiding the batch-level synchronization barriers common in frameworks such as Spark or Ray Data.

This architecture minimizes idle times caused by varying trajectory lengths and localizes fault tolerance to individual tasks. Consequently, failure in one orchestrator does not impede the progress of others, enhancing overall system robustness.
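The message-driven flow described above can be sketched in plain Python. This is a minimal single-process illustration, not Matrix's actual code: the `Orchestrator` fields, the `asker` and `answerer` agents, and the `run` driver are all hypothetical names, and in-memory deques with a simple polling loop stand in for distributed queues and concurrently running Ray actors. The point is only that routing state travels inside each message and that one failing task does not stop the others.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Orchestrator:
    """Hypothetical message object: task state plus routing information."""
    task_id: int
    history: list = field(default_factory=list)
    next_agent: str = "asker"  # the routing decision travels with the message
    turns_left: int = 2
    status: str = "running"


def asker(msg):
    if msg.task_id == 2:  # simulate a failure confined to a single task
        raise RuntimeError("simulated tool failure")
    msg.history.append("question")
    msg.next_agent = "answerer"


def answerer(msg):
    msg.history.append("answer")
    msg.turns_left -= 1
    msg.next_agent = "asker" if msg.turns_left > 0 else "done"


# Stateless agents: they keep nothing between messages.
AGENTS = {"asker": asker, "answerer": answerer}


def run(tasks):
    # One queue per agent role; no component holds global task state.
    queues = {name: deque() for name in AGENTS}
    finished = []
    for msg in tasks:
        queues[msg.next_agent].append(msg)
    while any(queues.values()):
        for name, handler in AGENTS.items():
            if not queues[name]:
                continue
            msg = queues[name].popleft()
            try:
                handler(msg)
            except RuntimeError:
                msg.status = "failed"  # only this task is affected
                finished.append(msg)
                continue
            if msg.next_agent == "done":
                msg.status = "done"
                finished.append(msg)
            else:
                queues[msg.next_agent].append(msg)  # forward to the next agent
    return finished


# Tasks with different trajectory lengths progress independently.
results = run([Orchestrator(task_id=i, turns_left=i + 1) for i in range(4)])
summary = {m.task_id: (m.status, len(m.history)) for m in results}
```

Here task 2 fails on its first step while the other three tasks, each with a different number of turns, still run to completion.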

Architecture Overview and Core Components

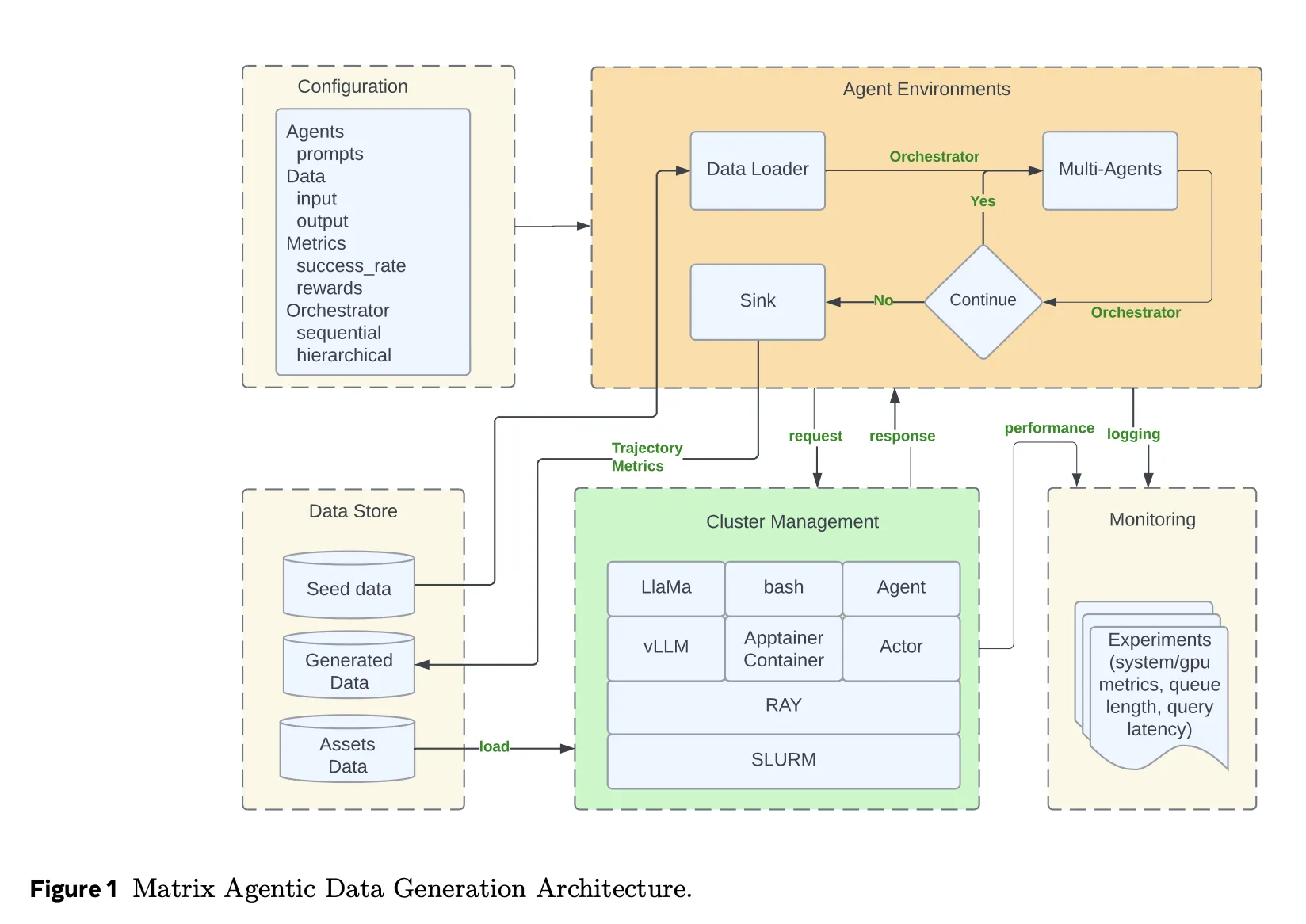

Matrix runs on a Ray cluster, typically deployed on SLURM-managed infrastructure. Ray provides the distributed actors and queues, while Ray Serve exposes LLM endpoints backed by vLLM and SGLang. Matrix can also route requests to external APIs such as Azure OpenAI or Gemini through proxy layers.

Complex tool invocations and auxiliary services run inside Apptainer containers, which isolates the agent runtime from sandboxed code execution, HTTP-based tools, and custom evaluators. Hydra handles configuration, including agent roles, orchestrator types, resource allocation, and input/output schemas. Grafana dashboards integrated with Ray metrics provide real-time monitoring of queue lengths, task backlogs, token throughput, and GPU utilization.

To optimize network bandwidth, Matrix implements message offloading: when conversation histories exceed a predefined size, large payloads are stored in Ray’s object store, and only lightweight references remain within orchestrators. This strategy supports high-throughput LLM serving with gRPC-based model backends without overwhelming cluster communication channels.
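The offloading idea can be illustrated with a small stand-in. This sketch assumes a plain dictionary in place of Ray's object store, and the names `OBJECT_STORE`, `OFFLOAD_THRESHOLD`, `pack_history`, and `unpack_history` are hypothetical, as is the 256-byte threshold. In a real Ray deployment the analogous calls would presumably be `ray.put` and `ray.get`; how Matrix wires them in is not described here.

```python
import json
import uuid

OBJECT_STORE = {}        # stand-in for a cluster object store (assumption)
OFFLOAD_THRESHOLD = 256  # bytes; hypothetical value chosen for the demo


def put(payload):
    """Store a payload and return a small string reference to it."""
    ref = f"ref-{uuid.uuid4().hex}"
    OBJECT_STORE[ref] = payload
    return ref


def pack_history(history):
    """Keep small histories inline; replace large ones with a reference."""
    encoded = json.dumps(history).encode("utf-8")
    if len(encoded) <= OFFLOAD_THRESHOLD:
        return {"inline": history}
    return {"ref": put(history)}


def unpack_history(slot):
    """Resolve either form back to the full history."""
    if "inline" in slot:
        return slot["inline"]
    return OBJECT_STORE[slot["ref"]]


short = pack_history(["hi", "hello"])
long_history = [f"turn {i}: " + "x" * 40 for i in range(20)]
big = pack_history(long_history)

# The message now carries only a short reference instead of the full payload.
message_bytes = len(json.dumps(big))
payload_bytes = len(json.dumps(long_history))
```

Only the agent that actually needs the full history has to resolve the reference, so messages passing through the queues stay small.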

Case Study 1: Enhancing Multi-Agent Dialogue with Collaborative Reasoner

Collaborative Reasoner (Coral) is a multi-agent dialogue system where two LLM agents debate a question, challenge each other’s viewpoints, and converge on a final answer. The original Coral implementation relies on a centralized controller to manage thousands of self-collaboration trajectories.

Matrix reimplements this protocol with its peer-to-peer orchestrators and stateless agents. On 31 A100 nodes (248 GPUs) running LLaMA 3.1 8B Instruct, Matrix sets concurrency to 50 queries per GPU across the 248 GPUs, for a total of 12,400 simultaneous conversations. The Coral baseline, by contrast, runs best at 5,000 concurrent conversations on the same hardware.

Matrix produces approximately 2 billion tokens in about 4 hours, whereas Coral generates around 620 million tokens over 9 hours. The reported result is a 6.8-fold increase in token throughput, with both systems maintaining similar agreement accuracy near 47%.

Case Study 2: NaturalReasoning – Curating Reasoning Data from Web Corpora

NaturalReasoning builds a reasoning dataset by processing extensive web text collections. Matrix models this pipeline with three distinct agents: a Filter agent that uses a lightweight classifier to identify English passages likely containing reasoning; a Score agent that employs a larger instruction-tuned model to assign quality scores; and a Question agent that extracts questions, answers, and reasoning chains.

Out of 25 million DCLM web documents, only about 5.45% pass all filters, resulting in roughly 1.19 million question-answer pairs with associated reasoning steps. On a 500,000-document subset, the authors compare several parallelism strategies. The best setup combines data parallelism and task parallelism, splitting the data into 20 partitions with 700 concurrent tasks per partition, which yields 1.61x the throughput of scaling task concurrency alone.
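One way to picture the combination of data and task parallelism is with `asyncio`. The following is a sketch under stated assumptions rather than Matrix's implementation: `process`, `run_partition`, and `run_all` are hypothetical names, the LLM call is replaced by a no-op await, and the partitions share one Python process here instead of being spread across a cluster. The demo uses 4 partitions with 5 tasks each; with the figures above the cap would be 20 x 700 = 14,000 tasks in flight.

```python
import asyncio


async def process(doc):
    # Placeholder for an LLM request; yields control like network I/O would.
    await asyncio.sleep(0)
    return len(doc)


async def run_partition(docs, max_concurrent):
    # Task parallelism: cap the number of in-flight tasks per partition.
    sem = asyncio.Semaphore(max_concurrent)

    async def guarded(doc):
        async with sem:
            return await process(doc)

    return await asyncio.gather(*(guarded(d) for d in docs))


async def run_all(docs, num_partitions, max_concurrent):
    # Data parallelism: split the input into independent partitions.
    partitions = [docs[i::num_partitions] for i in range(num_partitions)]
    results = await asyncio.gather(
        *(run_partition(p, max_concurrent) for p in partitions)
    )
    return [r for part in results for r in part]


docs = [f"document {i}" for i in range(100)]
outputs = asyncio.run(run_all(docs, num_partitions=4, max_concurrent=5))

# Upper bound on concurrent tasks: partitions x tasks per partition.
peak_in_flight = 4 * 5
```

Each partition enforces its own limit, so a slow partition does not reduce the concurrency available to the others.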

Across the full dataset, Matrix achieves 5,853 tokens per second, outperforming a Ray Data batch baseline (with 14,000 concurrent tasks) that reaches 2,778 tokens per second. This 2.1x throughput gain stems solely from Matrix’s peer-to-peer, fine-grained scheduling rather than model differences.

Case Study 3: Tau2-Bench – Tool Usage Trajectories in Conversational Agents

Tau2-Bench assesses conversational agents tasked with utilizing tools and databases in customer support scenarios. Matrix models this environment with four agents: a user simulator, an assistant, a tool executor, and a reward calculator, alongside a sink agent that aggregates metrics. Tool APIs and reward mechanisms are adapted from the Tau2 reference implementation and containerized for isolation.

Deployed on a cluster with 13 H100 GPUs and multiple LLM replicas, Matrix generates 22,800 trajectories in approximately 1.25 hours, equating to about 41,000 tokens per second. The baseline Tau2-agent, running on a single node with 500 concurrent threads, produces roughly 2,654 tokens per second and 1,519 trajectories. Both systems maintain comparable average rewards, confirming that Matrix’s speedup does not compromise task fidelity. Overall, Matrix delivers a 15.4x increase in token throughput on this benchmark.
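The throughput ratios quoted for NaturalReasoning and Tau2-Bench follow directly from the tokens-per-second figures in the text. The short check below recomputes them; the `speedup` helper is only an illustrative name.

```python
def speedup(system_tps, baseline_tps):
    """Ratio of two token throughputs, both in tokens per second."""
    return system_tps / baseline_tps


# Figures as quoted in the case studies above.
natural_reasoning = speedup(5853, 2778)  # Matrix vs. Ray Data batch baseline
tau2 = speedup(41_000, 2654)             # Matrix vs. Tau2-agent baseline

print(f"NaturalReasoning: {natural_reasoning:.1f}x")
print(f"Tau2-Bench: {tau2:.1f}x")
```

Rounded to one decimal place, these come out to 2.1x and 15.4x, matching the reported gains.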

Summary of Innovations and Benefits

- Matrix replaces traditional centralized orchestrators with a decentralized, message-driven architecture where each task functions as an autonomous state machine progressing through stateless agents.

- Built entirely on open-source technologies (SLURM, Ray, vLLM, SGLang, and Apptainer), Matrix scales to tens of thousands of concurrent multi-agent workflows for synthetic data generation, benchmarking, and data processing.

- Across three case studies (Collaborative Reasoner, NaturalReasoning, and Tau2-Bench), Matrix achieves 2 to 15.4 times higher token throughput than specialized baselines on identical hardware, while preserving output quality and reward metrics.

- By offloading large conversation histories to Ray’s object store and maintaining only lightweight references in orchestrator messages, Matrix reduces network bandwidth demands and supports high-throughput LLM serving with gRPC-based backends.

Concluding Insights

Matrix is a practical systems contribution that moves multi-agent synthetic data generation from custom scripts to an operational runtime. By encoding both control flow and data flow into orchestrators and delegating execution to stateless peer-to-peer agents on Ray, it decouples scheduling, LLM inference, and tool integration. The case studies suggest that careful system design, rather than novel model architectures, is currently the main lever for scaling synthetic data pipelines efficiently.