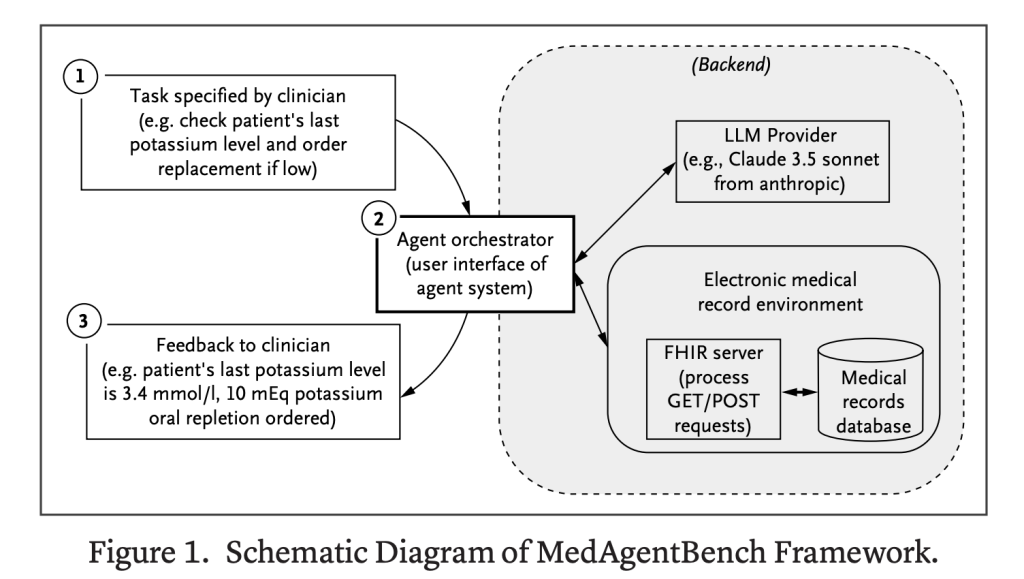

Researchers at Stanford University have introduced MedAgentBench, a benchmark suite for evaluating large language model (LLM) agents in healthcare settings. Unlike traditional question-answering datasets, MedAgentBench simulates a virtual electronic health record (EHR) system in which AI agents must interact with the record, plan, and carry out multi-step clinical tasks. The emphasis therefore shifts from testing static medical knowledge to testing whether an agent can complete tool-driven clinical workflows.

The Growing Need for Agent-Centric Benchmarks in Healthcare AI

Modern LLMs have evolved beyond simple conversational roles, now demonstrating agentic capabilities such as interpreting complex instructions, invoking APIs, synthesizing patient data, and automating intricate clinical tasks. This progression holds promise for mitigating critical challenges in healthcare, including workforce shortages, excessive documentation demands, and administrative bottlenecks.

General-purpose agent benchmarks such as AgentBench and tau-bench already exist. Healthcare, however, has lacked a dedicated, standardized evaluation framework that captures the specifics of medical data interoperability, longitudinal patient histories, and the FHIR (Fast Healthcare Interoperability Resources) standard. MedAgentBench fills this gap with a clinically relevant, reproducible platform tailored to healthcare AI assessment.

Inside MedAgentBench: Composition and Design

Task Framework and Clinical Relevance

MedAgentBench encompasses 300 physician-curated tasks spanning 10 clinical categories, including patient data retrieval, laboratory monitoring, clinical documentation, diagnostic test ordering, specialist referrals, and medication management. Each task typically involves 2 to 3 sequential steps, reflecting authentic workflows encountered in both inpatient and outpatient settings.

Robust Patient Data Foundation

The benchmark uses 100 de-identified patient profiles drawn from Stanford's STARR database. Together, these profiles contain over 700,000 data elements, including lab results, vital signs, diagnoses, procedures, and prescriptions. Privacy is protected through de-identification and small perturbations of the data, while clinical plausibility is preserved.
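The article does not say how the perturbations are applied. As an illustration only, the sketch below assumes two common techniques. First, all of a patient's dates are shifted by one random offset, so intervals between events survive. Second, numeric lab values are scaled by a small random factor. The record layout and the `perturb_record` helper are hypothetical.

```python
import datetime as dt
import random

def perturb_record(record: dict, date_shift_days: int,
                   rng: random.Random, jitter: float = 0.02) -> dict:
    """Return a perturbed copy of a hypothetical lab record; the input is not modified."""
    perturbed = dict(record)
    # One offset per patient: every date moves together, so time between events is kept.
    perturbed["date"] = record["date"] + dt.timedelta(days=date_shift_days)
    # Multiply the value by a factor in [1 - jitter, 1 + jitter] so exact values can't be matched.
    factor = 1 + rng.uniform(-jitter, jitter)
    perturbed["value"] = round(record["value"] * factor, 2)
    return perturbed

rng = random.Random(42)
patient_shift = rng.randint(-365, 365)  # drawn once per patient (assumed policy)
labs = [
    {"code": "potassium", "value": 4.1, "date": dt.date(2023, 3, 1)},
    {"code": "potassium", "value": 3.4, "date": dt.date(2023, 3, 8)},
]
perturbed_labs = [perturb_record(r, patient_shift, rng) for r in labs]
```

The shared per-patient offset keeps clinically meaningful intervals intact, such as a lab repeated one week later, even though the absolute dates change.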

FHIR-Compliant Interactive Environment

MedAgentBench runs in a FHIR-compliant environment that supports both reading from the EHR (GET requests) and writing new records to it (POST requests). Agents can therefore perform realistic clinical actions, such as recording vital signs or placing medication orders, through the same kind of interface that production EHR systems expose.
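The sketch below makes the GET/POST distinction concrete. It builds a FHIR search URL for a patient's most recent observation and a POST body that records a vital sign as an R4 `Observation` resource. The base URL, patient ID, and codes are hypothetical placeholders. The search parameters (`patient`, `code`, `_sort`, `_count`) and resource fields follow the FHIR R4 specification, but this is not the benchmark's own client code. No network request is sent.

```python
import json
from typing import Tuple
from urllib.parse import urlencode

# Hypothetical local FHIR server; the article does not give an endpoint.
FHIR_BASE = "http://localhost:8080/fhir/"

def observation_search_url(patient_id: str, code: str) -> str:
    """GET: latest Observation with the given code for one patient."""
    query = urlencode({"patient": patient_id, "code": code,
                       "_sort": "-date", "_count": 1})
    return f"{FHIR_BASE}Observation?{query}"

def vital_sign_post(patient_id: str, label: str, value: str,
                    when: str) -> Tuple[str, str]:
    """POST: (target URL, JSON body) that records a vital sign as a new Observation."""
    resource = {
        "resourceType": "Observation",
        "status": "final",
        "category": [{"coding": [{
            "system": "http://terminology.hl7.org/CodeSystem/observation-category",
            "code": "vital-signs",
            "display": "Vital Signs",
        }]}],
        "code": {"text": label},
        "subject": {"reference": f"Patient/{patient_id}"},
        "effectiveDateTime": when,
        "valueString": value,
    }
    return f"{FHIR_BASE}Observation", json.dumps(resource)

get_url = observation_search_url("S1234567", "K")
post_url, post_body = vital_sign_post("S1234567", "BP", "118/77 mmHg",
                                      "2023-11-13T10:15:00+00:00")
```

For brevity, the value is a free-text `valueString`. A blood-pressure Observation that conforms to the FHIR vital-signs profile would instead carry separate systolic and diastolic components.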

Evaluation Methodology and Model Performance

- Performance Metric: Task success rate (SR) under a strict pass@1 criterion. Each task is attempted once, reflecting the low tolerance for error in clinical settings.

- Models Assessed: Twelve state-of-the-art LLMs, including GPT-4o, Claude 3.5 Sonnet, Gemini 2.0, DeepSeek-V3, Qwen2.5, and Llama 3.3.

- Agent Orchestration: A baseline orchestration framework employing nine FHIR functions, with a maximum of eight interaction cycles per task.
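The evaluation setup above can be sketched as a bounded agent loop plus a pass@1 scorer. This is a minimal illustration, not the benchmark's orchestration code. The agent and environment are plain callables, the `FINISH` prefix marking a final answer is a hypothetical convention, and the nine FHIR functions are not modeled.

```python
from typing import Callable, List, Optional

MAX_ROUNDS = 8  # interaction budget reported for the baseline

def run_task(agent: Callable[[List[str]], str],
             execute: Callable[[str], str],
             max_rounds: int = MAX_ROUNDS) -> Optional[str]:
    """Run one task; return the agent's final answer, or None if the budget runs out."""
    history: List[str] = []
    for _ in range(max_rounds):
        action = agent(history)
        if action.startswith("FINISH"):  # hypothetical "final answer" marker
            return action
        # Any other action is executed and its result is fed back to the agent.
        history.append(action)
        history.append(execute(action))
    return None

def success_rate(outcomes: List[bool]) -> float:
    """pass@1: one attempt per task, so SR is the percentage solved on that attempt."""
    return 100.0 * sum(outcomes) / len(outcomes)

def scripted_agent(history: List[str]) -> str:
    # Hypothetical agent: one GET request, then a final answer.
    return "GET Observation?patient=S1234567&code=K" if not history else "FINISH([3.4])"

def looping_agent(history: List[str]) -> str:
    # Hypothetical agent that never commits to an answer.
    return "GET Patient"
```

Because the loop stops at `max_rounds`, an agent that keeps issuing requests without committing to an answer simply fails the task. A round limit like the baseline's eight cycles penalizes this kind of unfocused behavior.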

Top Performing AI Agents and Insights

- Claude 3.5 Sonnet v2 led overall with a 69.67% success rate and was strongest on data retrieval tasks, at 85.33%.

- GPT-4o achieved a 64.0% overall success rate, with comparatively balanced performance on retrieval and action-oriented tasks.

- DeepSeek-V3 led among open-weight models with a 62.67% success rate.

- General Observation: Most models performed well on query-based tasks but encountered difficulties with multi-step action tasks that require precise and safe execution.
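Every model is scored on the same 300 tasks, so the reported overall rates translate into whole task counts. The short check below assumes a single scored attempt per task, as pass@1 implies. It recovers those counts and confirms that they reproduce the published percentages.

```python
TOTAL_TASKS = 300
reported = {"Claude 3.5 Sonnet v2": 69.67, "GPT-4o": 64.0, "DeepSeek-V3": 62.67}

def implied_tasks_solved(rate_pct: float, total: int = TOTAL_TASKS) -> int:
    """Nearest whole number of tasks consistent with a reported percentage."""
    return round(rate_pct / 100 * total)

for model, rate in reported.items():
    solved = implied_tasks_solved(rate)
    print(f"{model}: ~{solved}/{TOTAL_TASKS} tasks ({100 * solved / TOTAL_TASKS:.2f}%)")
# Claude 3.5 Sonnet v2: ~209/300 tasks (69.67%)
# GPT-4o: ~192/300 tasks (64.00%)
# DeepSeek-V3: ~188/300 tasks (62.67%)
```

The 5.67-point gap between Claude 3.5 Sonnet v2 and GPT-4o therefore amounts to 17 tasks out of 300.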

Common Pitfalls in Model Execution

Two primary error types were identified during evaluation:

- Non-compliance with instructions: Errors such as invalid API calls or improperly formatted JSON responses.

- Output format inconsistencies: Providing verbose textual answers when structured numerical data was expected.
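Both failure modes can be caught mechanically before an action reaches the EHR. The article does not describe the agent's exact response format, so the sketch below assumes a hypothetical plain-text protocol: `GET`, `POST` with a JSON body, and `FINISH` with a JSON list. `classify_response` flags malformed calls and invalid JSON, and `numeric_answer` rejects prose where a number was expected.

```python
import json
import re
from typing import List, Optional, Tuple

# Hypothetical action format, assumed for illustration:
#   GET <url>                 read from the EHR
#   POST <url>\n<JSON body>   write to the EHR
#   FINISH(<JSON list>)       submit the final answer
FINISH_RE = re.compile(r"^FINISH\((.*)\)$", re.DOTALL)

def classify_response(text: str) -> Tuple[str, Optional[str]]:
    """Return (kind, error) where kind is GET, POST, FINISH, or INVALID."""
    text = text.strip()
    if text.startswith("GET "):
        return "GET", None
    if text.startswith("POST "):
        # Everything after the first newline must be a parseable JSON body.
        _, _, body = text.partition("\n")
        try:
            json.loads(body)
        except json.JSONDecodeError as exc:
            return "INVALID", f"POST body is not valid JSON: {exc.msg}"
        return "POST", None
    match = FINISH_RE.match(text)
    if match:
        try:
            json.loads(match.group(1))
        except json.JSONDecodeError:
            return "INVALID", "FINISH payload is not valid JSON"
        return "FINISH", None
    # Free text that matches no allowed action (instruction non-compliance).
    return "INVALID", "response does not match any allowed action"

def numeric_answer(text: str) -> Optional[List[float]]:
    """Return the answer list if it holds only numbers, else None (e.g. verbose prose)."""
    match = FINISH_RE.match(text.strip())
    if not match:
        return None
    try:
        payload = json.loads(match.group(1))
    except json.JSONDecodeError:
        return None
    if isinstance(payload, list) and all(
        isinstance(x, (int, float)) and not isinstance(x, bool) for x in payload
    ):
        return payload
    return None
```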

These failures show that clinical AI agents need stricter instruction following and more reliable output formatting, because errors in this setting can have serious consequences.

Conclusion: Advancing Healthcare AI with MedAgentBench

MedAgentBench is presented as the first comprehensive benchmark for evaluating LLM agents in realistic EHR environments. It combines 300 clinician-authored tasks, a FHIR-compliant interface, and 100 detailed patient profiles. The leading model, Claude 3.5 Sonnet v2, completes nearly 70% of tasks, but a substantial gap remains between reliable data retrieval and the safe execution of clinical actions. The benchmark has limitations, including its reliance on data from a single institution and its focus on EHR workflows. Even so, it offers an open, reproducible framework that should help drive the development of more reliable and capable healthcare AI agents.