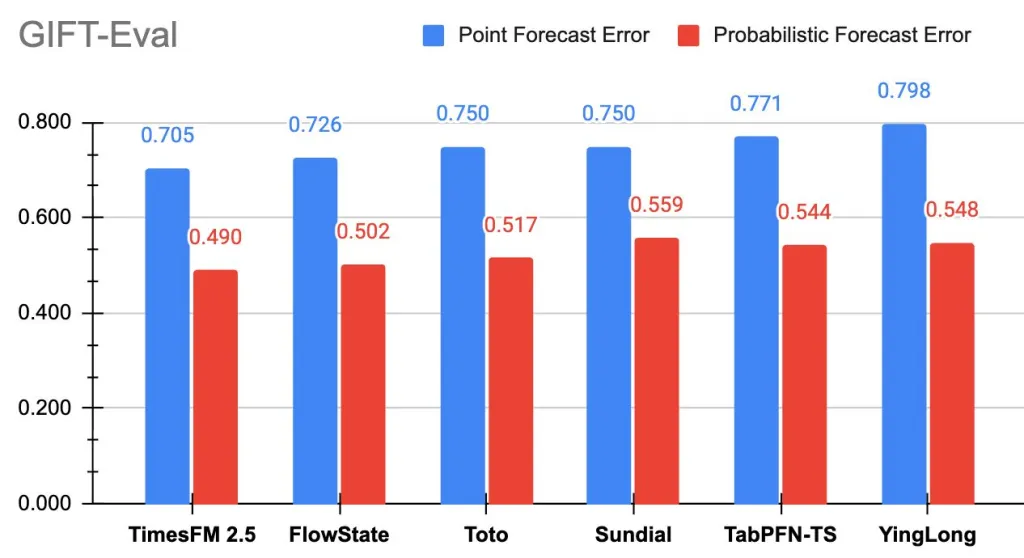

Google Research has unveiled TimesFM-2.5, a compact time-series foundation model with 200 million parameters and a 16,384-point context window. The decoder-only model natively supports probabilistic forecasting and is available on Hugging Face. On the GIFT-Eval benchmark, TimesFM-2.5 currently ranks first among zero-shot foundation models on both MASE (point-forecast accuracy) and CRPS (probabilistic accuracy).
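To make the two metrics concrete, here is a minimal sketch of how MASE and a quantile-based CRPS approximation can be computed. It is an illustration, not GIFT-Eval's scoring code: benchmarks usually report scaled or normalized variants, and the function names here are our own.

```python
from typing import List, Sequence


def mase(y_train: Sequence[float], y_true: Sequence[float],
         y_pred: Sequence[float], season: int = 1) -> float:
    """Mean Absolute Scaled Error.

    The forecast's MAE is divided by the in-sample MAE of a seasonal-naive
    forecast that predicts y[t] with y[t - season]. Values below 1 beat
    that baseline.
    """
    naive_errors = [abs(y_train[t] - y_train[t - season])
                    for t in range(season, len(y_train))]
    if not naive_errors:
        raise ValueError("y_train must be longer than one season")
    scale = sum(naive_errors) / len(naive_errors)
    if scale == 0:
        raise ValueError("seasonal-naive scale is zero; MASE is undefined")
    mae = sum(abs(a - b) for a, b in zip(y_true, y_pred)) / len(y_true)
    return mae / scale


def pinball_loss(y: float, q_pred: float, tau: float) -> float:
    """Quantile (pinball) loss of a single forecast for quantile level tau."""
    diff = y - q_pred
    return max(tau * diff, (tau - 1.0) * diff)


def crps_from_quantiles(y_true: Sequence[float],
                        q_preds: Sequence[Sequence[float]],
                        taus: List[float]) -> float:
    """Approximate CRPS from a finite set of quantile forecasts.

    Uses CRPS = 2 * integral over tau of the pinball loss, replacing the
    integral with an average over the given levels. q_preds[t][k] is the
    forecast of quantile taus[k] at time step t. The result is unnormalized.
    """
    total = 0.0
    for y, qs in zip(y_true, q_preds):
        step = sum(2.0 * pinball_loss(y, q, tau) for q, tau in zip(qs, taus))
        total += step / len(taus)
    return total / len(y_true)
```

For example, `mase([1, 2, 3, 4, 5], [6, 7], [6.5, 7.5])` returns 0.5: the forecast's absolute error is half that of the one-step naive forecast on the training data.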

Understanding Time-Series Forecasting

Time-series forecasting involves analyzing data points collected sequentially over time to detect trends, seasonal patterns, and other temporal dynamics, enabling predictions of future values. This technique is vital across numerous sectors, from anticipating consumer demand in e-commerce to forecasting energy consumption and tracking climate variables. By modeling temporal dependencies and cyclical behaviors, organizations can make informed decisions in rapidly changing environments.
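As a small illustration of exploiting a seasonal pattern, the sketch below implements the classic seasonal-naive forecast, which simply repeats the last observed cycle. It is a common baseline (and the scale used by MASE), not how TimesFM-2.5 forecasts; the function name is our own.

```python
from typing import List, Sequence


def seasonal_naive_forecast(history: Sequence[float], horizon: int,
                            season: int) -> List[float]:
    """Forecast by repeating the most recent full seasonal cycle.

    With season=24 on hourly data, each future hour is predicted by the
    same hour of the last observed day.
    """
    if season <= 0 or len(history) < season:
        raise ValueError("need a positive season and at least one full cycle")
    last_cycle = list(history[-season:])
    return [last_cycle[h % season] for h in range(horizon)]
```

For instance, `seasonal_naive_forecast([10, 20, 30, 11, 21, 31], horizon=5, season=3)` returns `[11, 21, 31, 11, 21]`. Learned models aim to beat this baseline by also capturing trends and interactions between multiple seasonal cycles.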

Advancements in TimesFM-2.5 Compared to Version 2.0

- Parameter Reduction: The model has shrunk from 500 million to 200 million parameters, improving efficiency without sacrificing accuracy.

- Extended Context Length: The maximum input length has grown eightfold, from 2,048 to 16,384 points, allowing the model to capture long-term dependencies and complex seasonal effects.

- Quantile Forecasting: An optional 30-million-parameter quantile head produces continuous quantile forecasts for horizons of up to 1,000 steps, improving uncertainty estimation.

- Input Simplification: The model no longer requires a “frequency” indicator, and new inference options add flip invariance, positivity constraints, and quantile-crossing correction.

- Future Enhancements: Plans include a Flax-based implementation for accelerated inference, reintroduction of covariate support, and expanded documentation to facilitate adoption.
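The three new inference options can be understood as post-processing around a forecaster. The sketch below shows one plausible way to implement each idea against a hypothetical `(history, horizon) -> forecast` interface. It is not the TimesFM library's code or API, and the exact behavior of its options may differ.

```python
from typing import Callable, List, Sequence

# Hypothetical interface: (history, horizon) -> point forecast.
Forecaster = Callable[[Sequence[float], int], List[float]]


def flip_invariant(forecast: Forecaster) -> Forecaster:
    """Symmetrize a forecaster so that negating the input negates the output.

    g(x) = (f(x) - f(-x)) / 2 satisfies g(-x) = -g(x) for any f.
    """
    def wrapped(history: Sequence[float], horizon: int) -> List[float]:
        pos = forecast(history, horizon)
        neg = forecast([-v for v in history], horizon)
        return [(p - n) / 2.0 for p, n in zip(pos, neg)]
    return wrapped


def clip_if_positive(history: Sequence[float],
                     values: Sequence[float]) -> List[float]:
    """Clip forecasts at zero when the observed history is non-negative."""
    if all(v >= 0 for v in history):
        return [max(0.0, v) for v in values]
    return list(values)


def fix_quantile_crossing(quantiles: Sequence[Sequence[float]]) -> List[List[float]]:
    """Sort each step's quantile forecasts (given in increasing level order)
    so that a higher quantile level is never below a lower one."""
    return [sorted(step) for step in quantiles]
```

With a deliberately biased toy model, `toy = lambda h, n: [h[-1] + 1.0] * n`, the call `flip_invariant(toy)([1.0, 3.0], 2)` returns `[3.0, 3.0]`: the constant +1 bias cancels. This sketch symmetrizes point forecasts only; for quantiles, negating the input also swaps level tau with 1 - tau, which a fuller implementation would need to handle. Sorting is the simplest monotone rearrangement for crossed quantiles.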

The Significance of a Longer Context Window

With the ability to process 16,384 historical data points in a single forward pass, TimesFM-2.5 can model multi-seasonal patterns, abrupt regime shifts, and low-frequency trends without tiling the input or stitching hierarchical forecasts together. This reduces reliance on preprocessing heuristics and improves forecast stability, particularly in domains where the available history far exceeds the forecast horizon, such as electricity load forecasting and retail sales prediction.
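A quick calculation shows what the longer window buys. The sketch below trims a series to the newest 16,384 points, left-pads shorter series with a mask, and counts how many seasonal cycles fit. The padding scheme is a generic assumption for illustration, not a description of TimesFM-2.5's internal input handling; the 2,048 figure is derived from the stated eightfold increase.

```python
from typing import List, Optional, Sequence, Tuple

MAX_CONTEXT = 16_384  # TimesFM-2.5's maximum context length
PREVIOUS_CONTEXT = MAX_CONTEXT // 8  # 2,048, implied by the "eightfold" increase


def prepare_context(history: Sequence[float], max_context: int = MAX_CONTEXT
                    ) -> Tuple[List[Optional[float]], List[bool]]:
    """Keep the newest max_context points and left-pad shorter series.

    Returns the padded values plus a mask that is True for real observations.
    """
    if max_context <= 0:
        raise ValueError("max_context must be positive")
    recent = list(history[-max_context:])
    pad = max_context - len(recent)
    return [None] * pad + recent, [False] * pad + [True] * len(recent)


def cycles_in_context(period: int, context: int = MAX_CONTEXT) -> float:
    """Number of repetitions of a seasonal period that fit in the context."""
    return context / period
```

For hourly data, `cycles_in_context(168)` gives about 97.5 weekly cycles and `cycles_in_context(8760)` about 1.87 yearly cycles, whereas `cycles_in_context(8760, PREVIOUS_CONTEXT)` gives only about 0.23. In other words, a 16K window can see an entire year of hourly history in one pass, while a 2K window cannot.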

Research Foundations and Evaluation Framework

The core idea behind TimesFM, a single decoder-only foundation model for time-series forecasting, was first presented in a 2024 ICML paper and described on the Google Research blog. The GIFT-Eval benchmark, developed by Salesforce, provides a standardized evaluation suite spanning diverse domains, frequencies, forecast horizons, and both univariate and multivariate settings. The benchmark is public, with a leaderboard hosted on Hugging Face that supports transparent comparison.
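Because a benchmark like this mixes datasets with very different scales, raw scores cannot simply be averaged. A common approach is to divide each dataset's score by a seasonal-naive baseline's score and take the geometric mean of those ratios. The sketch below assumes that scheme; GIFT-Eval's exact aggregation may differ, and the dataset names and numbers in the example are hypothetical.

```python
import math
from typing import Dict, Iterable


def relative_scores(model: Dict[str, float],
                    baseline: Dict[str, float]) -> Dict[str, float]:
    """Divide each dataset's score by the baseline's score on that dataset."""
    return {name: model[name] / baseline[name] for name in model}


def geometric_mean(values: Iterable[float]) -> float:
    """Geometric mean of positive values, via the mean of logs."""
    values = list(values)
    return math.exp(sum(math.log(v) for v in values) / len(values))
```

With hypothetical scores `{"energy": 0.8, "retail": 1.0}` against a baseline of `{"energy": 1.0, "retail": 2.0}`, the ratios are 0.8 and 0.5 and their geometric mean is about 0.63. Values below 1 mean the model beats the baseline on aggregate, and the geometric mean keeps any single dataset's scale from dominating.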

Highlights and Practical Implications

- Compact and Efficient: TimesFM-2.5 achieves higher accuracy with 40% of its predecessor's parameters (200M versus 500M), enabling faster inference and lower computational costs.

- Enhanced Historical Insight: The extended 16K context length allows the model to leverage deeper historical information, improving forecast reliability.

- Top-Ranked Performance: It currently holds the #1 position among zero-shot foundation models on GIFT-Eval for both point forecast accuracy (MASE) and probabilistic accuracy (CRPS).

- Ready for Deployment: Its efficient architecture and support for quantile forecasting make it well-suited for integration into production environments across industries.

- Wide Accessibility: The model is publicly available on Hugging Face, facilitating immediate experimentation and adoption.
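The efficiency claim can be checked with simple arithmetic on weight memory. The sketch below computes the bytes needed to store the weights alone. The storage precisions are assumptions (the source does not state them), and so is treating the optional 30M-parameter quantile head as separate from the 200M figure.

```python
BYTES_PER_PARAM = {"float32": 4, "bfloat16": 2}

V2_PARAMS = 500_000_000
V25_PARAMS = 200_000_000
QUANTILE_HEAD_PARAMS = 30_000_000  # optional head; assumed counted separately


def weight_memory_gb(num_params: int, dtype: str = "float32") -> float:
    """Memory needed to hold the weights alone, in GB (10**9 bytes).

    Excludes activations and any runtime overhead, so it is a lower bound.
    """
    return num_params * BYTES_PER_PARAM[dtype] / 1e9
```

In float32, `weight_memory_gb(V25_PARAMS)` is 0.8 GB versus 2.0 GB for the 500M-parameter predecessor, a ratio of 0.4. Adding the quantile head in bfloat16 gives `weight_memory_gb(V25_PARAMS + QUANTILE_HEAD_PARAMS, "bfloat16")`, or 0.46 GB. Real memory use is higher once activations for a 16K-point context are included.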

Conclusion

TimesFM-2.5 marks a significant step in the evolution of foundation models for time-series forecasting, from experimental prototypes toward robust, production-ready tools. By cutting the parameter count from 500 million to 200 million while expanding the context window eightfold and leading benchmark evaluations, it sets a new bar for efficiency and forecasting accuracy. With integration into BigQuery and Model Garden under way, TimesFM-2.5 is poised to accelerate the deployment of zero-shot forecasting models in real-world applications.