When an application can call many large language models (LLMs) with different costs and capabilities, deciding which model should handle each query becomes a hard problem. To address it, the Salesforce AI research team has developed xRouter, a routing system trained with reinforcement learning to decide when to answer with its own local model and when to delegate a request to external LLMs, while tracking cost at the token level.

Introducing xRouter: Intelligent LLM Orchestration

xRouter is an orchestration framework centered on tool-calling, built upon the Qwen2.5-7B-Instruct model as its core decision-making engine. This instruction-tuned router is equipped with the ability to invoke various downstream LLMs, craft tailored prompts for them, and determine whether to generate a synthesized response or select the best answer from multiple outputs. The system employs Distributional Advantage Policy Optimization (DAPO) within the Verl reinforcement learning framework and offers an API compatible with OpenAI standards.

Within its ecosystem, xRouter manages over 20 distinct LLM tools categorized into premium, standard, budget, and specialized tiers. These include cutting-edge models such as GPT-5, GPT-4.1, GPT-5-Mini, GPT-5-Nano, o3, Kimi K2, DeepSeek-R1, various Qwen3-235B variants, and GPT-OSS models. A focused offloading subset of 12 models (GPT-5, GPT-5-Mini, GPT-5-Nano, GPT-4o, GPT-4.1, o3, o3-Pro, o4-Mini, GPT-OSS-120B, GPT-OSS-20B, and two Gemini-2.5 variants) enables efficient delegation based on task requirements and cost considerations.

Balancing Accuracy and Cost: The Reward Mechanism

The routing challenge is formulated as a reinforcement learning task where each interaction episode’s reward is a combination of a binary success indicator and a cost penalty. Specifically, the system awards a fixed positive reward when the final response is correct, then deducts a penalty proportional to the normalized cumulative cost of all model invocations. Incorrect answers yield zero reward regardless of cost savings.

Mathematically, for a correct answer the reward is expressed as reward = quality − λ × normalized_cost, where quality is the fixed success reward and λ is the cost penalty coefficient; an incorrect answer receives zero. This “success-gated, cost-shaped” objective ensures the router prioritizes correctness before optimizing for cost efficiency. Training experiments use three distinct cost penalty values, yielding three xRouter-7B variants (xRouter-7B-1, xRouter-7B-2, and xRouter-7B-3), each offering a different balance between accuracy and expense.
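To make the gating concrete, here is a minimal Python sketch of the reward described above. The function name, the `max_cost` normalizer, the clipping to 1.0, and the default success reward of 1.0 are assumptions made for illustration; the article does not specify how cost is normalized or which λ values were used.

```python
def routing_reward(correct: bool, total_cost: float, max_cost: float,
                   lam: float, success_reward: float = 1.0) -> float:
    """Success-gated, cost-shaped reward for one episode (illustrative only)."""
    if max_cost <= 0:
        raise ValueError("max_cost must be positive")
    if not correct:
        # Gate: a wrong answer earns nothing, no matter how cheap it was.
        return 0.0
    # Assumed normalization: cumulative cost of all model calls over a budget cap.
    normalized_cost = min(total_cost / max_cost, 1.0)
    return success_reward - lam * normalized_cost


# Same correct answer, different spend: the cheaper route scores higher.
cheap = routing_reward(correct=True, total_cost=0.1, max_cost=1.0, lam=0.5)
pricey = routing_reward(correct=True, total_cost=0.9, max_cost=1.0, lam=0.5)
wrong = routing_reward(correct=False, total_cost=0.0, max_cost=1.0, lam=0.5)
```

In this sketch, a larger `lam` pushes the router toward cheaper routes among those that still produce a correct answer, which is the knob that distinguishes the three variants.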

Curating Training Data and Designing Learning Signals

The training dataset for xRouter is derived from Reasoning360, a comprehensive benchmark encompassing mathematical problems, coding challenges, and general reasoning tasks. Difficulty levels are estimated using a robust reference model, Qwen3-32B, allowing samples to be stratified into easy, medium, and hard categories. To enhance the router’s decision-making, additional straightforward queries such as casual conversation, retrieval, and factual questions are included, teaching the system when it can confidently respond without external assistance.
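One plausible way to stratify samples is by how often the reference model solves them. The sketch below assumes a per-sample pass rate (fraction of correct attempts by the reference model) and uses made-up thresholds; the article does not state the actual estimation procedure or cutoffs.

```python
def difficulty_bucket(pass_rate: float, easy_min: float = 0.75,
                      hard_max: float = 0.25) -> str:
    """Map a reference-model pass rate to a difficulty label (hypothetical thresholds)."""
    if not 0.0 <= pass_rate <= 1.0:
        raise ValueError("pass_rate must be in [0, 1]")
    if pass_rate >= easy_min:
        return "easy"    # reference model almost always succeeds
    if pass_rate <= hard_max:
        return "hard"    # reference model rarely succeeds
    return "medium"


# Hypothetical pass rates for three samples.
labels = [difficulty_bucket(p) for p in (1.0, 0.5, 0.0)]
```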

Each training example is annotated with model descriptions and pricing information across different tiers. To prevent overfitting to static cost structures, the model catalog is periodically updated and cost values are perturbed during training. Incorrect or inefficient routing decisions, such as delegating to expensive models unnecessarily or producing wrong answers, incur full cost penalties and receive zero reward, providing a clear and effective learning signal.
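The cost perturbation can be pictured as multiplying each listed price by a small random factor before it is shown to the router. The following sketch assumes uniform multiplicative noise of ±20%; the model names, prices, noise distribution, and scale are all placeholders, not values from the xRouter training setup.

```python
import random
from typing import Dict, Optional


def perturb_prices(prices: Dict[str, float], scale: float = 0.2,
                   rng: Optional[random.Random] = None) -> Dict[str, float]:
    """Return a copy of the price table with each entry scaled by a random factor."""
    if not 0.0 <= scale < 1.0:
        raise ValueError("scale must be in [0, 1)")
    rng = rng or random.Random()
    return {name: price * rng.uniform(1.0 - scale, 1.0 + scale)
            for name, price in prices.items()}


# Placeholder catalog: names and prices are invented for this example.
base_prices = {"premium-model": 10.0, "standard-model": 2.0, "budget-model": 0.4}
noisy_prices = perturb_prices(base_prices, scale=0.2, rng=random.Random(0))
```

Because the router sees slightly different prices across episodes, it has to read the price from its prompt rather than memorize a fixed ranking of models.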

Inference Strategies: How xRouter Operates in Real-Time

At inference, xRouter supports three distinct modes of operation:

- Direct Response: The router answers queries using its backbone model without invoking external tools.

- Synthesized Response: It calls multiple downstream models and combines their outputs through its own reasoning process to generate a final answer.

- Selective Response: The router queries several models and employs a specialized select_response function to choose the best reply among them.

These modes are implemented via function calls in an OpenAI-style interface, executed through orchestration engines like LiteLLM and SGLang. Empirical observations reveal that trained xRouter models frequently blend direct and synthesized responses, contrasting with off-the-shelf routers such as GPT-4o, GPT-4.1, GPT-5, Qwen2.5-7B, and Qwen3-8B, which tend to respond directly even when uncertain. This behavioral distinction contributes significantly to xRouter’s efficiency gains.
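As an illustration of how the three modes could map onto OpenAI-style function calling, the sketch below defines a tool entry in the standard `{"type": "function", "function": {...}}` shape and infers the mode from the tool names the router called in one turn. Only the name select_response comes from the article; its `index` parameter, the description text, and the `classify_mode` helper are hypothetical.

```python
from typing import Sequence

# OpenAI-style tool definition. The parameter schema is an assumption.
SELECT_RESPONSE_TOOL = {
    "type": "function",
    "function": {
        "name": "select_response",
        "description": "Pick the best answer among the downstream model replies.",
        "parameters": {
            "type": "object",
            "properties": {
                "index": {
                    "type": "integer",
                    "description": "Zero-based position of the chosen reply.",
                },
            },
            "required": ["index"],
        },
    },
}


def classify_mode(tool_call_names: Sequence[str]) -> str:
    """Infer the inference mode from the tools the router invoked (hypothetical helper)."""
    if not tool_call_names:
        return "direct"        # answered from the backbone model alone
    if "select_response" in tool_call_names:
        return "selective"     # picked one downstream reply verbatim
    return "synthesized"       # called models, then wrote its own answer
```

For example, `classify_mode([])` returns `"direct"`, while a turn that called two downstream models and then select_response is classified as `"selective"`.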

Performance Metrics and Cost Efficiency

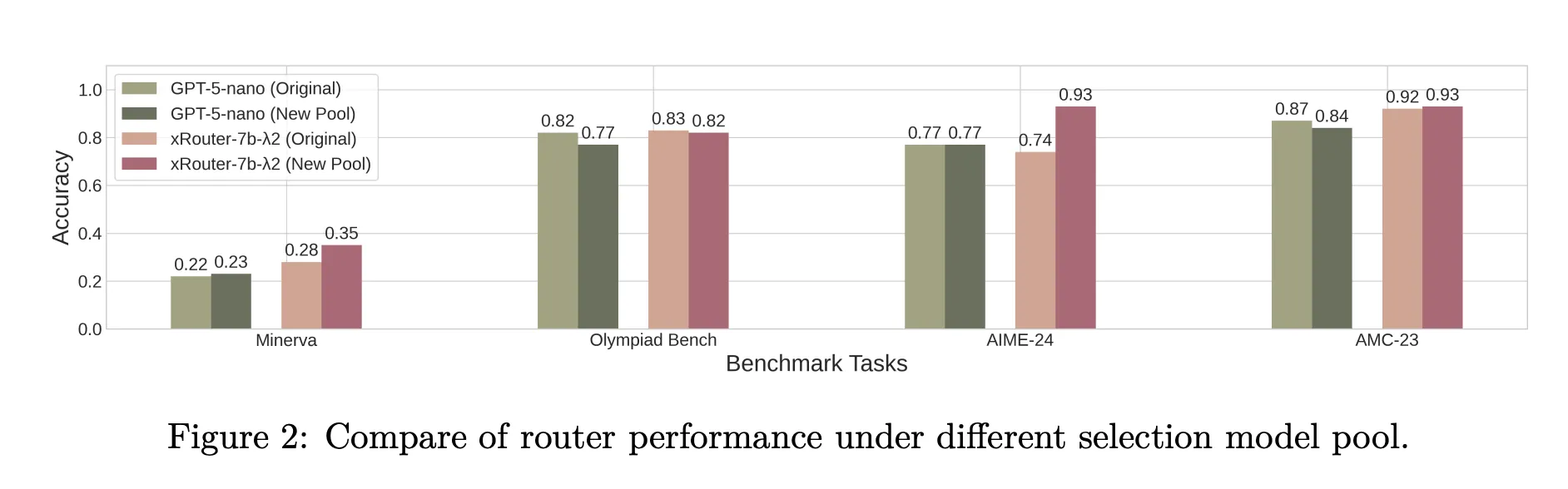

When benchmarked against static routing baselines on datasets including Minerva, MATH-500, Olympiad Bench, AIME-24, AMC-23, Codeforces, Code-Contests, and Human-EvalPlus, xRouter-7B variants consistently outperform untrained routers using the same base model. For instance, xRouter-7B-2 achieves accuracy close to GPT-5 on the challenging Olympiad Bench while incurring only about one-eighth of GPT-5’s evaluation cost.

System-level evaluations on LiveCodeBench v5, GPQA Diamond, AIME25, MT-Bench, IFEval, and LiveBench show that xRouter-7B-3 attains the highest average accuracy on LiveCodeBench v5 among all tested systems while keeping cost moderate. On tasks like GPQA, xRouter variants reach approximately 80-90% of GPT-5’s accuracy while consuming less than 20% of its cost. Overall, the cost-aware reward framework enables inference cost reductions of up to 80% without sacrificing completion rates. The Hugging Face model card further reports up to 60% cost savings for comparable quality under alternative configurations.

The team also introduces the concept of cost utility, defined as accuracy divided by cost. While some open-source single models with very low API fees achieve higher cost utility, they often deliver lower absolute accuracy. xRouter strikes a balance by trading some cost utility for enhanced task performance, aligning with the priorities of most production environments.
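The trade-off is easy to see with a small calculation. The sketch below implements cost utility as accuracy divided by cost, as defined above; the two systems and their numbers are invented purely to illustrate the pattern and are not results from the xRouter evaluation.

```python
def cost_utility(accuracy: float, cost: float) -> float:
    """Cost utility as defined in the text: accuracy divided by cost."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return accuracy / cost


# Invented numbers: a cheap single model versus a router that spends more
# in exchange for higher accuracy.
cheap_model = {"accuracy": 0.60, "cost": 0.5}
router = {"accuracy": 0.85, "cost": 2.0}

cheap_utility = cost_utility(**cheap_model)
router_utility = cost_utility(**router)
```

In this made-up case the cheap model has the higher cost utility but the lower accuracy, which is the situation the paragraph above describes.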

Summary of Insights

- xRouter is a reinforcement learning-based tool-calling router built on Qwen2.5-7B-Instruct, capable of selecting from over 20 external LLMs with explicit cost-awareness.

- Its reward mechanism is success-gated, granting positive rewards only for correct answers, and incorporates a cost penalty scaled by a coefficient λ, resulting in three variants that balance accuracy and cost differently.

- Training on the stratified Reasoning360 dataset, augmented with simple queries and dynamic cost perturbations, equips xRouter to discern when to answer directly and when to delegate, enhancing robustness to evolving model catalogs.

- Across diverse benchmarks in mathematics, coding, and reasoning, xRouter-7B models approach GPT-5-level accuracy on difficult tasks like Olympiad Bench and achieve 80-90% of GPT-5 accuracy on GPQA, while reducing offloading costs by 60-80% depending on the evaluation scenario.

Concluding Remarks

xRouter represents a significant advancement in cost-aware orchestration for heterogeneous LLM deployments. By integrating a mid-sized router trained with DAPO on a carefully curated dataset and employing a success-gated, cost-shaped reward, it consistently delivers near state-of-the-art accuracy while dramatically lowering inference expenses. This approach paves the way for more efficient and scalable AI systems that intelligently balance performance and operational cost.