Integrating Supervised and Reinforcement Fine-Tuning for Large Language Models

Large language models (LLMs) typically undergo refinement after their initial pretraining phase through two main approaches: supervised fine-tuning (SFT) and reinforcement fine-tuning (RFT). Each method offers unique advantages and drawbacks. SFT excels at teaching models to follow instructions by learning from curated examples, but it can result in inflexible behavior and limited adaptability to new tasks. Conversely, RFT leverages reward signals to optimize models for task-specific success, enhancing performance but sometimes causing instability and dependence on a strong initial policy. Although these techniques are often applied sequentially, the interplay between them remains insufficiently explored, prompting the question: how can we develop a cohesive framework that harnesses the structured guidance of SFT alongside the goal-oriented learning of RFT?

Challenges and Advances in Post-Training Strategies for LLMs

Recent research at the crossroads of reinforcement learning (RL) and LLM fine-tuning has accelerated, especially in efforts to cultivate reasoning capabilities within models. Offline RL, which relies on static datasets, often produces subpar policies due to limited data diversity. This limitation has driven interest in hybrid approaches that combine offline and online RL to enhance learning outcomes. In the context of LLMs, the prevailing practice involves initially applying SFT to instill desirable behaviors, followed by RFT to fine-tune performance. However, the nuanced relationship between these two stages is not yet fully understood, and devising effective integration methods remains a significant open problem in the field.

Introducing Prefix-RFT: A Unified Fine-Tuning Framework

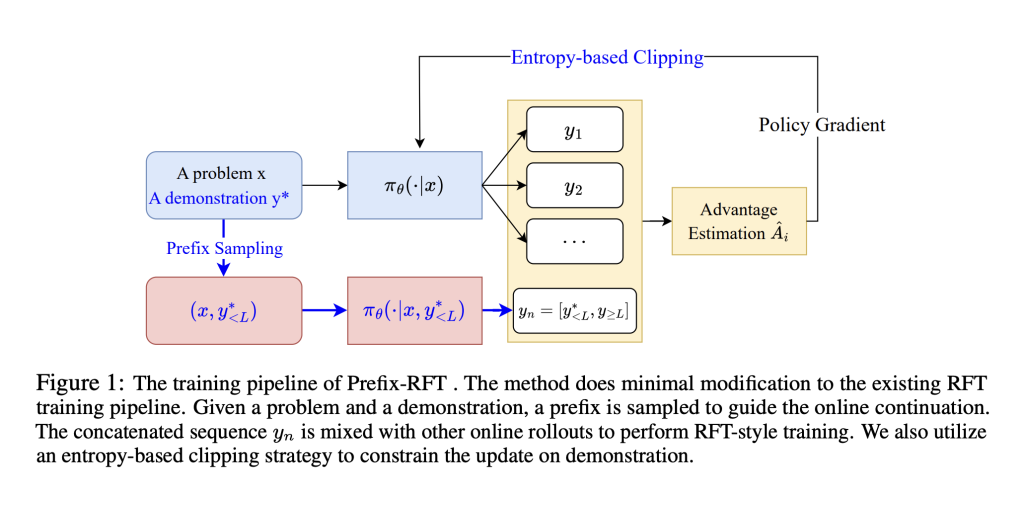

Researchers from institutions including the University of Edinburgh, Fudan University, Alibaba Group, Stepfun, and the University of Amsterdam have proposed a framework named Prefix Reinforcement Fine-Tuning (Prefix-RFT). The approach combines supervised and reinforcement fine-tuning by guiding exploration with partial demonstrations, or “prefixes,” and letting the model generate the rest of the output on its own. Evaluated on complex mathematical reasoning benchmarks, Prefix-RFT consistently surpasses standalone SFT, RFT, and mixed-policy techniques. Its design integrates into existing training pipelines and is robust to variations in the quality and quantity of demonstration data. By blending example-driven learning with exploratory behavior, Prefix-RFT points toward more adaptive and effective LLM training.
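To make the idea of a prefix concrete, here is a minimal Python sketch of how one hybrid training sequence could be assembled. It is an illustration under stated assumptions, not the authors' implementation: `prefix_rollout`, `continue_fn`, and `toy_policy` are hypothetical names, tokens are plain integers, and the prefix is cut by a simple length ratio.

```python
from typing import Callable, List, Tuple


def prefix_rollout(
    prompt: List[int],
    demonstration: List[int],
    prefix_ratio: float,
    continue_fn: Callable[[List[int]], List[int]],
) -> Tuple[List[int], List[bool]]:
    """Build one hybrid response: demonstration prefix + model continuation.

    Returns the response tokens and a mask that is True for tokens copied
    from the demonstration and False for tokens produced by the policy.
    All names here are hypothetical and chosen for illustration only.
    """
    if not 0.0 <= prefix_ratio <= 1.0:
        raise ValueError("prefix_ratio must be in [0, 1]")
    # Assumption: the prefix is the first `prefix_ratio` share of the tokens.
    cut = int(len(demonstration) * prefix_ratio)
    prefix = demonstration[:cut]
    # The policy sees the prompt plus the prefix and finishes the answer.
    continuation = continue_fn(prompt + prefix)
    response = prefix + continuation
    from_demo = [True] * len(prefix) + [False] * len(continuation)
    return response, from_demo


def toy_policy(context: List[int]) -> List[int]:
    """Stand-in for a language model: always emits two fixed tokens."""
    return [101, 102]


response, from_demo = prefix_rollout([1, 2], [10, 11, 12, 13], 0.5, toy_policy)
print(response)   # [10, 11, 101, 102]
print(from_demo)  # [True, True, False, False]
```

The mask separates imitated tokens from explored ones, which is the distinction a training loop would need in order to treat the two parts differently.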

How Prefix-RFT Balances Stability and Exploration

Prefix-RFT draws on the stability of SFT, which imitates expert demonstrations, while keeping the exploratory benefits of RFT’s reward-based optimization. The model receives a partial demonstration as a prefix and completes the task on its own, which reduces overreliance on full supervision. To keep training stable and efficient, Prefix-RFT uses two techniques: entropy-based clipping, which restricts updates on the prefix to its highest-entropy tokens, and a cosine decay scheduler that gradually shortens the prefix over the course of training. Compared with previous methods, this balance makes fine-tuning more adaptive, letting the model explore novel solutions without sacrificing learned behaviors.
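A cosine decay schedule for the prefix length can be sketched as follows. This is a hedged illustration rather than the paper's exact formula: the function name `cosine_prefix_ratio` is hypothetical, the default endpoints echo the 95% and 5% figures reported later in this article, and treating the value as a deterministic fraction of the demonstration length is an assumption.

```python
import math


def cosine_prefix_ratio(
    step: int,
    total_steps: int,
    start: float = 0.95,
    end: float = 0.05,
) -> float:
    """Prefix length as a fraction of the demonstration at a given step.

    Follows a half-cosine curve from `start` (step 0) down to `end`
    (step == total_steps). The function name and the deterministic form
    are assumptions made for illustration.
    """
    if total_steps <= 0:
        raise ValueError("total_steps must be positive")
    # Clamp progress to [0, 1] so out-of-range steps stay at the endpoints.
    progress = min(max(step / total_steps, 0.0), 1.0)
    return end + 0.5 * (start - end) * (1.0 + math.cos(math.pi * progress))


# Print the schedule at a few points of a hypothetical 1000-step run.
for step in (0, 250, 500, 750, 1000):
    print(step, round(cosine_prefix_ratio(step, 1000), 3))
```

The curve changes slowly near both ends and fastest in the middle, so the model spends the early steps with long, SFT-like prefixes and the late steps with short prefixes that leave most of the solution to exploration.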

Performance Highlights on Math Reasoning Benchmarks

Prefix-RFT was evaluated with high-quality offline math data: a filtered set of approximately 46,000 problems drawn from OpenR1-Math-220K. Experiments on Qwen2.5-Math-7B, Qwen2.5-Math-1.5B, and LLaMA-3.1-8B showed gains across benchmarks including AIME 2024/25, AMC, MATH500, Minerva, and OlympiadBench. The framework achieved the highest avg@32 (scores averaged over 32 samples) and pass@1 (success on the first attempt) results, outperforming established methods including RFT, SFT, ReLIFT, and LUFFY. Training used the Dr. GRPO algorithm, updated only the 20% of prefix tokens with the highest entropy, and gradually reduced the prefix length from 95% to 5%. Under this setup the SFT loss stayed at an intermediate level, indicating a balance between imitation and exploration, particularly on challenging problem sets.
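The top-20% entropy selection can be illustrated with a short Python sketch. It is a simplified stand-in, not the authors' code: the helper names `token_entropy` and `top_entropy_mask` are hypothetical, entropies are computed from explicit per-token probability lists, and how the resulting mask enters the Dr. GRPO loss is not shown.

```python
import math
from typing import List, Sequence


def token_entropy(probs: Sequence[float]) -> float:
    """Shannon entropy (in nats) of one next-token distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def top_entropy_mask(
    entropies: Sequence[float], keep_fraction: float = 0.2
) -> List[bool]:
    """Mark the `keep_fraction` of positions with the highest entropy.

    True means the token would receive a gradient update; False means it
    would be skipped. Keeping at least one token is an assumption made
    here so that short prefixes are never fully masked out.
    """
    n = len(entropies)
    if n == 0:
        return []
    k = max(1, round(keep_fraction * n))
    # Rank positions from highest to lowest entropy and keep the first k.
    ranked = sorted(range(n), key=lambda i: entropies[i], reverse=True)
    keep = set(ranked[:k])
    return [i in keep for i in range(n)]


# Ten prefix positions with made-up entropies; the two largest are kept.
entropies = [0.1, 0.9, 0.2, 0.8, 0.3, 0.05, 0.4, 0.0, 0.6, 0.5]
mask = top_entropy_mask(entropies)
print([i for i, kept in enumerate(mask) if kept])  # [1, 3]
```

High-entropy positions are the ones where the model is least certain about the next token, so restricting updates to them concentrates the imitation signal on the parts of the demonstration the model has not already learned.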

Efficiency and Robustness of Prefix-RFT

Despite its straightforward design, Prefix-RFT consistently outperforms both individual and hybrid fine-tuning baselines across diverse models and datasets. Remarkably, it maintains strong performance even when trained on just 1% of the data (approximately 450 prompts), with only a slight decrease in avg@32 scores from 40.8 to 37.6. The entropy-based token update strategy focusing on the top 20% of tokens proved most effective, yielding the highest benchmark results with more concise outputs. Additionally, the cosine decay scheduler for prefix length contributed to enhanced training stability and improved learning dynamics compared to uniform scheduling, especially on complex tasks like the AIME competition.
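The figures above can be checked with a few lines of arithmetic. The Python sketch below assumes that avg@k means accuracy averaged over k sampled responses per problem and then over problems; the function names `avg_at_k` and `relative_drop` are hypothetical and the toy results are made up for illustration.

```python
from typing import Sequence


def avg_at_k(correct: Sequence[Sequence[bool]]) -> float:
    """Percent accuracy averaged over k samples per problem, then problems.

    `correct[i][j]` says whether sample j for problem i was right. This
    reading of avg@k is an assumption, not a definition from the paper.
    """
    per_problem = [sum(samples) / len(samples) for samples in correct]
    return 100.0 * sum(per_problem) / len(per_problem)


def relative_drop(full: float, reduced: float) -> float:
    """Relative decrease from `full` to `reduced`, in percent."""
    return 100.0 * (full - reduced) / full


# Toy example: two problems with two samples each.
print(avg_at_k([[True, False], [True, True]]))  # 75.0

# 1% of the roughly 46,000 problems, close to the ~450 prompts reported.
subset_size = round(0.01 * 46_000)
print(subset_size)  # 460

# Drop in avg@32 when training on the 1% subset (40.8 -> 37.6).
print(round(relative_drop(40.8, 37.6), 1))
```

In other words, cutting the training data by a factor of 100 costs 3.2 points of avg@32, a relative decrease of under 8%.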

Conclusion: Towards More Adaptive LLM Fine-Tuning

Prefix-RFT represents a significant step forward in unifying supervised and reinforcement fine-tuning techniques for large language models. By leveraging partial demonstrations to guide learning and balancing imitation with exploration, it achieves superior performance and robustness. This framework offers a promising direction for future research and practical applications, enabling the development of LLMs that are both reliable and adaptable across a wide range of challenging tasks.