Understanding the Limitations of Sequential Reasoning in Large Language Models

Traditionally, improving the reasoning of large language models (LLMs) at inference time has meant extending a single, linear chain of thought. This helps up to a point but quickly runs into diminishing returns. For instance, experiments with DeepSeek-R1-distill-Qwen-1.5B show that increasing the token budget from 32,000 to 128,000 tokens yields minimal accuracy improvements. The plateau stems from early token commitment: mistakes made early in the reasoning sequence cascade through subsequent steps, a phenomenon termed Tunnel Vision. This suggests that the bottleneck lies not in the model’s inherent capacity but in the sequential nature of the reasoning process itself.

Diagnosing Tunnel Vision: Why Recovery from Early Errors Is Difficult

To investigate the impact of early errors, researchers tested the model’s ability to recover by forcing it to continue reasoning from deliberately flawed prefixes of varying lengths, ranging from 100 to 1,600 tokens. Accuracy declined steadily as the erroneous prefix grew longer, confirming that once the model commits to an incorrect reasoning path, it struggles to correct itself, even with additional computational resources. This highlights the limitation of sequential compute scaling: resources are disproportionately spent on extending a single flawed trajectory.
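The recovery experiment described above can be sketched as a small evaluation loop. This is a minimal illustration, not the researchers' harness: `continue_fn`, the dictionary keys, the intermediate prefix lengths, and the toy stand-in model are all hypothetical, and a real run would call an actual LLM.

```python
def recovery_accuracy(problems, prefix_lengths, continue_fn):
    """Return accuracy for each flawed-prefix length.

    problems: list of dicts with hypothetical keys "question",
              "flawed_tokens" (a deliberately wrong trace) and "answer".
    continue_fn: hypothetical callable (question, prefix_tokens) -> answer,
                 standing in for a real model call.
    """
    accuracy = {}
    for n in prefix_lengths:
        correct = 0
        for p in problems:
            prefix = p["flawed_tokens"][:n]  # force the first n flawed tokens
            correct += int(continue_fn(p["question"], prefix) == p["answer"])
        accuracy[n] = correct / len(problems)
    return accuracy


def toy_model(question, prefix):
    # Toy stand-in: it "recovers" only when the flawed prefix is short.
    return "right" if len(prefix) <= 200 else "wrong"


problems = [{"question": "q", "flawed_tokens": ["t"] * 1600, "answer": "right"}]
# Only the 100 and 1,600 endpoints come from the text; the rest are assumed.
scores = recovery_accuracy(problems, [100, 200, 400, 800, 1600], toy_model)
```

Plotting `scores` against prefix length for a real model would give the kind of accuracy curve the experiment reports.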

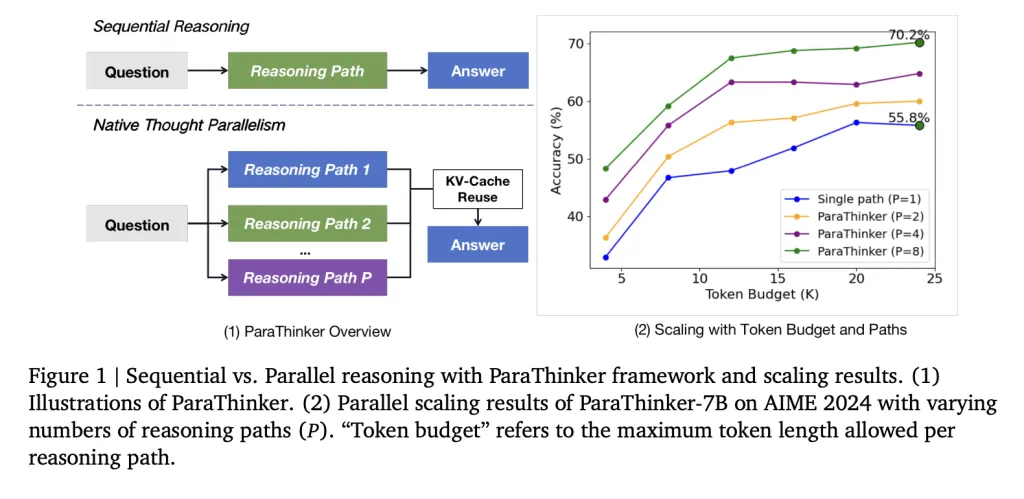

Introducing ParaThinker: Harnessing Parallel Reasoning to Overcome Bottlenecks

Addressing these challenges, a research team from Tsinghua University developed ParaThinker, an innovative framework designed to enable LLMs to generate multiple diverse reasoning paths simultaneously and then integrate them into a more accurate final answer. This approach embodies native thought parallelism, shifting from depth-focused sequential reasoning to breadth-oriented parallel exploration.

Core Components of ParaThinker’s Architecture

- Distinct control tokens (e.g., <think i>) that trigger separate reasoning threads.

- Path-specific positional embeddings that differentiate tokens across parallel streams, preventing confusion during the synthesis phase.

- Two-stage attention masking that maintains independence among reasoning paths during generation and enables controlled merging when producing the final output.
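To make the two-stage masking idea concrete, here is a minimal sketch in plain Python. It assumes a flattened token layout of prompt, then each reasoning path, then the summary; that layout, the function name, and the nested-list mask are illustrative assumptions rather than ParaThinker's actual implementation, which would operate on tensors.

```python
def build_attention_mask(prompt_len, path_lens, summary_len):
    """mask[q][k] is True when query position q may attend to key position k."""
    # Segment label per position: -1 = prompt, 0..P-1 = path, P = summary.
    segments = [-1] * prompt_len
    for path_id, length in enumerate(path_lens):
        segments += [path_id] * length
    summary_id = len(path_lens)
    segments += [summary_id] * summary_len

    total = len(segments)
    mask = [[False] * total for _ in range(total)]
    for q in range(total):
        for k in range(q + 1):               # causal: never look at later positions
            if segments[k] == -1:            # the shared prompt is visible to everyone
                mask[q][k] = True
            elif segments[q] == summary_id:  # stage 2: the summary sees every path
                mask[q][k] = True
            else:                            # stage 1: a path sees only itself
                mask[q][k] = segments[q] == segments[k]
    return mask, segments


mask, segments = build_attention_mask(prompt_len=2, path_lens=[2, 2], summary_len=1)
```

In this toy layout, the first token of the second path (position 4) can attend to the prompt but not to the first path, while the summary token (position 6) can attend to everything before it.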

Additionally, ParaThinker improves efficiency by reusing key-value (KV) caches from the reasoning phase during summarization, avoiding redundant computations and reducing latency.
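The cache-reuse idea can be illustrated with a toy data structure. In this sketch, `KVCache`, `merge_for_summary`, and `reprefill_cost` are hypothetical names, and strings stand in for key and value tensors; the point is only that the summarization step concatenates entries that already exist instead of encoding the same tokens again.

```python
from dataclasses import dataclass, field


@dataclass
class KVCache:
    """Toy cache: one key entry and one value entry per processed token."""
    keys: list = field(default_factory=list)
    values: list = field(default_factory=list)

    def __len__(self):
        return len(self.keys)


def merge_for_summary(prompt_cache, path_caches):
    """Concatenate cached entries for the summary step; nothing is recomputed."""
    merged = KVCache(list(prompt_cache.keys), list(prompt_cache.values))
    for cache in path_caches:
        merged.keys.extend(cache.keys)
        merged.values.extend(cache.values)
    return merged


def reprefill_cost(prompt_cache, path_caches):
    """Number of tokens a re-prefilling baseline would have to encode again."""
    return len(prompt_cache) + sum(len(c) for c in path_caches)


prompt = KVCache(["pk0", "pk1"], ["pv0", "pv1"])
paths = [KVCache(["a_k0"], ["a_v0"]), KVCache(["b_k0", "b_k1"], ["b_v0", "b_v1"])]
merged = merge_for_summary(prompt, paths)
wasted = reprefill_cost(prompt, paths)
```

Here `wasted` counts the tokens that would be processed a second time without reuse, a cost that grows with the number and length of reasoning paths.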

Training ParaThinker: Multi-Path Reasoning Fine-Tuning

ParaThinker was fine-tuned using supervised learning on datasets containing multiple solution paths per problem. These datasets were created by sampling diverse reasoning trajectories from teacher models such as DeepSeek-R1 and GPT-OSS-20B. Each training example included several <think i> paths alongside a final <summary> answer. To enhance generalization, the <think i> tokens assigned to the paths were sampled at random during training, allowing the model to handle more reasoning paths during inference than it saw in any single training example.
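A training example of this shape might be assembled as follows. This is a sketch under stated assumptions: the function name, the `max_paths` pool size, the newline-joined layout, and the reading of randomized sampling as "draw the path indices at random from a larger pool" are illustrative choices, not details confirmed by the source.

```python
import random


def build_training_example(question, paths, summary, max_paths=8, rng=None):
    """Join several reasoning paths and a final summary into one training string."""
    rng = rng or random.Random()
    # Assumption: draw distinct path indices from a pool larger than len(paths),
    # so indices beyond the number of training paths still appear in the data.
    ids = sorted(rng.sample(range(1, max_paths + 1), len(paths)))
    parts = [question]
    parts += [f"<think {i}>{text}" for i, text in zip(ids, paths)]
    parts.append(f"<summary>{summary}")
    return "\n".join(parts), ids


text, ids = build_training_example(
    "What is 6 * 7?",
    ["6 * 7 = 42.", "7 + 7 + 7 + 7 + 7 + 7 = 42."],
    "42",
    rng=random.Random(0),  # seeded only to make the sketch reproducible
)
```

With two paths and a pool of eight indices, different examples expose the model to different <think i> tokens even though each example contains only two.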

The fine-tuning process utilized Qwen-2.5 models with 1.5 billion and 7 billion parameters, supporting context windows up to 28,000 tokens. Training data was sourced from Open-R1, DeepMath, s1k, and LIMO datasets, supplemented with additional solutions sampled at a temperature of 0.8. The training was conducted on multiple NVIDIA A800 GPUs to handle the computational demands.

Performance Evaluation: ParaThinker’s Impact on Reasoning Benchmarks

ParaThinker was evaluated on several challenging benchmarks, including AIME 2024, AIME 2025, AMC 2023, and MATH-500, demonstrating significant improvements:

- Accuracy Gains:

  - The 1.5B parameter ParaThinker model outperformed sequential baselines by 12.3% and majority voting methods by 4.3%.

  - The 7B parameter variant achieved a 7.5% improvement over sequential approaches and a 2.0% increase compared to majority voting.

  - With eight parallel reasoning paths, the 1.5B ParaThinker reached a 63.2% pass@1 rate, surpassing sequential 7B models operating under similar computational budgets.

- Efficiency Metrics:

  - Parallel reasoning introduced only a 7.1% average latency overhead.

  - Generating 16 reasoning paths incurred less than double the latency of a single path, thanks to optimized GPU memory usage.

- Optimal Termination Strategy: The First-Finish method, which concludes reasoning as soon as the first path completes, outperformed alternatives like Last-Finish and Half-Finish in both speed and accuracy.
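The termination strategies can be compared with a short sketch. Only First-Finish is defined in the text; the readings of Last-Finish (wait for every path) and Half-Finish (stop once half of the paths are done) are assumptions based on their names, and `finish_steps` is a hypothetical list of the decoding step at which each path would naturally end.

```python
def stop_step(finish_steps, strategy="first"):
    """Decoding step at which all parallel paths are cut off."""
    ordered = sorted(finish_steps)
    if strategy == "first":   # stop when the earliest path completes
        return ordered[0]
    if strategy == "last":    # assumed: wait for the slowest path
        return ordered[-1]
    if strategy == "half":    # assumed: stop once half of the paths are done
        return ordered[(len(ordered) + 1) // 2 - 1]
    raise ValueError(f"unknown strategy: {strategy}")


def truncated_lengths(finish_steps, strategy="first"):
    """Tokens each path actually generates under the chosen strategy."""
    stop = stop_step(finish_steps, strategy)
    return [min(step, stop) for step in finish_steps]


finish_steps = [120, 300, 80, 200]
first = truncated_lengths(finish_steps, "first")
half = truncated_lengths(finish_steps, "half")
last = truncated_lengths(finish_steps, "last")
```

One property visible in this sketch is that First-Finish leaves every path at the same length and generates the fewest tokens overall, while Last-Finish generates the most.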

Insights from Ablation Studies: What Drives ParaThinker’s Success?

- Fine-tuning on multi-path datasets alone, without ParaThinker’s architectural enhancements, did not yield performance improvements, underscoring the importance of the model’s design innovations.

- Eliminating thought-specific positional embeddings led to accuracy drops, while naive flattening of token encodings caused severe degradation due to positional information loss over long sequences.

- Baseline methods relying on re-prefilling KV caches showed worsening performance as the number of reasoning paths increased, validating ParaThinker’s cache reuse strategy as a key efficiency booster.

Comparing ParaThinker with Existing Parallel Reasoning Techniques

Traditional parallel reasoning methods such as majority voting, self-consistency, and Tree of Thoughts depend heavily on external verification or post-processing to select the best answer, which limits their scalability. Token-parallel diffusion approaches struggle with reasoning tasks due to inherent sequential dependencies. Architectural solutions like PARSCALE require extensive model restructuring and pretraining. In contrast, ParaThinker maintains the standard Transformer architecture, introducing parallelism directly during the reasoning phase and consolidating multiple KV caches into a unified summarization step, offering a more scalable and efficient solution.
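For contrast, the majority-voting baseline mentioned above amounts to a post-processing step over independently sampled answers. The sketch below is a generic illustration with a hypothetical helper name, not code from any of the cited methods.

```python
from collections import Counter


def majority_vote(answers):
    """Return the most frequent final answer and its share of the votes."""
    counts = Counter(answers)
    answer, votes = counts.most_common(1)[0]
    return answer, votes / len(answers)


answer, share = majority_vote(["42", "41", "42", "42"])
```

Voting of this kind only compares final answers, so the reasoning inside each sampled chain is never combined, whereas ParaThinker's summarization step attends over the paths themselves.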

Conclusion: Embracing Native Thought Parallelism for Future LLM Scaling

ParaThinker reveals that the limitations observed in test-time scaling of LLMs are largely due to the sequential nature of reasoning rather than model capacity constraints. By distributing computational effort across multiple parallel reasoning trajectories instead of elongating single chains, smaller models can outperform much larger counterparts with only a slight increase in latency. This paradigm shift highlights native thought parallelism as a vital avenue for advancing the scalability and effectiveness of future large language models.