How can we effectively assess whether large language models truly grasp Indian languages and cultural nuances in practical scenarios? OpenAI has introduced IndQA, a specialized benchmark designed to measure AI models’ comprehension and reasoning abilities on culturally significant questions posed in various Indian languages.

The Rationale Behind IndQA

Roughly 80% of the world's population does not speak English as a first language, yet benchmarks for non-English AI capabilities remain limited, often relying on simple translation tasks or multiple-choice formats. Popular multilingual benchmarks such as MMMLU and MGSM are now largely saturated: top models achieve nearly identical scores, which makes it hard to track meaningful progress. These benchmarks also reveal little about whether a model understands local contexts, histories, and everyday realities.

India is a natural starting point for region-specific benchmarks because of its linguistic diversity and market significance. The country has roughly a billion people who do not use English as their primary language and 22 official languages, at least seven of which are spoken by more than 50 million people each. India is also the second-largest market for ChatGPT, which underscores the importance of culturally aware AI evaluation.

Scope of the Dataset: Languages and Cultural Domains

IndQA comprises 2,278 questions spanning 12 languages and 10 cultural domains. The dataset was developed in collaboration with 261 domain experts from across India. The languages covered are Bengali, English, Hindi, Hinglish (reflecting common code-switching between Hindi and English), Kannada, Marathi, Odia, Telugu, Gujarati, Malayalam, Punjabi, and Tamil.

The cultural domains represented are Architecture and Design, Arts and Culture, Everyday Life, Food and Cuisine, History, Law and Ethics, Literature and Linguistics, Media and Entertainment, Religion and Spirituality, and Sports and Recreation. Each question is written directly in its target language rather than translated into it, and is accompanied by an English translation for transparency, a detailed grading rubric, and an expert-written ideal answer.
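As a rough illustration of the four components attached to each question, the sketch below models one benchmark item in Python. The class and field names (`RubricCriterion`, `IndQAItem`, `prompt_native`, and so on) are hypothetical and the values are placeholders; the article does not describe the actual file format of the dataset.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class RubricCriterion:
    """One expert-written expectation and its relative importance (hypothetical shape)."""
    description: str
    weight: float


@dataclass(frozen=True)
class IndQAItem:
    """Illustrative record for a single question; not the official schema."""
    language: str          # one of the 12 covered languages
    domain: str            # one of the 10 cultural domains
    prompt_native: str     # question as written in the target language
    prompt_english: str    # English translation, kept for transparency
    ideal_answer: str      # expert-written reference answer
    rubric: Tuple[RubricCriterion, ...]


# Placeholder content only; no real benchmark question is reproduced here.
item = IndQAItem(
    language="Tamil",
    domain="Food and Cuisine",
    prompt_native="<question text in Tamil>",
    prompt_english="<English translation of the question>",
    ideal_answer="<expert-written ideal answer>",
    rubric=(
        RubricCriterion("Names the relevant regional tradition", 3.0),
        RubricCriterion("Explains its cultural significance", 2.0),
    ),
)
```

Keeping the rubric next to the prompt and the ideal answer means a grader has everything it needs for one question in a single record.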

Innovative Rubric-Based Scoring System

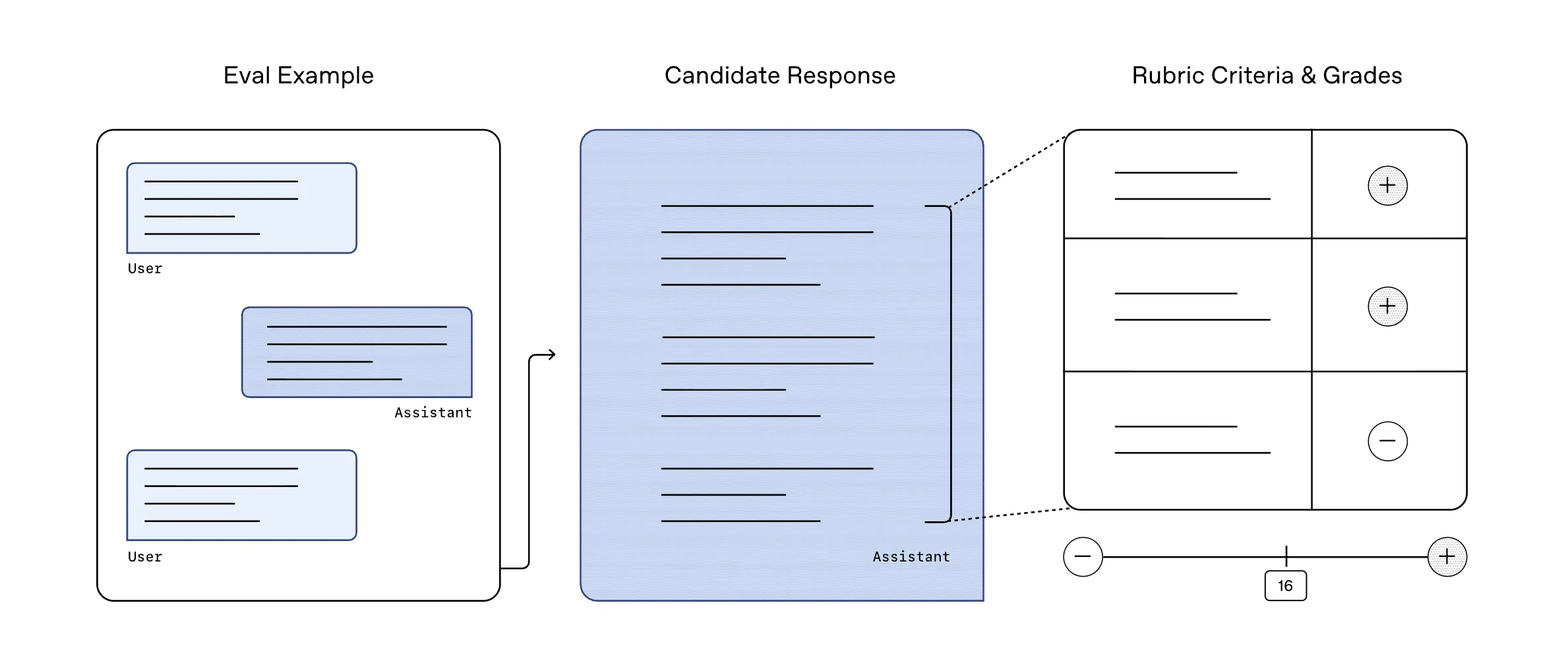

Unlike traditional benchmarks that rely on exact answer matching, IndQA employs a nuanced rubric-based evaluation. For every question, domain specialists define multiple criteria outlining what constitutes a comprehensive and culturally accurate response, assigning weights to each criterion based on importance.

A model-driven grading system then assesses AI-generated answers against these criteria, awarding partial credit where appropriate. This approach mirrors academic grading of short-answer questions, capturing subtlety, cultural relevance, and reasoning quality beyond mere token overlap.
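The weighted partial-credit idea can be sketched in a few lines of Python. This is a minimal sketch that assumes the score is the weight of the satisfied criteria divided by the total weight; the function name `rubric_score` is hypothetical, and the per-criterion judgments, which the article says come from a model-based grader, are supplied here as plain booleans.

```python
from typing import Sequence


def rubric_score(weights: Sequence[float], satisfied: Sequence[bool]) -> float:
    """Return the fraction of total rubric weight earned by an answer.

    weights:   importance assigned by experts to each criterion
    satisfied: grader's verdict for each criterion (assumed to be given)
    """
    if len(weights) != len(satisfied):
        raise ValueError("weights and satisfied must have the same length")
    total = sum(weights)
    if total <= 0:
        raise ValueError("total rubric weight must be positive")
    earned = sum(w for w, ok in zip(weights, satisfied) if ok)
    return earned / total


# An answer that meets the first and third of three criteria
# earns 5 + 2 = 7 of the 10 available points.
example = rubric_score([5, 3, 2], [True, False, True])
```

Under this assumption, an answer that covers the most heavily weighted criteria can score well even if it misses minor ones, which is the behaviour partial credit is meant to capture.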

Methodical Dataset Creation and Rigorous Filtering

OpenAI’s dataset construction followed a meticulous four-phase process:

- Expert Collaboration: Partnering with Indian organizations, OpenAI recruited native speakers with deep subject matter expertise across 10 domains to author challenging, context-rich questions rooted in regional culture, history, and social practices.

- Adversarial Filtering: Each draft question was tested against OpenAI's most advanced models at the time: GPT-4o, OpenAI o3, GPT-4.5, and later GPT-5. Only questions that these models predominantly failed to answer correctly were retained, which keeps the benchmark challenging and leaves headroom for future models to improve.

- Rubric Development: Experts created detailed grading rubrics for each question, which are consistently applied during model evaluations to maintain fairness and rigor.

- Quality Assurance: Ideal answers and English translations underwent peer review and iterative refinement until experts approved their accuracy and clarity.
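The adversarial filtering step above can be sketched as a simple retention rule. The article says only that questions were kept when the reference models "predominantly failed", so the thresholds below (`pass_mark` and `max_pass_fraction`) and the function name `keep_question` are assumptions chosen for illustration, not OpenAI's actual criteria.

```python
from typing import Mapping


def keep_question(
    model_scores: Mapping[str, float],
    pass_mark: float = 0.5,          # assumed score at which an answer counts as correct
    max_pass_fraction: float = 0.5,  # assumed cap on the share of models that may pass
) -> bool:
    """Keep a draft question only if most reference models fail it."""
    if not model_scores:
        raise ValueError("at least one model score is required")
    passes = sum(1 for score in model_scores.values() if score >= pass_mark)
    return passes / len(model_scores) < max_pass_fraction


# Hypothetical rubric scores for one draft question from three reference models.
hard_question = keep_question({"model_a": 0.2, "model_b": 0.9, "model_c": 0.1})
easy_question = keep_question({"model_a": 0.8, "model_b": 0.9, "model_c": 0.1})
```

In this sketch the first question is retained because only one of three models passes, while the second is dropped because two of three do.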

Tracking AI Progress in Indian Language Understanding

IndQA serves as a vital tool for monitoring advancements in AI’s proficiency with Indian languages. OpenAI’s evaluations reveal significant improvements in recent models, including GPT-5 Thinking High, while highlighting areas where further development is needed. Performance metrics are broken down by language and domain, providing granular insights into strengths and weaknesses.
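A per-language or per-domain breakdown amounts to grouping per-question scores and averaging within each group. The sketch below assumes each result is a dictionary with `language`, `domain`, and `score` keys; that layout, the helper name `mean_by`, and the sample numbers are made up for illustration and are not real IndQA results.

```python
from collections import defaultdict
from typing import Dict, Iterable, Mapping


def mean_by(results: Iterable[Mapping], key: str) -> Dict[str, float]:
    """Average the 'score' field of each result, grouped by the given key."""
    buckets = defaultdict(list)
    for row in results:
        buckets[row[key]].append(row["score"])
    return {group: sum(scores) / len(scores) for group, scores in buckets.items()}


# Made-up per-question rubric scores, for illustration only.
results = [
    {"language": "Hindi", "domain": "History", "score": 0.6},
    {"language": "Hindi", "domain": "Food and Cuisine", "score": 0.4},
    {"language": "Tamil", "domain": "History", "score": 0.8},
]

by_language = mean_by(results, "language")
by_domain = mean_by(results, "domain")
```

Reporting both views side by side makes it easier to tell whether a weak score comes from the language itself or from a particular subject area.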

Essential Insights from IndQA

- Culturally Anchored Evaluation: IndQA prioritizes understanding and reasoning about culturally relevant questions in Indian languages, moving beyond mere translation or multiple-choice accuracy.

- Robust, Expert-Curated Dataset: With over 2,200 questions across 12 languages and 10 domains, the benchmark reflects diverse aspects of Indian life, crafted by a large pool of domain experts.

- Rubric-Based Grading Enables Nuance: The evaluation framework supports partial credit and assesses cultural correctness, capturing the complexity of real-world understanding.

- Adversarial Filtering Ensures Challenge: By excluding questions easily answered by top-tier models, IndQA maintains a high bar that encourages continuous AI improvement.

Final Thoughts on IndQA’s Impact

IndQA addresses a critical gap in AI evaluation by focusing on India's rich linguistic and cultural landscape, which includes both widely spoken and low-resource languages. Unlike many multilingual benchmarks that emphasize English-centric content or translation tasks, IndQA offers a rigorous, expert-driven, and culturally sensitive framework. Because its questions were filtered against the strongest models available at the time, it is designed to stay challenging as models improve, which positions IndQA as a useful reference point for assessing Indian language reasoning in next-generation AI systems.