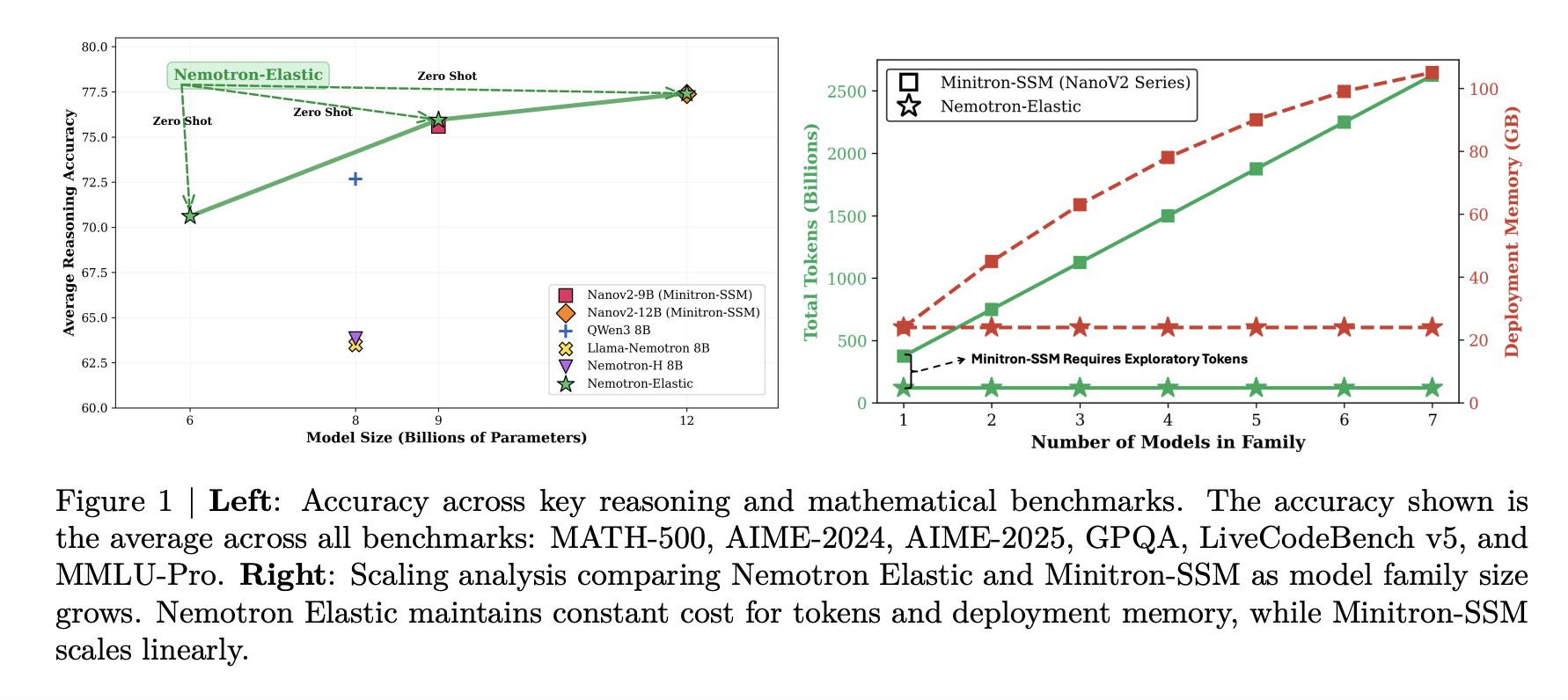

Why do AI development teams still train and store multiple large language models for different deployment targets when a single elastic model can yield several sizes from one training run? NVIDIA is challenging that practice by collapsing the usual ‘model family’ hierarchy into a single training process. Its AI team has introduced Nemotron-Elastic-12B, a 12-billion-parameter reasoning model that embeds nested 9B and 6B variants in the same parameter space. All three sizes can be extracted from one elastic checkpoint, with no separate distillation run for each variant.
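To make "nested variants in the same parameter space" concrete, here is a minimal toy sketch, not NVIDIA's method. It assumes one feed-forward layer whose hidden neurons are reordered once by an importance score, so each smaller variant runs on a prefix of the same stored weights. The layer sizes, the `importance`, `ffn`, and `param_count` helpers, and the L2-norm ranking criterion are all hypothetical stand-ins chosen for illustration.

```python
import random

random.seed(0)
D_MODEL = 4  # hypothetical model width
D_FFN = 8    # hypothetical full FFN width (stands in for the largest variant)

# Shared weights: w_in[j] is neuron j's input row, w_out[j] its output row.
w_in = [[random.uniform(-1, 1) for _ in range(D_MODEL)] for _ in range(D_FFN)]
w_out = [[random.uniform(-1, 1) for _ in range(D_MODEL)] for _ in range(D_FFN)]


def importance(j):
    """Assumed ranking score: L2 norm of neuron j's input weights (a stand-in)."""
    return sum(v * v for v in w_in[j]) ** 0.5


# Reorder neurons once so the most important come first; smaller
# variants then simply use a prefix of this single shared ordering.
order = sorted(range(D_FFN), key=importance, reverse=True)
w_in = [w_in[j] for j in order]
w_out = [w_out[j] for j in order]


def ffn(x, width):
    """Toy FFN forward pass using only the first `width` neurons (ReLU)."""
    out = [0.0] * D_MODEL
    for j in range(width):
        h = max(0.0, sum(a * b for a, b in zip(w_in[j], x)))
        for i in range(D_MODEL):
            out[i] += h * w_out[j][i]
    return out


def param_count(width):
    """Weights touched by a variant of the given width (input + output rows)."""
    return 2 * width * D_MODEL


# Three nested sizes in a 4:3:2 ratio, mirroring 12B:9B:6B.
# Every variant reads the same stored lists; no weights are copied.
widths = {"large": 8, "medium": 6, "small": 4}
x = [0.5, -0.2, 0.1, 0.9]
for name, width in widths.items():
    print(name, param_count(width), [round(v, 3) for v in ffn(x, width)])
```

The point of the prefix layout is storage: keeping separate 12B, 9B, and 6B checkpoints means holding 12 + 9 + 6 = 27B parameters, whereas a fully nested layout stores only the 12B set once. This toy nests a single layer's width; the real model may nest along other dimensions as well.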

Unified Model Family: Multiple Sizes from One Source

In production, teams usually need a spectrum of model sizes: a large model for server workloads, a mid-sized model for edge GPUs, and a compact model for deployments with tight latency or power budgets. Conventionally, each size is trained or distilled independently, so training compute and checkpoint storage grow with every variant added to the family.

Nemotron Elastic challenges this norm by building upon the Nemotron Nano V