Meta AI researchers have developed a technique that condenses recurring reasoning sequences into short, named routines called “behaviors.” These behaviors are then supplied in-context at inference time or distilled into models through fine-tuning. The approach uses up to 46% fewer reasoning tokens on the MATH benchmark while maintaining or improving accuracy. In a self-improvement setting on the AIME dataset, it improves accuracy by up to 10% without altering the underlying model parameters. The work introduces a form of procedural memory for large language models (LLMs), one that stores how to reason rather than what to recall, implemented as a curated, searchable “behavior handbook.”

Addressing Redundancy in Chain-of-Thought Reasoning

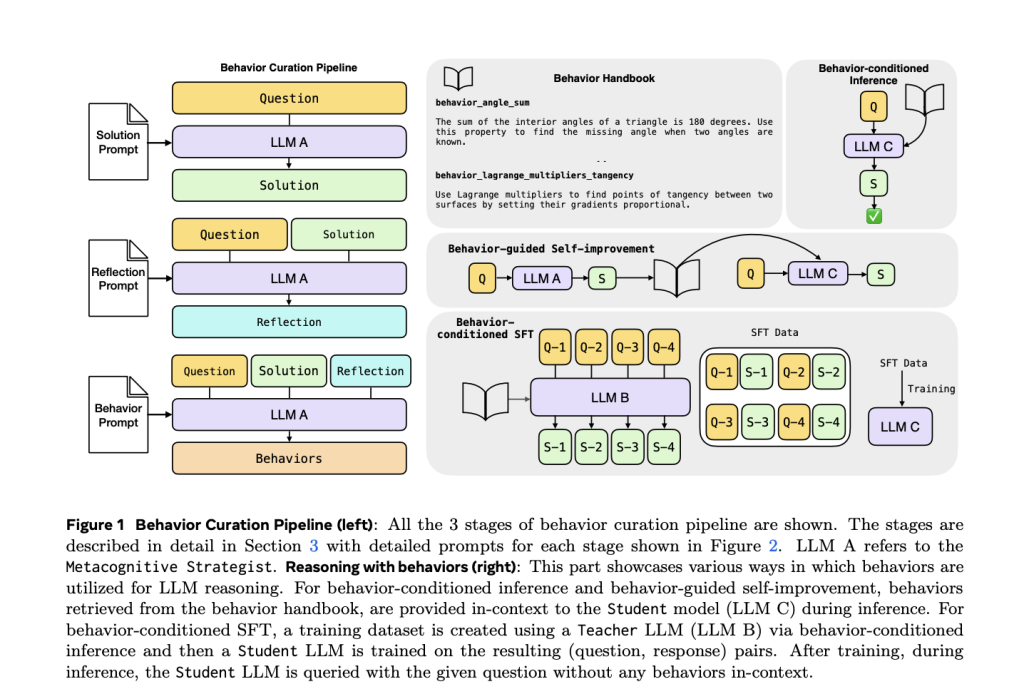

Long chain-of-thought (CoT) reasoning often re-derives the same common subroutines, such as the inclusion-exclusion principle, base conversions, or geometric angle calculations. This redundancy inflates token usage, increases latency, and limits the model’s capacity to explore alternative reasoning paths. Meta’s solution is to encapsulate these recurring reasoning steps into compact, named behaviors, each consisting of a behavior name paired with a concise instruction. These behaviors are extracted from previous reasoning traces through an LLM-driven reflection process and then reused in subsequent problem-solving tasks. Experiments on challenging math datasets such as MATH-500 and AIME-24/25 show significant reductions in output length without sacrificing solution quality.

How the Behavior Extraction and Utilization Pipeline Functions

The system operates through three distinct roles collaborating around a central “behavior handbook”:

- Metacognitive Strategist (R1-Llama-70B): This role involves solving problems to generate detailed reasoning traces, reflecting on these traces to identify generalizable reasoning steps, and formalizing these as (behavior_name → instruction) entries that populate the behavior handbook, effectively serving as procedural memory.

- Teacher Model (LLM B): Utilizes the behavior handbook to produce behavior-conditioned responses, which form the basis of training datasets.

- Student Model (LLM C): During inference, the student model references behaviors in-context or is fine-tuned on behavior-conditioned data. Retrieval of relevant behaviors is topic-based for MATH and embedding-based (using BGE-M3 embeddings combined with FAISS) for AIME.

Explicit prompt templates guide each stage: problem solving, reflection, behavior extraction, and behavior-conditioned inference (BCI). In BCI, the model is prompted to explicitly cite behaviors during reasoning, promoting concise and structured solution paths.
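To make the handbook and the BCI prompt concrete, here is a minimal Python sketch. The `Behavior` class, the `build_bci_prompt` function, and the prompt wording are illustrative assumptions, not the templates used in the original work; only the (behavior_name → instruction) shape and the idea of prepending behaviors come from the description above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Behavior:
    """One handbook entry: a name paired with a concise instruction."""
    name: str
    instruction: str


def build_bci_prompt(question: str, behaviors: list[Behavior]) -> str:
    """Prepend behaviors to a question (hypothetical template wording)."""
    lines = [
        "You may use the following behaviors.",
        "Cite a behavior by name whenever you apply it.",
        "",
    ]
    for b in behaviors:
        lines.append(f"- {b.name}: {b.instruction}")
    lines += ["", f"Problem: {question}", "Solution:"]
    return "\n".join(lines)


# Toy handbook with two entries taken from the examples in this article.
handbook = [
    Behavior(
        "behavior_inclusion_exclusion_principle",
        "subtract overlapping sets to prevent double counting",
    ),
    Behavior(
        "behavior_formalize_word_problem",
        "translate verbal problem statements into algebraic equations",
    ),
]

prompt = build_bci_prompt(
    "How many integers from 1 to 100 are divisible by 2 or 5?", handbook
)
print(prompt)
```

In a real setup, `handbook` would be the subset of entries returned by a retriever rather than a hard-coded list.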

Evaluation Strategies for Behavior-Driven Reasoning

- Behavior-Conditioned Inference (BCI): Retrieves a set number of relevant behaviors and prepends them to the input prompt to guide reasoning.

- Behavior-Guided Self-Improvement: Extracts behaviors from the model’s own previous attempts and reintroduces them as hints to refine subsequent solutions.

- Behavior-Conditioned Supervised Fine-Tuning (BC-SFT): Fine-tunes student models on teacher-generated outputs that incorporate behavior-guided reasoning, enabling the model to internalize behaviors and eliminate the need for retrieval during inference.
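The BC-SFT data flow can be sketched as follows. This is an illustration under stated assumptions, not the authors' code: `retrieve` and `teacher` are hypothetical callables standing in for the retriever and the teacher model, the teacher prompt format is invented, and the sketch assumes the student's training input is the bare question so that behaviors appear only in the target response.

```python
from typing import Callable


def build_bc_sft_examples(
    questions: list[str],
    retrieve: Callable[[str], list[str]],
    teacher: Callable[[str], str],
) -> list[dict[str, str]]:
    """Build (prompt, completion) pairs from behavior-conditioned teacher outputs."""
    examples = []
    for question in questions:
        behaviors = retrieve(question)
        # The teacher sees the behaviors in its prompt (hypothetical format).
        teacher_prompt = "\n".join(
            ["Behaviors:"] + behaviors + ["", f"Problem: {question}"]
        )
        response = teacher(teacher_prompt)
        # Assumption: the student is trained on the question alone, so it must
        # learn to apply behaviors without having them in its input.
        examples.append({"prompt": question, "completion": response})
    return examples


# Hypothetical stubs so the sketch runs without a model or a retriever.
def toy_retrieve(question: str) -> list[str]:
    return ["behavior_formalize_word_problem: translate the statement into equations"]


def toy_teacher(prompt: str) -> str:
    return "Using behavior_formalize_word_problem: 2x + 3 = 11, so x = 4."


question = "Twice a number plus 3 is 11. Find the number."
data = build_bc_sft_examples([question], toy_retrieve, toy_teacher)
print(data[0])
```

The resulting pairs could then be passed to any standard supervised fine-tuning pipeline.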

Performance Highlights on MATH and AIME Benchmarks

- Token Reduction: On the MATH-500 dataset, BCI achieves up to a 46% decrease in reasoning tokens compared to baseline models without behaviors, while maintaining or improving accuracy. This effect is consistent across different model sizes, including R1-Llama-70B and Qwen3-32B, and across various token budgets ranging from 2,048 to 16,384 tokens.

- Enhanced Self-Improvement: On AIME-24, behavior-guided self-improvement surpasses traditional critique-and-revise methods at nearly all token budgets, delivering up to a 10% boost in accuracy as token budgets increase, indicating superior scaling of reasoning quality.

- Superior Fine-Tuning Outcomes: BC-SFT consistently outperforms standard supervised fine-tuning and base models across multiple architectures such as Llama-3.1-8B-Instruct and Qwen variants, achieving higher accuracy with greater token efficiency. Notably, this improvement is not due to easier training data, as teacher correctness rates remain comparable between original and behavior-conditioned datasets, yet BC-SFT models generalize better on AIME-24/25.

Why Procedural Memory Enhances Reasoning Efficiency

The behavior handbook stores procedural knowledge, the “how-to” strategies for reasoning, in contrast to traditional retrieval-augmented generation (RAG) systems, which focus on declarative knowledge or factual recall. By turning verbose, repetitive derivations into concise, reusable steps, the model avoids re-deriving known results and can spend its token budget on novel subproblems. Behavior prompts act as structured cues that steer the model’s decoder toward efficient and accurate reasoning paths. Through BC-SFT, these reasoning patterns are absorbed into the model’s parameters, so behaviors can be invoked implicitly without additional prompt overhead.

Examples of Behaviors in Practice

Behaviors span from general reasoning tactics to specific mathematical procedures, such as:

- behavior_inclusion_exclusion_principle: systematically subtract overlapping sets to prevent double counting.

- behavior_formalize_word_problem: translate verbal problem statements into algebraic equations.

- behavior_point_to_line_distance: calculate distance using the formula |Ax + By + C| / √(A² + B²) for geometric tangency checks.

During behavior-conditioned inference, the model explicitly references these behaviors, resulting in transparent and compact reasoning traces.
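As a worked instance of the point-to-line distance behavior listed above, the following Python sketch applies the formula |Ax + By + C| / √(A² + B²) to a tangency check. The function names and the tolerance parameter are illustrative choices, not part of the handbook.

```python
import math


def point_to_line_distance(a: float, b: float, c: float, x: float, y: float) -> float:
    """Distance from point (x, y) to the line a*x + b*y + c = 0."""
    norm = math.hypot(a, b)  # sqrt(a^2 + b^2)
    if norm == 0:
        raise ValueError("a and b cannot both be zero")
    return abs(a * x + b * y + c) / norm


def is_tangent(a: float, b: float, c: float,
               cx: float, cy: float, r: float, tol: float = 1e-9) -> bool:
    """A line is tangent to a circle when its distance to the center equals r."""
    return math.isclose(point_to_line_distance(a, b, c, cx, cy), r, abs_tol=tol)


# Line 3x + 4y - 25 = 0 and the circle of radius 5 centered at the origin:
# |3*0 + 4*0 - 25| / sqrt(9 + 16) = 25 / 5 = 5, which equals the radius.
distance = point_to_line_distance(3, 4, -25, 0, 0)
print(distance)                         # 5.0
print(is_tangent(3, 4, -25, 0, 0, 5))   # True
```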

Behavior Retrieval and Cost Efficiency

For the MATH dataset, behaviors are retrieved based on problem topics, while for AIME, retrieval relies on semantic similarity via BGE-M3 embeddings and FAISS indexing. Although BCI adds input tokens (the behaviors), these tokens can be precomputed and are processed non-autoregressively, and input tokens are often priced lower than output tokens on commercial APIs. Because BCI significantly reduces output token counts, overall computational cost and latency can still go down. BC-SFT improves efficiency further by removing the need for retrieval during inference.
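The embedding-based retrieval step can be illustrated with a small, dependency-free Python sketch. It is a stand-in rather than the actual setup: the three-dimensional vectors are made-up toy values in place of BGE-M3 embeddings, and a brute-force cosine-similarity ranking replaces the FAISS index. The names `cosine` and `top_k_behaviors` are hypothetical.

```python
import math


def cosine(u: list[float], v: list[float]) -> float:
    """Cosine similarity between two vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def top_k_behaviors(query_vec: list[float],
                    index: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the names of the k behaviors most similar to the query."""
    ranked = sorted(index.items(),
                    key=lambda item: cosine(query_vec, item[1]),
                    reverse=True)
    return [name for name, _ in ranked[:k]]


# Toy vectors (assumed values; a real system would embed the behavior text).
toy_index = {
    "behavior_inclusion_exclusion_principle": [0.9, 0.1, 0.0],
    "behavior_point_to_line_distance": [0.0, 0.2, 0.9],
    "behavior_formalize_word_problem": [0.6, 0.6, 0.1],
}

query = [1.0, 0.2, 0.0]  # toy embedding of a counting problem
top = top_k_behaviors(query, toy_index, k=2)
print(top)
```

Brute-force ranking is adequate for a handbook of a few hundred entries; an approximate nearest-neighbor index becomes worthwhile as the handbook grows.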

Conclusion: Advancing LLM Reasoning with Procedural Memory

Meta’s behavior handbook framework brings procedural memory to large language models by abstracting repetitive reasoning steps into reusable behaviors. Applied through behavior-conditioned inference or distilled via supervised fine-tuning, the method achieves up to a 46% reduction in reasoning tokens while maintaining or improving accuracy, and it delivers accuracy gains of up to 10% in self-improvement settings. The approach is straightforward to implement, requiring only an index, a retriever, and optional fine-tuning, and it produces auditable, interpretable reasoning traces. Open challenges include extending the method beyond mathematical domains and managing a growing repository of behaviors.