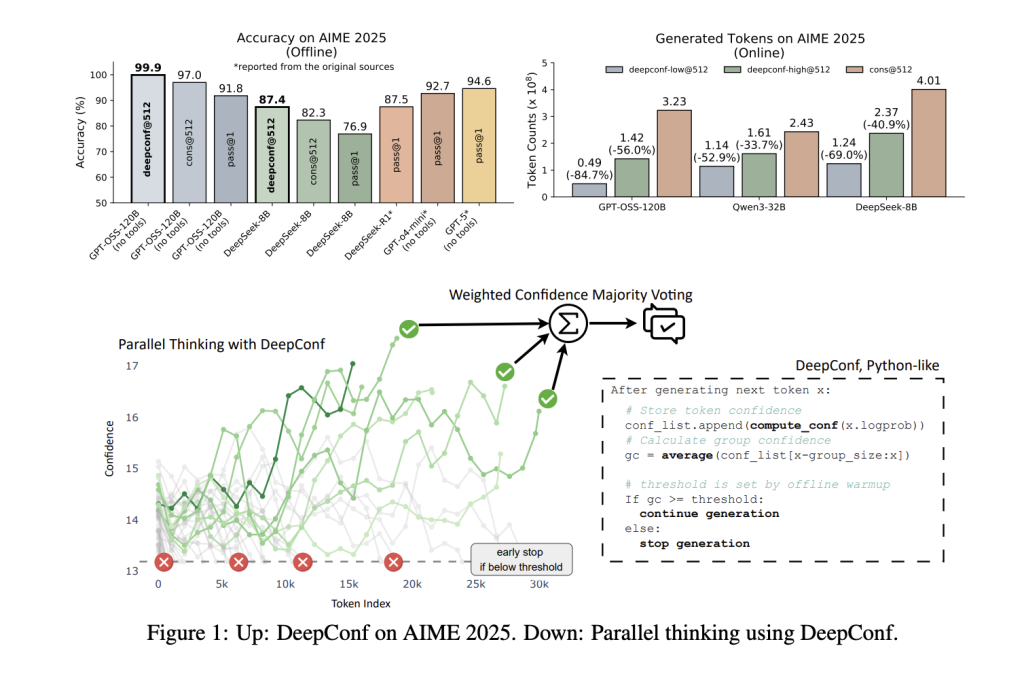

Large language models (LLMs) have markedly advanced machine reasoning, and techniques such as parallel reasoning with self-consistency are among the most widely used ways to improve it. These methods face a basic trade-off: sampling many reasoning paths improves accuracy, but it also multiplies the compute cost. To address this, researchers from Meta AI and UCSD developed Deep Think with Confidence (DeepConf), a framework that largely removes the trade-off. DeepConf pairs state-of-the-art reasoning accuracy with large efficiency gains. For instance, it reaches 99.9% accuracy on the demanding AIME 2025 math contest with the open-source GPT-OSS-120B model while generating up to 85% fewer tokens than traditional parallel reasoning methods.

The Motivation Behind DeepConf

Parallel reasoning, often implemented as self-consistency with majority voting, is the prevailing approach to improving LLM reasoning: multiple candidate solutions are generated, and the most frequent answer is selected. Although effective, this approach suffers from diminishing returns: accuracy gains plateau or even reverse as more reasoning paths are sampled, because low-quality or erroneous traces can skew the majority vote. In addition, producing hundreds or thousands of reasoning sequences per query is computationally expensive and slow.

DeepConf overcomes these limitations by using the model’s own confidence signals. Instead of treating all reasoning paths equally, it discards low-confidence trajectories, either during generation (online) or after generation (offline), and bases the final decision on the most trustworthy reasoning chains. The approach is model-agnostic, requires no additional training or hyperparameter tuning, and can be integrated into an existing model or inference pipeline with minimal coding effort.

DeepConf’s Mechanism: Confidence as a Compass

DeepConf introduces novel metrics to quantify and utilize confidence throughout the reasoning process:

- Token-Level Confidence: Calculates the negative average log-probability of the top-k token candidates at each generation step, providing a fine-grained measure of certainty.

- Segment Confidence: Averages token confidence over a sliding window (e.g., 2048 tokens) to smooth out fluctuations and capture intermediate reasoning quality.

- Final Segment Confidence: Focuses on the concluding portion of the reasoning trace, where the final answer typically emerges, to detect late-stage errors.

- Minimum Segment Confidence: Identifies the weakest confidence segment within a trace, often signaling reasoning breakdowns.

- Lowest Percentile Confidence: Averages the lowest-confidence segments within a trace, which are strong predictors of incorrect answers.
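
The metrics above can be sketched in a few lines of Python. This is an illustrative sketch based only on the descriptions in this article, not the authors' implementation: the function names are hypothetical, the `fraction=0.10` default for the lowest-percentile metric is an assumption, and the input is assumed to be the top-k log-probabilities returned by the inference engine at each step.

```python
from typing import List, Sequence


def token_confidence(topk_logprobs: Sequence[float]) -> float:
    """Negative mean log-probability of the top-k candidates at one step.

    A peaked distribution pushes the non-chosen candidates toward very
    negative log-probabilities, so a higher value means more certainty.
    """
    return -sum(topk_logprobs) / len(topk_logprobs)


def segment_confidences(token_confs: Sequence[float], window: int) -> List[float]:
    """Mean token confidence over every sliding window of `window` tokens."""
    if not token_confs:
        raise ValueError("trace has no tokens")
    if len(token_confs) <= window:
        # Trace shorter than one window: treat the whole trace as one segment.
        return [sum(token_confs) / len(token_confs)]
    total = sum(token_confs[:window])
    segments = [total / window]
    for i in range(window, len(token_confs)):
        total += token_confs[i] - token_confs[i - window]  # slide by one token
        segments.append(total / window)
    return segments


def final_segment_confidence(token_confs: Sequence[float], window: int) -> float:
    """Mean confidence over the last `window` tokens of the trace."""
    tail = token_confs[-window:]
    return sum(tail) / len(tail)


def minimum_segment_confidence(token_confs: Sequence[float], window: int) -> float:
    """Confidence of the weakest segment in the trace."""
    return min(segment_confidences(token_confs, window))


def lowest_percentile_confidence(
    token_confs: Sequence[float], window: int, fraction: float = 0.10
) -> float:
    """Mean of the lowest `fraction` of segments (the default is an assumption)."""
    segments = sorted(segment_confidences(token_confs, window))
    k = max(1, int(len(segments) * fraction))
    return sum(segments[:k]) / k
```

For a toy trace with per-token confidences `[4.0, 4.0, 1.0, 1.0, 4.0]` and `window=2`, the segment scores are `[4.0, 2.5, 1.0, 2.5]`, so the minimum segment confidence (1.0) exposes the weak stretch in the middle even though the trace starts and ends confidently.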

These confidence measures are used either to weight votes, giving high-confidence traces more influence, or to filter out low-confidence paths so that only the top η% of traces by confidence are retained. In online mode, DeepConf halts the generation of a reasoning path as soon as its confidence dips below a dynamically set threshold, which significantly cuts unnecessary computation.
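
The offline combination of filtering and weighted voting might look like the following minimal sketch. It assumes each finished trace has already been reduced to an extracted answer plus a single confidence score; the function name and the `keep_fraction` parameter (standing in for η) are hypothetical, and using the raw confidence as the vote weight is an assumption rather than a detail confirmed by this article.

```python
import math
from collections import defaultdict
from typing import Dict, List, Tuple


def confidence_weighted_vote(
    traces: List[Tuple[str, float]], keep_fraction: float = 1.0
) -> str:
    """Pick an answer from (answer, trace_confidence) pairs.

    Only the top `keep_fraction` of traces by confidence are kept, and each
    kept trace votes for its answer with a weight equal to its confidence.
    """
    if not traces:
        raise ValueError("no traces to vote over")
    ranked = sorted(traces, key=lambda t: t[1], reverse=True)
    n_keep = max(1, math.ceil(len(ranked) * keep_fraction))
    weights: Dict[str, float] = defaultdict(float)
    for answer, confidence in ranked[:n_keep]:
        weights[answer] += confidence
    return max(weights, key=lambda a: weights[a])


# Three low-confidence traces agree on "17"; two confident ones say "42".
traces = [("42", 9.0), ("42", 8.5), ("17", 3.0), ("17", 2.5), ("17", 2.0)]
winner = confidence_weighted_vote(traces, keep_fraction=0.4)
```

In this toy example a plain majority vote would return "17", while both the weighted vote over all traces and the filtered vote return "42", which illustrates how low-confidence traces lose their ability to skew the result.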

Impressive Outcomes: Accuracy and Efficiency Gains

DeepConf was evaluated on several challenging reasoning benchmarks (AIME 2024/2025, HMMT 2025, BRUMO25, and GPQA-Diamond) across multiple models, including DeepSeek-8B, Qwen3-8B/32B, and GPT-OSS-20B/120B. Representative results:

| Model | Dataset | Pass@1 Accuracy | Self-Consistency Accuracy (512 samples) | DeepConf Accuracy (512 samples) | Token Change vs. Self-Consistency |
|---|---|---|---|---|---|
| GPT-OSS-120B | AIME 2025 | 91.8% | 97.0% | 99.9% | -84.7% |
| DeepSeek-8B | AIME 2024 | 83.0% | 86.7% | 93.3% | -77.9% |
| Qwen3-32B | AIME 2024 | 80.6% | 85.3% | 90.8% | -56.0% |

Accuracy enhancement: DeepConf improves accuracy by up to 10 percentage points over traditional majority voting, often reaching or approaching benchmark ceilings.

Efficiency breakthroughs: By terminating low-confidence reasoning paths early, DeepConf cuts token generation by 43% to 85% while maintaining, and frequently improving, final accuracy.

Seamless integration: DeepConf requires no retraining or hyperparameter adjustments and can be incorporated into any model or serving framework with roughly 50 lines of additional code.

Lightweight deployment: Implemented as a simple extension to existing inference engines, DeepConf only needs access to token-level log probabilities and a few lines of logic for confidence evaluation and early stopping.

Effortless Adoption: Minimal Code Changes, Maximum Benefits

Integrating DeepConf into inference systems like vLLM is straightforward:

- Enhance the log-probability processor to compute sliding-window confidence scores.

- Insert early stopping checks before emitting each token output.

- Configure confidence thresholds through API parameters, eliminating the need for model retraining.

This design enables any OpenAI-compatible endpoint to support DeepConf with a simple configuration toggle, facilitating rapid adoption in production environments.
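
The early-stopping check described in the steps above can be illustrated independently of any particular serving engine. The sketch below deliberately uses no vLLM API: `steps` is a hypothetical stream of `(token, top-k log-probabilities)` pairs, and `threshold` and `window` stand in for the values a deployment would pass through its API parameters.

```python
from collections import deque
from typing import Deque, Iterable, List, Sequence, Tuple


def generate_with_early_stop(
    steps: Iterable[Tuple[str, Sequence[float]]],
    threshold: float,
    window: int,
) -> Tuple[List[str], bool]:
    """Consume a token stream, stopping once sliding-window confidence drops.

    Returns the emitted tokens and a flag that is True when the trace was cut
    short. The check runs before a token is emitted, so the token that pushes
    the window below the threshold is never returned.
    """
    recent: Deque[float] = deque(maxlen=window)
    running_sum = 0.0
    emitted: List[str] = []
    for token, topk_logprobs in steps:
        confidence = -sum(topk_logprobs) / len(topk_logprobs)
        if len(recent) == window:
            running_sum -= recent[0]  # value about to be evicted by append
        recent.append(confidence)
        running_sum += confidence
        # Only judge the trace once a full window of tokens is available.
        if len(recent) == window and running_sum / window < threshold:
            return emitted, True
        emitted.append(token)
    return emitted, False


# Toy stream: confidence collapses at tokens "c" and "d".
stream = [
    ("a", [-4.0, -4.0]),
    ("b", [-4.0, -4.0]),
    ("c", [-1.0, -1.0]),
    ("d", [-1.0, -1.0]),
    ("e", [-4.0, -4.0]),
]
tokens, stopped = generate_with_early_stop(stream, threshold=2.0, window=2)
```

Here the trace is abandoned once the two-token window average falls to 1.0, so only `a`, `b`, and `c` are emitted. In a real engine the same check would sit in the sampling loop and cancel the request instead of returning.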

Summary

DeepConf, from Meta AI and UCSD, marks a significant advance in LLM reasoning by combining high accuracy with strong computational efficiency. By using the model’s internal confidence signals, it attains near-perfect performance on elite reasoning challenges, a level previously out of reach for open-source models, while drastically reducing computational overhead.

Frequently Asked Questions

How does DeepConf enhance both accuracy and efficiency compared to majority voting?

DeepConf prioritizes reasoning paths with higher model confidence, improving accuracy by up to 10 percentage points across various benchmarks. Simultaneously, it curtails token usage by up to 85% through early termination of low-confidence sequences, delivering superior performance with substantial efficiency gains.

Is DeepConf compatible with all language models and serving frameworks?

Yes. DeepConf is model-agnostic and can be integrated into any inference stack, open-source or commercial, that exposes token-level log probabilities, without model modifications or retraining. Deployment involves minimal code additions (approximately 50 lines for vLLM) and uses those log probabilities for confidence assessment and early stopping.

Does DeepConf necessitate retraining, specialized datasets, or complex tuning?

No. DeepConf operates entirely during inference, requiring no additional training, fine-tuning, or hyperparameter optimization. It utilizes existing log probability outputs and works immediately with standard API configurations, making it robust and scalable for real-world applications.