A collaboration between Stanford University, ETH Zurich, and industry partners including Google Research and Amazon has unveiled OpenTSLM, a suite of Time-Series Language Models (TSLMs) designed to transform the analysis of continuous medical data.

Overcoming the Challenges of Time-Series Data in Medical AI

Healthcare data is inherently temporal, with patient monitoring relying on the continuous tracking of vital signs, biomarkers, and physiological signals such as ECGs, EEGs, and data from wearable devices. Despite the surge in digital health technology, existing large language models (LLMs) have struggled to effectively interpret this type of data due to a fundamental “modality gap.” This gap arises because LLMs are optimized for discrete text tokens, whereas medical time-series data is continuous and complex.

Traditional methods attempted to bridge this divide by converting time-series signals into textual descriptions or static images, but these approaches have proven inefficient and difficult to scale, and they often lose the temporal detail that accurate medical interpretation depends on.
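As a rough illustration of why text serialization scales poorly, the sketch below renders a synthetic signal as a comma-separated string and measures how many characters each sample costs. The sine wave, the 100 Hz rate, and the three-decimal formatting are all assumptions made for the example, and character count is only a crude proxy for LLM token count.

```python
import math

def synthetic_signal(seconds, rate_hz):
    """Hypothetical 1 Hz sine wave standing in for a physiological signal."""
    n = int(seconds * rate_hz)
    return [math.sin(2 * math.pi * i / rate_hz) for i in range(n)]

def serialize_as_text(samples, decimals=3):
    """Render samples as a comma-separated string, the way a text-only model would receive them."""
    return ", ".join(f"{x:.{decimals}f}" for x in samples)

signal = synthetic_signal(seconds=10, rate_hz=100)  # 1000 samples
text = serialize_as_text(signal)

# Characters spent per sample: digits, sign, decimal point, and separator.
chars_per_sample = len(text) / len(signal)
print(f"{len(signal)} samples -> {len(text)} characters ({chars_per_sample:.1f} per sample)")
```

Ten seconds of a single channel already produces thousands of characters, and a multi-hour, multi-channel recording multiplies that accordingly.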

Why Vision-Language Models Fall Short in Time-Series Interpretation

One prevalent workaround has been to transform time-series data into visual formats like line graphs and feed them into Vision-Language Models (VLMs). However, this strategy is inadequate for precise medical data analysis. VLMs are primarily trained on natural images and excel at recognizing objects and scenes, but they are ill-equipped to decode the intricate, sequential patterns embedded in medical time-series visualizations.

For example, when high-frequency signals such as ECG or EEG waveforms are converted into pixel-based images, subtle but clinically significant variations, such as arrhythmias or sleep stage transitions, can be obscured. This loss of detail hampers the model’s ability to detect critical health events, underscoring the need to treat time-series data as a distinct modality rather than as mere images.
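The effect can be sketched with a toy example. Here mean pooling serves as a crude stand-in for what happens when a long recording is squeezed into a fixed number of pixel columns and then resized; real plotting and image pipelines behave differently in detail, and the signal length, spike position, and image width are hypothetical.

```python
def mean_pool(samples, width):
    """Average consecutive samples into `width` columns (crude stand-in for rasterizing)."""
    per_col = len(samples) // width
    return [
        sum(samples[c * per_col:(c + 1) * per_col]) / per_col
        for c in range(width)
    ]

# Hypothetical recording: 3000 flat samples containing one single-sample spike.
signal = [0.0] * 3000
signal[1234] = 1.0

columns = mean_pool(signal, width=300)  # each column summarizes 10 samples

peak_raw = max(signal)       # amplitude of the spike in the raw series
peak_pooled = max(columns)   # amplitude that survives in the pooled view
print(peak_raw, peak_pooled)
```

The spike is still present after pooling, but at a tenth of its original amplitude, which is the kind of dilution that can hide a brief event from a model that only sees the image.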

OpenTSLM: Integrating Time-Series as a Native Data Modality

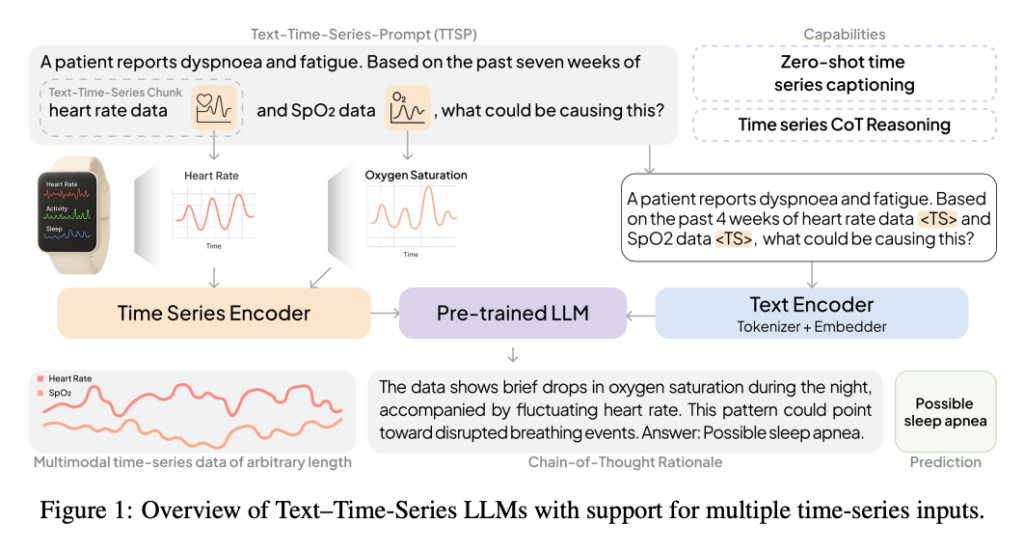

Addressing these limitations, OpenTSLM introduces a groundbreaking approach by embedding time-series data directly into pretrained LLMs (including models like Llama and Gemma) as a native modality. This integration allows for seamless natural language querying and sophisticated reasoning over complex longitudinal health data.

Exploring OpenTSLM Architectures: SoftPrompt vs. Flamingo

1. OpenTSLM-SoftPrompt: Implicit Time-Series Encoding

This model encodes time-series inputs into learnable tokens that are combined with textual tokens through a technique known as soft prompting. While this method is computationally efficient for short sequences, it faces significant scalability challenges. Every additional stretch of signal adds tokens to the prompt, so memory consumption grows steeply as the time series gets longer, limiting its practicality for analyzing extended medical recordings.
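A back-of-the-envelope sketch helps show where the scaling pressure comes from. It assumes a patch-based scheme in which each fixed-size patch of the series becomes one soft token; the patch size of 4 and the 100 text tokens are illustrative values, not figures from the article. It then counts the entries in a single self-attention score matrix, which is one well-understood contributor to memory use and is quadratic in prompt length.

```python
def soft_prompt_length(series_len, patch_size, text_tokens):
    """Prompt length when each patch of the series becomes one soft token (assumed scheme)."""
    n_patches = -(-series_len // patch_size)  # ceiling division
    return text_tokens + n_patches

def attention_scores(prompt_len):
    """Entries in one self-attention score matrix: quadratic in prompt length."""
    return prompt_len * prompt_len

for series_len in (1_000, 10_000, 100_000):
    prompt_len = soft_prompt_length(series_len, patch_size=4, text_tokens=100)
    print(f"{series_len:>7} samples -> {prompt_len:>6} tokens -> "
          f"{attention_scores(prompt_len):>12} attention scores")
```

In this toy setting a hundredfold increase in series length multiplies the attention score count by several thousand, which is why long recordings become impractical under soft prompting.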

2. OpenTSLM-Flamingo: Explicit and Scalable Modeling

Inspired by the Flamingo architecture, this variant explicitly treats time-series data as a distinct modality. It employs a specialized encoder alongside a Perceiver Resampler to condense variable-length sequences into fixed-size representations. These are then integrated with text inputs via gated cross-attention, enabling efficient and scalable processing regardless of data length.
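The two mechanisms named above can be sketched in a few lines of plain Python. This is a heavily simplified illustration, not the OpenTSLM implementation: attention is single-head with no learned projections, the latent queries are random rather than trained, and all sizes (`DIM`, `NUM_LATENTS`, the patch counts) are hypothetical.

```python
import math
import random

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross_attend(queries, keys_values):
    """Single-head dot-product cross-attention without learned projections (simplification)."""
    scale = math.sqrt(len(queries[0]))
    out = []
    for q in queries:
        weights = softmax([dot(q, k) / scale for k in keys_values])
        out.append([sum(w * v[d] for w, v in zip(weights, keys_values))
                    for d in range(len(q))])
    return out

def resample(encoded_series, latents):
    """Perceiver-style resampling: a fixed set of latent queries attends over any number of inputs."""
    return cross_attend(latents, encoded_series)

def gated_cross_attention(text_states, series_latents, gate):
    """Residual update scaled by tanh(gate); gate = 0 leaves the text states untouched."""
    attended = cross_attend(text_states, series_latents)
    g = math.tanh(gate)
    return [[t + g * a for t, a in zip(ts, at)]
            for ts, at in zip(text_states, attended)]

random.seed(0)
DIM, NUM_LATENTS = 8, 4  # hypothetical sizes

def rand_vectors(n):
    return [[random.gauss(0, 1) for _ in range(DIM)] for _ in range(n)]

latents = rand_vectors(NUM_LATENTS)               # would be learned in a real model
short_out = resample(rand_vectors(50), latents)    # 50 encoded patches
long_out = resample(rand_vectors(5000), latents)   # 5000 encoded patches
print(len(short_out), len(long_out))               # same number of latents either way

text = rand_vectors(6)
unchanged = gated_cross_attention(text, long_out, gate=0.0)
mixed = gated_cross_attention(text, long_out, gate=1.0)
```

Two properties matter here. The resampler's output size depends only on the number of latents, so the language model sees the same amount of time-series context whether the input had 50 patches or 5000. And because the gate enters through `tanh`, a gate of zero makes the cross-attention layer an identity on the text states, which in Flamingo-style models lets training start from the unmodified pretrained LLM.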

During training on complex ECG datasets, OpenTSLM-Flamingo demonstrated remarkable memory efficiency, requiring only 40 GB of VRAM compared to 110 GB for the SoftPrompt model using the same LLM backbone.

Benchmarking Success: OpenTSLM Surpasses GPT-4o in Medical Reasoning

To evaluate OpenTSLM’s capabilities, researchers developed three novel Chain-of-Thought (CoT) datasets tailored to medical reasoning tasks: HAR-CoT (human activity recognition), Sleep-CoT (EEG-based sleep staging), and ECG-QA-CoT (ECG question answering).

- Sleep Staging: OpenTSLM achieved an impressive 69.9% F1 score, dramatically outperforming the best fine-tuned text-only baseline, which scored just 9.05%.

- Activity Recognition: The model reached a 65.4% F1 score, showcasing robust performance in interpreting complex sensor data.
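For readers unfamiliar with the metric, the sketch below computes a macro-averaged F1 score, in which per-class F1 values are averaged with equal weight. The article does not state which averaging the benchmark uses, so macro averaging is an assumption here, and the labels are made-up toy data rather than benchmark outputs.

```python
def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores (macro averaging, assumed for illustration)."""
    labels = sorted(set(y_true) | set(y_pred))
    scores = []
    for label in labels:
        pairs = list(zip(y_true, y_pred))
        tp = sum(t == label and p == label for t, p in pairs)
        fp = sum(t != label and p == label for t, p in pairs)
        fn = sum(t == label and p != label for t, p in pairs)
        denom = 2 * tp + fp + fn
        # F1 = 2TP / (2TP + FP + FN); defined as 0 when the class never appears.
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)

# Toy sleep-stage labels, invented for this example.
y_true = ["wake", "wake", "rem", "rem", "n2", "n2"]
y_pred = ["wake", "rem",  "rem", "rem", "n2", "wake"]

score = macro_f1(y_true, y_pred)
print(f"macro F1 = {score:.3f}")
```

Because every class counts equally, macro F1 penalizes a model that ignores rare classes, which is one reason it is a common choice for imbalanced tasks such as sleep staging.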

Notably, even smaller OpenTSLM models with around 1 billion parameters significantly outperformed GPT-4o, which managed only 15.47% on the Sleep-CoT dataset when processing data as text tokens. This highlights the advantage of specialized architectures tailored to time-series data over general-purpose large models.

These results suggest that domain-specific AI models can deliver superior accuracy without the need for massive scale, opening avenues for efficient, on-device medical AI applications that prioritize both performance and resource economy.

Clinical Validation: Building Trust Through Transparent AI Reasoning

Trustworthiness is paramount in medical AI. Unlike conventional models that provide opaque predictions, OpenTSLM offers transparent, human-readable explanations through Chain-of-Thought rationales, enhancing interpretability in clinical environments.

To assess this, five cardiologists at Stanford Hospital reviewed the ECG interpretations generated by the OpenTSLM-Flamingo model. The evaluation revealed that the model delivered accurate or partially accurate interpretations in an outstanding 92.9% of cases. Furthermore, it excelled at incorporating clinical context, receiving positive assessments in 85.1% of reviews, demonstrating advanced reasoning over raw sensor inputs.

Implications and Future Directions in Multimodal AI

The advent of OpenTSLM signifies a major leap forward in multimodal machine learning, effectively bridging the divide between LLMs and continuous time-series data. This innovation not only promises to enhance healthcare diagnostics but also holds potential for diverse fields such as financial analytics, industrial monitoring, and environmental sensing.

To foster further advancements, the research teams from Stanford and ETH Zurich have committed to open-sourcing their models and datasets, encouraging the global AI community to build upon this foundation.