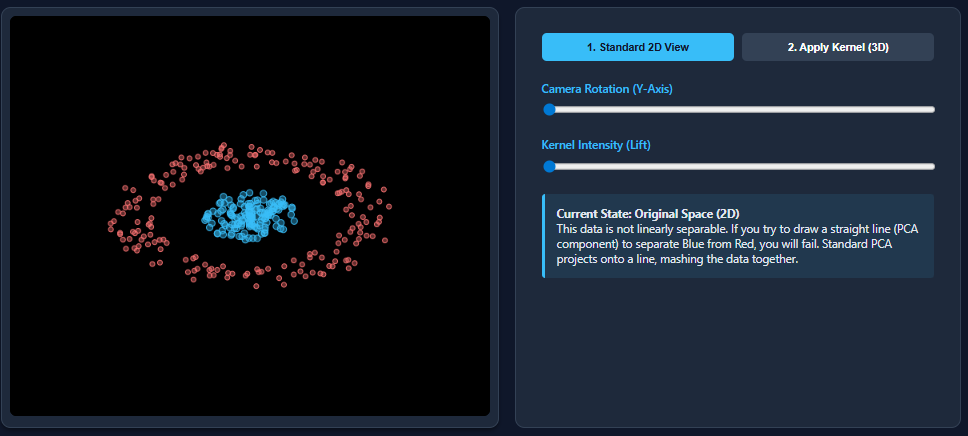

Dimensionality reduction methods such as Principal Component Analysis (PCA) work well when the important structure in a dataset is linear. However, their effectiveness drops sharply on data with nonlinear patterns. A classic example is the two moons dataset: PCA cannot unfold its curved structure, so the two classes still overlap after projection.

Kernel PCA overcomes this limitation by implicitly mapping the data into a higher-dimensional feature space. In that space, nonlinear relationships can become linear, which makes dimensionality reduction far more effective. This article explains how Kernel PCA works. It then uses a practical example to show how Kernel PCA separates a nonlinear dataset that standard PCA cannot.

Understanding PCA and Kernel PCA: A Comparative Overview

Principal Component Analysis (PCA) is a foundational linear technique for reducing the dimensionality of data. It identifies the principal components, which are orthogonal directions along which the data varies the most, and projects the data onto these axes. The projections onto different components are uncorrelated. The components are ranked by the variance they explain, so the first few retain most of the dataset’s variability.
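The description above can be sketched in a few lines of NumPy, as a minimal illustration. It centers the data, eigendecomposes the sample covariance matrix, and projects onto the eigenvectors with the largest eigenvalues. The function name `pca_from_scratch` is hypothetical. Because an eigenvector is only defined up to sign, the sketch is compared with scikit-learn's `PCA` using absolute values.

```python
import numpy as np
from sklearn.datasets import make_moons
from sklearn.decomposition import PCA


def pca_from_scratch(X, n_components):
    """Illustrative PCA via eigendecomposition of the covariance matrix."""
    # Center each feature at zero mean
    X_centered = X - X.mean(axis=0)
    # Sample covariance matrix (n_features x n_features)
    cov = np.cov(X_centered, rowvar=False)
    # eigh returns the eigenvalues of a symmetric matrix in ascending order
    eigvals, eigvecs = np.linalg.eigh(cov)
    # Sort by descending variance and keep the top components
    order = np.argsort(eigvals)[::-1][:n_components]
    components = eigvecs[:, order]
    # Project the centered data onto the principal axes
    return X_centered @ components, eigvals[order]


X, y = make_moons(n_samples=1000, noise=0.02, random_state=123)
Z, variances = pca_from_scratch(X, n_components=2)

# The projected coordinates are uncorrelated (off-diagonal covariance ~ 0)
print(np.round(np.cov(Z, rowvar=False), 6))

# Matches scikit-learn up to a sign flip per component
Z_sk = PCA(n_components=2).fit_transform(X)
print(np.allclose(np.abs(Z), np.abs(Z_sk)))  # True
```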

Despite its utility, PCA is inherently limited to linear transformations. When applied to datasets with nonlinear structures, such as the “two moons” configuration, PCA fails to disentangle the classes effectively, resulting in overlapping clusters after projection.

Kernel PCA addresses this shortcoming by using a kernel function, such as the Radial Basis Function (RBF), polynomial, or sigmoid kernel, to implicitly map the data into a higher-dimensional feature space. In that space, nonlinear patterns can become linear. The algorithm never computes coordinates in that space directly. Instead, it builds a kernel matrix of pairwise similarities between all data points, centers it, and performs PCA through an eigendecomposition of that matrix. This approach, known as the “kernel trick,” enables Kernel PCA to capture complex, nonlinear relationships that standard PCA cannot.
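The steps above can be sketched directly in NumPy, as a minimal illustration:

- Build the RBF kernel matrix K, whose entries are exp(-gamma * ||x_i - x_j||^2).
- Center K in feature space.
- Eigendecompose the centered matrix.
- Scale the leading eigenvectors by the square roots of their eigenvalues to get the projected training points.

The sketch covers training data only, since projecting new points would also need the kernel between new and training points. The function name `rbf_kernel_pca` is hypothetical. The final line compares the result with scikit-learn's `KernelPCA`, ignoring the arbitrary sign of each component.

```python
import numpy as np
from sklearn.datasets import make_moons
from sklearn.decomposition import KernelPCA


def rbf_kernel_pca(X, gamma, n_components):
    """Illustrative kernel PCA with an RBF kernel (training data only)."""
    # Pairwise squared Euclidean distances ||x_i - x_j||^2
    sq_norms = np.sum(X ** 2, axis=1)
    sq_dists = sq_norms[:, None] + sq_norms[None, :] - 2.0 * X @ X.T
    sq_dists = np.maximum(sq_dists, 0.0)  # clip tiny negative round-off

    # RBF kernel matrix: K_ij = exp(-gamma * ||x_i - x_j||^2)
    K = np.exp(-gamma * sq_dists)

    # Center the kernel matrix, i.e. center the implicit feature vectors
    n = K.shape[0]
    one_n = np.full((n, n), 1.0 / n)
    K_c = K - one_n @ K - K @ one_n + one_n @ K @ one_n

    # Eigendecomposition of the symmetric centered kernel (ascending order)
    eigvals, eigvecs = np.linalg.eigh(K_c)
    order = np.argsort(eigvals)[::-1][:n_components]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]

    # Projections of the training points: unit eigenvectors * sqrt(eigenvalue)
    return eigvecs * np.sqrt(eigvals)


X, y = make_moons(n_samples=200, noise=0.02, random_state=123)
Z = rbf_kernel_pca(X, gamma=15, n_components=2)

# Compare with scikit-learn, ignoring the arbitrary sign of each component
Z_sk = KernelPCA(n_components=2, kernel="rbf", gamma=15).fit_transform(X)
print(np.allclose(np.abs(Z), np.abs(Z_sk), atol=1e-6))
```

The n × n matrix `K` is the only object the algorithm works with. This is why the cost grows with the number of samples rather than with the dimension of the implicit feature space.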

Visualizing the Difference: PCA vs. Kernel PCA on Nonlinear Data

To illustrate the contrast between PCA and Kernel PCA, we generate a nonlinear “two moons” dataset, a popular benchmark for testing nonlinear dimensionality reduction techniques. This dataset consists of two interleaving half circles, which are not linearly separable in the original feature space.

Creating the Nonlinear Dataset

```python
import matplotlib.pyplot as plt
from sklearn.datasets import make_moons

# Generate a two moons dataset with slight noise
X, y = make_moons(n_samples=1000, noise=0.02, random_state=123)

# Plot the original dataset
plt.scatter(X[:, 0], X[:, 1], c=y, cmap='viridis')
plt.title("Original Two Moons Dataset")
plt.xlabel("Feature 1")
plt.ylabel("Feature 2")
plt.show()
```

The scatter plot reveals the characteristic crescent shapes of the two moons, clearly nonlinear and intertwined.

Applying Standard PCA

```python
from sklearn.decomposition import PCA

# Perform PCA to reduce to two components
pca = PCA(n_components=2)
X_pca = pca.fit_transform(X)

# Visualize the PCA-transformed data
plt.scatter(X_pca[:, 0], X_pca[:, 1], c=y, cmap='viridis')
plt.title("PCA Projection")
plt.xlabel("Principal Component 1")
plt.ylabel("Principal Component 2")
plt.show()
```

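After the PCA transformation, the two moons remain entangled, demonstrating PCA’s inability to separate nonlinear structures. PCA is a linear projection: it centers the data and then changes to an orthonormal set of axes. This example keeps both components of a two-dimensional dataset, so the result is simply a rotated (possibly reflected) copy of the original moons. No such transformation can “unfold” the curved shapes.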
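A quick NumPy check can confirm that, in this two-component setting, PCA only changes the axes and not the shape of the data. It reuses the article's dataset settings and verifies three facts. First, the principal axes are orthonormal. Second, the output equals the centered data expressed in those axes. Third, every pairwise distance between points is unchanged. The helper `pairwise_dists` is a hypothetical name defined only for this sketch.

```python
import numpy as np
from sklearn.datasets import make_moons
from sklearn.decomposition import PCA

X, y = make_moons(n_samples=1000, noise=0.02, random_state=123)
pca = PCA(n_components=2)
X_pca = pca.fit_transform(X)

# The principal axes are orthonormal: W W^T = I
W = pca.components_
print(np.allclose(W @ W.T, np.eye(2)))  # True

# The PCA output is just the centered data expressed in the new axes
print(np.allclose(X_pca, (X - pca.mean_) @ W.T))  # True


def pairwise_dists(A):
    """Euclidean distance between every pair of rows of A."""
    diff = A[:, None, :] - A[None, :, :]
    return np.sqrt((diff ** 2).sum(axis=-1))


# An orthonormal change of axes preserves all pairwise distances,
# so the moons keep exactly the same shape after PCA
print(np.allclose(pairwise_dists(X), pairwise_dists(X_pca)))  # True
```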

Implementing Kernel PCA with an RBF Kernel

```python
from sklearn.decomposition import KernelPCA

# Apply Kernel PCA with an RBF kernel to capture nonlinear structure;
# keep only the top two kernel principal components for plotting
kpca = KernelPCA(n_components=2, kernel='rbf', gamma=15)
X_kpca = kpca.fit_transform(X)

# Plot the Kernel PCA result
plt.scatter(X_kpca[:, 0], X_kpca[:, 1], c=y, cmap='viridis')
plt.title("Kernel PCA Projection (RBF Kernel)")
plt.xlabel("Kernel Principal Component 1")
plt.ylabel("Kernel Principal Component 2")
plt.show()
```

Kernel PCA separates the two moons into distinct clusters. The RBF kernel implicitly maps the data into a feature space where the classes become linearly separable. A transformation like this can substantially improve subsequent tasks such as classification, clustering, and visualization.

Why Kernel PCA Matters: Benefits and Practical Considerations

The primary objective of dimensionality reduction is not merely to compress data but to reveal its intrinsic structure while preserving meaningful variance. In datasets with nonlinear patterns, Kernel PCA’s nonlinear mapping enables the algorithm to “unfold” complex shapes, making the data more interpretable and easier to analyze.

Once the data is transformed, simple linear models can often distinguish between classes that were not linearly separable in the original feature space, which simplifies downstream machine learning workflows.
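One way to test this claim is to train the same linear classifier on both the PCA features and the Kernel PCA features, then compare cross-validated accuracy. Logistic regression is an illustrative choice here. The sketch reuses the article's dataset settings and gamma=15. No particular accuracy values are claimed; run the code to see the numbers. Each reducer sits inside a pipeline, so it is refit on every training fold and never sees the held-out fold.

```python
from sklearn.datasets import make_moons
from sklearn.decomposition import PCA, KernelPCA
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline

X, y = make_moons(n_samples=1000, noise=0.02, random_state=123)

# Same linear classifier, two different 2-D representations
pca_clf = make_pipeline(PCA(n_components=2), LogisticRegression())
kpca_clf = make_pipeline(
    KernelPCA(n_components=2, kernel="rbf", gamma=15),
    LogisticRegression(),
)

# Mean accuracy over 5 cross-validation folds
pca_acc = cross_val_score(pca_clf, X, y, cv=5).mean()
kpca_acc = cross_val_score(kpca_clf, X, y, cv=5).mean()

print(f"Logistic regression on PCA features:        {pca_acc:.3f}")
print(f"Logistic regression on Kernel PCA features: {kpca_acc:.3f}")
```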

Limitations and Challenges of Kernel PCA

Despite its advantages, Kernel PCA presents several challenges:

- Computational Complexity: Kernel PCA builds an n × n kernel matrix of pairwise similarities between all n data points. Storing it takes O(n²) memory, and a full eigendecomposition of it takes O(n³) time. This can be prohibitive for very large datasets.

- Kernel and Parameter Selection: Choosing an appropriate kernel function and tuning hyperparameters such as gamma often requires trial and error or domain knowledge, and these choices strongly affect the quality of the results.

- Interpretability: The transformed components in Kernel PCA do not correspond directly to original features, making interpretation less intuitive compared to standard PCA.

- Sensitivity to Data Quality: Outliers can distort the kernel matrix, and missing values must be handled before the matrix can be computed at all. Both issues can adversely affect the transformation and subsequent analysis.
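Kernel and parameter selection can be partly automated when labels are available. A common approach is to place Kernel PCA in a pipeline with a supervised model and grid-search the kernel settings by cross-validated accuracy. The sketch below uses scikit-learn's `Pipeline` and `GridSearchCV` with the article's dataset. The logistic regression step and the search space are illustrative assumptions, not recommended defaults.

```python
from sklearn.datasets import make_moons
from sklearn.decomposition import KernelPCA
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import Pipeline

X, y = make_moons(n_samples=1000, noise=0.02, random_state=123)

# Kernel PCA followed by a linear classifier; the classifier's accuracy
# is the criterion used to choose the kernel settings
pipe = Pipeline([
    ("kpca", KernelPCA(n_components=2)),
    ("clf", LogisticRegression()),
])

# Illustrative search space (an assumption, not a recommended default)
param_grid = [
    {"kpca__kernel": ["rbf"], "kpca__gamma": [0.1, 1, 5, 15, 50]},
    {"kpca__kernel": ["poly"], "kpca__degree": [2, 3]},
]

search = GridSearchCV(pipe, param_grid, cv=3)
search.fit(X, y)

print(search.best_params_)
print(f"Best cross-validated accuracy: {search.best_score_:.3f}")
```

Each candidate requires fitting Kernel PCA once per fold, so the search multiplies the cost described under Computational Complexity. For larger datasets, `KernelPCA` also accepts `eigen_solver="arpack"` or `eigen_solver="randomized"`. These compute only the leading eigenvectors, although the kernel matrix itself still needs O(n²) memory.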

Conclusion

Kernel PCA extends the capabilities of traditional PCA by enabling effective dimensionality reduction on nonlinear datasets. By implicitly mapping the data into higher-dimensional spaces, it reveals complex structures that linear methods cannot detect. It does introduce computational and interpretability challenges. Even so, its ability to turn many nonlinear datasets into linearly separable ones makes it a valuable tool in modern data analysis and machine learning pipelines.