As enthusiasm for generative AI grows, experts warn that we should proceed cautiously. Like any new technology, generative artificial intelligence can be a powerful tool for society, but left unregulated it can have serious consequences.

Natasha Govender Ropert is one of these voices. She is the Head of AI for Financial Crimes at Rabobank. She spoke with TNW founder Boris Veldhuijzen van Zanten about AI ethics, bias and whether we’re outsourcing our brains to machines.

Watch the full interview, recorded en route to TNW2025 in Kia’s all-electric EV9:

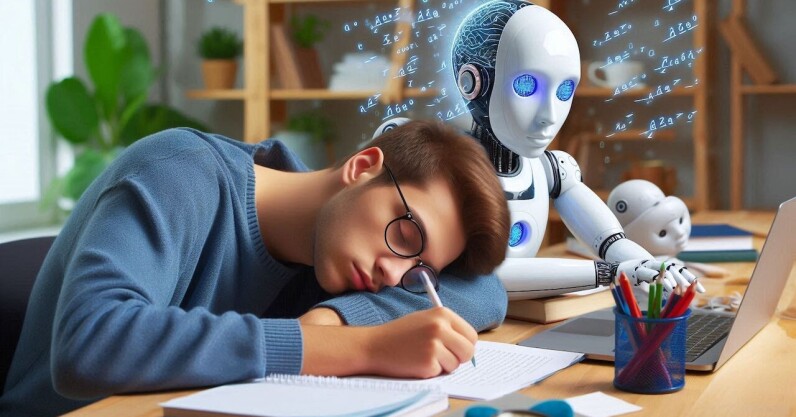

We should ask ourselves, as we rely more and more on generative AI for answers, how this could affect our own intelligence.

A study conducted by MIT on the use of ChatGPT for writing essays has sparked a slew of sensationalist headlines, from “Researchers claim using ChatGPT could rot your brain” to “ChatGPT may make you lazy and stupid.” Is this actually true?

Your Brain on Gen AI

This is what really happened: Researchers gave 54 Boston-area students an essay task. One group used ChatGPT, another used Google (without AI), and the third had to write using nothing but their brains. While they wrote, the brain activity of each participant was measured with electrodes.

After three sessions, the brain-only group showed the highest levels of neural connectivity. ChatGPT users? The lowest. The AI-assisted writers seemed to be on autopilot, while the other participants had to work harder to get their words on paper.

In round four, the roles were reversed. This time, the brain-only group was able to use ChatGPT while the AI group was forced to go alone. The result? The first group improved their essays. The latter had trouble remembering what they’d originally written.

The study found that, over the course of four months, brain-only participants performed better than the other groups on neural, linguistic and behavioral levels. Those who used ChatGPT put less effort into their essays, simply copying and pasting.

English teachers who reviewed their work said that it lacked “soul” and original thought. Sounds alarming, doesn’t it? The truth is more complex than sensationalist headlines would suggest.

The findings were less about brain decay and more about mental shortcuts. They showed that relying too heavily on LLMs could reduce mental engagement, but that these risks can be avoided by using LLMs in a thoughtful and active way. The researchers also stressed that while the study raised interesting questions for future research, it was too small and simple to support definitive conclusions.

Is critical thinking dead?

The findings, which have not yet been peer reviewed, call for further research and for reflection on how we should use this tool in educational, professional and personal contexts. But perhaps what is actually rotting our minds is the TL;DR headline designed for clicks rather than accuracy.

The researchers seem to share these concerns. They created a FAQ page on their website asking reporters to refrain from using inaccurate language that sensationalized the findings.

Ironically, they attributed the resulting “noise” to reporters using LLMs to summarize the paper and added, “Your HUMAN feedback is very welcome, if you read the paper or parts of it. As a reminder, we have listed the limitations of the study in both the paper and the webpage.”

We can safely draw two conclusions from this study.

- More study is needed to determine how LLMs are best used in educational settings.

- Students and reporters should remain critical when evaluating information, whether it comes from the media or from generative AI.

Researchers at the Vrije Universiteit Amsterdam worry that our ability

Students may be less likely to conduct comprehensive or extensive searches themselves because they defer to the authoritative tone of GenAI output. They may be less inclined to question, or even identify, the unstated viewpoints underlying the output. They may not consider the perspectives that are being glossed over and the assumptions that inform the claims.

When we take the outputs of AI at face value, we can overlook biases. Addressing this requires not only technical fixes but also critical reflection on what bias means.

These issues are at the heart of Natasha Govender Ropert’s work as Head of AI for Financial Crimes at Rabobank. Her role is to build responsible, trustworthy AI by eliminating bias. As she explained to TNW founder Boris Veldhuijzen van Zanten in “Kia’s Next Big Drive,” bias is a subjective term that needs to be defined by each person and company.

“Bias does not have a consistent meaning. What I consider biased or unbiased might be different from what someone else does. We as humans and individuals have to decide this. We need to choose and say that this is the standard we will use when analyzing our data,” said Govender Ropert.

Social norms and biases are not fixed; they are constantly changing. The historical data that we use to train our LLMs, however, does not change as society changes. To build a just and equitable society, we must remain critical and question the information we receive from both our fellow humans and our machines.