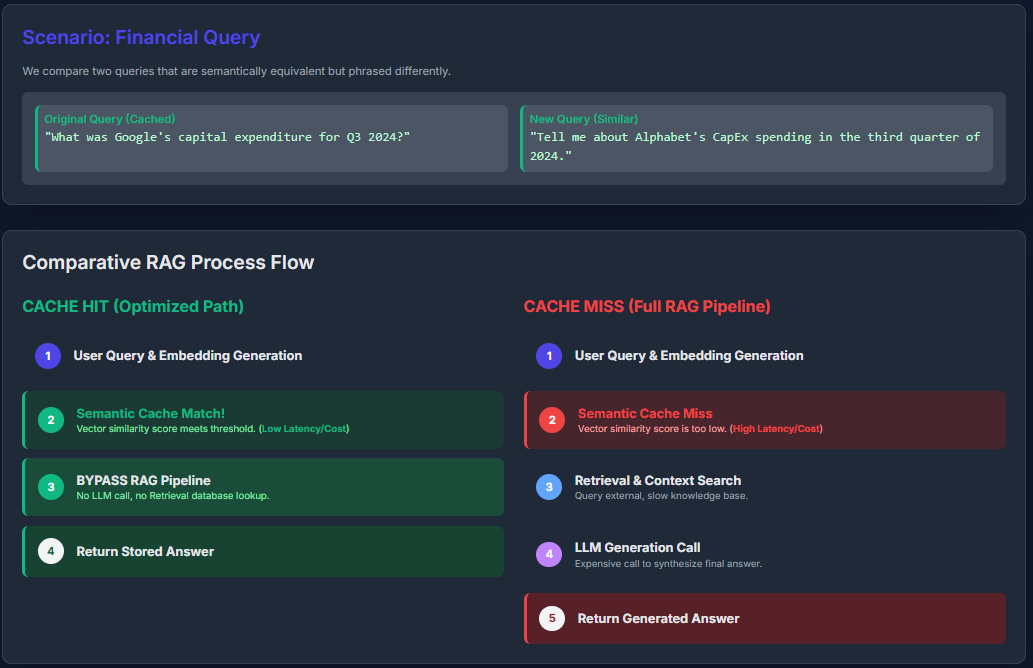

Semantic caching in Large Language Model (LLM) applications improves efficiency by storing and reusing answers based on the underlying meaning of queries rather than on exact text matches. When a new question is submitted, it is transformed into a vector embedding that captures its meaning. This embedding is then compared against cached embeddings using similarity search. If a sufficiently close match is found, one whose similarity surpasses a predefined threshold, the system returns the cached response and bypasses the resource-intensive retrieval and generation steps. If no suitable match exists, the full Retrieval-Augmented Generation (RAG) process is executed, and the new query-response pair is added to the cache for future reference.

Understanding Semantic Caching in LLM Systems

Semantic caching operates by indexing responses according to the conceptual content of user inputs rather than their literal phrasing. Each incoming query is encoded into a high-dimensional vector embedding that represents its semantic meaning. The system then employs Approximate Nearest Neighbor (ANN) search techniques to efficiently find embeddings in the cache that closely resemble the new query’s embedding.

When the similarity between the new query and a cached entry exceeds the threshold, the cached response is served immediately, eliminating repeated calls to the LLM or document retrieval modules. If no match is found, the RAG pipeline retrieves relevant documents and generates a fresh answer, which is then cached. This approach reduces latency and computational overhead, especially for queries that are semantically alike but phrased differently.
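The lookup step described above can be sketched without any API calls. This is a minimal illustration only: it assumes hand-made 3-dimensional vectors in place of real embeddings and a brute-force scan in place of an ANN index. The function name `lookup`, the sample vectors, and the 0.85 threshold are hypothetical choices made for the example.

```python
import numpy as np

def lookup(query_emb, cached_embs, cached_answers, threshold=0.85):
    """Return (answer, similarity) for the best cached match above the
    threshold, or (None, best_similarity) on a cache miss."""
    if len(cached_answers) == 0:
        return None, 0.0
    # Normalize both sides so that a dot product equals cosine similarity.
    q = query_emb / np.linalg.norm(query_emb)
    m = cached_embs / np.linalg.norm(cached_embs, axis=1, keepdims=True)
    sims = m @ q
    best = int(np.argmax(sims))
    if sims[best] > threshold:
        return cached_answers[best], float(sims[best])
    return None, float(sims[best])

# Toy cache with two entries (illustrative vectors, not real embeddings).
cached_embs = np.array([[1.0, 0.0, 0.0],
                        [0.0, 1.0, 0.0]])
cached_answers = ["answer about caching", "answer about billing"]

# A query pointing almost the same way as the first entry: cache hit.
hit, hit_sim = lookup(np.array([0.9, 0.1, 0.0]), cached_embs, cached_answers)
# A query that is not close to either entry: cache miss.
miss, miss_sim = lookup(np.array([0.5, 0.5, 0.7]), cached_embs, cached_answers)

print(hit, round(hit_sim, 2))
print(miss, round(miss_sim, 2))
```

A production system would typically replace the brute-force scan with an ANN index once the cache grows large, but the hit-or-miss decision stays the same.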

What Data Is Stored in Semantic Caches?

In RAG-based applications, semantic caching selectively stores responses only for queries that have been previously processed, rather than preemptively caching all conceivable questions. Each cache entry typically contains the query’s embedding vector and the corresponding LLM-generated response. Depending on the architecture, the cache might also retain the retrieved documents used to generate the answer, enabling more comprehensive reuse.
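One way to picture a cache entry is as a small record that holds the fields listed above. The sketch below is an assumption about layout, not a prescribed schema: the class name `CacheEntry` and the `documents` and `created_at` fields are hypothetical names chosen for illustration.

```python
import time
from dataclasses import dataclass, field

import numpy as np

# eq=False: the auto-generated __eq__ would compare NumPy arrays element-wise
# and raise an ambiguity error, so entries are compared by identity instead.
@dataclass(eq=False)
class CacheEntry:
    query: str                # original query text
    embedding: np.ndarray     # embedding vector of the query
    response: str             # LLM-generated answer
    documents: list = field(default_factory=list)         # optional retrieved documents
    created_at: float = field(default_factory=time.time)  # timestamp for expiry policies

entry = CacheEntry(
    query="Briefly explain semantic caching.",
    embedding=np.array([0.1, 0.2, 0.3]),  # illustrative vector, not a real embedding
    response="Semantic caching reuses answers for similar questions.",
)
print(entry.query, entry.embedding.shape, len(entry.documents))
```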

To maintain optimal performance and manage memory usage, cache entries are often governed by policies such as Time-To-Live (TTL) expiration or Least Recently Used (LRU) eviction. These strategies ensure that the cache remains populated with the most relevant and frequently accessed query-response pairs, preventing stale or rarely used data from consuming resources.
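The two policies can be combined in a few lines. The following is a minimal sketch, assuming entries are addressed by a plain key (in a semantic cache, the similarity search would supply that key). The class name `TTLLRUCache`, its parameters, and the injectable `clock` are hypothetical and exist only to make the example deterministic.

```python
import time
from collections import OrderedDict

class TTLLRUCache:
    """Bounded cache with Time-To-Live expiry and Least Recently Used eviction."""

    def __init__(self, max_entries=1000, ttl_seconds=3600.0, clock=time.monotonic):
        self.max_entries = max_entries
        self.ttl_seconds = ttl_seconds
        self.clock = clock
        self._entries = OrderedDict()  # key -> (value, stored_at), oldest use first

    def put(self, key, value):
        self._entries[key] = (value, self.clock())
        self._entries.move_to_end(key)
        # Evict least recently used entries once the size limit is exceeded.
        while len(self._entries) > self.max_entries:
            self._entries.popitem(last=False)

    def get(self, key):
        item = self._entries.get(key)
        if item is None:
            return None
        value, stored_at = item
        # Drop the entry if it has outlived its TTL.
        if self.clock() - stored_at > self.ttl_seconds:
            del self._entries[key]
            return None
        self._entries.move_to_end(key)  # mark as most recently used
        return value

    def __len__(self):
        return len(self._entries)

# Demo with a fake clock so expiry can be shown without sleeping.
now = [0.0]
cache = TTLLRUCache(max_entries=2, ttl_seconds=10.0, clock=lambda: now[0])
cache.put("q1", "a1")
cache.put("q2", "a2")
cache.get("q1")              # q1 becomes the most recently used entry
cache.put("q3", "a3")        # size limit exceeded: q2 is evicted
evicted = cache.get("q2")
kept = cache.get("q1")
now[0] = 11.0                # advance past the 10-second TTL
expired = cache.get("q1")
print(evicted, kept, expired)
```

Expired entries are removed lazily here, only when they are looked up; a background sweep is another common choice.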

Practical Demonstration: Semantic Caching in Action

Installing Required Libraries

pip install openai numpy

Configuring the Environment

import os
from getpass import getpass

os.environ['OPENAI_API_KEY'] = getpass('Please enter your OpenAI API key: ')

While this example uses OpenAI’s API, the principles apply to any LLM provider that supports embeddings and text generation.

Initializing the OpenAI Client

from openai import OpenAI

client = OpenAI()

Benchmarking Without Caching

To establish a baseline, we execute the same query ten times consecutively using the GPT-4.1 model without any caching. Each request triggers a full generation cycle, resulting in redundant processing and increased latency.

import time

def query_gpt(text):
    """Send one request to the model and return (answer_text, elapsed_seconds)."""
    start_time = time.time()
    response = client.responses.create(
        model="gpt-4.1",
        input=text
    )
    elapsed = time.time() - start_time
    # output_text is the SDK's convenience accessor for the generated text
    return response.output_text, elapsed

query = "Briefly explain semantic caching."
total_duration = 0

# Send the identical query ten times; every call pays for a full generation.
for i in range(10):
    _, duration = query_gpt(query)
    total_duration += duration
    print(f"Iteration {i+1} duration: {duration:.2f} seconds")

print(f"Total time for 10 iterations: {total_duration:.2f} seconds")

Despite identical inputs, each call takes between 1 and 3 seconds, adding up to roughly 22 seconds in total. This inefficiency underscores the value of semantic caching in reducing redundant computation and API expenses.

Integrating Semantic Caching for Enhanced Efficiency

Next, we implement semantic caching to reuse responses for semantically similar queries, drastically cutting down response times and costs.

The process involves generating an embedding for each query using the text-embedding-3-small model. We then compute the cosine similarity between the new query’s embedding and each embedding stored in the cache. If the similarity to a cached embedding exceeds a threshold (e.g., 0.85), the cached response is returned immediately. Otherwise, the system queries the LLM, caches the new response together with its embedding, and returns the fresh answer.

import numpy as np
from numpy.linalg import norm
import time

# In-memory cache: a list of (query, embedding, response) tuples
semantic_cache = []

def get_embedding(text):
    """Embed the text with text-embedding-3-small and return a NumPy array."""
    embedding_response = client.embeddings.create(model="text-embedding-3-small", input=text)
    return np.array(embedding_response.data[0].embedding)

def cosine_similarity(vec1, vec2):
    return np.dot(vec1, vec2) / (norm(vec1) * norm(vec2))

def ask_with_cache(query, threshold=0.85):
    query_emb = get_embedding(query)

    # Linear scan: return the first cached entry whose similarity exceeds the threshold
    for cached_query, cached_emb, cached_response in semantic_cache:
        similarity = cosine_similarity(query_emb, cached_emb)
        if similarity > threshold:
            print(f"🔄 Returning cached response (similarity: {similarity:.2f})")
            return cached_response, 0.0  # Generation call skipped; embedding time is not measured

    # Cache miss: call the model, then store the new entry
    start = time.time()
    response = client.responses.create(
        model="gpt-4.1",
        input=query
    )
    end = time.time()
    answer = response.output_text
    semantic_cache.append((query, query_emb, answer))
    return answer, end - start

Testing Semantic Caching with Multiple Queries

queries = [
    "Explain semantic caching in simple terms.",
    "What is semantic caching and how does it function?",
    "How does caching operate in LLMs?",
    "Describe semantic caching for language models.",
    "Explain semantic caching simply."
]

total_time = 0

for q in queries:
    response, duration = ask_with_cache(q)
    total_time += duration
    print(f"⏱ Query processed in {duration:.2f} seconds")

print(f"Total processing time with caching: {total_time:.2f} seconds")

In this example, the initial query requires a full API call, taking approximately 8 seconds. Subsequent queries whose similarity score exceeds the 0.85 threshold are served from the cache and skip the generation call entirely; the reported duration of 0.00 seconds for these hits does not include the embedding request, which still runs for every query. Queries that differ more substantially trigger new API calls, each taking around 10 seconds. The final query, nearly identical to the first, is served from the cache, demonstrating the effectiveness of semantic caching in handling paraphrased or related questions.

By leveraging semantic caching, developers can dramatically reduce latency and operational costs in LLM-powered applications, especially when dealing with repetitive or closely related queries. This technique is particularly valuable in customer support bots, knowledge bases, and interactive AI systems where similar questions frequently recur.