Assessing the performance of Large Language Model (LLM) applications, especially those incorporating Retrieval-Augmented Generation (RAG), is a vital yet frequently overlooked step. Without thorough evaluation, it becomes challenging to verify whether the retriever component is functioning effectively, if the LLM’s responses are accurately grounded in source material rather than fabricated, and whether the context window size is appropriately configured.

Why Synthetic Evaluation Data Matters for RAG Systems

Early-stage testing often suffers from a lack of authentic user interaction data, making it difficult to establish a reliable performance baseline. A practical workaround is to create synthetic evaluation datasets that mimic real-world queries and responses. This article demonstrates how to generate such realistic test cases using DeepEval, an open-source tool designed to streamline LLM evaluation. DeepEval enables you to benchmark your RAG pipeline comprehensively before deploying it in production.

Setting Up Your Environment

Installing Required Libraries

Begin by installing the necessary Python packages to run DeepEval and its dependencies:

!pip install deepeval chromadb tiktoken pandasObtaining Your OpenAI API Key

DeepEval relies on external language models to compute detailed evaluation metrics, so you will need an OpenAI API key to proceed:

- Visit the OpenAI platform and generate a new API key.

- If you are new to OpenAI, you may be required to add billing information and make a minimal initial payment (usually around $5) to activate your account fully.

Creating a Source Document for Synthetic Data Generation

Next, define a text variable that will serve as the foundational document for generating synthetic evaluation data. This text should encompass a broad range of factual information across various fields such as biology, physics, history, space science, environmental studies, medicine, computing, and ancient cultures. Providing diverse content ensures the LLM has rich material to generate meaningful questions and answers.

DeepEval’s Synthesizer will:

- Segment the text into semantically coherent sections,

- Identify relevant contexts for question generation, and

- Create synthetic “golden” pairs-input queries paired with expected outputs-that simulate authentic user interactions and ideal LLM responses.

After defining the text, save it as a plain text file so DeepEval can process it. You can substitute this with any well-structured document, such as a scientific article, encyclopedia entry, or technical blog post.

text = """

Dolphins exhibit complex social behaviors and can recognize themselves in mirrors, indicating high cognitive abilities.

The mantis shrimp possesses one of the most sophisticated visual systems, capable of detecting polarized light and a broad spectrum of colors.

In physics, graphene is a revolutionary material known for its exceptional strength and electrical conductivity, opening new frontiers in nanotechnology.

Historically, the Great Library of Timbuktu was a renowned center of knowledge in Africa, preserving thousands of manuscripts despite centuries of conflict.

The Hubble Space Telescope has provided unprecedented views of the universe since its launch in 1990, vastly expanding our understanding of cosmic phenomena.

On Earth, the Congo Basin rainforest plays a critical role in carbon sequestration, helping mitigate climate change.

Coral reefs, often referred to as the "rainforests of the ocean," support approximately 30% of marine biodiversity despite covering less than 1% of the ocean floor.

In healthcare, CRISPR gene-editing technology is revolutionizing treatments by enabling precise genetic modifications.

In computing, quantum processors are emerging as the next leap forward, promising exponential speedups for certain algorithms.

The Mariana Trench remains the deepest known point in the world's oceans, reaching depths of nearly 11,000 meters.

Ancient civilizations such as the Indus Valley developed sophisticated urban planning and drainage systems thousands of years ago.

"""with open("source_document.txt", "w") as file:

file.write(text)Automating Synthetic Evaluation Data Creation

Utilize DeepEval’s Synthesizer class to automatically generate synthetic evaluation datasets-referred to as “goldens”-from your source document. For this example, the lightweight model gpt-4.1-nano is chosen to balance performance and resource usage. The synthesizer reads the document and produces question-answer pairs that reflect the content’s complexity and diversity, ideal for benchmarking your LLM’s comprehension and retrieval capabilities.

For instance, generated inputs might include prompts like “Analyze the cognitive skills of dolphins in self-recognition tasks” or “Explain the ecological importance of the Congo Basin rainforest.” Each output contains a well-structured answer supported by relevant excerpts from the source text, showcasing DeepEval’s ability to create high-quality synthetic datasets.

from deepeval.synthesizer import Synthesizer

synthesizer = Synthesizer(model="gpt-4.1-nano")

synthesizer.generate_goldens_from_docs(

document_paths=["source_document.txt"],

include_expected_output=True

)

for golden in synthesizer.synthetic_goldens[:3]:

print(golden, "n")Enhancing Input Diversity with EvolutionConfig

To enrich the complexity and variety of generated questions, configure the EvolutionConfig in DeepEval. This allows you to assign weights to different evolution strategies such as:

- REASONING: Encourages logical deduction and inference-based questions.

- MULTICONTEXT: Promotes questions that integrate multiple information sources.

- COMPARATIVE: Generates queries that require comparison between concepts.

- HYPOTHETICAL: Creates scenario-based or “what-if” questions.

- IN_BREADTH: Focuses on broad, comprehensive inquiries.

The num_evolutions parameter controls how many of these strategies are applied per text segment, enabling the synthesizer to produce multifaceted and nuanced evaluation examples. This approach tests the LLM’s ability to handle complex reasoning and diverse contexts effectively.

For example, one generated question might ask about dolphins’ problem-solving abilities and social cognition, while another could compare the historical significance of the Great Library of Timbuktu with modern digital archives. Each synthetic golden includes the original context, applied evolution types, and a quality score, ensuring a robust and varied evaluation dataset.

from deepeval.synthesizer.config import EvolutionConfig, Evolution

evolution_config = EvolutionConfig(

evolutions={

Evolution.REASONING: 0.2,

Evolution.MULTICONTEXT: 0.2,

Evolution.COMPARATIVE: 0.2,

Evolution.HYPOTHETICAL: 0.2,

Evolution.IN_BREADTH: 0.2,

},

num_evolutions=3

)

synthesizer = Synthesizer(evolution_config=evolution_config)

synthesizer.generate_goldens_from_docs(["source_document.txt"])Building a Continuous Improvement Cycle for Your RAG Pipeline

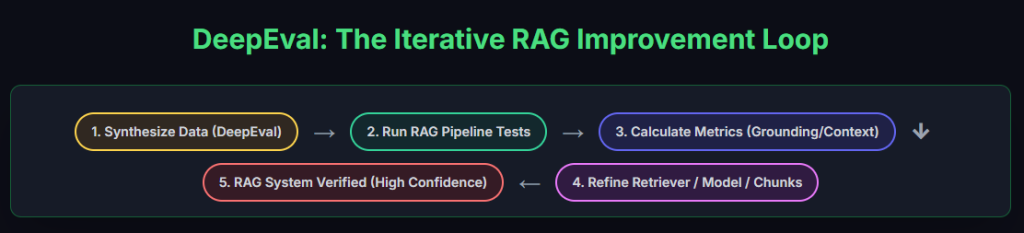

Generating sophisticated synthetic datasets with DeepEval allows you to overcome the initial challenge of limited real user data. By leveraging the Synthesizer alongside tailored evolution configurations, you can create comprehensive test cases that probe your RAG system’s capabilities across multiple dimensions-ranging from multi-context reasoning to hypothetical scenarios.

This rich, custom-generated dataset serves as a reliable benchmark, enabling iterative refinement of both retrieval and generation components. By continuously measuring key metrics such as grounding accuracy and context relevance, you can systematically enhance your pipeline’s performance and ensure it delivers trustworthy, well-supported answers before handling live queries.

The iterative evaluation loop powered by DeepEval fosters a rigorous testing environment, providing actionable feedback to optimize your RAG system’s retriever and LLM modules. This process builds confidence in your system’s reliability and robustness, ultimately leading to a high-quality deployment.