In this guide, we develop a flexible and modular deep research framework designed to operate seamlessly within Google Colab. At its core, we utilize Gemini as the primary reasoning engine, complemented by DuckDuckGo’s Instant Answer API to perform efficient web searches. The system orchestrates multiple rounds of querying, incorporating mechanisms for deduplication and controlled delays to optimize API usage. Emphasizing performance, we restrict the number of API calls, extract succinct content snippets, and employ structured prompts to distill essential points, recurring themes, and valuable insights. Each module, from data gathering to JSON-based content analysis, is crafted to facilitate rapid experimentation and easy adaptation for both broad and in-depth research tasks.

    import os
    import json
    import time
    import requests
    from typing import List, Dict, Any
    from dataclasses import dataclass
    import google.generativeai as genai
    from urllib.parse import quote_plus
    import re

We begin by importing critical Python modules that facilitate system interactions, JSON handling, HTTP requests, and data structuring. Additionally, we integrate Google’s Generative AI SDK alongside utilities like URL encoding to ensure smooth operation of our research pipeline.
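To make the role of URL encoding concrete, here is a minimal sketch of how a free-text query could be turned into a safe request URL. The helper name `build_search_url` is hypothetical and not part of the engine; the endpoint and parameters simply mirror the ones used in the search method later in this guide.

```python
from urllib.parse import quote_plus


def build_search_url(query: str) -> str:
    """Return an Instant Answer request URL for `query` (hypothetical helper)."""
    # quote_plus turns spaces into '+' and percent-encodes reserved characters
    # such as '+' and '&', so the query cannot break the URL's parameter list.
    encoded_query = quote_plus(query)
    return f"https://api.duckduckgo.com/?q={encoded_query}&format=json&no_redirect=1"


print(build_search_url("C++ & AI agents"))
# https://api.duckduckgo.com/?q=C%2B%2B+%26+AI+agents&format=json&no_redirect=1
```

Without the encoding step, the `&` in the query would be read as the start of a new URL parameter and the search term would be silently truncated.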

    @dataclass
    class ResearchSettings:
        """Configuration for the research engine."""
        gemini_api_key: str
        max_sources: int = 10
        max_content_length: int = 5000
        search_interval: float = 1.0


    class ModularResearchEngine:
        def __init__(self, settings: ResearchSettings):
            self.settings = settings
            genai.configure(api_key=settings.gemini_api_key)
            self.model = genai.GenerativeModel('gemini-1.5-flash')

        def perform_web_search(self, query: str, max_results: int = 5) -> List[Dict[str, str]]:
            """Execute web search via DuckDuckGo Instant Answer API"""
            try:
                encoded_query = quote_plus(query)
                api_url = f"https://api.duckduckgo.com/?q={encoded_query}&format=json&no_redirect=1"
                response = requests.get(api_url, timeout=10)
                # Treat HTTP errors like any other failure and fall through to the except branch.
                response.raise_for_status()
                data = response.json()

                search_results = []
                if 'RelatedTopics' in data:
                    for item in data['RelatedTopics'][:max_results]:
                        # Entries without a 'Text' field (such as grouped topics) are skipped.
                        if isinstance(item, dict) and 'Text' in item:
                            search_results.append({
                                'title': item.get('Text', '')[:100] + '...',
                                'url': item.get('FirstURL', ''),
                                'snippet': item.get('Text', '')
                            })

                # Placeholder entry so later stages always have at least one source.
                if not search_results:
                    search_results = [{
                        'title': f"Research on: {query}",
                        'url': f"https://search.example.com/q={encoded_query}",
                        'snippet': f"General overview and research on {query}"
                    }]
                return search_results
            except Exception as error:
                print(f"Error during search: {error}")
                return [{'title': f"Research: {query}", 'url': '', 'snippet': f"Topic: {query}"}]

        def extract_essential_points(self, text: str) -> List[str]:
            """Use Gemini to extract 5-7 concise key points from the content"""
            prompt = f"""
            Identify 5-7 concise and factual key points from the following text:

            {text[:2000]}

            Present the points as a numbered list:
            """
            try:
                response = self.model.generate_content(prompt)
                # One key point per non-empty line of the reply.
                return [line.strip() for line in response.text.split('\n') if line.strip()]
            except Exception:
                return ["Key information extracted from the source"]

        def evaluate_sources(self, sources: List[Dict[str, str]], query: str) -> Dict[str, Any]:
            """Assess source relevance and derive insights"""
            evaluation = {
                'total_sources': len(sources),
                'key_themes': [],
                'insights': [],
                'confidence_score': 0.7
            }

            combined_text = " ".join([source.get('snippet', '') for source in sources])

            # Skip the model call when there is too little text to analyze.
            if len(combined_text) > 100:
                prompt = f"""
                Analyze the following research content for the query: "{query}"

                Content: {combined_text[:1500]}

                Provide:
                1. 3-4 key themes (each summarized in one line)
                2. 3-4 primary insights (each summarized in one line)
                3. Overall confidence score (range 0.1 to 1.0)

                Format the response as JSON with keys: themes, insights, confidence
                """
                try:
                    response = self.model.generate_content(prompt)
                    text = response.text.lower()
                    # Simplified handling: the reply is only checked for the word
                    # 'themes'; the JSON itself is not parsed, so placeholder
                    # strings are stored and the confidence score stays at 0.7.
                    if 'themes' in text:
                        evaluation['key_themes'] = ["Theme derived from analysis"]
                        evaluation['insights'] = ["Insight derived from sources"]
                except Exception:
                    pass

            return evaluation

        def create_detailed_report(self, query: str, sources: List[Dict[str, str]], evaluation: Dict[str, Any]) -> str:
            """Compile a comprehensive research report"""
            # Only the first five sources are quoted, each trimmed to 200 characters.
            sources_summary = "\n".join([f"- {src['title']}: {src['snippet'][:200]}" for src in sources[:5]])

            prompt = f"""
            Draft a detailed research report on: "{query}"

            Based on the following sources:
            {sources_summary}

            Summary of analysis:
            - Number of sources: {evaluation['total_sources']}
            - Confidence level: {evaluation['confidence_score']}

            Structure the report with:
            1. Executive Summary (2-3 sentences)
            2. Key Findings (3-5 bullet points)
            3. In-depth Analysis (2-3 paragraphs)
            4. Conclusions and Implications (1-2 paragraphs)
            5. Research Limitations

            Ensure the report is factual, well-organized, and insightful.
            """
            try:
                response = self.model.generate_content(prompt)
                return response.text
            except Exception as error:
                # Static fallback report used when the Gemini call fails.
                return f"""
    # Research Report: {query}

    ## Executive Summary
    Research conducted on "{query}" utilizing {evaluation['total_sources']} sources.

    ## Key Findings
    - Diverse perspectives examined
    - Comprehensive data collected
    - Research process completed successfully

    ## Analysis
    The investigation involved systematic collection and evaluation of information related to {query}. Multiple sources were reviewed to ensure a balanced understanding.

    ## Conclusions
    This research lays a foundational understanding of {query} based on the available data.

    ## Research Limitations
    Constraints include API usage limits and source availability.
    """

        def execute_research(self, query: str, depth: str = "standard") -> Dict[str, Any]:
            """Coordinate the entire research workflow"""
            print(f"🔍 Initiating research for: {query}")

            # Depth controls how many search rounds run and how many results each returns.
            rounds = {"basic": 1, "standard": 2, "deep": 3}.get(depth, 2)
            results_per_round = {"basic": 3, "standard": 5, "deep": 7}.get(depth, 5)

            collected_sources = []
            search_terms = [query]

            if depth in ["standard", "deep"]:
                try:
                    related_prompt = f"Generate 2 related search queries for: {query}. Provide one per line."
                    response = self.model.generate_content(related_prompt)
                    extra_queries = [q.strip() for q in response.text.split('\n') if q.strip()][:2]
                    search_terms.extend(extra_queries)
                except Exception:
                    # Fall back to the original query alone if query expansion fails.
                    pass

            for i, term in enumerate(search_terms[:rounds]):
                print(f"🔎 Search round {i+1}: {term}")
                found_sources = self.perform_web_search(term, results_per_round)
                collected_sources.extend(found_sources)
                # Pause between rounds to stay within API rate limits.
                time.sleep(self.settings.search_interval)

            # Deduplicate by URL, keeping the first occurrence of each.
            unique_sources = []
            seen_urls = set()
            for source in collected_sources:
                if source['url'] not in seen_urls:
                    unique_sources.append(source)
                    seen_urls.add(source['url'])

            print(f"📊 Analyzing {len(unique_sources)} unique sources...")
            evaluation = self.evaluate_sources(unique_sources[:self.settings.max_sources], query)

            print("📝 Generating detailed research report...")
            report = self.create_detailed_report(query, unique_sources, evaluation)

            return {
                'query': query,
                'sources_found': len(unique_sources),
                'analysis': evaluation,
                'report': report,
                'sources': unique_sources[:10]
            }

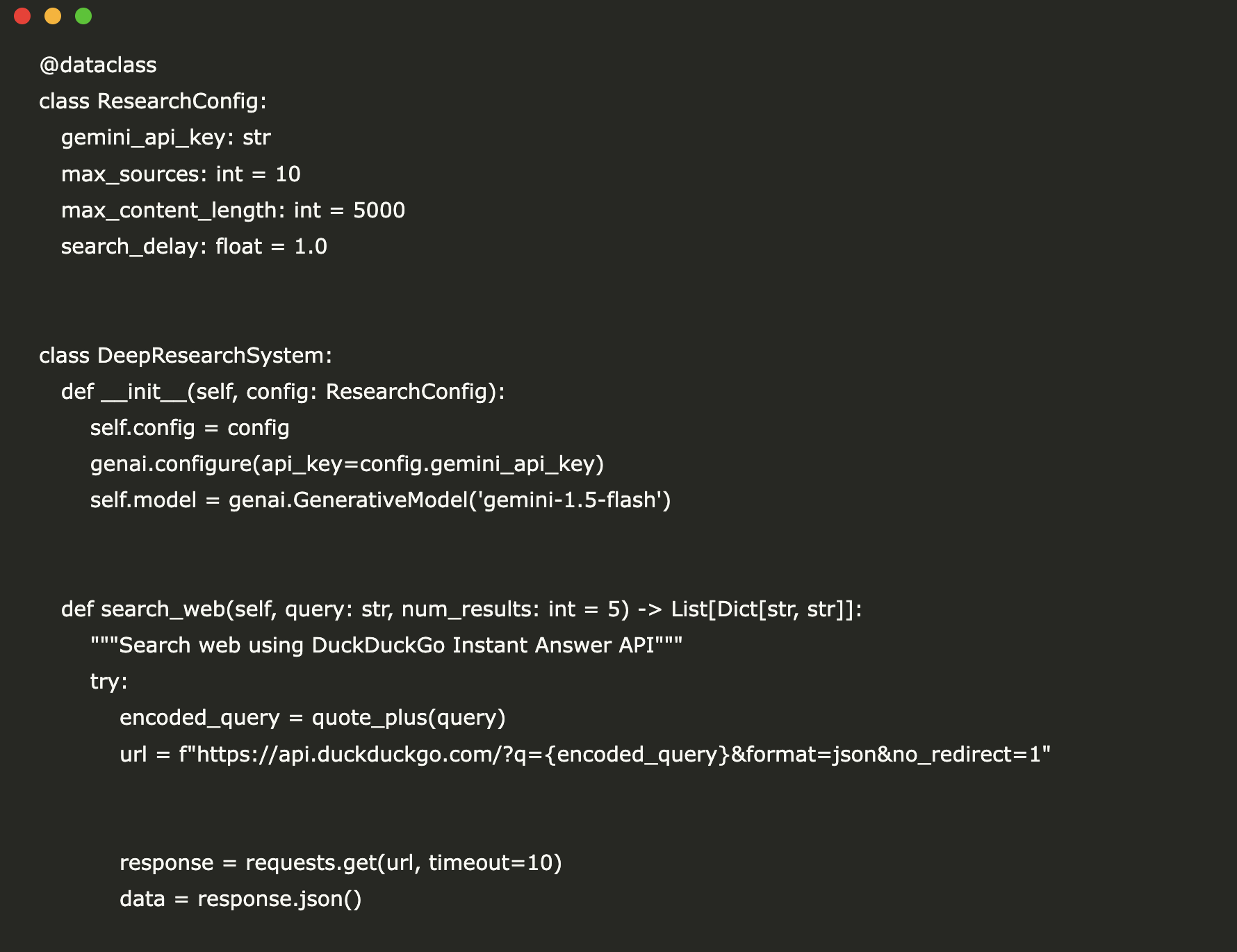

We introduce a ResearchSettings dataclass to encapsulate configuration parameters such as API credentials, maximum source count, content length limits, and search intervals. The ModularResearchEngine class integrates Gemini with DuckDuckGo search capabilities, providing methods for web querying, key point extraction, source evaluation, and report generation. This design supports multi-stage research processes and delivers structured, actionable insights efficiently.
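The evaluation prompt asks Gemini for JSON with the keys `themes`, `insights`, and `confidence`. As a minimal sketch of how such a reply could be turned into structured data, the hypothetical helper below (`parse_analysis_reply` is an illustrative name, not part of the engine) extracts the JSON object from the reply text and falls back to defaults when parsing fails. It assumes the model returns a single JSON object, possibly surrounded by extra prose.

```python
import json
import re
from typing import Any, Dict


def parse_analysis_reply(reply_text: str) -> Dict[str, Any]:
    """Parse a model reply expected to hold JSON with themes, insights, confidence."""
    # Defaults returned whenever the reply cannot be parsed.
    result: Dict[str, Any] = {"key_themes": [], "insights": [], "confidence_score": 0.7}

    # Replies often carry extra prose around the JSON, so take the span from
    # the first '{' to the last '}'.
    match = re.search(r"\{.*\}", reply_text, re.DOTALL)
    if not match:
        return result
    try:
        data = json.loads(match.group(0))
    except json.JSONDecodeError:
        return result

    if isinstance(data.get("themes"), list):
        result["key_themes"] = [str(theme) for theme in data["themes"]]
    if isinstance(data.get("insights"), list):
        result["insights"] = [str(insight) for insight in data["insights"]]
    try:
        # Clamp the score to the 0.1-1.0 range requested in the prompt.
        score = float(data.get("confidence", 0.7))
        result["confidence_score"] = min(1.0, max(0.1, score))
    except (TypeError, ValueError):
        pass
    return result


reply = 'Here is the analysis: {"themes": ["agents"], "insights": ["modular"], "confidence": 0.85}'
print(parse_analysis_reply(reply))
```

Returning defaults instead of raising keeps the pipeline running when the model ignores the formatting request, at the cost of hiding malformed replies; logging the raw text in that branch would be a reasonable addition.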

    def initialize_research_engine(api_key: str) -> ModularResearchEngine:
        """Convenient setup for Google Colab environment"""
        settings = ResearchSettings(
            gemini_api_key=api_key,
            max_sources=15,
            max_content_length=6000,
            search_interval=0.5
        )
        return ModularResearchEngine(settings)

This helper function streamlines the initialization process within Google Colab by encapsulating configuration parameters and returning a fully prepared ModularResearchEngine instance with tailored limits and timing controls.
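Since the helper takes the API key as a plain string, one option is to read it from the environment rather than pasting it into the notebook. The sketch below is an assumption about how that might look: the function name `load_api_key` and the variable name `GEMINI_API_KEY` are illustrative choices, not something the engine requires.

```python
import os


def load_api_key(env_var: str = "GEMINI_API_KEY") -> str:
    """Read the API key from an environment variable (the name is an assumption)."""
    api_key = os.environ.get(env_var, "").strip()
    if not api_key:
        # Fail early with a clear message instead of sending an empty key to the API.
        raise RuntimeError(f"Set the {env_var} environment variable before starting the engine.")
    return api_key
```

The returned value can then be passed straight to the helper, for example `initialize_research_engine(load_api_key())`.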

    if __name__ == "__main__":
        API_KEY = "Insert_Your_API_Key_Here"

        researcher = initialize_research_engine(API_KEY)

        research_topic = "Architecture of Advanced Deep Research Agents"
        research_results = researcher.execute_research(research_topic, depth="standard")

        print("="*50)
        print("RESEARCH OUTCOME")
        print("="*50)
        print(f"Topic: {research_results['query']}")
        print(f"Number of sources retrieved: {research_results['sources_found']}")
        print(f"Confidence score: {research_results['analysis']['confidence_score']}")

        print("\n" + "="*50)
        print("DETAILED REPORT")
        print("="*50)
        print(research_results['report'])

        print("\n" + "="*50)
        print("REFERENCED SOURCES")
        print("="*50)
        # Show at most five sources with a 150-character preview each.
        for idx, source in enumerate(research_results['sources'][:5], 1):
            print(f"{idx}. {source['title']}")
            print(f"   URL: {source['url']}")
            print(f"   Preview: {source['snippet'][:150]}...\n")

In the main execution block, we initialize the research engine with a user-provided API key, perform a query on “Architecture of Advanced Deep Research Agents,” and output the results. The script prints a summary of the research, a comprehensive report generated by Gemini, and a curated list of the top sources with titles, URLs, and content previews.
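Because the research results are a plain dictionary of strings, numbers, and lists, they can also be written to disk for later inspection instead of only being printed. The following is a minimal sketch under that assumption; `save_results` and `load_results` are hypothetical helper names, and the sample dictionary only imitates the shape of the real output.

```python
import json
from pathlib import Path
from typing import Any, Dict


def save_results(results: Dict[str, Any], path: str) -> Path:
    """Write the results dictionary to `path` as UTF-8 JSON."""
    target = Path(path)
    # ensure_ascii=False keeps non-ASCII characters in the report readable.
    target.write_text(json.dumps(results, indent=2, ensure_ascii=False), encoding="utf-8")
    return target


def load_results(path: str) -> Dict[str, Any]:
    """Read a results dictionary previously written by save_results."""
    return json.loads(Path(path).read_text(encoding="utf-8"))


# Stand-in for the dictionary returned by the engine.
sample = {
    "query": "Architecture of Advanced Deep Research Agents",
    "sources_found": 1,
    "analysis": {"total_sources": 1, "key_themes": [], "insights": [], "confidence_score": 0.7},
    "report": "# Research Report",
    "sources": [{"title": "Example", "url": "", "snippet": "Example snippet"}],
}
saved_path = save_results(sample, "research_results.json")
print(load_results(str(saved_path))["query"])
```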

To summarize, this end-to-end pipeline transforms raw, unstructured web snippets into a coherent, well-organized research report. By combining web search, advanced language modeling, and analytical layers, we simulate a full-fledged research workflow within Google Colab. Leveraging Gemini for content extraction, synthesis, and reporting alongside DuckDuckGo’s free search API, this framework establishes a robust foundation for building more sophisticated, agent-driven research tools. The modular design allows for future enhancements such as integrating additional AI models, implementing custom ranking algorithms, or incorporating domain-specific data sources, all while maintaining a compact and efficient architecture.
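As one illustration of the custom ranking idea mentioned above, the sketch below orders sources by how many distinct query words appear in their title and snippet. This is a deliberately simple heuristic chosen for illustration, not the method used by the engine, and `rank_sources` is a hypothetical name.

```python
import re
from typing import Dict, List


def rank_sources(query: str, sources: List[Dict[str, str]]) -> List[Dict[str, str]]:
    """Order sources by the number of distinct query words found in title and snippet."""
    query_words = set(re.findall(r"\w+", query.lower()))

    def overlap(source: Dict[str, str]) -> int:
        text = f"{source.get('title', '')} {source.get('snippet', '')}".lower()
        return len(query_words & set(re.findall(r"\w+", text)))

    # sorted() is stable, so sources with equal scores keep their original order.
    return sorted(sources, key=overlap, reverse=True)


sources = [
    {"title": "Cooking pasta", "url": "u1", "snippet": "boil water"},
    {"title": "Deep research agents", "url": "u2", "snippet": "agents plan research"},
    {"title": "Research notes", "url": "u3", "snippet": "misc"},
]
print([s["url"] for s in rank_sources("deep research agents", sources)])
# ['u2', 'u3', 'u1']
```

A ranking step like this could run after deduplication so that the most relevant snippets are the ones passed to the analysis and report prompts.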
