How can compact language models learn complex tasks they currently fail at, without merely copying examples or depending on flawless solution paths? Researchers from Google Cloud AI Research and UCLA have introduced a training paradigm called Supervised Reinforcement Learning (SRL), designed to let 7-billion-parameter models learn from hard mathematical problems and agent trajectories, settings where traditional supervised fine-tuning and outcome-reward reinforcement learning (RL) often fall short.

Overcoming Limitations of Conventional Fine-Tuning in Small Models

Open-source models like Qwen2.5 7B Instruct typically fail to solve the most difficult problems in the s1K 1.1 dataset, even when provided with high-quality teacher demonstrations. Standard supervised fine-tuning on complete DeepSeek R1-style solution sequences trains the model to imitate the teacher token by token, which works poorly here because the sequences are long and the dataset is small (only 1,000 examples). The result is often a performance drop, with final scores falling below those of the original base model.

Introducing Supervised Reinforcement Learning: A Stepwise Approach

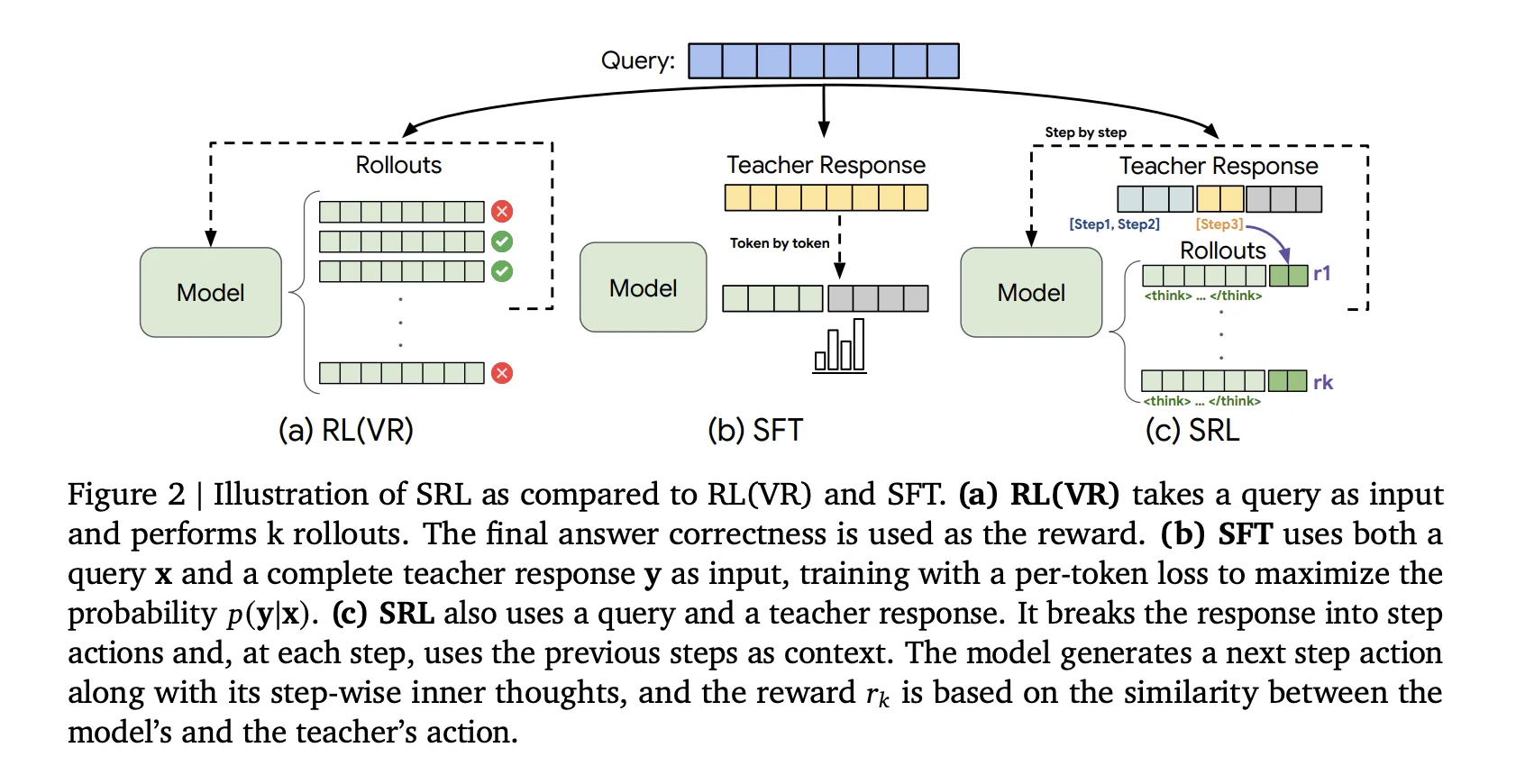

SRL keeps the reinforcement learning optimization framework but channels supervision through the reward rather than the loss function. Each expert trajectory from the s1K 1.1 dataset is broken down into a sequence of discrete actions. For every prefix of this sequence, a new training instance is created in which the model first generates an internal reasoning segment enclosed within <think> ... </think> tags and then outputs a single action for that step. Crucially, only this action is compared against the expert’s action, using a sequence similarity metric derived from Python’s difflib library. This design provides dense, stepwise rewards even when the final answer is incorrect, while leaving the reasoning text unconstrained, so the model can explore its own problem-solving pathways rather than copying the teacher verbatim.
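The prefix construction and the action-only reward described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the function names, the instance dictionary layout, and the `format_penalty` of -1.0 for outputs that lack a closed `<think>` block are all assumptions made for this example. Only `difflib.SequenceMatcher` comes from the text.

```python
import difflib
import re

# Matches the reasoning segment; everything after it is treated as the action.
THINK_RE = re.compile(r"<think>.*?</think>", re.DOTALL)


def build_step_instances(problem, expert_actions):
    """Create one training instance per prefix of the expert action sequence.

    Instance k shows the model the problem plus the first k expert actions
    and asks it to produce action k (hypothetical layout for illustration).
    """
    return [
        {
            "problem": problem,
            "previous_actions": expert_actions[:k],
            "target_action": target,
        }
        for k, target in enumerate(expert_actions)
    ]


def extract_action(model_output):
    """Return the text after </think>, or None if no closed think block exists."""
    match = THINK_RE.search(model_output)
    if match is None:
        return None
    return model_output[match.end():].strip()


def step_reward(model_output, expert_action, format_penalty=-1.0):
    """Score only the action; the reasoning inside <think> is never compared.

    format_penalty is an assumed value for malformed outputs.
    """
    action = extract_action(model_output)
    if not action:
        return format_penalty
    # ratio() is 2 * matches / total length, a value in [0, 1].
    return difflib.SequenceMatcher(None, action, expert_action).ratio()
```

Two outputs with completely different reasoning but the same action receive the same reward, which is what leaves the reasoning text unconstrained. A partially matching action still earns a reward between 0 and 1, so the model gets a signal at every step.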

Quantitative Gains in Mathematical Reasoning

All models evaluated were initialized from Qwen2.5 7B Instruct and trained on the identical DeepSeek R1 formatted s1K 1.1 dataset, ensuring fair comparisons. The key performance metrics on math benchmarks are as follows:

- Base Qwen2.5 7B Instruct: AMC23 (greedy) 50.0%, AIME24 (greedy) 13.3%, AIME25 (greedy) 6.7%

- SRL alone: AMC23 50.0%, AIME24 16.7%, AIME25 13.3%

- SRL followed by RL with Verifiable Rewards (RLVR): AMC23 57.5%, AIME24 20.0%, AIME25 10.0%

These results show that SRL avoids the performance drop seen with supervised fine-tuning: SRL alone matches the base model on AMC23 and improves on it for AIME24 and AIME25. Following SRL with RLVR gives the best AMC23 and AIME24 scores among the listed configurations, although its AIME25 score (10.0%) sits below that of SRL alone (13.3%).
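To make the comparison concrete, the percentage-point changes relative to the base model can be computed directly from the greedy scores listed above. This is only a small arithmetic sketch; the dictionary layout and the `delta_vs_base` helper are made up for this example.

```python
# Greedy-decoding scores (percent) copied from the list above.
scores = {
    "base": {"AMC23": 50.0, "AIME24": 13.3, "AIME25": 6.7},
    "srl": {"AMC23": 50.0, "AIME24": 16.7, "AIME25": 13.3},
    "srl_then_rlvr": {"AMC23": 57.5, "AIME24": 20.0, "AIME25": 10.0},
}


def delta_vs_base(name):
    """Percentage-point change of a configuration relative to the base model."""
    base = scores["base"]
    return {bench: round(scores[name][bench] - base[bench], 1) for bench in base}


for name in ("srl", "srl_then_rlvr"):
    print(name, delta_vs_base(name))
```

Neither configuration scores below the base model on any of the three benchmarks, and the two differ in which AIME set benefits more.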

Enhancing Software Engineering Capabilities with SRL

The research team extended SRL to software engineering tasks using Qwen2.5 Coder 7B Instruct. They used 5,000 verified agent trajectories generated by Claude 3.7 Sonnet and decomposed them into 134,000 stepwise training instances. Evaluation on the SWE-Bench Verified benchmark showed substantial improvements:

- Base model: 5.8% accuracy in oracle file edit mode, 3.2% end-to-end

- SWE Gym 7B baseline: 8.4% oracle, 4.2% end-to-end

- SRL-trained model: 14.8% oracle, 8.6% end-to-end

SRL more than doubles the base model’s score in both settings (5.8% to 14.8% oracle, 3.2% to 8.6% end-to-end) and also surpasses the SWE Gym 7B supervised fine-tuning baseline, demonstrating its effectiveness in complex code generation and editing tasks.
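The size of the gain is easiest to see as a ratio. The snippet below is a small arithmetic sketch using only the numbers listed above; the variable names and the `ratio` helper are made up for this example.

```python
# SWE-Bench Verified resolve rates (percent) copied from the list above.
results = {
    "base": {"oracle": 5.8, "end_to_end": 3.2},
    "swe_gym_7b": {"oracle": 8.4, "end_to_end": 4.2},
    "srl": {"oracle": 14.8, "end_to_end": 8.6},
}


def ratio(model, reference, setting):
    """How many times higher `model` scores than `reference` in one setting."""
    return results[model][setting] / results[reference][setting]


for setting in ("oracle", "end_to_end"):
    print(
        setting,
        f"SRL vs base: {ratio('srl', 'base', setting):.2f}x,",
        f"SRL vs SWE Gym 7B: {ratio('srl', 'swe_gym_7b', setting):.2f}x",
    )
```

Against the base model the ratio is above 2.5 in both settings. Against the SWE Gym 7B baseline it is roughly 1.8 in oracle mode and about 2 end-to-end.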

Essential Insights and Advantages of SRL

- Stepwise Reasoning and Reward: SRL reframes difficult reasoning as a sequence of action predictions, where the model first articulates an internal thought process before selecting an action. Only the action is rewarded based on similarity to expert steps, providing meaningful feedback even when the overall solution is incorrect.

- Robustness Against Overfitting and Collapse: Unlike supervised fine-tuning, SRL avoids overfitting to lengthy demonstrations. Unlike RLVR, which receives no useful signal when every rollout ends in a wrong final answer, SRL remains stable because each step still earns a similarity-based reward.

- Optimal Training Pipeline: The best results come from initializing with SRL and then applying RLVR, a pipeline that reaches the highest AMC23 and AIME24 scores among the reported configurations.

- Cross-Domain Applicability: The SRL framework generalizes effectively from mathematical problem-solving to agentic software engineering, leveraging verified expert trajectories to boost performance across domains.

- Efficiency and Simplicity: SRL maintains a GRPO-style objective without requiring an additional reward model. It relies solely on expert action sequences and lightweight string similarity metrics, making it practical for small, challenging datasets.
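The contrast between dense stepwise rewards and sparse outcome rewards under a GRPO-style objective can be illustrated with the group-normalized advantage commonly used in GRPO. This is a minimal sketch under stated assumptions: it shows the standard mean and standard-deviation normalization, not the authors' exact objective, and the reward values are made-up numbers for illustration.

```python
from statistics import mean, pstdev


def group_advantages(rewards, eps=1e-6):
    """Normalize rewards within one group of rollouts for the same prompt.

    Each advantage is (reward - group mean) / (group std + eps). If every
    rollout gets the same reward, all advantages are zero and the group
    contributes no gradient.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Outcome-only reward on a hard problem: every rollout ends in a wrong answer.
binary_rewards = [0.0, 0.0, 0.0, 0.0]

# Step-level similarity rewards for the same rollouts (hypothetical values).
dense_rewards = [0.12, 0.35, 0.61, 0.20]

print(group_advantages(binary_rewards))  # all zeros: nothing to learn from
print(group_advantages(dense_rewards))   # nonzero: closer actions are favored
```

With outcome-only rewards, a group in which every rollout fails produces zero advantages. With similarity-based rewards, rollouts whose action is closer to the expert step get a positive advantage even though none of them is fully correct, and no separate reward model is needed to compute them.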

Concluding Perspectives on SRL’s Impact

Supervised Reinforcement Learning is a pragmatic step forward: it builds process supervision directly into the reinforcement learning reward, so the model receives informative feedback even in hard regimes where supervised fine-tuning overfits and outcome-based RL sees no correct rollouts. The same training recipe improves both mathematical reasoning and software engineering benchmarks, which speaks to its versatility. The strongest math results reported here come from running SRL first and RLVR second, positioning SRL as a viable and accessible strategy for open-source models tackling complex tasks. Overall, SRL bridges supervised learning and reinforcement learning and gives model developers a simple way to improve reasoning on small, difficult datasets.