Contents Overview

Building dependable multi-agent systems is largely a memory-architecture problem. When agents call tools, collaborate, and run extended workflows, the system needs explicit strategies for what information is stored, how it is retrieved, and how stale, inaccurate, or missing data is handled.

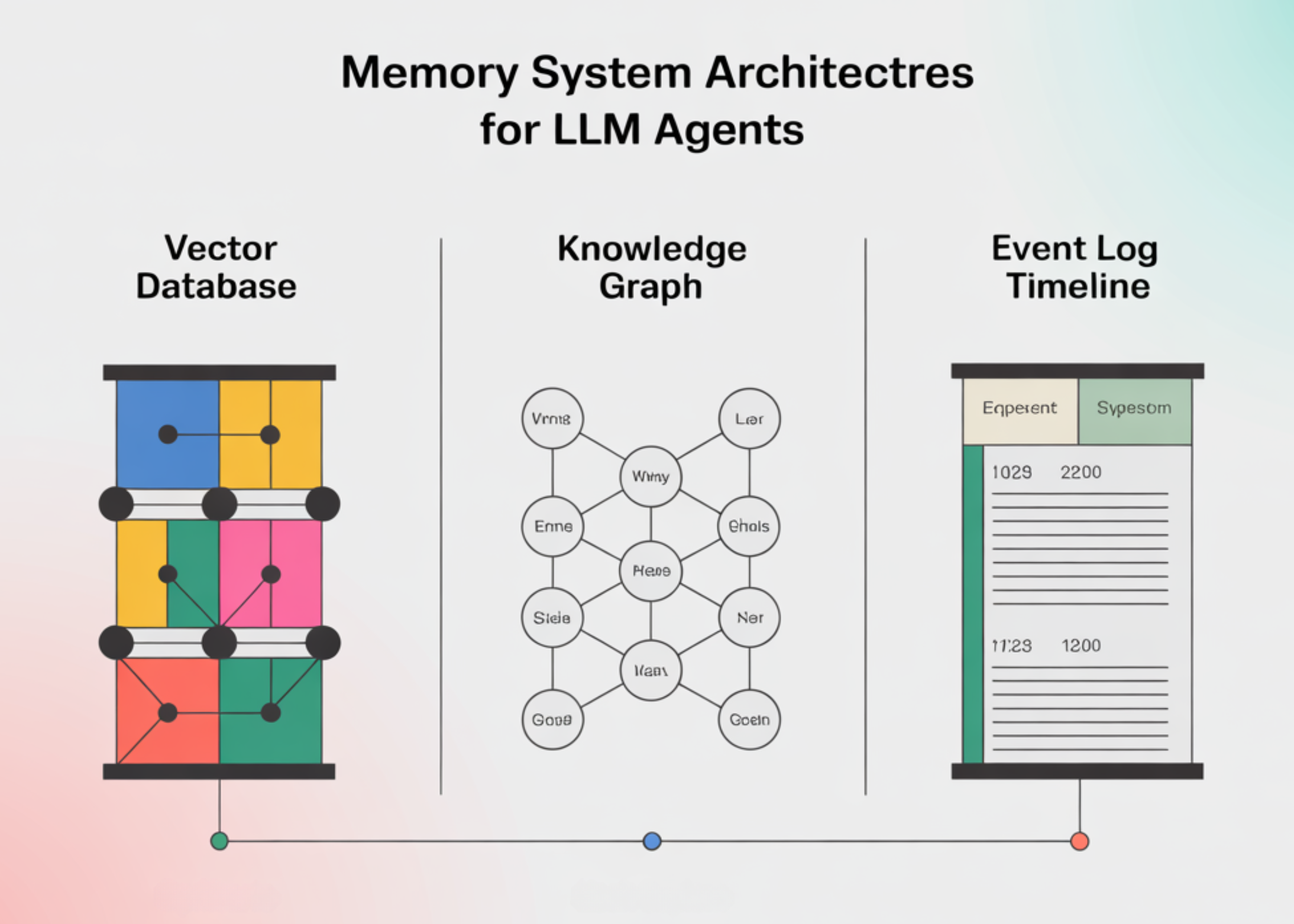

This discussion explores six prevalent memory architecture patterns utilized in agent frameworks, categorized into three main groups:

- Vector-based memory

- Graph-structured memory

- Event logs and execution histories

Our analysis emphasizes retrieval speed, accuracy of recall, and common failure scenarios in multi-agent planning.

Overview of Memory Architectures

| Category | Memory Model | Data Representation | Advantages | Limitations |
|---|---|---|---|---|
| Vector | Basic Vector Retrieval-Augmented Generation (RAG) | Embedding vectors | Simple implementation, rapid approximate nearest neighbor (ANN) search, broad tool support | Lacks temporal and structural context, prone to semantic drift |
| Vector | Hierarchical Vector Memory (MemGPT-style virtual context) | Active working set plus vector archive | Enhanced reuse of critical information, controlled context size | Errors in paging policies, divergence among agents |
| Graph | Temporal Knowledge Graph Memory (e.g., Zep, Graphiti) | Time-stamped knowledge graph | Robust temporal and cross-session reasoning, unified shared state | Requires schema design and update pipelines, potential for outdated edges |
| Graph | Knowledge-Graph RAG (GraphRAG) | Knowledge graph with hierarchical communities | Supports multi-document, multi-hop queries, global summarization | Bias in graph construction and summarization, complex traceability |
| Event / Logs | Execution Logs and Checkpoints (ALAS, LangGraph) | Ordered, versioned event logs | Authoritative action records, enables replay and targeted repair | Log size growth, incomplete instrumentation, requires side-effect-safe replay |
| Event / Logs | Episodic Long-Term Memory | Segmented episodes with metadata | Supports long-term recall and pattern reuse across tasks | Errors in episode boundaries, consolidation mistakes, agent misalignment |

Next, we delve into each memory category in detail.

1. Vector-Based Memory Architectures

1.1 Basic Vector RAG

Definition

This is the foundational approach in many retrieval-augmented generation and agent frameworks:

- Textual data (messages, tool outputs, documents) are transformed into embedding vectors.

- These vectors are stored in an approximate nearest neighbor (ANN) index, for example an HNSW graph index or an index built with a library such as FAISS or ScaNN.

- Queries are embedded similarly, and the system retrieves the top-k closest vectors, optionally applying reranking.

This method forms the core of vector store memory in most large language model (LLM) orchestration tools.
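The embed, store, and top-k retrieve loop described above can be sketched in a few lines. This is a minimal illustration, not any framework's API: `embed` is a toy bag-of-words stand-in for a real embedding model, `VectorMemory` is a hypothetical class, and the search is exact brute force where a production system would use an ANN index.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase bag-of-words counts (stand-in for a real model)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class VectorMemory:
    """Hypothetical store with exact top-k search (an ANN index would replace the scan)."""

    def __init__(self) -> None:
        self.items = []  # list of (text, vector) pairs

    def add(self, text: str) -> None:
        self.items.append((text, embed(text)))

    def search(self, query: str, k: int = 2):
        q = embed(query)
        scored = [(cosine(q, vec), text) for text, vec in self.items]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return scored[:k]


memory = VectorMemory()
memory.add("budget limit for project alpha is 5000 dollars")
memory.add("user prefers weekly status reports")
memory.add("deploy tool returned exit code 0")

results = memory.search("what is the budget limit", k=1)
print(results[0][1])
```

Swapping `embed` for a learned embedding model and the linear scan for an ANN index changes the quality and speed of retrieval, but not the shape of the loop.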

Performance and Latency

ANN indexes are optimized for sublinear retrieval times relative to dataset size:

- Graph-based ANN structures like HNSW typically exhibit near-logarithmic latency growth as the corpus expands.

- On well-configured single-node setups, querying millions of vectors usually takes only tens of milliseconds, excluding reranking overhead.

Key latency contributors include:

- ANN search operations.

- Optional reranking steps (e.g., cross-encoder models).

- LLM attention over concatenated retrieved content.

Recall Accuracy

High recall is observed when:

- Queries relate to recent or local context (“what was just discussed?”).

- Information is concentrated in a few chunks with embeddings well-aligned to the query.

However, vector RAG struggles with:

- Temporal queries (e.g., “what decision was made last week?”).

- Cross-session reasoning and extended histories.

- Multi-hop questions requiring explicit relational chains.

Benchmarks like Deep Memory Retrieval (DMR) and LongMemEval highlight these limitations, showing performance degradation on long-term and temporal tasks.

Common Failure Scenarios in Multi-Agent Planning

- Missed constraints: critical global rules (budget limits, compliance) are omitted due to retrieval misses, causing invalid plans.

- Semantic drift: retrieved neighbors match topic but differ in key identifiers (region, user ID), leading to incorrect parameters.

- Context dilution: concatenation of many partially relevant chunks causes the model to underweight crucial information, especially in lengthy contexts.
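One common mitigation for semantic drift is to filter on hard identifiers before ranking by similarity, so that a topically similar chunk from the wrong region or user can never win. The sketch below is illustrative only: `Chunk`, `retrieve`, and the `region` metadata key are assumptions, and token overlap stands in for embedding similarity.

```python
from dataclasses import dataclass, field


@dataclass
class Chunk:
    text: str
    metadata: dict = field(default_factory=dict)


def token_overlap(query: str, text: str) -> int:
    """Stand-in for embedding similarity: number of shared lowercase tokens."""
    return len(set(query.lower().split()) & set(text.lower().split()))


def retrieve(chunks, query, required, k=1):
    """Keep only chunks whose metadata matches every required key, then rank."""
    candidates = [
        c for c in chunks
        if all(c.metadata.get(key) == value for key, value in required.items())
    ]
    candidates.sort(key=lambda c: token_overlap(query, c.text), reverse=True)
    return candidates[:k]


chunks = [
    Chunk("shipping rate limit is 100 requests per minute", {"region": "us"}),
    Chunk("shipping rate limit is 20 requests per minute", {"region": "eu"}),
]

# Without a filter, both chunks score the same and the first one wins by position.
unfiltered = retrieve(chunks, "shipping rate limit", required={})
# With the filter, only the chunk for the requested region is eligible.
filtered = retrieve(chunks, "shipping rate limit", required={"region": "eu"})
```

The filter does not help with missed constraints or context dilution; those usually need pinned context or tighter top-k and reranking.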

Ideal Use Cases

- Single-agent or short-duration tasks.

- Question answering over small to medium-sized datasets.

- Initial semantic indexing over logs, documents, or episodes, rather than as the sole memory source.

1.2 Hierarchical Vector Memory (MemGPT-Style Virtual Context)

Concept Overview

MemGPT introduces a virtual memory model for LLMs, combining a limited active working context with a larger external archive. The model dynamically manages memory by invoking tools to “swap in” or “archive” content, deciding what remains in immediate context versus long-term storage.

System Design

- Active context: tokens currently fed into the LLM, analogous to RAM.

- Archive/external memory: larger storage, often implemented with vector databases and object stores.

- The LLM orchestrates:

  - Loading archived data into active context.

  - Evicting less relevant context back to archive.
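The active-context and archive split can be sketched as a small paging structure. This is a simplification under stated assumptions: in MemGPT the model itself decides what to load and evict through tool calls, whereas the hypothetical `PagedMemory` class below uses a fixed least-recently-used policy, and a plain dictionary stands in for the vector database or object store.

```python
from collections import OrderedDict


class PagedMemory:
    """Hypothetical working set with LRU eviction to an archive."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.active = OrderedDict()  # analogous to RAM: what the LLM sees
        self.archive = {}            # stand-in for a vector DB / object store

    def write(self, key: str, value: str) -> None:
        self.active[key] = value
        self.active.move_to_end(key)  # mark as most recently used
        self._evict()

    def read(self, key: str):
        if key in self.active:
            self.active.move_to_end(key)
            return self.active[key]
        if key in self.archive:
            value = self.archive.pop(key)  # page in from the archive
            self.write(key, value)
            return value
        return None

    def _evict(self) -> None:
        # Move least recently used entries to the archive until we fit.
        while len(self.active) > self.capacity:
            old_key, old_value = self.active.popitem(last=False)
            self.archive[old_key] = old_value


mem = PagedMemory(capacity=2)
mem.write("goal", "migrate database")
mem.write("constraint", "no downtime")
mem.write("note", "staging is green")  # evicts "goal" to the archive
paged_in = mem.read("goal")            # pages "goal" back in, evicting "constraint"
```

Note that the final read pushes the `constraint` entry out of the working set, which is exactly the kind of paging mistake that can lead to constraint violations if the policy is not tuned.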

Latency Characteristics

Two operational modes exist:

- Within active context: no retrieval step is needed; the only cost is the model's attention over tokens already in context.

- Archive access: resembles basic vector RAG retrieval but is often scoped by task, topic, or session, with caching of frequently used entries.

This approach reduces the need to send large, irrelevant context to the model at every step, balancing retrieval cost and context size.

Recall Improvements

Compared to plain vector RAG:

- Frequently accessed information remains in the working set, avoiding repeated ANN retrieval.

- Less common or older data still depends on vector search quality.

The main new challenge is managing paging policies effectively.

Failure Modes in Multi-Agent Contexts

- Paging mistakes: important data may be archived prematurely or not recalled when needed, causing constraint violations.

- Agent divergence: individual agents maintaining separate working sets over a shared archive can develop inconsistent views.

- Complex debugging: failures arise from intertwined reasoning and memory management decisions.

Best Applications

- Extended conversations and workflows where unbounded context growth is impractical.

- Systems requiring vector RAG semantics with controlled context size.

- Environments where investment in paging policy design and tuning is feasible.

2. Graph-Based Memory Architectures

2.1 Temporal Knowledge Graph Memory (Zep / Graphiti)

Definition

Zep offers a memory layer for AI agents structured as a temporal knowledge graph (Graphiti), integrating:

- Conversational histories.

- Structured business data.

- Temporal metadata and versioning.

Zep's reported evaluations on benchmarks like DMR and LongMemEval show:

- 94.8% accuracy for Zep on DMR, compared with 93.4% for MemGPT.

- Up to 18.5% accuracy improvement and roughly 90% lower response latency on LongMemEval, compared with baseline implementations, for complex temporal reasoning.

These results highlight the advantage of explicit temporal and relational modeling over pure vector retrieval for long-term tasks.

System Components

- Nodes: entities (users, tickets, resources) and events (messages, tool calls).

- Edges: relationships such as created_by, depends_on, updated_by, discussed_in.

- Temporal indexing: timestamps and validity intervals on nodes and edges.

- APIs: for inserting new facts and querying along entity and temporal dimensions.

The knowledge graph can be complemented by vector indices for semantic entry points.
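To make validity intervals concrete, the sketch below stores edges with `valid_from` and `valid_to` timestamps and answers "as of" queries. It is not Zep's or Graphiti's API: `Edge`, `TemporalGraph`, `assert_fact`, and `query` are hypothetical names, and the sketch assumes each (source, relation) pair holds a single value at a time.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class Edge:
    source: str
    relation: str
    target: str
    valid_from: datetime
    valid_to: Optional[datetime] = None  # None means the fact is still valid


class TemporalGraph:
    """Hypothetical edge store with validity intervals."""

    def __init__(self) -> None:
        self.edges = []

    def assert_fact(self, source, relation, target, at: datetime) -> None:
        # Close the currently open edge for this (source, relation), if any,
        # instead of deleting it, so history remains queryable.
        for edge in self.edges:
            if (edge.source == source and edge.relation == relation
                    and edge.valid_to is None):
                edge.valid_to = at
        self.edges.append(Edge(source, relation, target, valid_from=at))

    def query(self, source, relation, as_of: datetime):
        return [
            e.target for e in self.edges
            if e.source == source and e.relation == relation
            and e.valid_from <= as_of
            and (e.valid_to is None or as_of < e.valid_to)
        ]


graph = TemporalGraph()
graph.assert_fact("ticket-42", "assigned_to", "alice", datetime(2024, 1, 1))
graph.assert_fact("ticket-42", "assigned_to", "bob", datetime(2024, 2, 1))

before = graph.query("ticket-42", "assigned_to", datetime(2024, 1, 15))
after = graph.query("ticket-42", "assigned_to", datetime(2024, 3, 1))
```

Keeping superseded edges with a closed interval, rather than overwriting them, is what allows questions such as "who owned this ticket last month?" to be answered later.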

Latency Profile

Graph queries typically involve limited-depth traversals:

- For example, to find the “latest configuration passing checks,” the system locates the entity node and traverses edges filtered by time.

- Query complexity depends on the size of the local neighborhood, not the entire graph.
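A limited-depth traversal can be sketched as a breadth-first search that stops expanding at a hop limit, so the work is bounded by the local neighborhood rather than the whole graph. The adjacency map and the `neighborhood` helper are illustrative assumptions, not part of any specific graph store.

```python
from collections import deque


def neighborhood(adjacency, start, max_depth):
    """Return {node: hops} for nodes reachable from start within max_depth hops."""
    depth = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if depth[node] == max_depth:
            continue  # do not expand beyond the hop limit
        for neighbor in adjacency.get(node, []):
            if neighbor not in depth:
                depth[neighbor] = depth[node] + 1
                queue.append(neighbor)
    return depth


adjacency = {
    "ticket-A": ["ticket-B"],       # ticket-A depends_on ticket-B
    "ticket-B": ["policy-P"],       # ticket-B affected_by policy-P
    "policy-P": ["team-security"],  # policy-P owned_by team-security
}

reachable = neighborhood(adjacency, "ticket-A", max_depth=2)
```

With `max_depth=2` the search reaches `policy-P` but never touches `team-security`, regardless of how large the rest of the graph is.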

Zep reports significant latency improvements over baselines that scan long contexts or use less structured retrieval.

Recall Characteristics

Graph memory excels when:

- Queries focus on specific entities and temporal aspects.

- Cross-session consistency is required (e.g., “what did this user request previously?”).

- Multi-hop reasoning is necessary (e.g., “if ticket A depends on B and B failed after policy change P, what caused the failure?”).

Recall depends heavily on graph completeness; missing edges or inaccurate timestamps reduce effectiveness.

Failure Modes in Multi-Agent Planning

- Outdated edges: delays in updating the graph cause plans to rely on obsolete information.

- Schema drift: changes in graph schema without corresponding updates in retrieval or planning logic introduce subtle errors.

- Access control issues: in multi-tenant setups, agents may have partial graph views, requiring planners to handle visibility constraints.

Ideal Use Cases

- Multi-agent systems coordinating over shared entities like tickets, users, or inventories.

- Long-duration tasks where temporal order is critical.

- Environments capable of maintaining ETL or streaming pipelines to update the knowledge graph.

2.2 Knowledge-Graph RAG (GraphRAG)

Overview

GraphRAG is a retrieval-augmented generation framework developed by Microsoft that constructs an explicit knowledge graph from a document corpus and organizes it with hierarchical community detection (e.g., the hierarchical Leiden algorithm). A summary is generated for each community so that queries can be answered from a small number of summaries rather than many raw chunks.

Process Workflow

- Extract entities and relationships from source documents.

- Build the knowledge graph.

- Apply community detection to form a multi-level hierarchy.

- Create summaries for communities and key nodes.

- At query time:

  - Identify relevant communities using keywords, embeddings, or graph heuristics.

  - Retrieve summaries and supporting nodes.

  - Feed this information into the LLM for response generation.
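The workflow above can be sketched end to end on toy data. Several pieces are deliberate stand-ins and should be read as assumptions: relations are given rather than extracted by an LLM, connected components replace hierarchical Leiden community detection, `summarize` concatenates entity names instead of calling a model, and community selection uses keyword overlap. None of the function names come from the GraphRAG library.

```python
from collections import defaultdict


def build_graph(relations):
    """Build an undirected entity graph from (entity, entity) pairs."""
    graph = defaultdict(set)
    for a, b in relations:
        graph[a].add(b)
        graph[b].add(a)
    return graph


def connected_components(graph):
    """Stand-in for community detection: one community per connected component."""
    seen, communities = set(), []
    for start in sorted(graph):
        if start in seen:
            continue
        stack, members = [start], set()
        while stack:
            node = stack.pop()
            if node in members:
                continue
            members.add(node)
            stack.extend(graph[node] - members)
        seen |= members
        communities.append(members)
    return communities


def summarize(members):
    """Stand-in for an LLM-written community summary."""
    return "Community covering: " + ", ".join(sorted(members))


def select_summary(query, communities):
    """Pick the community sharing the most terms with the query."""
    terms = set(query.lower().split())
    best = max(communities, key=lambda members: len(terms & members))
    return summarize(best)


relations = [("auth", "gateway"), ("gateway", "cache"), ("billing", "invoices")]
communities = connected_components(build_graph(relations))
answer_context = select_summary("why did the gateway cache fail", communities)
print(answer_context)
```

In a real pipeline, `answer_context` plus supporting nodes would be passed to the LLM as the retrieved context for response generation.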

Latency Considerations

- Indexing is more resource-intensive than basic RAG due to graph construction, clustering, and summarization.

- Query latency can be competitive or superior for large datasets because:

  - Only a small number of summaries are retrieved.

  - Long contexts built from many raw chunks are avoided.

Latency depends mainly on community search (often vector-based) and local graph traversal within selected communities.

Recall Performance

GraphRAG outperforms plain vector RAG when:

- Handling multi-document and multi-hop queries.

- Global structural understanding is needed (e.g., “how did this design evolve?” or “what sequence of incidents caused this outage?”).

- Answers require integrating evidence from multiple documents.

Recall quality depends on graph completeness and community detection accuracy; missing relations reduce effectiveness.

Potential Pitfalls

- Bias in graph construction: extraction errors or missing edges create blind spots.

- Over-summarization: summaries may omit rare but critical details.

- Traceability challenges: linking answers back to raw evidence adds complexity, important in regulated or safety-critical domains.

Recommended Scenarios

- Large-scale knowledge bases and documentation repositories.

- Systems requiring agents to answer complex design, policy, or root-cause questions spanning many documents.

- Contexts where one-time indexing and ongoing maintenance costs are acceptable.

3. Event Logs and Execution History Systems

3.1 Execution Logs and Checkpoints (ALAS, LangGraph)

Concept

These approaches treat agent actions and decisions as primary data, recording them in structured logs or checkpoints.

- ALAS: a transactional multi-agent framework maintaining a versioned execution log with:

  - Validator isolation: a separate LLM verifies plans and results within its own context.

  - Localized cascading repair: only minimal log segments are modified upon failure.

- LangGraph: provides thread-scoped checkpoints capturing agent graph states (messages, tool outputs, node states) that can be saved, resumed, or branched.

These logs and checkpoints serve as the definitive record of:

- Actions performed.

- Inputs and outputs.

- Control flow decisions.
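A versioned, append-only log with save and resume can be sketched as follows. This is a generic illustration rather than the ALAS or LangGraph implementation: `Event`, `ExecutionLog`, `checkpoint`, and `restore` are hypothetical names, and a JSON string stands in for durable storage.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class Event:
    version: int   # monotonically increasing position in the log
    agent: str
    action: str
    inputs: dict
    outputs: dict


class ExecutionLog:
    """Hypothetical append-only log with JSON checkpoints."""

    def __init__(self) -> None:
        self._events = []

    def append(self, agent, action, inputs, outputs) -> Event:
        event = Event(len(self._events) + 1, agent, action, inputs, outputs)
        self._events.append(event)
        return event

    def since(self, version: int):
        """Events recorded after the given version (0 returns everything)."""
        return self._events[version:]

    def checkpoint(self) -> str:
        return json.dumps([asdict(e) for e in self._events])

    @classmethod
    def restore(cls, snapshot: str) -> "ExecutionLog":
        log = cls()
        log._events = [Event(**raw) for raw in json.loads(snapshot)]
        return log


log = ExecutionLog()
log.append("planner", "create_plan", {"goal": "refund order 17"}, {"steps": 2})
log.append("executor", "call_tool", {"tool": "refund", "order": 17}, {"status": "ok"})

snapshot = log.checkpoint()
resumed = ExecutionLog.restore(snapshot)
```

Because every event carries a version, a repair step can target `since(n)` for the failing segment instead of discarding the whole run.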

Latency Profile

- During normal forward execution:

  - Reading the latest log entries or the most recent checkpoint is effectively constant time and touches only a small amount of data.

  - Most latency arises from LLM inference and tool execution, not log access.

- For analytics or global queries:

  - Secondary indexes or offline processing are necessary; raw scans scale linearly with log size.

Recall Reliability

For queries like “what actions were taken?”, “which tools were invoked with what parameters?”, or “what was the system state before failure?”, recall is effectively perfect provided:

- All relevant events are properly instrumented.

- Logs are correctly persisted and retained.

However, logs alone do not offer semantic generalization; vector or graph indices are typically layered on top when semantic retrieval across executions is needed.

Common Failure Points

- Log bloat: high-throughput systems generate large logs; inadequate retention or compaction can silently lose history.

- Incomplete instrumentation: missing traces create blind spots in replay and debugging.

- Unsafe replay: naive re-execution of logs can cause unintended side effects (e.g., duplicate payments) unless idempotency and compensation mechanisms are in place.

ALAS addresses some of these challenges through transactional semantics and localized repair.
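The unsafe-replay problem is usually handled with idempotency keys: each side-effecting call carries a stable key, and the tool refuses to apply the same key twice. The sketch below is a generic illustration and not ALAS's mechanism; `PaymentGateway`, `charge`, and the log entry fields are hypothetical.

```python
class PaymentGateway:
    """Hypothetical side-effecting tool that remembers idempotency keys."""

    def __init__(self) -> None:
        self.charges = {}  # idempotency key -> amount actually charged

    def charge(self, idempotency_key: str, amount: int) -> str:
        if idempotency_key in self.charges:
            return "duplicate-skipped"  # replayed call: no second side effect
        self.charges[idempotency_key] = amount
        return "charged"


def replay(log, gateway):
    """Re-execute every logged payment against the gateway."""
    return [gateway.charge(entry["key"], entry["amount"]) for entry in log]


log = [
    {"key": "order-17", "amount": 50},
    {"key": "order-18", "amount": 20},
]

gateway = PaymentGateway()
first_run = replay(log, gateway)   # performs the real charges
second_run = replay(log, gateway)  # replays the same log safely
total_charged = sum(gateway.charges.values())
```

Idempotency covers repeated calls; undoing a call that should not have happened at all still requires a separate compensation step.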

When Essential

- Systems requiring strong observability, auditability, and debugging capabilities.

- Multi-agent workflows with complex failure handling.

- Environments favoring automated repair or partial re-planning over full restarts.

3.2 Episodic Long-Term Memory

Definition

Episodic memory organizes interactions into episodes: coherent segments of activity containing:

- Task descriptions and initial conditions.

- Relevant contextual information.

- Sequences of actions, often linked to execution logs.

- Outcomes and performance metrics.

Episodes are indexed by:

- Metadata such as time ranges, participants, and tools used.

- Embeddings for similarity-based retrieval.

- Optional summaries for quick reference.

Some systems periodically extract patterns from episodes to form higher-level knowledge or fine-tune specialized models.

Latency Characteristics

Retrieval typically involves two steps:

- Filtering episodes by metadata and/or vector similarity.

- Searching within selected episodes or directly accessing referenced logs.
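The two-step retrieval can be sketched as a metadata filter followed by a similarity ranking over episode summaries. All names here are illustrative assumptions: `Episode` and `recall` are hypothetical, and Jaccard token overlap stands in for embedding similarity.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Episode:
    task: str
    day: date
    tools: frozenset
    summary: str
    outcome: str


def jaccard(a: str, b: str) -> float:
    """Stand-in for embedding similarity between two short texts."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    return len(ta & tb) / len(ta | tb) if ta | tb else 0.0


def recall(episodes, query, tool, since, k=1):
    # Step 1: cheap metadata filter narrows the candidate set.
    shortlist = [e for e in episodes if tool in e.tools and e.day >= since]
    # Step 2: rank the survivors by similarity to the query.
    shortlist.sort(key=lambda e: jaccard(query, e.summary), reverse=True)
    return shortlist[:k]


episodes = [
    Episode("migration", date(2024, 3, 1), frozenset({"pg_dump", "terraform"}),
            "migrated postgres database to new cluster", "success"),
    Episode("incident", date(2024, 4, 2), frozenset({"kubectl"}),
            "restarted pods after memory leak", "success"),
    Episode("migration", date(2022, 1, 5), frozenset({"pg_dump"}),
            "migrated postgres database with downtime", "rolled back"),
]

matches = recall(episodes, "migrate postgres database",
                 tool="pg_dump", since=date(2023, 1, 1))
```

From a matched episode, the system can then follow its references into the execution log to retrieve the exact action sequence.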

While episodic retrieval may be slower than flat vector search on small datasets, it scales better over long histories by avoiding exhaustive event-level searches.

Recall Advantages

Episodic memory enhances recall for:

- Extended tasks: e.g., “Have we performed a similar system migration before?” or “How was this incident resolved previously?”

- Pattern reuse: retrieving entire workflows and their outcomes, not just isolated facts.

Recall quality depends on accurate episode segmentation and indexing.

Failure Modes

- Episode boundary errors: episodes that are too broad (mixing unrelated tasks) or too narrow (splitting tasks mid-way).

- Consolidation errors: incorrect abstractions during pattern distillation introduce bias into models or policies.

- Agent misalignment: episodes defined per agent rather than per task complicate cross-agent reasoning.

Ideal Use Cases

- Long-lived agents and workflows spanning weeks or months.

- Systems where recalling “similar past cases” is more valuable than raw data.

- Training or adaptation pipelines leveraging episodic feedback for model updates.

Summary and Recommendations

- Memory design is a system-level challenge: Effective multi-agent systems require deliberate strategies for data storage, retrieval, and handling of stale or missing information.

- Vector memory offers speed but limited structure: Basic and hierarchical vector stores provide fast, scalable retrieval but struggle with temporal reasoning, cross-session state, and multi-hop dependencies, limiting their reliability as sole memory backbones.

- Graph memory addresses temporal and relational gaps: Temporal knowledge graphs and knowledge-graph RAG (GraphRAG) improve recall and latency for entity-focused, temporal, and multi-document queries by explicitly modeling entities, relations, and time.

- Event logs and checkpoints provide authoritative records: Execution logs and checkpoints capture exact agent actions, enabling replay, targeted repair, and robust observability in production environments.

- Robust architectures integrate multiple memory layers: Practical agent systems combine vector, graph, and event/episodic memories, each with defined roles and known limitations, rather than relying on a single “magic” memory solution.