
Understanding Tokenization and Chunking in AI

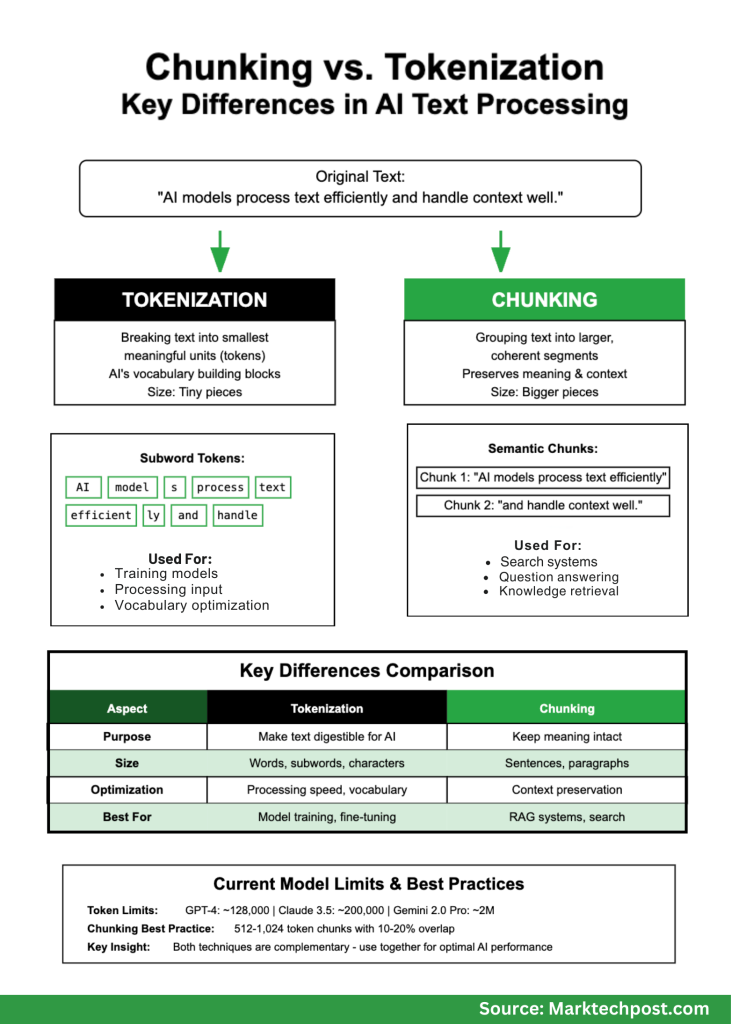

In artificial intelligence and natural language processing, two foundational techniques, tokenization and chunking, are often confused. Both involve segmenting text, but they operate at different levels and serve different purposes. Understanding the difference is essential for building AI systems that are both effective and efficient.

Imagine preparing a meal. Tokenization is slicing the ingredients into manageable pieces, while chunking is grouping those pieces into dishes that make sense together. Both steps matter, but they solve different problems.

Decoding Tokenization: Breaking Text into Fundamental Units

Tokenization divides text into the smallest units an AI model can process, called tokens. Tokens make up the model's vocabulary and are often smaller than full words.

Common tokenization techniques include:

- Word-level tokenization: Splits text at spaces and punctuation marks. While simple, it struggles with rare or unseen words.

- Subword tokenization: Uses algorithms such as Byte Pair Encoding (BPE) and WordPiece, or the SentencePiece toolkit, to break words into frequently occurring character sequences. Any word can be assembled from smaller known pieces, so this method handles novel or uncommon words well.

- Character-level tokenization: Treats each character as a token, resulting in very long sequences that can be computationally intensive.
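Word-level and character-level tokenization are simple enough to sketch directly. The Python below is a minimal illustration. It assumes a regular expression that treats runs of letters, digits, and apostrophes as words and turns every other non-space character into its own token. Real word tokenizers handle hyphens, contractions, and Unicode more carefully. The function names are hypothetical.

```python
import re

def word_tokenize(text: str) -> list[str]:
    # Runs of letters/digits/apostrophes are words; each other non-space character
    # (e.g. punctuation) becomes a separate token.
    return re.findall(r"[A-Za-z0-9']+|[^\sA-Za-z0-9']", text)

def char_tokenize(text: str) -> list[str]:
    # Every character, including spaces and punctuation, is its own token.
    return list(text)

sentence = "AI models analyze text effectively."
words = word_tokenize(sentence)
chars = char_tokenize(sentence)
print(words)                 # ['AI', 'models', 'analyze', 'text', 'effectively', '.']
print(len(words), len(chars))  # 6 35
```

The same sentence yields 6 word tokens but 35 character tokens. That gap is the sequence-length cost of character-level tokenization.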

Example:

- Input: “AI models analyze text effectively.”

- Word tokens: [“AI”, “models”, “analyze”, “text”, “effectively”, “.”]

- Subword tokens (illustrative; the exact split depends on the tokenizer's learned vocabulary): [“AI”, “model”, “s”, “analyze”, “text”, “effect”, “ive”, “ly”, “.”]

Notice how “models” is split into “model” and “s” in subword tokenization, enabling the model to generalize across related terms like “modeling” or “modeled.”
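Subword vocabularies such as BPE are learned from data rather than written by hand. The sketch below is a toy version of the classic BPE merge-learning loop:

1. Count adjacent symbol pairs across the corpus, weighted by word frequency.
2. Merge the most frequent pair.
3. Repeat.

The tiny corpus, the `</w>` end-of-word marker, the merge count, and the function names are illustrative assumptions. Production tokenizer libraries add byte-level handling and pre-tokenization rules, and they train on far larger corpora.

```python
from collections import Counter

END = "</w>"  # end-of-word marker so merges can distinguish word-final pieces

def merge_symbols(symbols: tuple, pair: tuple) -> tuple:
    """Replace each adjacent occurrence of `pair` in `symbols` with one merged symbol."""
    a, b = pair
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and symbols[i] == a and symbols[i + 1] == b:
            out.append(a + b)
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return tuple(out)

def learn_bpe(word_freqs: dict[str, int], num_merges: int) -> list[tuple[str, str]]:
    """Learn up to `num_merges` merge rules from a {word: frequency} corpus."""
    vocab = {tuple(w) + (END,): f for w, f in word_freqs.items()}
    merges = []
    for _ in range(num_merges):
        pair_counts = Counter()
        for symbols, freq in vocab.items():
            for pair in zip(symbols, symbols[1:]):
                pair_counts[pair] += freq
        if not pair_counts:
            break
        best = max(pair_counts, key=pair_counts.get)  # most frequent pair; ties go to the first seen
        merges.append(best)
        vocab = {merge_symbols(s, best): f for s, f in vocab.items()}
    return merges

def apply_bpe(word: str, merges: list[tuple[str, str]]) -> list[str]:
    """Segment a word, possibly unseen in training, by replaying the merges in learned order."""
    symbols = tuple(word) + (END,)
    for pair in merges:
        symbols = merge_symbols(symbols, pair)
    return list(symbols)

corpus = {"model": 5, "models": 3, "modeling": 2, "modeled": 2}
merges = learn_bpe(corpus, num_merges=4)
print(merges)                        # [('m', 'o'), ('mo', 'd'), ('mod', 'e'), ('mode', 'l')]
print(apply_bpe("models", merges))   # ['model', 's', '</w>']
print(apply_bpe("modeler", merges))  # ['model', 'e', 'r', '</w>']
```

After four merges, “model” is a single unit. The unseen word “modeler” is therefore segmented as “model” plus a few leftover characters instead of being treated as unknown.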

Exploring Chunking: Grouping Text for Contextual Integrity

Chunking, in contrast, aggregates text into larger, meaningful segments that maintain semantic coherence. This approach is crucial for applications such as chatbots and information retrieval systems, where preserving the flow of ideas is necessary.

Consider reading a novel: you wouldn’t want sentences scattered randomly; instead, you prefer paragraphs that group related thoughts. Chunking performs a similar function for AI.

Example (sentence-level chunking):

- Text: “AI models analyze text effectively. They depend on tokens to grasp meaning. Chunking enhances information retrieval.”

- Chunk 1: “AI models analyze text effectively.”

- Chunk 2: “They depend on tokens to grasp meaning.”

- Chunk 3: “Chunking enhances information retrieval.”
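Sentence-level chunking like this can be sketched with a single regular expression. The rule used here (break after `.`, `!`, or `?` when followed by whitespace) is an assumption for illustration. It will mis-split abbreviations such as “e.g.” and is no substitute for a proper sentence segmenter. The function name is hypothetical.

```python
import re

def sentence_chunks(text: str) -> list[str]:
    # Split wherever ., ! or ? is followed by whitespace (a deliberate simplification).
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]

text = ("AI models analyze text effectively. They depend on tokens to grasp meaning. "
        "Chunking enhances information retrieval.")
for i, chunk in enumerate(sentence_chunks(text), start=1):
    print(f"Chunk {i}: {chunk}")
```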

Common chunking methods include:

- Fixed-size chunking: Divides text into uniform segments (e.g., 500 words), though it may split ideas awkwardly.

- Semantic chunking: Detects natural topic shifts, typically by comparing embeddings of adjacent sentences, and splits there to create more meaningful divisions.

- Hierarchical chunking: Splits text progressively, from paragraphs to sentences to smaller units, until each piece is small enough.

- Overlapping chunking: Employs sliding windows to ensure context isn’t lost at segment boundaries.
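Two of these strategies are easy to sketch in Python:

- `fixed_size_chunks` implements fixed-size chunking with an optional overlap, which makes it a sliding window.
- `recursive_split` is a simplified take on hierarchical chunking. It tries paragraph breaks first, then sentence breaks, then spaces.

Both function names, the separator list, and the character-based size limit are illustrative assumptions. Semantic chunking is left out because it needs an embedding model.

```python
def fixed_size_chunks(tokens: list[str], size: int, overlap: int = 0) -> list[list[str]]:
    """Fixed-size chunking as a sliding window: each chunk holds up to `size` tokens
    and repeats the last `overlap` tokens of the previous chunk."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("require size > 0 and 0 <= overlap < size")
    step = size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):  # the window has reached the end of the text
            break
    return chunks

def recursive_split(text: str, max_chars: int,
                    separators: tuple[str, ...] = ("\n\n", ". ", " ")) -> list[str]:
    """Hierarchical splitting: try paragraph breaks, then sentence breaks, then spaces,
    recursing only into pieces that are still longer than `max_chars`."""
    if len(text) <= max_chars:
        return [text] if text else []
    if not separators:
        # Nothing left to split on: hard-cut into max_chars slices.
        return [text[i:i + max_chars] for i in range(0, len(text), max_chars)]
    sep, finer = separators[0], separators[1:]
    parts = text.split(sep)
    keep = sep.strip()  # re-attach the visible part of the separator, e.g. the "." of ". "
    parts = [p + keep for p in parts[:-1]] + [parts[-1]]
    pieces = []
    for part in parts:
        pieces.extend(recursive_split(part, max_chars, finer))
    return pieces

print(fixed_size_chunks(list("abcdefghij"), size=4, overlap=1))
doc = "First paragraph is short.\n\nSecond paragraph has two sentences. It is longer."
print(recursive_split(doc, max_chars=40))
```

Production splitters usually also merge small neighbouring pieces back up toward the size limit. This sketch skips that step to keep the recursion visible.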

Contrasting Tokenization and Chunking: What Sets Them Apart?

| Aspect | Tokenization | Chunking |
|---|---|---|
| Granularity | Smallest units (words, subwords, characters) | Larger segments (sentences, paragraphs) |
| Purpose | Convert text into AI-readable units | Preserve semantic context and coherence |
| Typical Use Cases | Model training, input preprocessing | Search engines, conversational AI, document analysis |
| Optimization Focus | Efficiency, vocabulary coverage | Context retention, retrieval precision |

Practical Implications for AI Systems

Impact on Model Efficiency and Cost

Tokenization directly influences computational cost and speed. API access to models such as GPT-4 is billed per token, so representing the same text in fewer tokens lowers cost. Context-window limits, the maximum number of tokens a model can process in one request, also vary across models:

- GPT-4: 128,000 tokens for GPT-4 Turbo and GPT-4o (the original GPT-4 offered 8,000 or 32,000)

- Claude 3.5: Supports up to 200,000 tokens

- Gemini 2.0 Pro: Handles up to 2 million tokens
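The budget arithmetic behind these limits is simple to sketch. The snippet below does two things:

- It checks whether a prompt plus a reserved reply length fits a context window.
- It estimates cost under per-million-token pricing.

The function names are hypothetical. The dictionary keys are just the labels used in the list above, not API model identifiers. The $10/$30 per million token prices are placeholders, not any provider's published rates.

```python
# Context-window sizes as listed above; provider limits change, so check current docs.
CONTEXT_LIMITS = {
    "GPT-4": 128_000,  # 128k-context GPT-4 variants
    "Claude 3.5": 200_000,
    "Gemini 2.0 Pro": 2_000_000,
}

def fits_in_context(prompt_tokens: int, max_output_tokens: int, model: str) -> bool:
    """Conservative check: many APIs count the prompt and the reply against one window."""
    return prompt_tokens + max_output_tokens <= CONTEXT_LIMITS[model]

def estimate_cost(prompt_tokens: int, output_tokens: int,
                  usd_per_m_input: float, usd_per_m_output: float) -> float:
    """Token-based billing: tokens are priced per million, input and output often at different rates."""
    return (prompt_tokens * usd_per_m_input + output_tokens * usd_per_m_output) / 1_000_000

print(fits_in_context(150_000, 4_000, "GPT-4"))       # False
print(fits_in_context(150_000, 4_000, "Claude 3.5"))  # True
# Hypothetical prices for illustration only.
print(estimate_cost(100_000, 2_000, usd_per_m_input=10.0, usd_per_m_output=30.0))  # 1.06
```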

Vocabulary size also matters. Research on vocabulary scaling suggests that larger models benefit from larger vocabularies. LLaMA-2 70B uses a 32,000-token vocabulary, whereas one scaling-law study estimates its compute-optimal vocabulary at roughly 216,000 tokens when balancing accuracy against efficiency.

Enhancing Retrieval and QA Systems with Chunking

Chunking strategy is pivotal in Retrieval-Augmented Generation (RAG) systems, which retrieve the most relevant chunks and pass them to the model as context. Chunks that are too small lose context, while chunks that are too large dilute the relevant passage with irrelevant text. Well-tuned chunking improves answer accuracy and reduces hallucinations (erroneous or fabricated responses).

Enterprises leveraging AI for knowledge management report significant improvements in response quality by adopting intelligent chunking methods.
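The retrieval step of a RAG pipeline can be sketched as: score every chunk against the query, then keep the top k for the prompt. This sketch assumes a bag-of-words cosine similarity as a stand-in for a real embedding model. The function names and the sample chunks are hypothetical.

```python
import math
import re
from collections import Counter

def bow(text: str) -> Counter:
    # Lowercased word counts: a crude stand-in for an embedding vector.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)  # missing keys in a Counter count as 0
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query; these would be added to the LLM prompt."""
    q = bow(query)
    return sorted(chunks, key=lambda c: cosine(q, bow(c)), reverse=True)[:k]

chunks = [
    "Refunds are issued within 14 days of a return request.",
    "Our office is closed on public holidays.",
    "Return requests must include the original receipt.",
]
query = "How long do refunds take after a return request?"
print(retrieve(query, chunks, k=1))
```

Merging the refund and office-hours sentences into one larger chunk lowers that chunk's score for this query. The unrelated words enlarge its vector without adding matches, which is one concrete way oversized chunks hurt retrieval.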

When to Apply Tokenization vs. Chunking

Tokenization is Crucial For:

- Training language models: Tokenization shapes how models interpret and learn from data.

- Fine-tuning specialized models: Domains like healthcare or law require tokenizers that accommodate unique terminology.

- Multilingual applications: Subword tokenization effectively manages complex word formations across languages.

Chunking is Vital For:

- Corporate knowledge bases: Ensures employees receive precise answers from internal documents.

- Large-scale document processing: Maintains structural integrity when analyzing contracts, research, or feedback.

- Advanced search engines: Semantic chunking improves understanding of user intent beyond keyword matching.

Effective Strategies for Implementation

Chunking Best Practices:

- Begin with chunks sized between 512 and 1024 tokens for general use.

- Incorporate 10-20% overlap between chunks to maintain context continuity.

- Prefer semantic boundaries such as sentence or paragraph ends.

- Continuously test and refine chunk sizes based on real-world application feedback.

- Monitor for hallucinations and adjust chunking methods accordingly.
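Several of these practices can be combined in one small chunker: a size target in tokens, an overlap of roughly 10-20%, and sentence boundaries. The sketch below rests on a few assumptions:

- Whitespace-separated words approximate tokens.
- The defaults of 512 tokens and 15% overlap are simply taken from the ranges listed above.
- The function names are hypothetical.

In practice you would count tokens with the target model's own tokenizer.

```python
import re

def approx_tokens(text: str) -> int:
    # Assumption: one whitespace-separated word ~ one token. Swap in the model's tokenizer.
    return len(text.split())

def pack_sentences(text: str, max_tokens: int = 512, overlap_ratio: float = 0.15) -> list[str]:
    """Greedily pack whole sentences into chunks of about `max_tokens`, starting each new
    chunk with trailing sentences from the previous one (up to overlap_ratio * max_tokens)."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    overlap_budget = int(max_tokens * overlap_ratio)
    chunks: list[str] = []
    current: list[str] = []
    for sent in sentences:
        if current and approx_tokens(" ".join(current + [sent])) > max_tokens:
            chunks.append(" ".join(current))
            # Carry over trailing sentences that fit the overlap budget, skipping the
            # first sentence so each new chunk drops at least one sentence.
            tail: list[str] = []
            for prev in reversed(current[1:]):
                if approx_tokens(" ".join([prev] + tail)) > overlap_budget:
                    break
                tail.insert(0, prev)
            current = tail
        current.append(sent)
    if current:
        chunks.append(" ".join(current))
    return chunks

text = "One two three. Four five six. Seven eight nine. Ten eleven twelve."
for chunk in pack_sentences(text, max_tokens=7, overlap_ratio=0.5):
    print(chunk)
```

Because whole sentences are never split, a chunk can slightly exceed the target once overlap is added. A single sentence longer than `max_tokens` also becomes its own oversized chunk. Testing on real documents, as recommended above, shows whether that matters.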

Tokenization Recommendations:

- Adopt proven tokenization methods such as BPE, WordPiece, or SentencePiece instead of custom solutions.

- Tailor tokenization to domain-specific vocabularies, especially in specialized fields.

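- Track unknown-token rates and the average number of tokens per word during deployment to identify vocabulary gaps. Subword tokenizers rarely produce true out-of-vocabulary tokens, so these are the more useful signals.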

- Balance token compression with semantic fidelity to optimize model input.
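Monitoring vocabulary gaps comes down to two numbers: the unknown-token rate and the average tokens per word. The sketch below computes both. It assumes a WordPiece-style greedy longest-match-first tokenizer, in which non-initial pieces carry a `##` prefix and an unsegmentable word becomes a single unknown token. The tiny vocabulary, the `[UNK]` symbol, and the function names are illustrative, not any library's API.

```python
UNK = "[UNK]"

def greedy_subword(word: str, vocab: set[str]) -> list[str]:
    """WordPiece-style greedy longest-match-first; non-initial pieces carry a '##' prefix."""
    pieces: list[str] = []
    start = 0
    while start < len(word):
        end, match = len(word), None
        while end > start:  # try the longest remaining substring first
            piece = word[start:end] if start == 0 else "##" + word[start:end]
            if piece in vocab:
                match = piece
                break
            end -= 1
        if match is None:
            return [UNK]  # the whole word maps to one unknown token
        pieces.append(match)
        start = end
    return pieces

def tokenizer_stats(words: list[str], vocab: set[str]) -> dict[str, float]:
    """Two deployment signals: average tokens per word and share of unknown tokens."""
    tokens = [t for w in words for t in greedy_subword(w, vocab)]
    return {
        "tokens_per_word": len(tokens) / len(words),
        "unk_rate": tokens.count(UNK) / len(tokens),
    }

vocab = {"model", "##s", "analy", "##ze", "text"}
sample = ["models", "analyze", "text", "pharmacokinetics"]
print([greedy_subword(w, vocab) for w in sample])
print(tokenizer_stats(sample, vocab))
```

Watch for a rising unknown rate or tokens-per-word ratio on domain text, such as the medical term in this sample. Either one suggests the vocabulary may need domain-specific tokens.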

Conclusion: Harmonizing Tokenization and Chunking for AI Success

Tokenization and chunking are complementary techniques essential for effective AI language processing. Tokenization transforms raw text into manageable units for models, while chunking preserves the semantic integrity necessary for meaningful interactions and retrieval.

As AI technology advances, tokenization and chunking methods continue to evolve, with expanding context windows, refined vocabularies, and better semantic segmentation. The key to success lies in aligning these techniques with your specific goals: prioritize chunking for conversational AI, optimize tokenization for model training, and combine both for enterprise search solutions.