Advancing AI with Unified Multimodal Models

Unified multimodal models (UMMs) aim to handle both visual understanding and visual generation within a single model, so that the same network that can interpret an image can also create one. They seek to combine the reasoning abilities of language models with the ability to process and generate images, bridging textual and visual understanding.

Challenges in Training Multimodal AI

Despite their promise, UMMs face a significant hurdle rooted in their training data. Most current methods depend heavily on paired image-text datasets, in which captions serve as the primary supervisory signal. These captions are usually brief and carry little of the visual content of the image. Even lengthy descriptions often omit key aspects such as spatial relationships, geometry, surface texture, and subtle visual details.

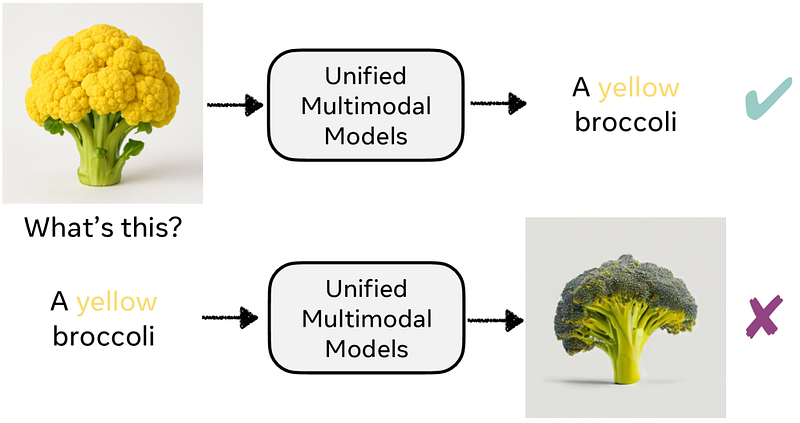

This limitation produces a persistent bias in model behavior. For example, because broccoli is almost always green, captions rarely state its color, and the model learns to treat "broccoli" as implying green. When prompted to generate "yellow broccoli," it struggles, even though it can recognize yellow broccoli when shown an image of it. This discrepancy exposes a misalignment between the model's understanding and its generation.
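The bias can be reproduced with a deliberately tiny counting model. This is a sketch under stated assumptions, not how a UMM is built: each image is reduced to a single hypothetical hue value, and the "generator" (the hypothetical `fit_caption_model` and `generate_hue`) simply predicts the average hue that accompanied each caption word during training.

```python
from collections import defaultdict

# Hypothetical toy data: each image is reduced to one hue value on a 0-1 color
# wheel (about 0.33 for green, 0.17 for yellow). As with most real captions,
# the text never mentions the color, even for the rare yellow examples.
training_pairs = [("broccoli", 0.33)] * 95 + [("broccoli", 0.17)] * 5


def fit_caption_model(pairs):
    """Caption-only 'generator': learns the mean hue seen alongside each caption word."""
    sums, counts = defaultdict(float), defaultdict(int)
    for caption, hue in pairs:
        for word in caption.split():
            sums[word] += hue
            counts[word] += 1
    return {word: sums[word] / counts[word] for word in sums}


def generate_hue(model, prompt):
    """Average the hues of the prompt words seen in training; unseen words are ignored."""
    known = [model[w] for w in prompt.split() if w in model]
    return sum(known) / len(known) if known else None


model = fit_caption_model(training_pairs)
print(round(generate_hue(model, "broccoli"), 3))         # 0.322, pulled toward green
print(round(generate_hue(model, "yellow broccoli"), 3))  # 0.322, "yellow" was never supervised
```

Because the captions never supervise the word "yellow," the prompt "yellow broccoli" lands on the same mostly-green hue as plain "broccoli"; the rare yellow examples only nudge the average slightly.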

Reconstruction Alignment: Enhancing Visual Generation

To overcome these constraints, researchers have developed Reconstruction Alignment (RecA), a cost-effective post-training method. It addresses the sparse-supervision problem by using dense visual embeddings as the training signal instead of relying solely on textual captions.

RecA builds on visual understanding encoders such as CLIP and SigLIP, which map pixels into a semantic embedding space aligned with language. Compared with caption-based supervision, these embeddings retain much more of an image's visual content, including details that a short caption typically leaves out.
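CLIP-style encoders place images and text in a shared space and score a pair by the cosine similarity of their embeddings (SigLIP also compares normalized embeddings, but trains with a sigmoid loss). The toy below uses hand-picked, hypothetical 3-d vectors to illustrate the property that matters here: the image embedding of yellow broccoli carries the color that a bare "broccoli" caption omits. Real embeddings are learned and have hundreds of dimensions.

```python
import math


def cosine(u, v):
    """Cosine similarity: the dot product of the two vectors after L2 normalization."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


# Hypothetical embeddings; the axes loosely read as (broccoli-ness, green, yellow).
image_embedding = [0.9, 0.1, 0.8]  # a photo of yellow broccoli
text_embeddings = {
    "a photo of broccoli": [1.0, 0.0, 0.0],
    "a photo of green broccoli": [0.8, 0.6, 0.0],
    "a photo of yellow broccoli": [0.8, 0.0, 0.6],
}

# Score every text against the image and keep the best match.
scores = {text: cosine(image_embedding, emb) for text, emb in text_embeddings.items()}
best = max(scores, key=scores.get)
print(best)  # a photo of yellow broccoli
```

The bare caption still scores reasonably well, but the embedding itself distinguishes the yellow variant, which is the extra information a dense signal can pass on to a generator.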

Leveraging Dense Semantic Supervision

The key advantage of RecA lies in its use of semantic embeddings from visual understanding models, which capture structural and contextual information better than the low-level latents of generation-focused encoders. RecA feeds an image's embedding to the model as if it were a highly informative "text prompt" and trains the model to reconstruct that same image. Because the target is the image itself, the generator receives a much denser and more semantically meaningful supervisory signal than a caption can provide.

This strategy raises a central question: does integrating dense semantic embeddings into training measurably improve a model's ability to generate accurate, detailed images? Early results suggest that it does, narrowing the gap between recognition and generation so that models produce images that better reflect complex and uncommon concepts.
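A reconstruction objective of the kind RecA's name refers to can be sketched in a few lines. This is a minimal sketch under strong assumptions: a linear generator and a squared-error loss stand in for a real UMM and its generation loss, a fixed matrix stands in for a frozen understanding encoder, and all names (`ENCODER`, `IMAGES`, `train_generator`, `reconstruction_loss`) are hypothetical. What it preserves is the structure: the only conditioning input is the image's own embedding, and the training target is the image itself.

```python
# Hypothetical frozen "understanding encoder": a fixed linear map from a toy
# image (its mean R, G, B values) to a 3-d semantic embedding. In a real UMM
# this would be a pretrained visual encoder such as CLIP or SigLIP.
ENCODER = [[0.5, 0.3, 0.2],
           [0.1, 0.7, 0.2],
           [0.2, 0.1, 0.7]]

# Toy unlabeled images: no captions are needed for this objective.
IMAGES = {
    "green broccoli": [0.2, 0.6, 0.2],
    "yellow broccoli": [0.8, 0.8, 0.1],
    "purple cauliflower": [0.5, 0.2, 0.6],
}


def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def encode(image):
    """Frozen encoder: never updated during training."""
    return matvec(ENCODER, image)


def reconstruction_loss(w, images):
    """Mean squared error between each image and its reconstruction from its own embedding."""
    total = 0.0
    for x in images:
        x_hat = matvec(w, encode(x))
        total += sum((a - b) ** 2 for a, b in zip(x_hat, x))
    return total / len(images)


def train_generator(images, steps=3000, lr=1.0):
    """Full-batch gradient descent on the reconstruction loss for a linear generator w."""
    w = [[0.0] * 3 for _ in range(3)]
    for _ in range(steps):
        grad = [[0.0] * 3 for _ in range(3)]
        for x in images:
            e = encode(x)  # dense conditioning signal, used in place of a caption
            x_hat = matvec(w, e)
            for i in range(3):
                err = x_hat[i] - x[i]
                for j in range(3):
                    # d/dw[i][j] of (x_hat[i] - x[i])^2, averaged over images
                    grad[i][j] += 2 * err * e[j] / len(images)
        for i in range(3):
            for j in range(3):
                w[i][j] -= lr * grad[i][j]
    return w


data = list(IMAGES.values())
untrained = [[0.0] * 3 for _ in range(3)]
trained = train_generator(data)
print(round(reconstruction_loss(untrained, data), 4))  # 0.7933, the mean squared norm of the images
print(reconstruction_loss(trained, data))              # close to zero after training
print([round(v, 2) for v in matvec(trained, encode(IMAGES["yellow broccoli"]))])  # [0.8, 0.8, 0.1]
```

No captions appear anywhere in the loop: every image supervises itself through its own embedding, so even the uncommon yellow variant is reconstructed faithfully.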

Implications and Future Directions

Dense semantic supervision techniques like RecA could substantially change how multimodal models learn to generate content. Because a reconstruction signal needs only images rather than curated captions, it can draw on large-scale visual datasets, and as embedding models grow more capable, future UMMs may become more faithful and versatile in both understanding and creating images.

Dense embedding-based training has been reported to improve the diversity and contextual accuracy of generated images across domains ranging from medical imaging to creative design. This progress underscores the potential of combining semantically rich visual embeddings with generative architectures.