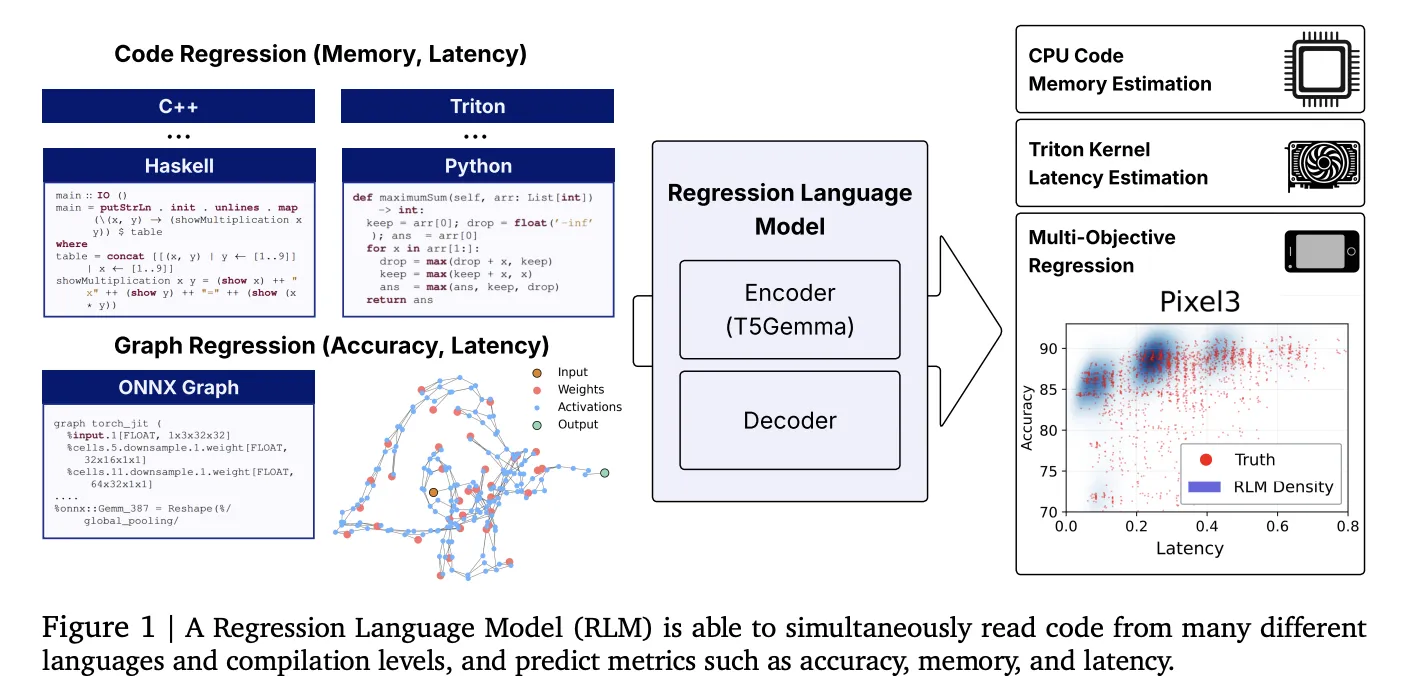

Researchers at Cornell and Google have introduced a unified Regression Language Model (RLM) that predicts numerical metrics directly from code strings, ranging from GPU kernel execution times and program memory usage to neural network accuracy and latency, without hand-engineered features. The model is a 300-million-parameter encoder-decoder initialized from T5-Gemma. It achieves strong rank correlations across diverse programming languages and tasks using a single text-to-number decoder that emits digits under constrained decoding.

Introducing a Groundbreaking Approach to Code-Based Metric Prediction

- All-in-One Code-to-Metric Regression: This single RLM framework predicts (i) peak memory usage from high-level languages such as Python, C, and C++, (ii) latency for Triton GPU kernels, and (iii) accuracy alongside hardware-specific latency from ONNX model graphs. It operates solely on raw textual inputs, eliminating the need for feature engineering, graph neural networks, or proxy metrics.

- Robust Empirical Evidence: The model achieves a Spearman correlation of approximately 0.93 on APPS LeetCode memory, about 0.52 for Triton kernel latency, an average Spearman correlation above 0.5 across 17 programming languages in CodeNet, and a Kendall tau near 0.46 across five classic Neural Architecture Search (NAS) spaces. These results are competitive with, and in some cases better than, graph-based predictors.

- Sequential Multi-Objective Decoding: Utilizing an autoregressive decoder, the model predicts metrics in sequence, conditioning later outputs (e.g., device-specific latencies) on earlier ones (e.g., accuracy), effectively modeling realistic trade-offs along Pareto frontiers.
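
To make sequential decoding concrete, here is a minimal Python sketch of how several metrics could be packed into one target sequence so that each token is predicted from everything before it. The metric-tag tokens (`<acc>`, `<lat_gpu>`), the `<eos>` token, and the two-decimal character-level digit format are illustrative assumptions, not the RLM's actual vocabulary.

```python
from typing import List, Tuple


def serialize_metrics(metrics: List[Tuple[str, float]]) -> List[str]:
    """Pack several metrics into ONE target sequence, in a fixed order.

    Toy format: a hypothetical tag token per metric, then the value written
    with two decimals and split into character tokens, then a final <eos>.
    """
    tokens: List[str] = []
    for name, value in metrics:
        tokens.append(f"<{name}>")            # hypothetical metric-tag token
        tokens.extend(list(f"{value:.2f}"))   # e.g. 0.73 -> ['0', '.', '7', '3']
    tokens.append("<eos>")
    return tokens


def decoding_steps(tokens: List[str]) -> List[Tuple[List[str], str]]:
    """Return (prefix, next_token) pairs: each token is predicted from all earlier ones."""
    return [(tokens[:k], tokens[k]) for k in range(len(tokens))]


if __name__ == "__main__":
    seq = serialize_metrics([("acc", 0.73), ("lat_gpu", 12.5)])
    print(seq)
    # The first latency digit is predicted with the full accuracy value in its prefix.
    k = seq.index("<lat_gpu>") + 1
    prefix, target = decoding_steps(seq)[k]
    print(prefix, "->", target)
```

Because accuracy is emitted first, the decoder's distribution over latency digits can shift depending on which accuracy it has just produced, which is how a single model can represent correlated objectives.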

Why This Advancement Matters in Performance Modeling

Traditional performance prediction pipelines in compiler optimization, GPU kernel selection, and NAS often depend on fragile, hand-crafted features, abstract syntax trees, or graph neural networks that struggle to generalize across new operations or programming languages. By reframing regression as a next-token prediction task over numeric sequences, this approach standardizes the workflow: source code, Triton intermediate representation, or ONNX graphs are tokenized as plain text, and numeric outputs are generated digit-by-digit with constrained decoding to ensure validity. This paradigm shift reduces maintenance overhead and enhances adaptability to novel tasks through fine-tuning.
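
A minimal sketch of grammar-constrained greedy decoding follows. It assumes a toy numeric layout of one sign token, one exponent token, and three mantissa digits; the exponent range, token spellings, and `MANTISSA_LEN` are hypothetical. At every step, tokens the grammar forbids are masked out before taking the argmax, so the output is always a well-formed number even when the model prefers an invalid token.

```python
import math
from typing import Dict, List

# Toy numeric grammar (all assumptions): <sign> <exponent> <d1> <d2> <d3>
SIGNS = ["<+>", "<->"]
EXPONENTS = [f"<E{e}>" for e in range(-4, 5)]   # hypothetical exponent range
DIGITS = [f"<{d}>" for d in range(10)]
MANTISSA_LEN = 3                                 # hypothetical mantissa length


def allowed_tokens(step: int) -> List[str]:
    """Tokens the grammar permits at a given decoding step."""
    if step == 0:
        return SIGNS
    if step == 1:
        return EXPONENTS
    return DIGITS


def constrained_greedy(step_scores: List[Dict[str, float]]) -> List[str]:
    """Greedy decoding where disallowed tokens are effectively masked to -inf.

    step_scores[k] maps token -> model logit at step k. Tokens absent from the
    dict score -inf, and any token the grammar forbids can never be selected.
    """
    out: List[str] = []
    for step in range(2 + MANTISSA_LEN):
        scores = step_scores[step]
        # Masking = restricting the argmax to the allowed set.
        best = max(allowed_tokens(step), key=lambda t: scores.get(t, -math.inf))
        out.append(best)
    return out


if __name__ == "__main__":
    logits = [
        {"def": 9.0, "<+>": 1.0, "<->": 0.5},   # model "wants" a code token here
        {"<E-1>": 2.0, "<7>": 5.0},             # a digit is invalid at the exponent slot
        {"<7>": 3.0, "<eos>": 4.0},             # stopping early is invalid
        {"<3>": 1.0},
        {"<4>": 0.2, "<9>": 0.1},
    ]
    print(constrained_greedy(logits))  # ['<+>', '<E-1>', '<7>', '<3>', '<4>']
```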

Datasets and Benchmark Suites Supporting RLM

- Code-Regression Dataset: A comprehensive collection designed to facilitate code-to-metric prediction tasks, encompassing APPS/LeetCode runtime memory data, Triton kernel latency measurements derived from KernelBook, and memory footprint statistics from CodeNet.

- NAS and ONNX Benchmark Suite: Includes architectures from NASBench-101/201, FBNet, Once-for-All (MobileNet, ProxylessNAS, ResNet variants), Twopath, Hiaml, Inception, and NDS, all converted into ONNX textual format to predict both accuracy and device-specific latency metrics.

Technical Overview: How the Model Operates

- Model Architecture: The core is an encoder-decoder network initialized from T5-Gemma (~300 million parameters). It accepts raw text, whether source code or ONNX representations, and emits each number as a sequence of sign, exponent, and mantissa digit tokens. Constrained decoding guarantees that only valid numeric sequences are generated, and drawing multiple samples provides uncertainty estimates.

- Key Ablation Findings: (i) Pretraining on language data accelerates convergence and enhances Triton latency predictions; (ii) autoregressive numeric token emission surpasses traditional mean squared error regression heads, even with output normalization; (iii) specialized tokenizers tailored for ONNX operators extend effective context length; (iv) longer input contexts improve performance; (v) scaling the Gemma encoder size further boosts correlation metrics when properly tuned.

- Open-Source Training Framework: The regress-lm library offers utilities for text-to-text regression, constrained decoding, and multi-task pretraining and fine-tuning workflows.
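
The sign/exponent/mantissa scheme can be illustrated with a toy round-trip tokenizer. This sketch assumes four mantissa digits, a sign-then-exponent-then-digits order, and token spellings such as `<E-1>`; the real tokenizer in regress-lm may use different precision, ordering, and vocabulary.

```python
from typing import List

MANTISSA_DIGITS = 4  # assumed precision; the real RLM setting may differ


def encode(x: float, n: int = MANTISSA_DIGITS) -> List[str]:
    """Toy tokenizer for finite x: x ~= sign * d1.d2...dn * 10**exp -> [sign, exp, d1..dn]."""
    if x == 0:
        return ["<+>", "<E0>"] + ["<0>"] * n
    sign = "<->" if x < 0 else "<+>"
    # Python's scientific formatting does the rounding: 1536.0 -> '1.536e+03'
    mantissa, exp = f"{abs(x):.{n - 1}e}".split("e")
    digits = [f"<{d}>" for d in mantissa.replace(".", "")]
    return [sign, f"<E{int(exp)}>"] + digits


def decode(tokens: List[str]) -> float:
    """Inverse of encode (up to the rounding applied during encoding)."""
    sign = -1.0 if tokens[0] == "<->" else 1.0
    exp = int(tokens[1][2:-1])                      # "<E-1>" -> -1
    digits = "".join(t[1:-1] for t in tokens[2:])   # ["<1>", "<5>"] -> "15"
    mantissa = float(digits[0] + "." + digits[1:])  # "1536" -> 1.536
    return sign * mantissa * 10.0 ** exp


if __name__ == "__main__":
    toks = encode(1536.0)
    print(toks)  # ['<+>', '<E3>', '<1>', '<5>', '<3>', '<6>']
    print(decode(toks))
```

Splitting numbers this way keeps the vocabulary tiny and lets the same decoder emit values spanning many orders of magnitude, from sub-millisecond latencies to megabytes of memory.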

Performance Highlights Across Diverse Tasks

- APPS (Python) Memory Prediction: Achieves Spearman correlation exceeding 0.9.

- CodeNet Memory Usage (17 Languages): Average Spearman correlation above 0.5, with top-performing languages like C and C++ reaching approximately 0.74-0.75.

- Triton Kernel Latency on NVIDIA RTX A6000: Spearman correlation around 0.52.

- Neural Architecture Search Ranking: Average Kendall tau near 0.46 across NASNet, AmoebaNet, PNAS, ENAS, and DARTS benchmarks, rivaling FLAN and graph neural network baselines.
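
For readers unfamiliar with the two rank metrics quoted above, the sketch below computes Spearman's rho (Pearson correlation of average ranks) and Kendall's tau-b (concordant minus discordant pairs, normalized for ties) in plain Python. It is written for clarity rather than speed (the Kendall loop is O(n²)) and is undefined for constant inputs; in practice `scipy.stats.spearmanr` and `scipy.stats.kendalltau` do the same job.

```python
from itertools import combinations
from math import sqrt
from typing import List, Sequence


def average_ranks(xs: Sequence[float]) -> List[float]:
    """1-based ranks; tied values share the mean of the ranks they occupy."""
    order = sorted(range(len(xs)), key=lambda i: xs[i])
    ranks = [0.0] * len(xs)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and xs[order[j + 1]] == xs[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def pearson(a: Sequence[float], b: Sequence[float]) -> float:
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    var_a = sum((x - ma) ** 2 for x in a)
    var_b = sum((y - mb) ** 2 for y in b)
    return cov / sqrt(var_a * var_b)


def spearman_rho(pred: Sequence[float], true: Sequence[float]) -> float:
    """Spearman's rho = Pearson correlation between the two rank vectors."""
    return pearson(average_ranks(pred), average_ranks(true))


def kendall_tau_b(pred: Sequence[float], true: Sequence[float]) -> float:
    """Kendall's tau-b: (C - D) / sqrt((C + D + T_pred) * (C + D + T_true))."""
    c = d = t_pred = t_true = 0
    for i, j in combinations(range(len(pred)), 2):
        dp, dt = pred[i] - pred[j], true[i] - true[j]
        if dp == 0 and dt == 0:
            continue                 # tied in both lists: ignored
        if dp == 0:
            t_pred += 1              # tied only in predictions
        elif dt == 0:
            t_true += 1              # tied only in ground truth
        elif (dp > 0) == (dt > 0):
            c += 1                   # concordant pair
        else:
            d += 1                   # discordant pair
    return (c - d) / sqrt((c + d + t_pred) * (c + d + t_true))


if __name__ == "__main__":
    pred = [1.0, 2.0, 3.0, 4.0]
    true = [1.0, 3.0, 2.0, 4.0]   # one adjacent pair swapped
    print(spearman_rho(pred, true), kendall_tau_b(pred, true))
```

For example, predictions [1, 2, 3, 4] against ground truth [1, 3, 2, 4] (one adjacent swap) give rho = 0.8 and tau = 2/3.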

Summary of Key Insights

- A unified regression model effectively predicts multiple performance metrics-including memory consumption, GPU kernel latency, and model accuracy-from raw code and ONNX text without manual feature design.

- Strong rank correlations (Spearman and Kendall tau) across a wide range of programming languages and hardware configurations demonstrate the model's practical utility.

- Digit-level constrained decoding enables multi-metric autoregressive outputs and uncertainty estimation, replacing conventional regression heads.

- The availability of the Code-Regression dataset and regress-lm training toolkit facilitates reproducibility and adaptation to emerging hardware and programming environments.
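
Uncertainty estimation by sampling amounts to decoding the same input several times with sampling enabled and summarizing the resulting numbers. In the sketch below, `fake_model_sample` is a hypothetical stand-in (a Gaussian draw) for one sampled decode; it is not part of regress-lm or any real API.

```python
import random
import statistics
from typing import Dict, List


def summarize_samples(samples: List[float]) -> Dict[str, float]:
    """Point estimate and spread from repeated sampled decodes of ONE input."""
    deciles = statistics.quantiles(samples, n=10)  # 9 cut points: p10 ... p90
    return {
        "median": statistics.median(samples),
        "p10": deciles[0],
        "p90": deciles[-1],
        "stdev": statistics.stdev(samples),
    }


def fake_model_sample(rng: random.Random) -> float:
    """HYPOTHETICAL stand-in for one sampled RLM decode (not a real API).

    A real pipeline would sample digit tokens under the numeric grammar and
    convert them back to a float; here we just draw from a Gaussian.
    """
    return rng.gauss(12.0, 1.5)  # pretend latency in milliseconds


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed for reproducibility
    samples = [fake_model_sample(rng) for _ in range(128)]
    print(summarize_samples(samples))
```

The median is a reasonable point estimate because it is robust to an occasional outlier sample, and the gap between `p10` and `p90` serves as a rough uncertainty band.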

Final Thoughts

This work reframes performance prediction as text-to-number generation: a compact T5-Gemma-initialized RLM reads source code, Triton kernels, or ONNX graphs and produces calibrated numeric estimates through constrained decoding. Its reported correlations (Spearman above 0.9 for APPS memory, about 0.52 for Triton latency on RTX A6000 GPUs, and Kendall tau near 0.46 for NAS rankings) suggest it could streamline compiler heuristics, kernel pruning, and multi-objective NAS without fragile hand-engineered features or graph neural networks. The open-source dataset and training library lower the barrier for researchers and practitioners who want to fine-tune the model for new languages or hardware platforms.