Building a Context-Folding LLM Agent for Efficient Complex Task Management

This guide walks through building a Context-Folding Large Language Model (LLM) agent that handles long, multi-part tasks within a limited context window. The agent decomposes a large problem into smaller subtasks, applies reasoning or calculation to each as needed, and then folds each completed subtask into a brief summary. Only the summaries are carried forward, which preserves the key results while keeping working memory small.

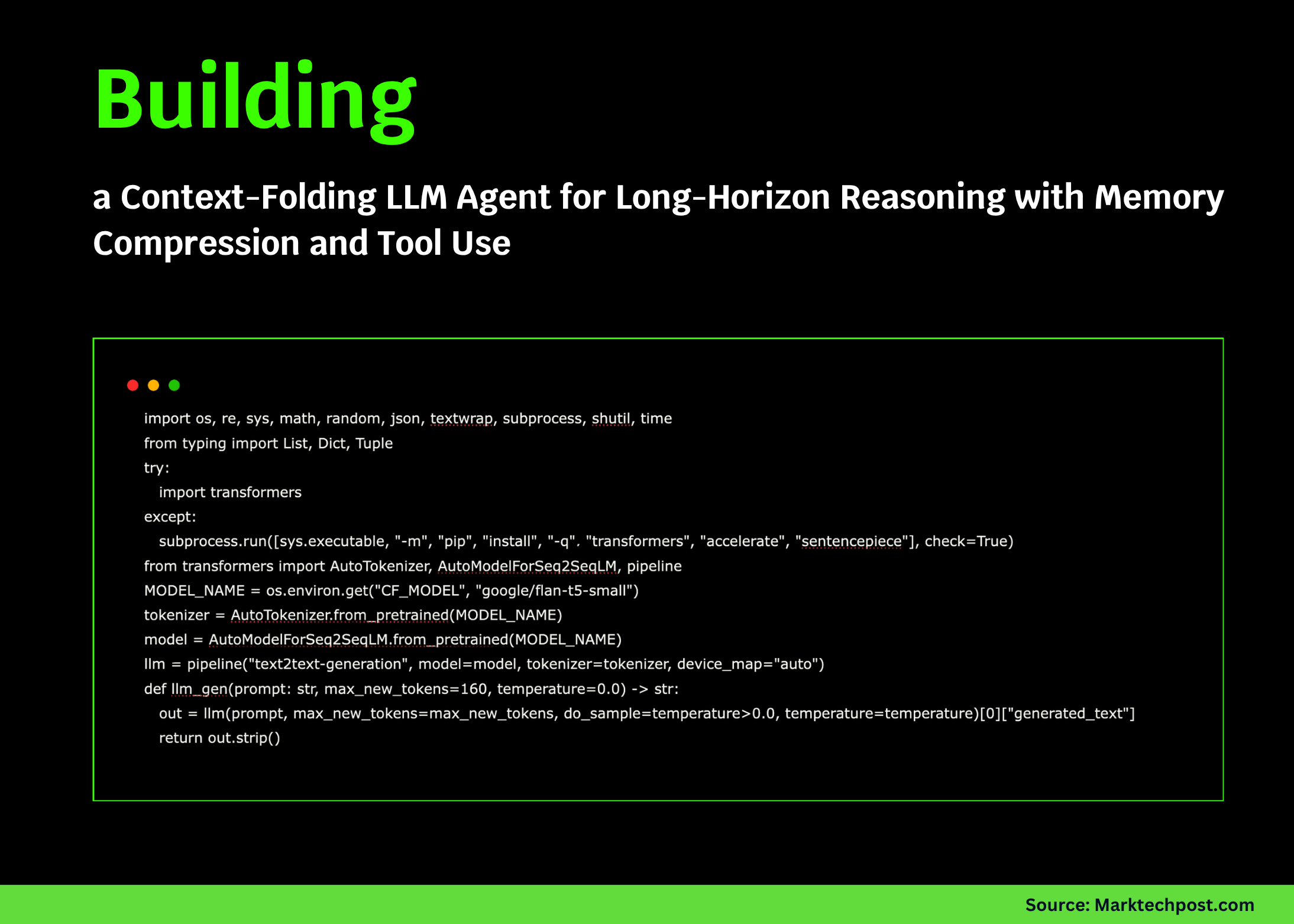

Setting Up the Environment with a Lightweight Hugging Face Model

We start by configuring the environment and loading a compact Hugging Face model locally. The setup runs on platforms like Google Colab without calling external APIs; once the model weights have been downloaded, text generation happens entirely on the local machine.

import os, sys, subprocess

# Install dependencies if missing (this must run before importing from transformers)
try:
    import transformers
except ImportError:
    subprocess.run([sys.executable, "-m", "pip", "install", "-q", "transformers", "accelerate", "sentencepiece"], check=True)

from transformers import AutoTokenizer, AutoModelForSeq2SeqLM, pipeline

MODEL_NAME = os.environ.get("CF_MODEL", "google/flan-t5-small")

tokenizer = AutoTokenizer.from_pretrained(MODEL_NAME)
model = AutoModelForSeq2SeqLM.from_pretrained(MODEL_NAME)
llm = pipeline("text2text-generation", model=model, tokenizer=tokenizer, device_map="auto")

def generate_text(prompt: str, max_tokens=160, temperature=0.0) -> str:
    # Decode greedily by default; pass a temperature only when sampling is enabled
    gen_kwargs = {"max_new_tokens": max_tokens, "do_sample": temperature > 0.0}
    if temperature > 0.0:
        gen_kwargs["temperature"] = temperature
    result = llm(prompt, **gen_kwargs)[0]["generated_text"]
    return result.strip()

Implementing a Basic Calculator and Dynamic Folding Memory

To support arithmetic within subtasks, we define a safe calculator that walks Python’s abstract syntax tree and evaluates only numeric literals and a whitelist of arithmetic operators. Alongside it, we create a folding memory: when the active context exceeds a character budget, the oldest entries are truncated to 120 characters and moved into a list of folds, so the active memory stays bounded while a short trace of earlier context is kept.

import ast
import operator as op

# Supported arithmetic operations
OPS = {
    ast.Add: op.add, ast.Sub: op.sub, ast.Mult: op.mul, ast.Div: op.truediv,
    ast.Pow: op.pow, ast.USub: op.neg, ast.FloorDiv: op.floordiv, ast.Mod: op.mod
}

def evaluate_expression(node):
    # Accept only numeric literals (ast.Constant replaces the deprecated ast.Num)
    if isinstance(node, ast.Constant) and type(node.value) in (int, float):
        return node.value
    elif isinstance(node, ast.UnaryOp) and type(node.op) in OPS:
        return OPS[type(node.op)](evaluate_expression(node.operand))
    elif isinstance(node, ast.BinOp) and type(node.op) in OPS:
        return OPS[type(node.op)](evaluate_expression(node.left), evaluate_expression(node.right))
    else:
        # Names, calls, attribute access, and anything else are rejected
        raise ValueError("Unsupported or unsafe expression")

def calculate(expr: str):
    parsed = ast.parse(expr, mode='eval').body
    return evaluate_expression(parsed)
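One caveat with an AST-based calculator of this kind: restricting node types blocks names and function calls, but an expression such as `9**9**9` is still valid arithmetic and can consume a great deal of CPU and memory. The self-contained sketch below shows one possible guard, an exponent cap. `MAX_EXPONENT`, `BIN_OPS`, and `safe_calculate` are illustrative names that do not appear in the code above, and the limit of 100 is an arbitrary assumption.

```python
import ast
import operator as op

# Hypothetical limit on exponents; tune for your use case
MAX_EXPONENT = 100

BIN_OPS = {
    ast.Add: op.add, ast.Sub: op.sub, ast.Mult: op.mul, ast.Div: op.truediv,
    ast.FloorDiv: op.floordiv, ast.Mod: op.mod, ast.Pow: op.pow,
}

def _eval(node):
    # Numeric literals only (bool is excluded because type() is checked exactly)
    if isinstance(node, ast.Constant) and type(node.value) in (int, float):
        return node.value
    if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
        return -_eval(node.operand)
    if isinstance(node, ast.BinOp) and type(node.op) in BIN_OPS:
        left, right = _eval(node.left), _eval(node.right)
        # Refuse very large exponents before computing the power
        if isinstance(node.op, ast.Pow) and abs(right) > MAX_EXPONENT:
            raise ValueError("Exponent too large")
        return BIN_OPS[type(node.op)](left, right)
    raise ValueError("Unsupported or unsafe expression")

def safe_calculate(expr: str):
    return _eval(ast.parse(expr, mode="eval").body)

print(safe_calculate("2 * (3 + 4) ** 2"))  # 98
for bad in ("__import__('os').system('ls')", "9**9**9"):
    try:
        safe_calculate(bad)
    except ValueError as err:
        print(f"rejected {bad!r}: {err}")
```

The cap bounds only the exponent; a production calculator would likely also want limits on expression length and result size.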

class FoldingMemory:
    def __init__(self, max_chars=800):
        self.active = []  # recent entries, kept verbatim
        self.folds = []   # compressed records of older entries and subtask summaries
        self.max_chars = max_chars

    def add(self, text: str):
        self.active.append(text.strip())
        # Fold the oldest entries until the active text fits the budget,
        # always keeping at least the newest entry
        while len(self.get_active_text()) > self.max_chars and len(self.active) > 1:
            removed = self.active.pop(0)
            summary = f"- Folded: {removed[:120]}..."
            self.folds.append(summary)

    def fold_in(self, summary: str):
        self.folds.append(summary.strip())

    def get_active_text(self) -> str:
        return "\n".join(self.active)

    def get_folded_text(self) -> str:
        return "\n".join(self.folds)

    def snapshot(self) -> dict:
        return {
            "active_chars": len(self.get_active_text()),
            "fold_count": len(self.folds)
        }
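Truncating to a fixed number of characters is the simplest possible fold. As a sketch of how a real summarizer could be plugged in instead, the self-contained variant below accepts a `summarize` callable. `SummarizingMemory`, `summarize`, and `first_sentence` are hypothetical names introduced for illustration; in the agent the callable could wrap a model call, but here it simply keeps the first sentence so the example runs without a model.

```python
from typing import Callable, List

def first_sentence(text: str) -> str:
    """Stand-in summarizer: keep the text up to and including the first period."""
    head, sep, _ = text.partition(".")
    return head + sep

class SummarizingMemory:
    def __init__(self, max_chars: int, summarize: Callable[[str], str] = first_sentence):
        self.active: List[str] = []
        self.folds: List[str] = []
        self.max_chars = max_chars
        self.summarize = summarize

    def active_text(self) -> str:
        return "\n".join(self.active)

    def add(self, text: str) -> None:
        self.active.append(text.strip())
        # Fold the oldest entries until the active text fits, keeping the newest one
        while len(self.active_text()) > self.max_chars and len(self.active) > 1:
            oldest = self.active.pop(0)
            self.folds.append("- " + self.summarize(oldest))

memory = SummarizingMemory(max_chars=60)
memory.add("TASK: plan a budget. Include tax and contingency.")
memory.add("SUBTASK: sum the item prices. Use the calculator.")
print(memory.folds)   # ['- TASK: plan a budget.']
print(memory.active)  # ['SUBTASK: sum the item prices. Use the calculator.']
```

Passing the summarizer in as a parameter keeps the memory class testable: the folding policy can be checked with a deterministic function before a model is attached.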

Crafting Prompt Templates for Task Decomposition and Summarization

We develop structured prompt templates that instruct the agent to break down tasks into subtasks, solve them efficiently, and summarize results. These templates ensure clear, stepwise communication between the agent’s reasoning process and the language model’s outputs.

SUBTASK_DECOMPOSITION_PROMPT = """
You are a skilled planner. Break down the following task into 2 to 4 clear subtasks.
List each subtask as a bullet point starting with '- ', ordered by priority.
Task: "{task}"
"""

SUBTASK_SOLVER_PROMPT = """
You are a focused problem solver aiming for minimal steps.
If a calculation is required, respond with one line: 'CALC(expression)'.
Otherwise, reply with 'ANSWER: <final result>'.
Think briefly and avoid unnecessary dialogue.
Task: {task}
Subtask: {subtask}
Context notes (folded summaries):
{notes}
Please respond with either CALC(...) or ANSWER: ...
"""

SUBTASK_SUMMARY_PROMPT = """
Summarize the subtask result in up to 3 bullet points, totaling no more than 50 tokens.
Subtask: {name}
Steps taken:
{trace}
Final result: {final}
Return only bullet points starting with '- '.
"""

FINAL_SYNTHESIS_PROMPT = """
You are an expert agent. Create a concise, coherent final solution using ONLY:
- The original task description
- Folded summaries below
Avoid repeating intermediate steps. Be clear and actionable.
Task: {task}
Folded summaries:
{folds}
Final answer:
"""

def extract_bullets(text: str) -> list:
    # Strip each line first so indented bullets are sliced correctly
    lines = (line.strip() for line in text.splitlines())
    return [line[2:].strip() for line in lines if line.startswith("- ")]
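To see how a template and the bullet parser fit together without loading a model, the sketch below formats a shortened decomposition prompt and parses a hand-written reply. `PLAN_PROMPT`, `parse_plan`, and the sample replies are made up for illustration; the fallback to a single catch-all subtask is one reasonable way to handle a reply that ignores the requested format.

```python
PLAN_PROMPT = (
    "Break down the following task into 2 to 4 clear subtasks.\n"
    "List each subtask as a bullet point starting with '- '.\n"
    'Task: "{task}"\n'
)

def parse_plan(reply: str, limit: int = 4) -> list:
    """Collect '- ' bullets, tolerating indentation and ignoring other lines."""
    bullets = []
    for line in reply.splitlines():
        stripped = line.strip()
        if stripped.startswith("- "):
            bullets.append(stripped[2:].strip())
    # Fall back to one catch-all subtask if the reply contains no bullets
    return bullets[:limit] or ["Main solution"]

prompt = PLAN_PROMPT.format(task="Plan a 3-day study schedule")
sample_reply = "Here is a plan:\n- Pick topics\n  - Block out time\n-Missing space\n- Review"

print(prompt)
print(parse_plan(sample_reply))                # ['Pick topics', 'Block out time', 'Review']
print(parse_plan("I cannot help with that."))  # ['Main solution']
```

Small models often drift from the requested format, so a tolerant parser with an explicit fallback tends to matter more than the exact prompt wording.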

Executing Subtasks and Folding Results into Memory

The agent’s core functionality involves running each subtask, interpreting the model’s output, performing calculations when prompted, and summarizing the results. These summaries are then folded back into memory, enabling iterative reasoning without losing track of previous context.

import re
import time
from typing import List, Tuple, Dict

def execute_subtask(task: str, subtask: str, memory: FoldingMemory, max_iterations=3) -> Tuple[str, str, List[str]]:
    notes = memory.get_folded_text() or "(none)"
    trace = []
    final_answer = ""
    for _ in range(max_iterations):
        prompt = SUBTASK_SOLVER_PROMPT.format(task=task, subtask=subtask, notes=notes)
        response = generate_text(prompt, max_tokens=96)
        trace.append(response)
        # Greedy match so nested parentheses inside the expression are captured
        calc_match = re.search(r"CALC\((.+)\)", response)
        if calc_match:
            try:
                result = calculate(calc_match.group(1))
                trace.append(f"TOOL: CALC -> {result}")
                follow_up = generate_text(prompt + f"\nTool result: {result}\nNow provide 'ANSWER: ...' only.", max_tokens=64)
                trace.append(follow_up)
                if follow_up.strip().startswith("ANSWER:"):
                    final_answer = follow_up.split("ANSWER:", 1)[1].strip()
                    break
            except Exception as e:
                # Record the failure and let the loop try again
                trace.append(f"TOOL: CALC ERROR -> {e}")
        elif response.strip().startswith("ANSWER:"):
            final_answer = response.split("ANSWER:", 1)[1].strip()
            break
    if not final_answer:
        final_answer = "No definitive answer; partial reasoning:\n" + "\n".join(trace[-2:])
    summary = generate_text(SUBTASK_SUMMARY_PROMPT.format(name=subtask, trace="\n".join(trace), final=final_answer), max_tokens=80)
    # Re-add the '- ' prefix that extract_bullets strips, matching the fallback format
    summary_bullets = "\n".join(f"- {b}" for b in extract_bullets(summary)[:3]) or f"- {subtask}: {final_answer[:60]}..."
    return final_answer, summary_bullets, trace

class ContextFoldingAgent:
    def __init__(self, max_active_chars=800):
        self.memory = FoldingMemory(max_chars=max_active_chars)
        self.metrics = {"subtasks": 0, "tool_calls": 0, "chars_saved_estimate": 0}

    def decompose_task(self, task: str) -> List[str]:
        plan = generate_text(SUBTASK_DECOMPOSITION_PROMPT.format(task=task), max_tokens=96)
        subtasks = extract_bullets(plan)
        # Fall back to a single subtask if the model returned no bullets
        return subtasks[:4] if subtasks else ["Main solution"]

    def run(self, task: str) -> Dict:
        start_time = time.time()
        self.memory.add(f"TASK: {task}")
        subtasks = self.decompose_task(task)
        self.metrics["subtasks"] = len(subtasks)
        folded_summaries = []
        for subtask in subtasks:
            self.memory.add(f"SUBTASK: {subtask}")
            final, summary, trace = execute_subtask(task, subtask, self.memory)
            # Count successful calculator calls recorded in the trace
            self.metrics["tool_calls"] += sum(line.startswith("TOOL: CALC ->") for line in trace)
            self.memory.fold_in(summary)
            folded_summaries.append(f"- {subtask}: {final}")
            self.memory.add(f"SUBTASK_DONE: {subtask}")
        # Synthesize from the task and the folded summaries only
        final_answer = generate_text(FINAL_SYNTHESIS_PROMPT.format(task=task, folds=self.memory.get_folded_text()), max_tokens=200)
        end_time = time.time()
        return {
            "task": task,
            "final_answer": final_answer.strip(),
            "folded_summaries": self.memory.get_folded_text(),
            "active_context_length": len(self.memory.get_active_text()),
            "subtask_results": folded_summaries,
            "runtime_seconds": round(end_time - start_time, 2)
        }
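The solver loop relies on a small text protocol: the model replies with either `CALC(expression)` or `ANSWER: ...`. The sketch below isolates that dispatch step so it can be tested without a model. `parse_reply`, `CALC_RE`, and the tuple tags are hypothetical names for illustration, and the replies are hand-written stand-ins for model output.

```python
import re
from typing import Tuple

# Greedy match keeps nested parentheses, e.g. CALC((1 + 2) * 3)
CALC_RE = re.compile(r"CALC\((.+)\)")

def parse_reply(reply: str) -> Tuple[str, str]:
    """Classify a model reply as a tool request, a final answer, or neither."""
    match = CALC_RE.search(reply)
    if match:
        return "calc", match.group(1).strip()
    text = reply.strip()
    if text.startswith("ANSWER:"):
        return "answer", text.split("ANSWER:", 1)[1].strip()
    return "unknown", text

for reply in ["CALC((1 + 2) * 3)", "ANSWER: 9", "Let me think..."]:
    print(parse_reply(reply))
# ('calc', '(1 + 2) * 3')
# ('answer', '9')
# ('unknown', 'Let me think...')
```

Returning an explicit `"unknown"` tag makes the third case visible to the caller, which can then retry, re-prompt, or fall back to partial reasoning.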

Demonstrating the Agent with Practical Examples

To illustrate the agent’s capabilities, we test it on sample tasks that require planning and budget calculations. These examples showcase how the agent decomposes, reasons, and synthesizes results while efficiently managing context.

DEMO_TASKS = [
    "Create a 3-day study plan for machine learning including daily exercise and simple meal prep; allocate specific time blocks.",
    "Calculate a project budget with three items: laptop $799.99, course $149.50, snacks $23.75; add 8% tax and 5% contingency; provide a concise recommendation paragraph."
]

import json

def pretty_print(data):
    return json.dumps(data, indent=2, ensure_ascii=False)

if __name__ == "__main__":
    agent = ContextFoldingAgent(max_active_chars=700)
    for idx, task in enumerate(DEMO_TASKS, 1):
        print("=" * 70)
        print(f"DEMO #{idx}: {task}")
        result = agent.run(task)
        print("\n--- Folded Summaries ---\n" + (result["folded_summaries"] or "(none)"))
        print("\n--- Final Answer ---\n" + result["final_answer"])
        print("\n--- Diagnostics ---")
        diagnostics = {
            "active_context_length": result["active_context_length"],
            "runtime_seconds": result["runtime_seconds"],
            # Reuse the subtasks from this run instead of querying the model again
            "number_of_subtasks": len(result["subtask_results"])
        }
        print(pretty_print(diagnostics))
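For reference when judging the agent's output, the arithmetic in the second demo task can be checked directly. The task does not say whether the 5% contingency applies before or after tax, so the sketch below computes two readings: compounded (tax first, then contingency on the taxed amount) and additive (13% on the subtotal). Both interpretations are assumptions, and `money` is a hypothetical rounding helper.

```python
from decimal import Decimal, ROUND_HALF_UP

items = {
    "laptop": Decimal("799.99"),
    "course": Decimal("149.50"),
    "snacks": Decimal("23.75"),
}
TAX = Decimal("0.08")
CONTINGENCY = Decimal("0.05")

def money(value: Decimal) -> Decimal:
    """Round to cents using half-up rounding."""
    return value.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)

subtotal = sum(items.values(), Decimal("0"))
compounded = money(subtotal * (1 + TAX) * (1 + CONTINGENCY))
additive = money(subtotal * (1 + TAX + CONTINGENCY))

print(f"subtotal:   {subtotal}")    # 973.24
print(f"compounded: {compounded}")  # 1103.65
print(f"additive:   {additive}")    # 1099.76
```

`Decimal` is used here so the cent values are exact; the agent's own calculator works with floats, so small rounding differences in its output are expected.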

Summary: Efficient Long-Horizon Reasoning via Context Folding

This tutorial shows how context folding lets an LLM agent carry out multi-step reasoning without exceeding its context budget. The agent decomposes a task, executes each subtask with tool support, and compresses each result into a short summary that later steps can build on. Combining decomposition, tool use, and context compression yields a lightweight reasoning loop that can be extended to longer, more complex problems.