How Mirrors Can Deceive LIDAR Systems in Autonomous Vehicles

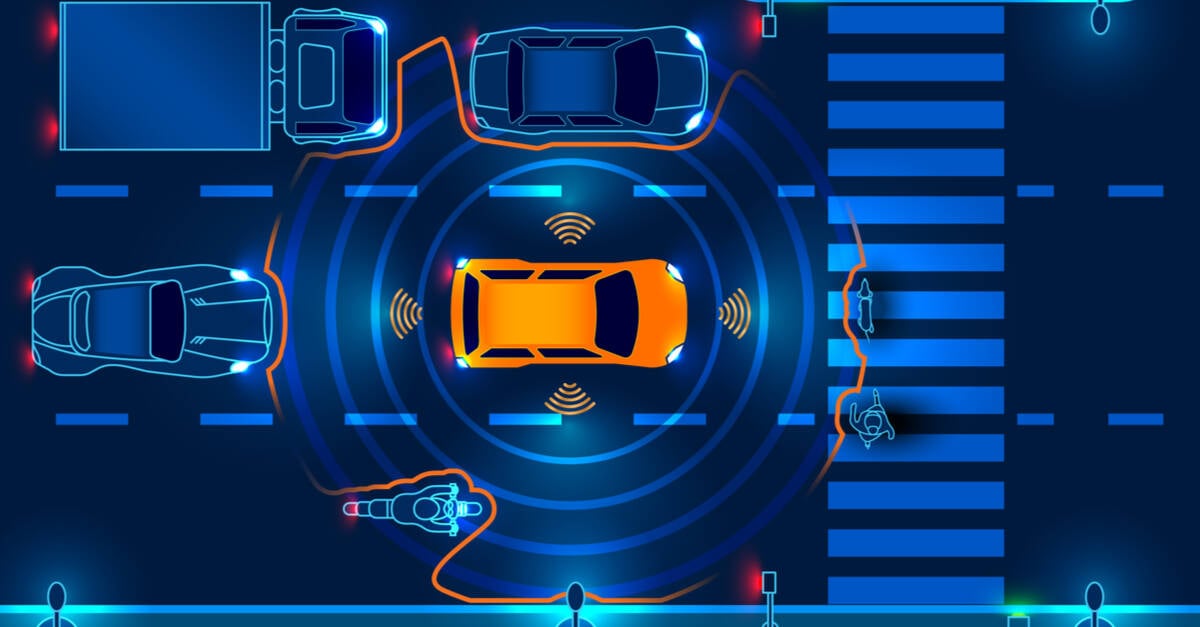

Light Detection and Ranging (LIDAR) technology, a cornerstone of many self-driving cars, can be misled by reflective surfaces such as mirrors. This vulnerability can cause LIDAR sensors either to overlook real obstacles or to detect phantom objects, posing significant safety risks for autonomous navigation.

International Research Unveils LIDAR Vulnerabilities

A team of researchers from France, Germany, and the United Kingdom conducted experiments in a university campus parking lot to explore these weaknesses. Using a vehicle equipped with commercial-grade LIDAR and the widely used Autoware navigation software, they demonstrated two distinct attack methods. In one scenario, the vehicle failed to recognize a real obstacle and proceeded through it, while in another, it braked abruptly to avoid a non-existent hazard.

Understanding LIDAR and Its Limitations

LIDAR operates by emitting laser pulses and measuring their reflections to map the surrounding environment. The technology is integral to most autonomous vehicles, with the exception of some manufacturers, such as Tesla, that rely on alternative sensor arrays. Despite its precision, LIDAR struggles with reflective materials, which can distort or obscure the sensor’s perception. Previous studies have shown that simple materials like aluminum foil and colored patches can confuse LIDAR readings.
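To make the ranging principle concrete, here is a minimal sketch that assumes a pulsed time-of-flight sensor, the common design in automotive LIDAR. The function names are illustrative and do not come from any LIDAR vendor's API. The key assumption built into the calculation is that the pulse travelled straight out and straight back, which is exactly what a mirror violates.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0  # metres per second


def round_trip_time(path_length_m: float) -> float:
    """Time for a pulse to travel out along a path and back again."""
    return 2.0 * path_length_m / SPEED_OF_LIGHT_M_S


def range_from_round_trip(round_trip_seconds: float) -> float:
    """Distance the sensor reports, assuming a straight out-and-back path."""
    return SPEED_OF_LIGHT_M_S * round_trip_seconds / 2.0


# A surface 20 m away returns the pulse after roughly 133 nanoseconds.
elapsed = round_trip_time(20.0)
print(f"round trip: {elapsed * 1e9:.1f} ns")
print(f"reported range: {range_from_round_trip(elapsed):.2f} m")
```

The sensor only knows the elapsed time and the direction in which it fired, so any bend in the path is invisible to it.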

Advanced Mirror-Based Attacks: Object Removal and Addition

The European research team introduced two innovative attack strategies leveraging mirrors. The first, termed the Object Removal Attack (ORA), involved strategically placing mirrors of varying sizes to conceal a traffic cone from the LIDAR sensor. By fine-tuning mirror dimensions, they effectively rendered the obstacle invisible to the vehicle’s detection system, potentially causing it to collide with the hidden object. Additionally, these mirrors could partially block the vehicle’s own line of sight.

The second method, called the Object Addition Attack (OAA), used small mirror tiles to create the illusion of an obstacle where none existed. In controlled tests, the autonomous vehicle, under manual supervision for safety, detected a fabricated object approximately 20 meters ahead and took evasive action, demonstrating how such attacks could trigger unnecessary braking or maneuvers.
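The geometry behind both attacks can be sketched in a few lines. This is a simplified 2D model under stated assumptions, not the researchers' setup: the mirror is treated as a perfect specular reflector, the sensor is assumed to place every return along the direction in which it fired, and all names and distances are made up for illustration.

```python
import math
from typing import Optional, Tuple

Vec = Tuple[float, float]


def reflect(direction: Vec, normal: Vec) -> Vec:
    """Specular reflection of a unit direction about a unit surface normal."""
    dot = direction[0] * normal[0] + direction[1] * normal[1]
    return (direction[0] - 2 * dot * normal[0],
            direction[1] - 2 * dot * normal[1])


def actual_hit(direction: Vec, normal: Vec,
               dist_to_mirror: float, dist_after_mirror: float) -> Vec:
    """Where the pulse really lands after bouncing off the mirror."""
    bounced = reflect(direction, normal)
    return (direction[0] * dist_to_mirror + bounced[0] * dist_after_mirror,
            direction[1] * dist_to_mirror + bounced[1] * dist_after_mirror)


def reported_point(direction: Vec, dist_to_mirror: float,
                   dist_after_mirror: Optional[float]) -> Optional[Vec]:
    """Where a sensor that assumes a straight path places the return.

    None for dist_after_mirror means the bounced pulse hit nothing in range,
    so the sensor records no point at all in that direction.
    """
    if dist_after_mirror is None:
        return None
    total = dist_to_mirror + dist_after_mirror
    return (direction[0] * total, direction[1] * total)


beam = (1.0, 0.0)                    # sensor at the origin, firing straight ahead
s = math.sqrt(0.5)
mirror_normal = (-s, s)              # mirror tilted 45 degrees, 5 m ahead

# Addition: the bounce lands on a real surface 15 m off to the side,
# but the sensor draws it 20 m straight ahead.
print(actual_hit(beam, mirror_normal, 5.0, 15.0))
print(reported_point(beam, 5.0, 15.0))

# Removal: the bounce goes toward open sky, so nothing is reported
# and whatever sits behind the mirror is missing from the point cloud.
print(reported_point(beam, 5.0, None))
```

In this model the same mirror produces either effect depending on where the deflected pulse ends up, which is one plausible reading of why mirror size and placement mattered in the experiments.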

Real-World Implications and Traffic Scenario Testing

Further experiments applied the OAA technique to disrupt lawful driving behavior. For example, the researchers manipulated the system to prevent a vehicle from making a legal left turn by simulating an obstacle in the path using mirror arrangements. These findings highlight how malicious actors could exploit LIDAR vulnerabilities to interfere with traffic flow and vehicle decision-making.

Proposed Countermeasures and Their Limitations

In their published study, the researchers also explored potential defenses against such attacks, including the integration of thermal imaging sensors. Since solid objects emit distinct thermal signatures, combining thermal data with LIDAR could help differentiate real obstacles from deceptive reflections. However, the team cautioned that thermal imaging is not a comprehensive solution, especially when detecting small objects or operating in environments with high ambient temperatures.
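A rough sketch of the cross-check idea follows. It is an assumption-laden illustration rather than the defense described in the study: the `Detection` type, the `thermal_c` field, and the 2 °C contrast threshold are all hypothetical, and a real system would need calibrated sensor alignment that is omitted here.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    label: str
    lidar_range_m: float
    thermal_c: float  # hypothetical: temperature seen by a thermal camera in the same direction


def is_thermally_supported(det: Detection, ambient_c: float,
                           min_contrast_c: float = 2.0) -> bool:
    """True if the thermal reading differs enough from ambient to back up the LIDAR return."""
    return abs(det.thermal_c - ambient_c) >= min_contrast_c


mild_day = 15.0
real_obstacle = Detection("obstacle", 20.0, thermal_c=22.0)
phantom = Detection("mirror phantom", 20.0, thermal_c=15.3)
print(is_thermally_supported(real_obstacle, mild_day))   # True: keep the detection
print(is_thermally_supported(phantom, mild_day))         # False: treat as suspect

# On a hot day a real object can sit close to ambient and fail the same check.
hot_day = 30.0
warm_object = Detection("obstacle", 20.0, thermal_c=30.5)
print(is_thermally_supported(warm_object, hot_day))      # False despite being real
```

The last case mirrors the limitation the researchers raise: when ambient temperature is high, thermal contrast shrinks and the check can reject genuine obstacles.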

Need for Further Research and Real-World Testing

These experiments were conducted at relatively low speeds compared to typical highway driving conditions. The researchers emphasized the need for additional testing under more diverse and demanding scenarios to fully understand the risks posed by such mirror-based attacks. Although these mirror setups cost very little, their potential impact on autonomous vehicle safety warrants serious attention as self-driving technology continues to evolve.

Conclusion

As autonomous vehicles become increasingly prevalent, ensuring the robustness of their sensor systems against deceptive tactics is critical. This research underscores the importance of developing multi-modal sensing and advanced detection algorithms to safeguard against simple yet effective methods of sensor spoofing, such as those involving mirrors.