How can Retrieval-Augmented Generation (RAG) systems stay accurate and fast when each query pushes thousands of tokens of retrieved text into the context window, while the retriever and generator remain separate, independently optimized components? Researchers from Apple and the University of Edinburgh have introduced CLaRa (Continuous Latent Reasoning), a RAG framework that compresses documents into continuous memory tokens so that retrieval and generation operate in a single shared latent space. The goals are to shorten the context, avoid redundant encoding, and let the generator tell the retriever which information actually matters for producing correct answers.

Transforming Documents into Compact Memory Tokens

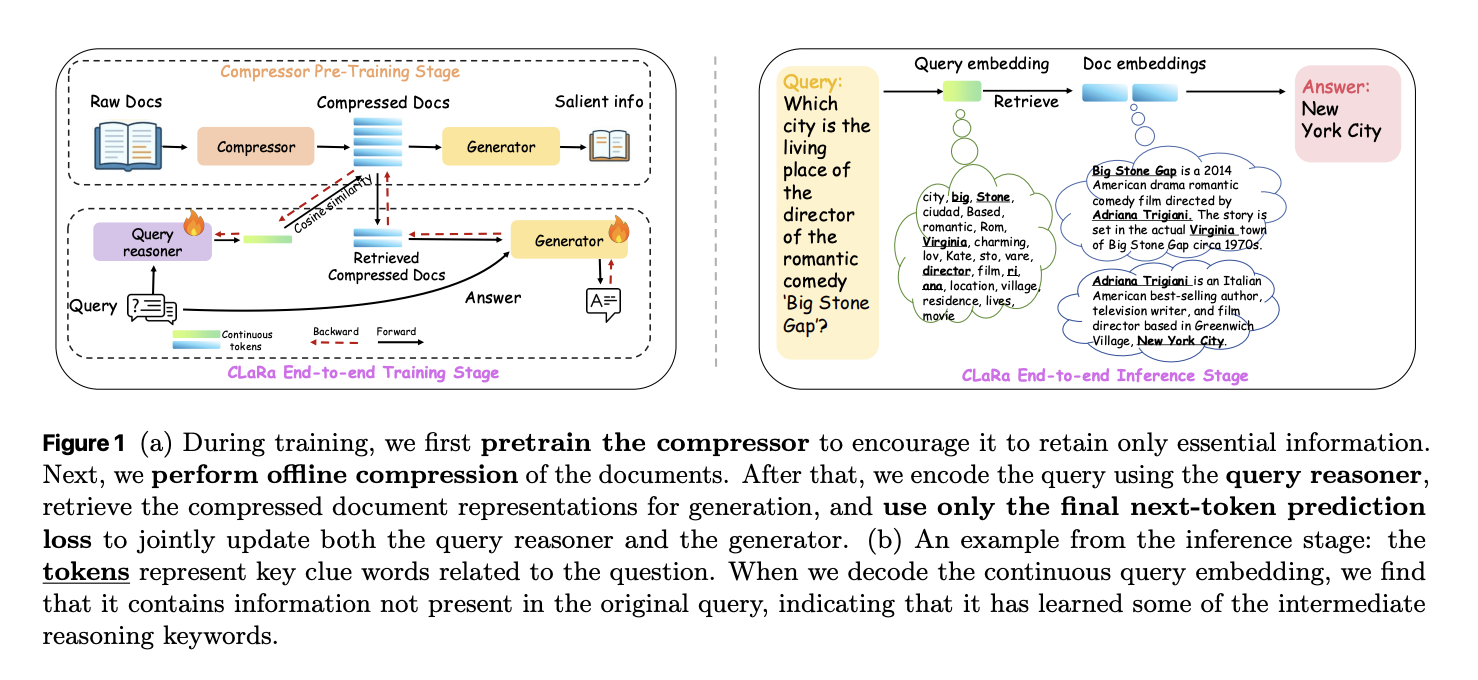

CLaRa starts with semantic compression: each document is assigned a small set of learned memory tokens. These are trained through Salient Compressor Pretraining (SCP), in which a Mistral-7B-style transformer equipped with LoRA adapters switches between the compressor and generator roles. The final hidden states of the memory tokens serve as the document's compressed representation.
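To make the mechanism concrete, the sketch below appends a few memory embeddings after a document's token embeddings, runs one causal self-attention layer, and keeps only the final hidden states at the memory positions. It is a toy under stated assumptions: the single numpy attention layer and random weights stand in for the LoRA-adapted Mistral backbone, placing memory tokens after the document is an assumption, and `compress_document` is a hypothetical name, not Apple's code.

```python
import numpy as np

def softmax(x: np.ndarray) -> np.ndarray:
    # Numerically stable softmax over the last axis.
    x = x - x.max(axis=-1, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=-1, keepdims=True)

def causal_self_attention(h: np.ndarray, wq, wk, wv) -> np.ndarray:
    """One toy single-head causal attention layer with a residual connection."""
    n, d = h.shape
    q, k, v = h @ wq, h @ wk, h @ wv
    scores = q @ k.T / np.sqrt(d)
    # Position i may only attend to positions <= i, as in a decoder-only model.
    future = np.triu(np.ones((n, n), dtype=bool), k=1)
    scores = np.where(future, -np.inf, scores)
    return h + softmax(scores) @ v

def compress_document(doc_embeddings, memory_embeddings, params):
    """Return one final hidden state per memory token: the compressed document."""
    # Memory tokens go after the document so causal attention lets them read all of it.
    h = np.concatenate([doc_embeddings, memory_embeddings], axis=0)
    h = causal_self_attention(h, *params)
    return h[-memory_embeddings.shape[0]:]

rng = np.random.default_rng(0)
d_model, doc_len, ratio = 16, 128, 32
n_mem = doc_len // ratio  # 128 document tokens at 32x -> 4 memory tokens
params = [rng.normal(scale=0.1, size=(d_model, d_model)) for _ in range(3)]
doc = rng.normal(size=(doc_len, d_model))
memory = rng.normal(size=(n_mem, d_model))  # learned in practice; random here
compressed = compress_document(doc, memory, params)
print(compressed.shape)  # (4, 16)
```

Only the memory-token states are kept, so downstream components see 4 vectors instead of 128.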

SCP training uses approximately 2 million Wikipedia 2021 passages. A local Qwen-32B model generates three kinds of supervision per passage: simple QA pairs targeting atomic facts, complex QA pairs that require multi-hop reasoning across several facts, and paraphrases that reorder and condense the text while preserving its meaning. A verification loop checks each sample for factual accuracy and completeness, regenerating the questions or paraphrase for up to ten rounds before the sample is finalized.
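The regenerate-until-verified loop can be sketched as follows. `generate` and `verify` are hypothetical callables standing in for prompts to the local Qwen-32B model, and dropping a sample that never passes is an assumption; the description only states the ten-round cap.

```python
from typing import Callable, Optional

MAX_ROUNDS = 10  # the source caps regeneration at ten iterations

def build_sample(
    passage: str,
    generate: Callable[[str, Optional[str]], str],
    verify: Callable[[str, str], Optional[str]],
    max_rounds: int = MAX_ROUNDS,
) -> Optional[str]:
    """Regenerate a QA pair or paraphrase until the verifier accepts it.

    `verify` returns None when the candidate is accurate and complete,
    otherwise a feedback string that is passed to the next generation round.
    """
    feedback = None
    for _ in range(max_rounds):
        candidate = generate(passage, feedback)
        feedback = verify(passage, candidate)
        if feedback is None:
            return candidate
    return None  # assumption: samples that never pass are discarded

# Usage with stub functions: the verifier accepts only the third draft.
attempts = []

def fake_generate(passage, feedback):
    attempts.append(feedback)
    return f"draft {len(attempts)}"

def fake_verify(passage, candidate):
    return None if candidate == "draft 3" else "missing a fact"

result = build_sample("Paris is the capital of France.", fake_generate, fake_verify)
print(result)  # draft 3
```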

Training optimizes two losses jointly: a cross-entropy loss that trains the generator to produce the answer or paraphrase conditioned only on the memory tokens and an instruction prefix, and a mean squared error (MSE) loss that pulls the average hidden state of the memory tokens toward the average hidden state of the original document tokens. The MSE term is meant to keep the compressed and original representations semantically aligned, and it consistently adds 0.3 to 0.6 F1 points at compression ratios of 32 and 128.
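In equation form the objective is roughly L = L_CE + λ · L_MSE, where L_MSE compares the mean document hidden state with the mean memory-token hidden state. The numpy sketch below computes both terms on toy arrays. The weight `lam` and averaging the squared difference over hidden dimensions are assumptions, not values from the paper.

```python
import numpy as np

def cross_entropy(logits: np.ndarray, targets: np.ndarray) -> float:
    """Mean next-token cross-entropy; logits (T, V), targets (T,) token ids."""
    logits = logits - logits.max(axis=-1, keepdims=True)
    log_probs = logits - np.log(np.exp(logits).sum(axis=-1, keepdims=True))
    return float(-log_probs[np.arange(len(targets)), targets].mean())

def alignment_mse(doc_hidden: np.ndarray, mem_hidden: np.ndarray) -> float:
    """MSE between mean-pooled document and memory-token hidden states."""
    diff = doc_hidden.mean(axis=0) - mem_hidden.mean(axis=0)
    return float(np.mean(diff ** 2))

def scp_loss(logits, targets, doc_hidden, mem_hidden, lam: float = 1.0) -> float:
    # lam is a hypothetical weighting; the source does not specify one.
    return cross_entropy(logits, targets) + lam * alignment_mse(doc_hidden, mem_hidden)

rng = np.random.default_rng(0)
logits = rng.normal(size=(5, 50))        # 5 answer tokens over a toy vocabulary of 50
targets = rng.integers(0, 50, size=5)
doc_hidden = rng.normal(size=(128, 16))  # hidden states of 128 document tokens
mem_hidden = rng.normal(size=(4, 16))    # hidden states of 4 memory tokens (32x)
print(scp_loss(logits, targets, doc_hidden, mem_hidden))
```

Because the MSE term compares means, it constrains the overall direction of the compressed representation without forcing each memory token to mimic a specific document token.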

Unified Latent Space for Retrieval and Generation

Once documents are compressed into memory tokens, CLaRa trains a query reasoner and an answer generator on top of the same backbone model. The query reasoner, implemented as another LoRA adapter, maps each input question to the same number of memory tokens used to represent a document. Retrieval then reduces to an embedding similarity search that ranks candidate documents by cosine similarity.
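A minimal sketch of the retrieval step, assuming each query and document is a stack of memory-token vectors that is mean-pooled into a single vector before cosine scoring. The pooling choice and the function names are illustrative assumptions; the description only says that cosine similarity is used.

```python
import numpy as np

def pool(memory_tokens: np.ndarray) -> np.ndarray:
    # Assumption: collapse (..., n_mem, d) memory tokens to (..., d) by averaging.
    return memory_tokens.mean(axis=-2)

def cosine_scores(query_mem: np.ndarray, doc_mems: np.ndarray) -> np.ndarray:
    """query_mem: (n_mem, d); doc_mems: (n_docs, n_mem, d) -> (n_docs,) scores."""
    q = pool(query_mem)
    docs = pool(doc_mems)
    q = q / np.linalg.norm(q)
    docs = docs / np.linalg.norm(docs, axis=-1, keepdims=True)
    return docs @ q

def rank_documents(query_mem: np.ndarray, doc_mems: np.ndarray) -> np.ndarray:
    # Document indices sorted from most to least similar.
    return np.argsort(-cosine_scores(query_mem, doc_mems))

rng = np.random.default_rng(1)
docs = rng.normal(size=(20, 4, 16))                # 20 candidates, 4 memory tokens each
query = docs[7] + 0.05 * rng.normal(size=(4, 16))  # a query built to resemble document 7
print(rank_documents(query, docs)[:5])
```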

The top-ranked compressed document embeddings are concatenated with the query tokens and passed to the generator adapter. Training uses only a next-token prediction loss on the final answer, with no explicit relevance labels. The key ingredient is a differentiable top-k selector built with a straight-through estimator: the forward pass applies hard top-k selection, while the backward pass uses a softmax over document scores, so gradients from the generator can flow back into the query reasoner. This aligns retrieval with answer quality end to end.
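The straight-through idea can be written out explicitly. In the sketch below, the forward pass returns a hard 0/1 mask over the top-k scores, while the backward pass ignores that non-differentiable mask and back-propagates through softmax(scores / τ) instead, using the softmax Jacobian-vector product p ⊙ (g − ⟨p, g⟩) / τ. The temperature `tau` and the manual-gradient framing are assumptions made so the example runs in plain numpy; in an autodiff framework the same effect is commonly written as `hard + soft - stop_gradient(soft)`.

```python
import numpy as np

def softmax(x: np.ndarray) -> np.ndarray:
    e = np.exp(x - x.max())
    return e / e.sum()

def topk_forward(scores: np.ndarray, k: int) -> np.ndarray:
    """Forward pass: hard 0/1 mask selecting the k highest-scoring documents."""
    mask = np.zeros_like(scores)
    mask[np.argsort(-scores)[:k]] = 1.0
    return mask

def topk_backward(scores: np.ndarray, upstream: np.ndarray, tau: float = 1.0) -> np.ndarray:
    """Backward pass: gradient w.r.t. scores, taken through softmax(scores / tau).

    `upstream` is dLoss/dMask as delivered by the generator.
    """
    p = softmax(scores / tau)
    return p * (upstream - np.dot(p, upstream)) / tau

scores = np.array([2.0, 0.5, 1.5, -1.0])
mask = topk_forward(scores, k=2)  # selects documents 0 and 2
# Suppose the generator's loss would fall if document 1 received more weight.
upstream = np.array([0.0, -1.0, 0.0, 0.0])
grad = topk_backward(scores, upstream)
print(mask, grad)
```

The gradient on document 1's score is negative, so a gradient-descent step raises that score even though document 1 was not selected in the forward pass; this is how answer quality can reshape the ranking.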

Gradient analysis reveals two significant effects: the retriever learns to favor documents that raise the likelihood of the correct answer, and the shared compressed representations evolve to support reasoning. For example, a logit lens analysis of query embeddings for a question about Ivory Lee Brown’s nephew surfaces latent topic tokens such as “NFL” and “Oklahoma”, which do not appear in the raw query but do appear in the supporting documents.
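A logit lens decodes a latent vector by projecting it through the model's output (unembedding) matrix and reading off the highest-scoring vocabulary tokens. The toy sketch below shows only the mechanics: the six-word vocabulary and the identity-based unembedding matrix are hypothetical, and a real analysis would use the backbone's own final normalization and LM head, which this example omits.

```python
import numpy as np

# Toy vocabulary and unembedding matrix; both are hypothetical.
vocab = ["the", "NFL", "Oklahoma", "nephew", "river", "piano"]
d_model = 8
unembed = np.eye(len(vocab), d_model)  # stand-in for the LM head, shape (vocab, d_model)

def logit_lens(hidden: np.ndarray, top_n: int = 2) -> list[list[str]]:
    """Project each hidden vector onto the vocabulary and return its top tokens."""
    logits = hidden @ unembed.T  # (n_vectors, vocab_size)
    top = np.argsort(-logits, axis=-1)[:, :top_n]
    return [[vocab[i] for i in row] for row in top]

# A toy query embedding pointing mostly toward "NFL" and, less strongly, "Oklahoma".
query_token = 1.0 * unembed[1] + 0.8 * unembed[2]
print(logit_lens(query_token[None, :]))  # [['NFL', 'Oklahoma']]
```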

Evaluating Compression Efficiency and QA Performance

The SCP compressor was evaluated on four QA benchmarks: Natural Questions, a single-hop dataset, and the multi-hop HotpotQA, MuSiQue, and 2WikiMultihopQA. In the Normal retrieval setting, where the system takes the top five Wikipedia 2021 documents per query, SCP-Mistral-7B at 4x compression achieved an average F1 score of 39.86. That is 5.37 points above the hard compression baseline LLMLingua-2 and 1.13 points above the best soft compression baseline, PISCO.
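F1 in QA benchmarks like these usually means token-overlap F1 between the predicted and gold answer strings after light normalization (the SQuAD convention), averaged over questions and reported on a 0 to 100 scale. The sketch below implements that common definition; whether CLaRa's evaluation uses exactly this normalization is an assumption.

```python
import re
import string
from collections import Counter

PUNCT = set(string.punctuation)

def normalize(text: str) -> list[str]:
    """Lowercase, strip punctuation and English articles, split on whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in PUNCT)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return text.split()

def f1_score(prediction: str, gold: str) -> float:
    """Token-overlap F1 between one prediction and one gold answer."""
    pred, ref = normalize(prediction), normalize(gold)
    common = Counter(pred) & Counter(ref)
    overlap = sum(common.values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)

print(f1_score("The Dallas Cowboys", "Dallas Cowboys"))    # 1.0
print(round(f1_score("Dallas", "the Dallas Cowboys"), 3))  # 0.667
```

Partial credit is what separates F1 from exact match: the second prediction gets one of two gold tokens and still scores about 0.67.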

In the Oracle setting, where the gold document is guaranteed to be among the candidates, SCP-Mistral-7B at 4x compression reached an average F1 of 66.76, 17.31 points above LLMLingua-2 and 5.35 points above PISCO. Compressed representations also outperformed a full-text pipeline, in which a BGE-based retriever feeds uncompressed documents to the generator, by roughly 2.36 F1 points with a Mistral-7B backbone and 6.36 points with Phi-4-mini. These results suggest that well-trained soft compression can exceed full-text RAG performance while reducing context length by factors ranging from 4 to 128.
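To make these compression ratios concrete, the arithmetic below computes how many context positions the five retrieved documents of the Normal setting occupy. The 256-token passage length is a hypothetical figure chosen for round numbers, and rounding up to at least one memory token per document is an assumption.

```python
import math

def context_tokens(n_docs: int, doc_len: int, ratio: int) -> int:
    """Context positions taken by n_docs documents compressed `ratio` times."""
    # Assumption: round up, with at least one memory token per document.
    mem_per_doc = max(1, math.ceil(doc_len / ratio))
    return n_docs * mem_per_doc

# Five retrieved passages of a hypothetical 256 tokens each.
for ratio in (1, 4, 16, 32, 128):
    print(f"{ratio:>3}x -> {context_tokens(5, 256, ratio)} tokens")
# 1x -> 1280, 4x -> 320, 16x -> 80, 32x -> 40, 128x -> 10
```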

Performance drops more noticeably at very high compression ratios (above 32x) in the Oracle setting, whereas the decline under Normal retrieval is moderate. The researchers attribute this to retrieval being the bottleneck in the Normal setting: when the right documents are often missing from the candidates, retrieval limits accuracy before compression quality becomes the deciding factor.

End-to-End QA and Retrieval Insights

For full-pipeline evaluation, CLaRa processes 20 candidate documents per query at compression ratios of 4, 16, and 32. In the Normal setting, instruction-initialized CLaRa-Mistral-7B at 16x compression achieved F1 scores of 50.89 on Natural Questions and 44.66 on 2WikiMultihopQA. These results are comparable to DRO-Mistral-7B, which reads the uncompressed full text, even though CLaRa's document representations are 16 times shorter. On some datasets, such as 2Wiki, CLaRa at 16x compression even outperforms DRO, raising F1 from 43.65 to 47.18.
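The input path to the generator can be pictured as follows: score all 20 compressed candidates against the query's memory tokens, keep the top k, and concatenate the query tokens with the selected documents' memory tokens into one sequence. The mean-pooled cosine scoring, k = 5, the hypothetical 256-token documents, placing the query first, and the function name are all illustrative assumptions rather than CLaRa's released code.

```python
import numpy as np

def build_generator_input(query_mem: np.ndarray, doc_mems: np.ndarray, k: int = 5):
    """Return (sequence, selected_ids).

    query_mem: (n_q, d) query memory tokens; doc_mems: (n_docs, n_mem, d).
    """
    q = query_mem.mean(axis=0)
    docs = doc_mems.mean(axis=1)
    scores = docs @ q / (np.linalg.norm(docs, axis=1) * np.linalg.norm(q))
    selected = np.argsort(-scores)[:k]
    # Assumption: query tokens first, then the selected documents in rank order.
    sequence = np.concatenate([query_mem, *doc_mems[selected]], axis=0)
    return sequence, selected

rng = np.random.default_rng(2)
n_candidates, ratio, doc_len, d = 20, 16, 256, 32
n_mem = doc_len // ratio  # 16 memory tokens per document at 16x
doc_mems = rng.normal(size=(n_candidates, n_mem, d))
query_mem = rng.normal(size=(n_mem, d))
seq, ids = build_generator_input(query_mem, doc_mems, k=5)
print(seq.shape)  # (96, 32): 16 query tokens + 5 x 16 document tokens
```

Under these assumptions the generator reads 96 positions, where five uncompressed 256-token documents alone would take 1,280.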

Under Oracle conditions, CLaRa-Mistral-7B exceeds 75 F1 on both Natural Questions and HotpotQA at 4x compression, indicating that the generator can make full use of accurate retrieval even when it reads only compressed memory tokens. Instruction-initialized CLaRa models generally outperform pretraining-initialized ones in the Normal setting, but the gap narrows in the Oracle setting, where retrieval noise is minimal.

On the retrieval side, CLaRa used as a reranker under Oracle conditions achieves strong Recall@5. On HotpotQA with 4x compression and pretraining initialization, for instance, CLaRa-Mistral-7B reaches a Recall@5 of 96.21, more than 10 points above the supervised BGE reranker baseline (85.93). It even exceeds a fully supervised Sup Instruct retriever trained with contrastive relevance labels, although CLaRa itself is trained without any relevance labels.
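Recall@k measures what fraction of a question's gold supporting documents appear among the top k ranked candidates, averaged over questions. The sketch below follows that common definition; whether the paper averages exactly this way is an assumption, and the document IDs are made up.

```python
def recall_at_k(ranked_ids: list[str], gold_ids: set[str], k: int = 5) -> float:
    """Fraction of gold documents that appear in the top k of a ranking."""
    if not gold_ids:
        raise ValueError("gold_ids must be non-empty")
    hits = len(set(ranked_ids[:k]) & gold_ids)
    return hits / len(gold_ids)

def mean_recall_at_k(runs, k: int = 5) -> float:
    """Average Recall@k over (ranked_ids, gold_ids) pairs, on a 0-100 scale."""
    return 100.0 * sum(recall_at_k(r, g, k) for r, g in runs) / len(runs)

runs = [
    (["d3", "d9", "d1", "d4", "d7", "d2"], {"d1", "d2"}),  # d2 is ranked 6th: a miss
    (["d5", "d8", "d6", "d0", "d4"], {"d5", "d6"}),        # both gold documents found
]
print(mean_recall_at_k(runs))  # 75.0
```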

Available Models and Resources from Apple

Apple’s research team has publicly released three models on Hugging Face: CLaRa-7B-Base, CLaRa-7B-Instruct, and CLaRa-7B-E2E. Among these, CLaRa-7B-Instruct is an instruction-tuned unified RAG model featuring built-in document compression at 16x and 128x ratios. It directly answers instruction-style queries from compressed representations and is based on Mistral-7B-Instruct v0.2.

Summary of Key Innovations

- CLaRa replaces bulky raw documents with a compact set of continuous memory tokens learned through QA- and paraphrase-guided semantic compression, retaining much of the information needed for reasoning even at high compression levels (16x to 128x).

- Retrieval and generation are jointly trained within a shared latent space, with the query encoder and generator sharing compressed representations and optimized via a single language modeling objective.

- A differentiable top-k selection mechanism lets gradients flow from answer generation back to retrieval, aligning document relevance with answer quality and removing the need to tune the retriever and generator separately, as conventional RAG pipelines do.

- On QA benchmarks including Natural Questions, HotpotQA, MuSiQue, and 2WikiMultihopQA, CLaRa’s SCP compressor at 4x compression outperforms strong compression baselines such as LLMLingua-2 and PISCO and, in the Oracle setting, even surpasses full-text BGE/Mistral pipelines in average F1.

- Apple has made available three practical CLaRa models along with the complete training pipeline, facilitating adoption and further research.

Editorial Perspective

CLaRa represents a significant advance in retrieval-augmented generation because it treats semantic document compression and joint optimization in a continuous latent space as core design principles rather than afterthoughts. By combining SCP-trained embedding compression with end-to-end training through a differentiable top-k estimator and a single language modeling loss, CLaRa matches or exceeds traditional text-based RAG systems while sharply reducing context length and simplifying the retrieval architecture. This unified continuous latent reasoning framework is a compelling alternative to conventional chunk-and-retrieve RAG, particularly for real-world question answering.