Decoding Ambiguity in Graph Database Queries Through Semantic Parsing

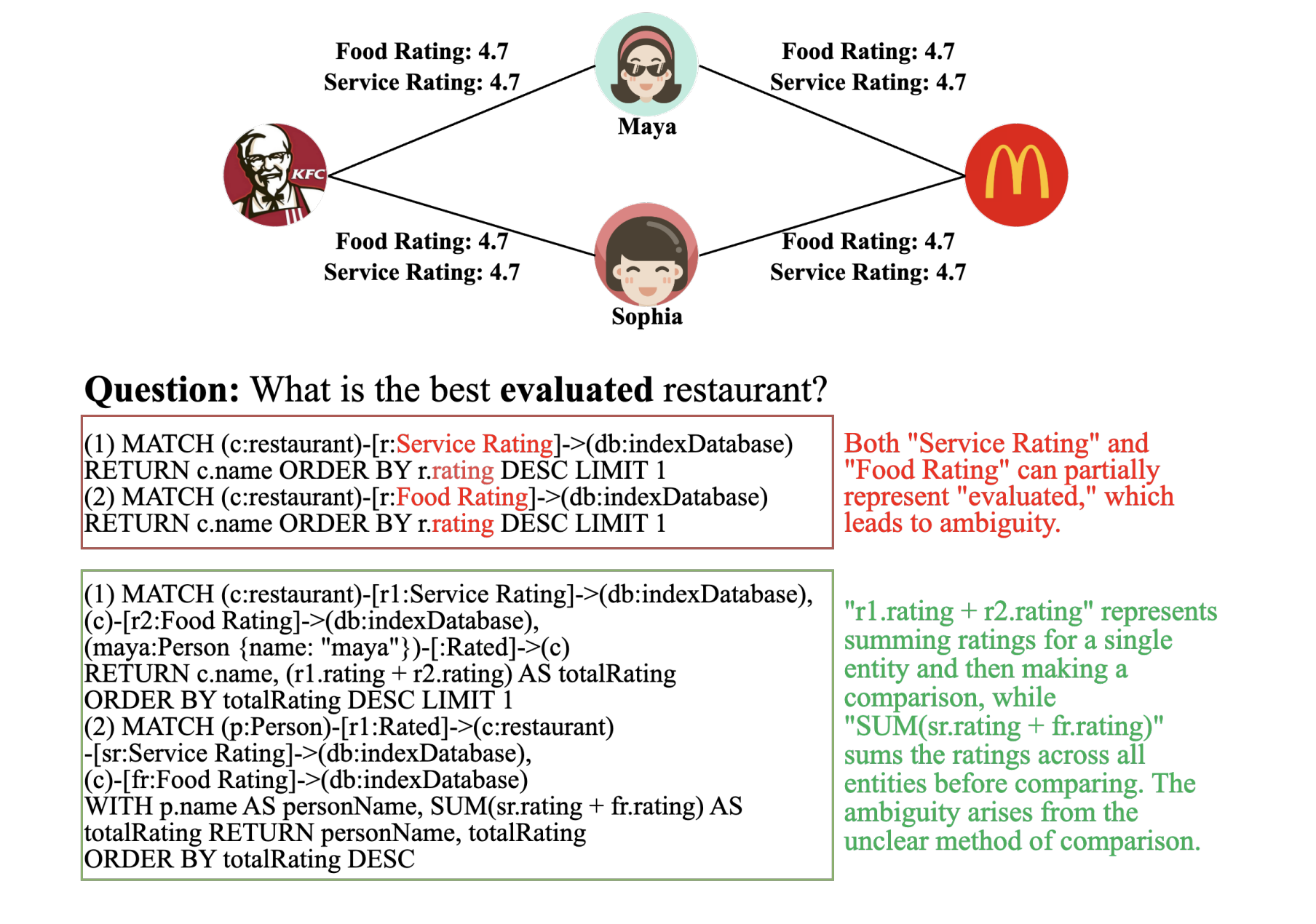

Semantic parsing translates natural language into formal query languages such as SQL or Cypher, letting users work with complex databases without writing queries by hand. Natural language, however, is inherently ambiguous and often admits several valid interpretations, whereas query languages require precise, unambiguous commands. Ambiguity in tabular data queries has been studied extensively, but graph databases introduce additional challenges because of their intricate, interconnected data structures. For instance, a query such as “top-rated restaurant” can mean the restaurant with the highest individual user rating or the one with the highest aggregated review score.
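To make the restaurant example concrete, the sketch below runs both readings over a tiny in-memory dataset and shows that they pick different winners. The restaurant names, the ratings, and the two Cypher strings (written against a hypothetical `(:User)-[:RATED {score}]->(:Restaurant)` schema) are illustrative assumptions and are not taken from the benchmark.

```python
from statistics import mean

# Hypothetical data: restaurant name -> individual user ratings (illustrative only).
ratings = {
    "Noodle Bar": [5, 2, 2],
    "Green Bistro": [4, 4, 4],
}

# Two Cypher readings of "top-rated restaurant", assuming a hypothetical
# (:User)-[:RATED {score}]->(:Restaurant) schema. Shown for comparison only;
# they are not executed here.
CYPHER_BY_SINGLE_RATING = (
    "MATCH (:User)-[r:RATED]->(x:Restaurant) "
    "RETURN x.name ORDER BY r.score DESC LIMIT 1"
)
CYPHER_BY_AVERAGE = (
    "MATCH (:User)-[r:RATED]->(x:Restaurant) "
    "WITH x, avg(r.score) AS avg_score "
    "RETURN x.name ORDER BY avg_score DESC LIMIT 1"
)


def top_by_single_rating(data):
    """Reading 1: the restaurant that received the highest individual rating."""
    return max(data, key=lambda name: max(data[name]))


def top_by_average(data):
    """Reading 2: the restaurant with the highest average rating."""
    return max(data, key=lambda name: mean(data[name]))


print(top_by_single_rating(ratings))  # Noodle Bar
print(top_by_average(ratings))        # Green Bistro
```

Both readings are defensible, yet they return different restaurants on the same data, which is why a parser that silently commits to one of them can miss the user’s intent.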

Challenges of Ambiguity in Graph Query Systems

Ambiguity is costly in interactive querying systems: a misinterpretation during semantic parsing can cause the system to deviate from the user’s original intent. The result can be excessive data retrieval and unnecessary computational overhead, wasting time and resources. In critical environments such as real-time analytics or decision-making platforms, these errors can degrade system performance and increase operational costs. Large Language Models (LLMs) have shown potential in tackling complex and ambiguous queries by leveraging linguistic context and interactive clarification mechanisms. Nevertheless, LLMs often exhibit self-preference bias: training on human feedback leads them to favor annotator perspectives, which may not match the actual user’s intent.

Innovative Approach to Ambiguity in Graph Query Generation

Researchers from institutions including Hong Kong Baptist University, the National University of Singapore, BIFOLD & TU Berlin, and Ant Group have introduced a framework that systematically addresses ambiguity in graph query generation. They categorize ambiguity into three types: Attribute Ambiguity, Relationship Ambiguity, and Attribute-Relationship Ambiguity. To benchmark model performance, the team developed AmbiGraph-Eval, a dataset of 560 ambiguous queries paired with corresponding graph database samples. The team used this benchmark to evaluate nine LLMs, assessing how well they disambiguate queries and identifying areas that need improvement. The findings indicate that reasoning capabilities offer some advantage, but understanding graph-specific ambiguity and mastering query syntax are crucial for success.

Design and Methodology of AmbiGraph-Eval

The AmbiGraph-Eval benchmark tests whether LLMs can produce syntactically correct and semantically meaningful graph queries, specifically in Cypher, from ambiguous natural language inputs. The dataset was built in two main stages: data collection and human validation. Ambiguous prompts were obtained in three ways: direct extraction from existing graph databases, transformation of unambiguous data into ambiguous forms using LLMs, and generation of entirely new queries by LLMs. For evaluation, the researchers tested four proprietary LLMs, including GPT-4 and Claude-3.5-Sonnet, alongside four open-source models such as Qwen-2.5 and LLaMA-3.1. These assessments were run through API calls and on hardware with four NVIDIA A40 GPUs.

Performance Insights on Ambiguity Resolution

Zero-shot evaluations on AmbiGraph-Eval reveal notable differences in how models handle ambiguity in graph data. For attribute ambiguity, the o1-mini model excels in same-entity (SE) scenarios, with GPT-4o and LLaMA-3.1 also performing strongly. GPT-4o stands out in cross-entity (CE) tasks, which require reasoning across multiple entities. For relationship ambiguity, LLaMA-3.1 leads, whereas GPT-4o struggles with SE tasks but performs well in CE contexts. The most complex category, attribute-relationship ambiguity, proves challenging for all models; LLaMA-3.1 performs best in SE tasks, while GPT-4o leads in CE tasks. Overall, models find multi-dimensional ambiguities significantly harder to resolve than isolated attribute or relationship ambiguities.
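The breakdown above is organized by ambiguity type and by SE or CE scope. A minimal sketch of how such a per-category tally might be computed follows. It assumes a simple correct/incorrect judgment per query, which is an assumption made here for illustration; the `Outcome` class, the `accuracy_by_category` helper, and the sample outcomes are all hypothetical and do not reproduce the benchmark’s actual metric or results.

```python
from collections import defaultdict
from dataclasses import dataclass

# Category labels taken from the article; the string spellings are our own.
AMBIGUITY_TYPES = ("attribute", "relationship", "attribute-relationship")
SCOPES = ("SE", "CE")  # same-entity, cross-entity


@dataclass(frozen=True)
class Outcome:
    """One model answer on one benchmark query (hypothetical record layout)."""
    ambiguity_type: str
    scope: str
    correct: bool


def accuracy_by_category(outcomes):
    """Return {(ambiguity_type, scope): fraction of correct answers}."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for outcome in outcomes:
        key = (outcome.ambiguity_type, outcome.scope)
        totals[key] += 1
        hits[key] += int(outcome.correct)
    return {key: hits[key] / totals[key] for key in totals}


# Made-up outcomes, for demonstration only (not benchmark results).
outcomes = [
    Outcome("attribute", "SE", True),
    Outcome("attribute", "SE", False),
    Outcome("attribute", "CE", True),
    Outcome("attribute-relationship", "CE", False),
]

for key, acc in sorted(accuracy_by_category(outcomes).items()):
    print(key, f"{acc:.2f}")
```

Keeping the two dimensions as a tuple key makes it easy to compare a model’s SE and CE behavior within one ambiguity type, which is the comparison the results above rely on.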

Conclusions and Future Directions

This research introduces AmbiGraph-Eval as a benchmark for assessing LLMs’ ability to resolve ambiguity in graph database queries. The evaluation of nine state-of-the-art models uncovers persistent difficulties in generating accurate Cypher queries, with advanced reasoning skills providing only marginal improvements. Key obstacles include detecting ambiguous user intent, producing syntactically valid queries, interpreting complex graph structures, and handling numerical aggregations. Ambiguity detection and syntax generation stand out as the critical bottlenecks. Moving forward, improving LLMs’ ambiguity resolution and syntax generation through techniques such as syntax-aware prompting and explicit ambiguity signaling will be essential for advancing natural language interfaces to graph databases.
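The article names syntax-aware prompting and explicit ambiguity signaling without describing how they are implemented. The sketch below shows one possible reading: a prompt builder that places the graph schema and a few Cypher syntax reminders ahead of the question, and optionally states the ambiguity outright. The `build_prompt` function, its parameters, and the prompt wording are all assumptions made for illustration, not the researchers’ method.

```python
def build_prompt(question, schema, syntax_hints, ambiguity_note=None):
    """Assemble a text prompt for a text-to-Cypher model (hypothetical layout).

    schema         -- plain-text description of node labels and relationships
    syntax_hints   -- short Cypher reminders (the "syntax-aware" part)
    ambiguity_note -- optional statement of the known ambiguity
                      (the "explicit ambiguity signaling" part)
    """
    parts = [
        "Translate the question into a single Cypher query.",
        "Graph schema:",
        schema,
        "Syntax reminders:",
        *[f"- {hint}" for hint in syntax_hints],
    ]
    if ambiguity_note:
        parts += ["Known ambiguity:", ambiguity_note]
    parts += ["Question:", question, "Cypher:"]
    return "\n".join(parts)


# Illustrative inputs; the schema and hints are assumptions, not benchmark data.
prompt = build_prompt(
    question="Which is the top-rated restaurant?",
    schema="(:User)-[:RATED {score}]->(:Restaurant {name})",
    syntax_hints=[
        "Use WITH before ordering by an aggregate such as avg().",
        "End the query with LIMIT 1 when a single result is expected.",
    ],
    ambiguity_note=(
        "'top-rated' may mean the highest single rating or the highest "
        "average rating; prefer the average unless told otherwise."
    ),
)
print(prompt)
```

Leaving `ambiguity_note` as `None` yields the same prompt without the ambiguity section, which gives a simple baseline for comparing prompts with and without explicit signaling.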